# Technical Document Extraction: MoDE Architecture and Performance

This document provides a detailed technical extraction of the provided image, which consists of a performance line chart and two architectural diagrams for neural network layers.

---

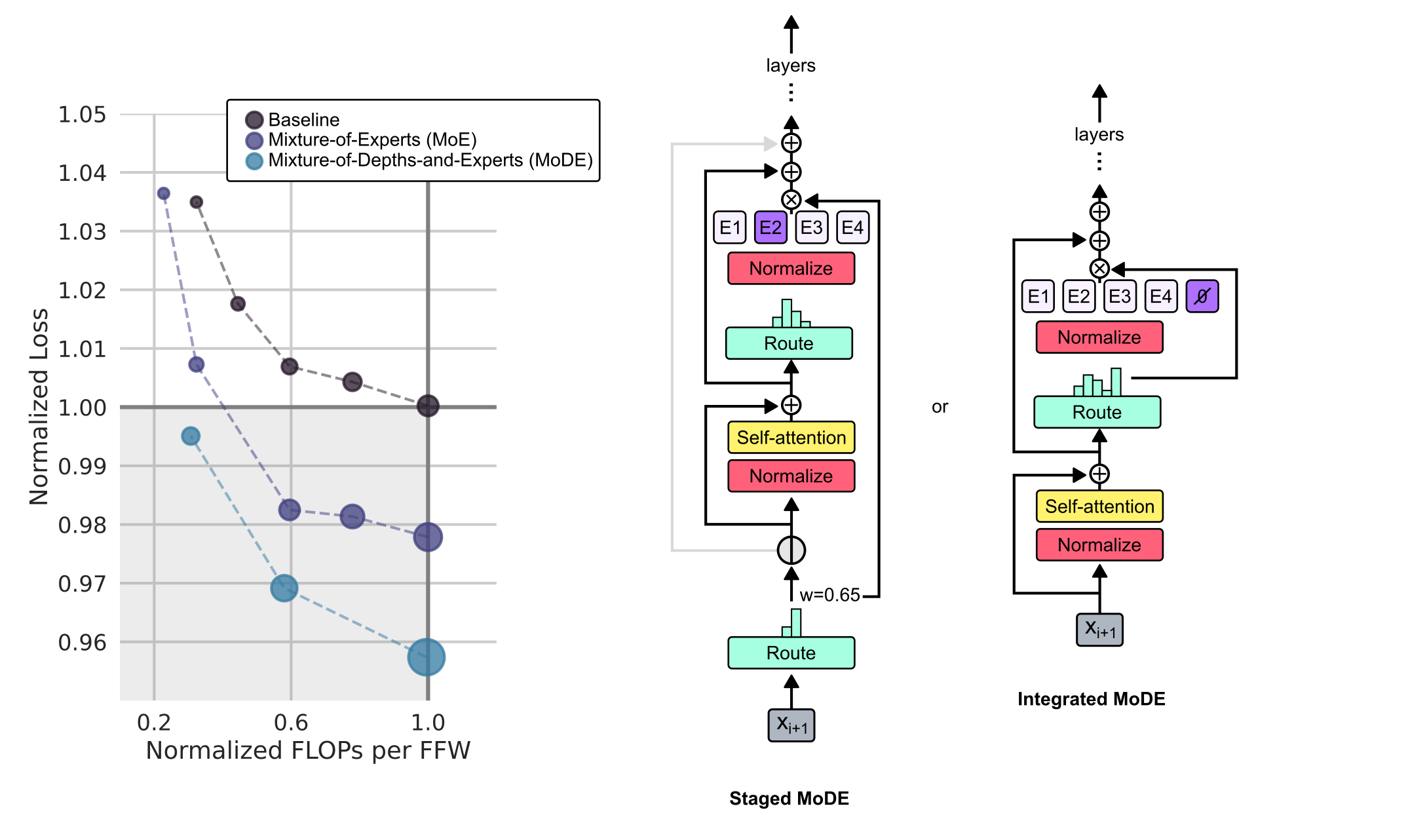

## 1. Performance Chart: Loss vs. FLOPs

### Chart Metadata

- **X-Axis Title:** Normalized FLOPs per FFW

- **X-Axis Markers:** 0.2, 0.6, 1.0

- **Y-Axis Title:** Normalized Loss

- **Y-Axis Markers:** 0.96, 0.97, 0.98, 0.99, 1.00, 1.01, 1.02, 1.03, 1.04, 1.05

- **Legend Location:** Top-center [x=0.3, y=0.1]

- **Legend Categories:**

    - **Baseline** (Dark Grey/Black circle)

    - **Mixture-of-Experts (MoE)** (Purple circle)

    - **Mixture-of-Depths-and-Experts (MoDE)** (Teal/Light Blue circle)

### Data Series Analysis

All three series exhibit a downward trend (negative slope), indicating that as Normalized FLOPs increase, Normalized Loss decreases. Larger marker sizes correlate with higher FLOP counts.

| Normalized FLOPs (approx.) | Baseline Loss | MoE Loss | MoDE Loss |
| :--- | :--- | :--- | :--- |
| ~0.25 | 1.035 | 1.037 | N/A |
| ~0.35 | 1.018 | 1.008 | 0.995 |
| ~0.60 | 1.007 | 0.983 | 0.969 |
| ~0.80 | 1.004 | 0.981 | N/A |
| 1.00 | 1.000 | 0.978 | 0.957 |

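**Key Observation:** At every FLOP level where it is plotted (~0.35, ~0.60, and 1.00), the MoDE (Teal) series achieves the lowest Normalized Loss, below both the Baseline and MoE. No MoDE point is shown at ~0.25 or ~0.80, so no comparison is possible there.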
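To make the size of the gap concrete, the short sketch below computes the relative loss reduction of MoDE against the other two series. It uses only the approximate values from the table above, so the results inherit the chart-reading error; the helper name `relative_reduction` is introduced here purely for illustration.

```python
# Approximate values read off the chart (None where a series has no point).
flops = [0.25, 0.35, 0.60, 0.80, 1.00]
baseline = [1.035, 1.018, 1.007, 1.004, 1.000]
moe = [1.037, 1.008, 0.983, 0.981, 0.978]
mode = [None, 0.995, 0.969, None, 0.957]


def relative_reduction(reference, candidate):
    """Percent by which `candidate` loss is lower than `reference` loss."""
    return 100.0 * (reference - candidate) / reference


# One row per FLOP level at which a MoDE point exists.
rows = []
for f, b, m, d in zip(flops, baseline, moe, mode):
    if d is None:
        continue  # MoDE is not plotted at this FLOP level
    rows.append((f, relative_reduction(b, d), relative_reduction(m, d)))

for f, vs_baseline, vs_moe in rows:
    print(f"FLOPs ~{f:.2f}: {vs_baseline:.1f}% below Baseline, {vs_moe:.1f}% below MoE")
```

Because both axes are normalized, these percentages describe relative differences only, not absolute loss values.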

---

## 2. Architectural Diagrams

The image presents two variations of the "Mixture-of-Depths-and-Experts" (MoDE) architecture.

### A. Staged MoDE (Center Diagram)

This architecture uses a sequential routing process.

1. **Input:** $X_{i+1}$ enters from the bottom.

2. **Initial Routing:** Passes through a **Route** block (teal) with a histogram visualization.

3. **Gating/Weighting:** A weight $w=0.65$ is applied. A bypass connection exists here.

4. **Attention Block:**

    * **Normalize** (Red)

    * **Self-attention** (Yellow)

    * Summation node ($\oplus$) with a residual connection.

5. **Expert Routing:**

    * **Route** block (teal) with histogram.

    * **Normalize** (Red)

    * **Experts:** Four expert blocks labeled **E1, E2, E3, E4**. E2 is highlighted in purple, indicating selection.

6. **Output:** The selected expert's output is scaled at a multiplication node ($\otimes$), then added back through two residual summation nodes ($\oplus$) before proceeding to the next **layers**.
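The staged flow above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the diagram's actual implementation: tokens are scalars, normalization is omitted, self-attention is replaced by a uniform average over the kept tokens, the first router keeps a fixed number of tokens (`capacity`), and the router weight $w$ is assumed to scale the expert output. The function name `staged_mode_layer` and all parameter names are hypothetical.

```python
def staged_mode_layer(tokens, depth_weights, expert_scores, experts, capacity):
    """Sketch of a staged MoDE layer on scalar 'tokens'.

    Stage 1 keeps the `capacity` tokens with the largest depth-router
    weight; every other token bypasses the layer unchanged.
    Stage 2 sends each kept token to its highest-scoring expert.
    """
    # Stage 1: top-k selection by depth-router weight.
    ranked = sorted(range(len(tokens)), key=lambda i: depth_weights[i], reverse=True)
    kept = set(ranked[:capacity])

    # Stand-in for self-attention (assumption): uniform average of kept tokens.
    context = sum(tokens[i] for i in kept) / len(kept) if kept else 0.0

    out = list(tokens)  # bypassed tokens are copied through unchanged
    for i in kept:
        h = tokens[i] + context  # attention output plus residual
        # Stage 2: pick the expert with the highest router score.
        e = max(range(len(experts)), key=lambda j: expert_scores[i][j])
        # Assumed gating: the depth-router weight scales the expert output.
        out[i] = h + depth_weights[i] * experts[e](h)
    return out


# Four toy experts standing in for E1..E4.
experts = [lambda h: 2.0 * h, lambda h: -h, lambda h: h + 1.0, lambda h: 0.5 * h]
tokens = [1.0, 2.0, 3.0]
depth_weights = [0.65, 0.10, 0.80]  # first-stage router weights
expert_scores = [
    [0.1, 0.7, 0.1, 0.1],    # token 0 prefers the second expert
    [0.25, 0.25, 0.25, 0.25],
    [0.6, 0.2, 0.1, 0.1],    # token 2 prefers the first expert
]
result = staged_mode_layer(tokens, depth_weights, expert_scores, experts, capacity=2)
print(result)
```

With `capacity=2`, the token with weight 0.10 is not selected by the first router, so it skips both the attention stand-in and the experts and is returned unchanged.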

### B. Integrated MoDE (Right Diagram)

This architecture integrates the "depth" decision (skipping) directly into the expert routing.

1. **Input:** $X_{i+1}$ enters from the bottom.

2. **Attention Block:**

    * **Normalize** (Red)

    * **Self-attention** (Yellow)

    * Summation node ($\oplus$) with a residual connection.

3. **Integrated Routing:**

    * **Route** block (teal) with histogram.

    * **Normalize** (Red)

    * **Experts + Null:** Blocks labeled **E1, E2, E3, E4** and a **$\emptyset$ (Null/Empty set)** block. The $\emptyset$ block is highlighted in purple, suggesting a "skip" or "no-op" expert choice.

4. **Logic Flow:** A bypass connection originates from before the Route block and connects to a multiplication node ($\otimes$) after the experts.

5. **Output:** Summation nodes ($\oplus$) lead to the next **layers**.
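The integrated variant can be sketched as a single routing decision over the experts plus one extra no-op slot. The code below covers only the routed feedforward part that follows the attention block, and it rests on assumptions not confirmed by the diagram: tokens are scalars, normalization is omitted, each token goes to its single highest-scoring choice, and the router score of the chosen expert scales that expert's output. The name `integrated_mode_ffw` is hypothetical.

```python
def integrated_mode_ffw(hidden, router_scores, experts):
    """Sketch of integrated MoDE routing on scalar 'tokens'.

    Each row of `router_scores` has len(experts) + 1 entries; the last
    entry is the score of the no-op (null) choice.
    """
    noop = len(experts)  # index of the null "expert"
    out = []
    for h, scores in zip(hidden, router_scores):
        choice = max(range(len(scores)), key=lambda j: scores[j])
        if choice == noop:
            # Skip: the token stays on the residual stream unchanged.
            out.append(h)
        else:
            # Assumed gating: the router score scales the expert output.
            out.append(h + scores[choice] * experts[choice](h))
    return out


# Four toy experts standing in for E1..E4.
experts = [lambda h: 2.0 * h, lambda h: -h, lambda h: h + 1.0, lambda h: 0.5 * h]
hidden = [1.0, 2.0]
router_scores = [
    [0.1, 0.1, 0.1, 0.1, 0.6],  # token 0 picks the no-op slot
    [0.5, 0.1, 0.1, 0.1, 0.2],  # token 1 picks the first expert
]
out = integrated_mode_ffw(hidden, router_scores, experts)
print(out)
```

Compared with the staged sketch, there is no separate first-stage router here: choosing the null slot is what skips the feedforward computation for a token.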

---

## 3. Component Summary Table

| Component | Color | Function |
| :--- | :--- | :--- |
| **Route** | Teal | Determines which expert or path the data follows. |
| **Normalize** | Red | Layer normalization or similar scaling. |
| **Self-attention** | Yellow | Standard transformer attention mechanism. |
| **E1 - E4** | White/Grey | Individual Experts in the MoE layer. |
| **$\emptyset$** | Purple | Represents the "Depth" skip option in Integrated MoDE. |
| **$\oplus$ / $\otimes$** | White Circle | Addition (Residual) and Multiplication (Gating) operations. |