
## Diagram: Architectures of Language Models and Agents

### Overview

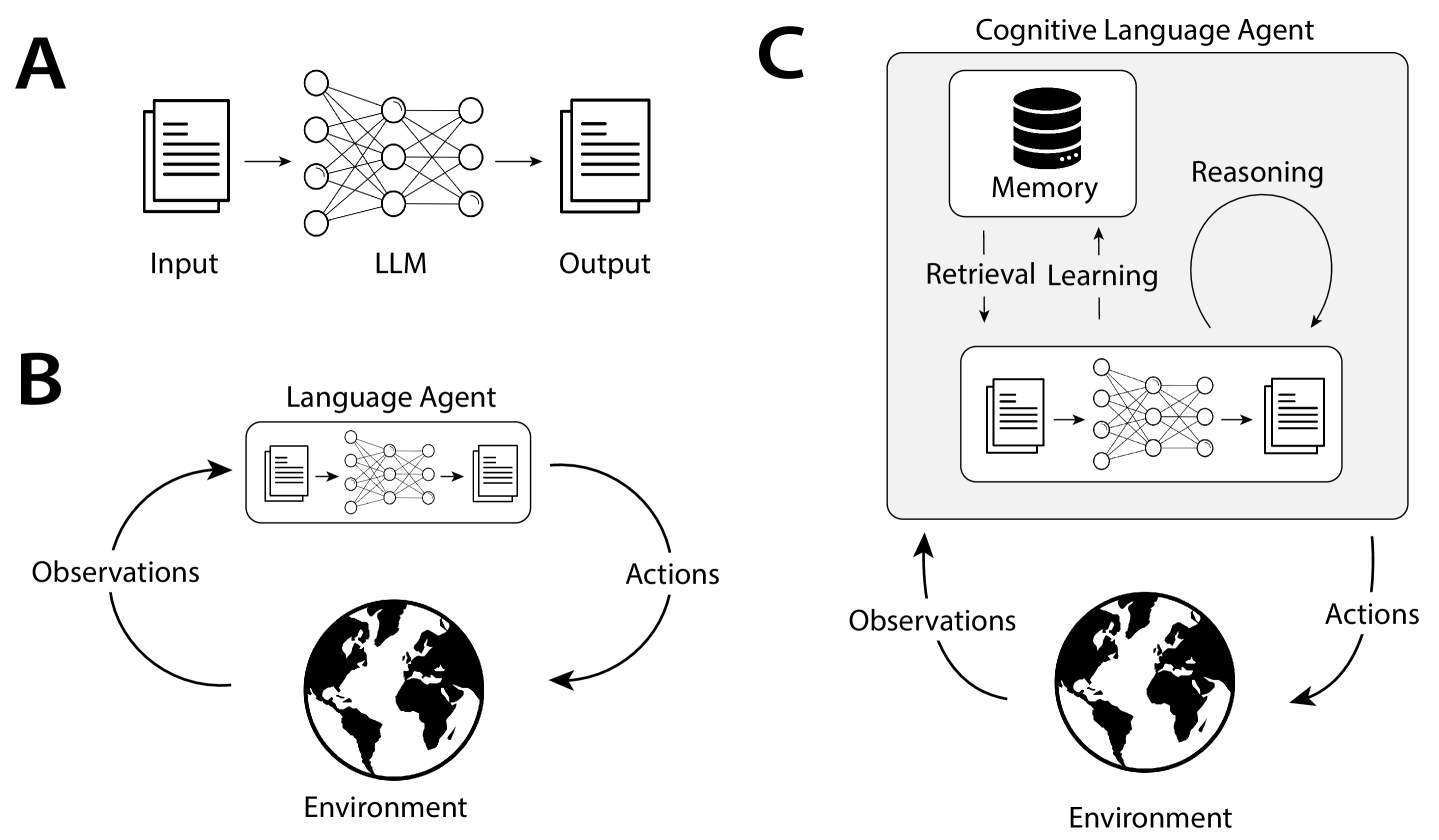

The image is a technical diagram composed of three distinct panels labeled **A**, **B**, and **C**, illustrating a progression in the architecture and capability of AI systems based on Large Language Models (LLMs). The diagrams are presented in a clean, black-and-white schematic style on a light gray background.

### Components/Axes

The diagram is segmented into three labeled regions:

* **A (Top-Left):** A linear pipeline.

* **B (Bottom-Left):** A cyclic, interactive system.

* **C (Right):** A complex, cognitive agent architecture.

**Common Visual Elements:**

* **Document Icon:** Represents text input/output.

* **Neural Network Icon:** Represents the LLM processing core.

* **Globe Icon:** Represents the external "Environment".

* **Arrows:** Indicate the flow of data or control.

### Detailed Analysis

#### **Panel A: Basic LLM Pipeline**

* **Components & Flow:** This panel shows a simple, feed-forward process.

1. **Input:** Represented by a document icon on the left.

2. **LLM:** Represented by a neural network icon in the center. An arrow points from "Input" to "LLM".

3. **Output:** Represented by a document icon on the right. An arrow points from "LLM" to "Output".

* **Labels:** The text labels "Input", "LLM", and "Output" are placed directly below their respective icons.
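As a minimal sketch of the flow in Panel A, the pipeline can be modeled as a single function call with no state kept between calls. The names `LLM`, `echo_llm`, and `run_pipeline` are hypothetical, and the toy "model" stands in for a real LLM; none of this comes from the diagram itself.

```python
from typing import Callable

# Hypothetical stand-in type: any text-in, text-out callable plays the LLM.
LLM = Callable[[str], str]


def echo_llm(prompt: str) -> str:
    """Toy 'model' that upper-cases its prompt (illustration only)."""
    return prompt.upper()


def run_pipeline(llm: LLM, text_in: str) -> str:
    """Panel A: Input -> LLM -> Output, with nothing remembered afterwards."""
    return llm(text_in)


print(run_pipeline(echo_llm, "hello"))  # HELLO
```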

#### **Panel B: Language Agent**

* **Components & Flow:** This panel introduces a closed-loop interaction with an environment.

1. **Language Agent:** A rounded rectangle containing the same "Input -> LLM -> Output" pipeline from Panel A.

2. **Environment:** Represented by a globe icon at the bottom center.

3. **Observations:** A label on a curved arrow flowing from the "Environment" up to the "Language Agent".

4. **Actions:** A label on a curved arrow flowing from the "Language Agent" down to the "Environment".

* **Spatial Layout:** The "Language Agent" box is centered above the "Environment" globe. The "Observations" arrow curves up the left side, and the "Actions" arrow curves down the right side, creating a clockwise cycle.
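The observation/action cycle in Panel B might be sketched as a loop around the same text-in, text-out call. Everything here is an illustrative assumption: `CounterEnv` is a toy environment, `rule_llm` is a hand-written stub in place of a real model, and the `"increment"` and `"stop"` action strings are invented for the example.

```python
from typing import Callable

LLM = Callable[[str], str]


class CounterEnv:
    """Toy environment (hypothetical): a counter the agent can increment."""

    def __init__(self, target: int) -> None:
        self.value = 0
        self.target = target

    def observe(self) -> str:
        # Observations flow from the environment to the agent as text.
        return f"value={self.value} target={self.target}"

    def step(self, action: str) -> None:
        # Actions flow from the agent back to the environment.
        if action == "increment":
            self.value += 1


def rule_llm(prompt: str) -> str:
    """Stub 'LLM': parses the observation text and returns an action string."""
    fields = dict(part.split("=") for part in prompt.split())
    return "increment" if int(fields["value"]) < int(fields["target"]) else "stop"


def run_agent(llm: LLM, env: CounterEnv, max_steps: int = 10) -> list[str]:
    """Panel B: repeat observe -> LLM -> act until the LLM says stop."""
    actions = []
    for _ in range(max_steps):
        observation = env.observe()  # Environment -> agent
        action = llm(observation)    # Input -> LLM -> Output
        actions.append(action)
        if action == "stop":
            break
        env.step(action)             # Agent -> environment
    return actions


env = CounterEnv(target=3)
print(run_agent(rule_llm, env))
# ['increment', 'increment', 'increment', 'stop']
```

Note that the agent keeps no state of its own in this sketch: each action depends only on the latest observation.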

#### **Panel C: Cognitive Language Agent**

* **Components & Flow:** This is the most complex panel, detailing internal cognitive processes.

1. **Cognitive Language Agent:** A large rounded rectangle encompassing all internal components.

2. **Core LLM Pipeline:** Inside the agent, at the bottom, is the familiar "Input -> LLM -> Output" pipeline.

3. **Memory:** Represented by a database/cylinder icon in the top-left of the agent box.

4. **Reasoning:** Represented by a circular, self-referential arrow in the top-right of the agent box.

5. **Retrieval Learning:** A bidirectional process indicated by two vertical arrows between "Memory" and the core LLM pipeline. The label "Retrieval Learning" is placed between these arrows.

6. **Environment Interaction:** Similar to Panel B, the agent interacts with an external "Environment" (globe icon) via "Observations" (incoming arrow) and "Actions" (outgoing arrow).

* **Spatial Layout:** The "Memory" and "Reasoning" modules are positioned above the core LLM pipeline within the agent's boundary. The "Environment" is outside and below the agent box.
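One way to picture the internal components of Panel C is the sketch below. It reads the two arrows between "Memory" and the pipeline as retrieval (memory to pipeline) and learning (pipeline to memory), which is an interpretation of the diagram rather than something it states. The `Memory` class, the word-overlap retrieval rule, `stub_llm`, and `cognitive_step` are all hypothetical.

```python
from typing import Callable

LLM = Callable[[str], str]


class Memory:
    """Toy memory store (hypothetical): a flat list of text notes."""

    def __init__(self) -> None:
        self.notes: list[str] = []

    def retrieve(self, query: str) -> list[str]:
        # Retrieval: return notes that share at least one word with the query.
        words = set(query.lower().split())
        return [n for n in self.notes if words & set(n.lower().split())]

    def learn(self, note: str) -> None:
        # Learning: write new information back into memory.
        self.notes.append(note)


def stub_llm(prompt: str) -> str:
    """Stub 'LLM' that wraps its input so each reasoning pass is visible."""
    return f"<{prompt}>"


def cognitive_step(llm: LLM, memory: Memory, observation: str,
                   reasoning_rounds: int = 2) -> str:
    """Panel C: retrieve, reason in an internal loop, then learn."""
    recalled = memory.retrieve(observation)
    thought = f"obs: {observation} | recalled: {recalled}"
    for _ in range(reasoning_rounds):
        thought = llm(thought)  # Reasoning: output is fed back in as input
    memory.learn(f"{observation} -> {thought}")
    return thought  # would be emitted to the environment as the action


memory = Memory()
print(cognitive_step(stub_llm, memory, "door locked"))
# <<obs: door locked | recalled: []>>
print(len(memory.retrieve("door open")))  # 1
```

Unlike the Panel B loop, a second call with a related observation now sees what was stored during the first call.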

### Key Observations

1. **Progressive Complexity:** The panels progress from a stateless, single-pass text processor (A), to an agent that exchanges observations and actions with an environment (B), to an agent that also has internal memory, reasoning, and learning (C).

2. **Encapsulation:** Each architecture builds on the previous one. The core LLM pipeline from A sits inside the agent box in B. Panel C keeps both that pipeline and B's observation/action loop, and adds memory and reasoning inside the agent boundary.

3. **Introduction of State:** Panel C introduces "Memory," a component absent in A and B, allowing the system to retain and recall information across interactions.

4. **Internal vs. External Loop:** Panel B shows only an external loop with the environment. Panel C adds an internal loop ("Reasoning") and a memory-retrieval loop, suggesting deliberation and learning separate from immediate environmental interaction.

### Interpretation

This diagram visually argues for a conceptual progression in AI system design:

* **A represents the foundational technology:** A pure LLM as a text-in, text-out function. It is stateless: each output depends only on the prompt it is given.

* **B represents the first step toward agency:** By placing the LLM in a loop with an environment, it becomes an *agent* that can perceive (via observations) and affect the world (via actions). However, the panel shows no memory or reasoning component, so the agent can only act on what is in its immediate context.

* **C represents a model of cognitive agency:** This architecture adds components modeled on higher-order cognitive functions. The **Memory** component provides continuity and context over time. The **Reasoning** loop suggests the capacity for internal deliberation, planning, or self-correction before acting. The bidirectional **Retrieval Learning** arrows suggest that the agent both reads stored information from memory (retrieval) and writes new information back to it (learning).

The overall message is that moving from a bare LLM to a more capable agent requires combining **interactive perception-action loops** with **internal cognitive architectures** for memory and reasoning. The diagram can be read as a high-level blueprint for more autonomous, context-aware, and learning-capable AI systems.