## Diagram: Cognitive Language Agent System Architecture

### Overview

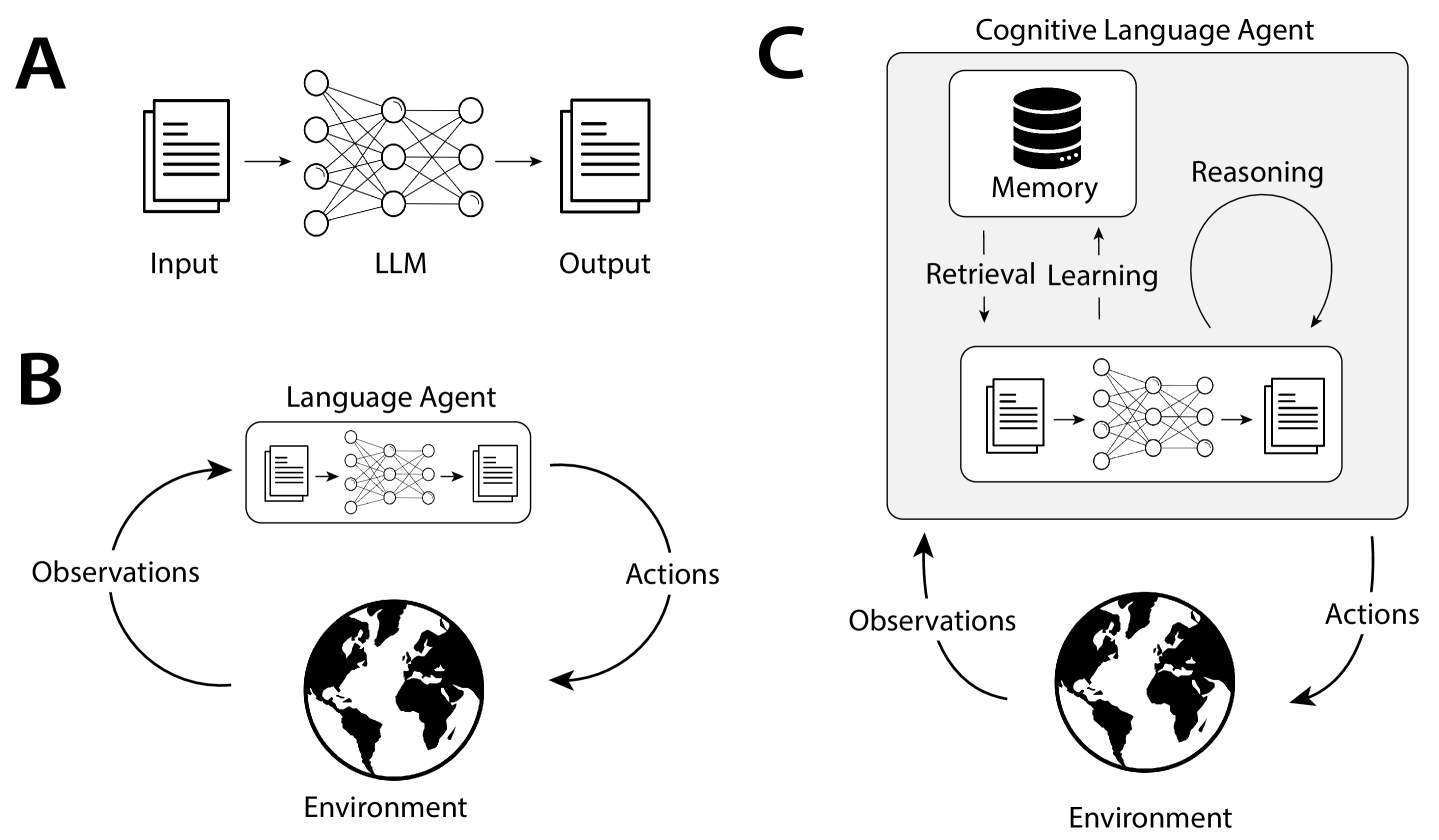

The image shows three progressively richer architectures, labeled A, B, and C: a plain language model that maps input text to output text (A), a language agent that interacts with an environment (B), and a cognitive language agent that adds memory and reasoning (C).

### Components

#### Section A: Basic Language Model (LLM)

- **Input**: Text document icon (left)

- **LLM**: Neural network diagram (center)

- **Output**: Text document icon (right)

- **Flow**: Input → LLM → Output
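
The flow in Section A amounts to a single stateless call. The sketch below is a minimal illustration, assuming a hypothetical `toy_llm` stand-in that only upper-cases its input; it is not a real model, and the names are not taken from the diagram.

```python
from typing import Callable

# Assumption: any function from text to text can play the role of the LLM.
LLM = Callable[[str], str]


def toy_llm(prompt: str) -> str:
    """Hypothetical placeholder 'model': returns the prompt in upper case."""
    return prompt.upper()


def run_pipeline(llm: LLM, text: str) -> str:
    """Section A: Input -> LLM -> Output, with no state and no feedback."""
    return llm(text)


output = run_pipeline(toy_llm, "hello")
```

Nothing persists between calls here, which is the property that Sections B and C go on to change.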

#### Section B: Language Agent with Environment Interaction

- **Language Agent**: Neural network diagram (center)

- **Environment**: Globe icon (bottom)

- **Observations**: Curved arrow from Environment to Language Agent

- **Actions**: Curved arrow from Language Agent to Environment

- **Flow**: Observations → Language Agent → Actions → Environment → Observations
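
The closed loop in Section B can be sketched as a few lines of control flow. This is an illustrative toy under stated assumptions: `CounterEnv`, `toy_agent`, and the action names `increment` and `stop` are hypothetical, and a rule-based function stands in for the LLM-backed agent.

```python
from typing import Callable, List, Tuple


class CounterEnv:
    """Hypothetical toy environment: an integer state the agent can increment."""

    def __init__(self, target: int) -> None:
        self.state = 0
        self.target = target

    def observe(self) -> str:
        """Environment -> agent: describe the current state as text."""
        return f"state={self.state} target={self.target}"

    def step(self, action: str) -> None:
        """Agent -> environment: apply the chosen action."""
        if action == "increment":
            self.state += 1


def toy_agent(observation: str) -> str:
    """Stand-in for the language agent: read the observation, pick an action."""
    fields = dict(part.split("=") for part in observation.split())
    return "increment" if int(fields["state"]) < int(fields["target"]) else "stop"


def run_loop(
    agent: Callable[[str], str], env: CounterEnv, max_steps: int = 10
) -> List[Tuple[str, str]]:
    """Observations -> agent -> actions -> environment, repeated until 'stop'."""
    trace = []
    for _ in range(max_steps):
        observation = env.observe()
        action = agent(observation)
        trace.append((observation, action))
        if action == "stop":
            break
        env.step(action)  # the action changes what is observed next
    return trace
```

With `CounterEnv(target=3)`, the loop records three `increment` steps followed by `stop`; each action changes the next observation, which is the feedback the curved arrows depict.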

#### Section C: Cognitive Language Agent

- **Memory**: Stacked disk icon (top-left)

- **Retrieval Learning**: Arrow from Memory to Language Agent

- **Reasoning**: Circular arrow connecting Memory and Language Agent

- **Language Agent**: Neural network diagram (center)

- **Environment**: Globe icon (bottom)

- **Observations/Actions**: Arrows connecting Language Agent to Environment

- **Flow**: Memory → Retrieval Learning → Language Agent → Actions → Environment → Observations → Language Agent → Reasoning → Memory
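
One way to read Section C in code is a step that retrieves from memory, reasons over what was recalled, acts, and writes the outcome back. The sketch below is a toy under loud assumptions: `Memory`, `reason`, `environment`, the word-overlap retrieval, and the locked-door scenario are all hypothetical, and writing outcomes back to memory is only one possible reading of the "Learning" part of the diagram's label.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Memory:
    """Hypothetical memory store: a plain list of text records."""

    records: List[str] = field(default_factory=list)

    def retrieve(self, query: str, k: int = 2) -> List[str]:
        """Return up to k records that share the most words with the query."""
        words = set(query.split())
        scored = [(len(words & set(r.split())), r) for r in self.records]
        scored = [pair for pair in scored if pair[0] > 0]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [record for _, record in scored[:k]]

    def write(self, record: str) -> None:
        self.records.append(record)


def reason(recalled: List[str]) -> str:
    """Stand-in for an LLM reasoning step: skip actions recalled as failed."""
    failed = {r.split()[-1] for r in recalled if " failed " in r}
    for candidate in ("open_door", "use_key"):
        if candidate not in failed:
            return candidate
    return "wait"


def environment(action: str) -> str:
    """Hypothetical environment: only 'use_key' opens the door."""
    return "success" if action == "use_key" else "failed"


def cognitive_step(memory: Memory, observation: str) -> str:
    recalled = memory.retrieve(observation)  # memory -> agent (retrieval)
    action = reason(recalled)                # internal reasoning
    outcome = environment(action)            # action -> environment
    memory.write(f"{observation} {outcome} {action}")  # experience -> memory
    return action
```

Calling `cognitive_step` twice with the observation `"door locked"` on an initially empty `Memory` picks `open_door` first and `use_key` second, because the failed attempt is recalled on the second pass.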

### Detailed Analysis

1. **Section A**:

   - Represents a standard language model pipeline.

   - Input text is processed by the LLM to generate output text.

   - No feedback loops or environmental interaction.

2. **Section B**:

   - Introduces a **Language Agent** that interacts with an **Environment**.

   - The agent receives **Observations** (e.g., sensory data) and produces **Actions** (e.g., responses).

   - The Environment provides new Observations based on the Agent’s Actions, creating a closed-loop system.

3. **Section C**:

   - Expands the Language Agent into a **Cognitive Language Agent** with **Memory** and **Reasoning**.

   - **Memory** stores historical data, which is retrieved via **Retrieval Learning** to inform the Language Agent.

   - **Reasoning** creates a feedback loop between Memory and the Language Agent, enabling adaptive decision-making.

   - The Environment remains integral, but the agent now draws on Memory when interpreting Observations and choosing Actions.

### Key Observations

- **Hierarchical Complexity**: Section A → B → C shows increasing sophistication: from static LLM processing (A) to dynamic environmental interaction (B) to memory-augmented cognition (C).

- **Feedback Mechanisms**: Sections B and C emphasize closed-loop systems, where outputs (Actions) influence future inputs (Observations).

- **Memory Integration**: Section C introduces **Retrieval Learning** and **Reasoning**, enabling the agent to leverage past experiences for improved performance.

### Interpretation

This diagram illustrates the evolution of language agents from basic text processors (A) to autonomous, memory-aware systems (C). Key insights:

1. **Environmental Interaction**: Sections B and C highlight the importance of feedback from the environment for adaptive behavior.

2. **Memory as a Cognitive Enhancer**: Section C’s Memory component suggests that integrating historical data improves decision-making, akin to human episodic memory.

3. **Reasoning as a Feedback Loop**: The circular arrow between Memory and the Language Agent implies that reasoning is not a one-time process but an ongoing refinement mechanism.

The system’s design emphasizes **adaptability** (via environmental interaction) and **contextual awareness** (via memory and reasoning), positioning it as a step toward generalizable AI systems.