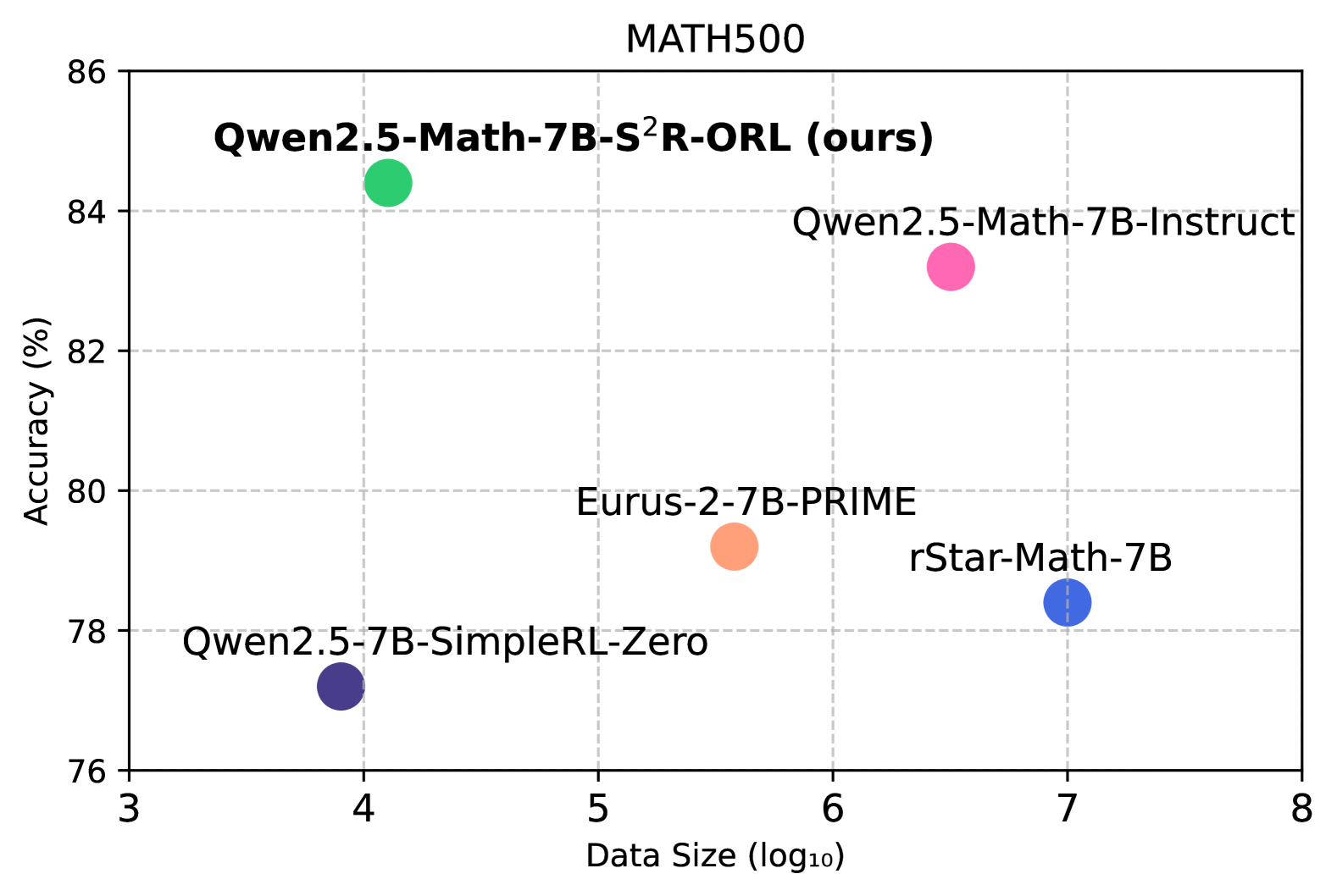

## Scatter Plot: Model Performance vs. Data Size on MATH500

### Overview

This scatter plot shows the accuracy (%) of five language models on the MATH500 dataset against their data size, plotted as log10 of the size. Each point represents one model; its horizontal position gives the data size and its vertical position gives the accuracy.

### Components/Axes

* **Title:** MATH500

* **X-axis:**

    * **Title:** Data Size (log10)

    * **Scale:** The axis plots log10 of the data size on evenly spaced ticks from 3 to 8, corresponding to data sizes from 10^3 to 10^8.

    * **Markers:** 3, 4, 5, 6, 7, 8.

* **Y-axis:**

    * **Title:** Accuracy (%)

    * **Scale:** Linear, ranging from 76 to 86.

    * **Markers:** 76, 78, 80, 82, 84, 86.

* **Data Points:** Five circles in distinct colors, each labeled with the name of a language model.

    * **Green Circle:** Labeled "Qwen2.5-Math-7B-S²R-ORL (ours)"

    * **Pink Circle:** Labeled "Qwen2.5-Math-7B-Instruct"

    * **Orange Circle:** Labeled "Eurus-2-7B-PRIME"

    * **Blue Circle:** Labeled "rStar-Math-7B"

    * **Dark Purple Circle:** Labeled "Qwen2.5-7B-SimpleRL-Zero"

### Detailed Analysis

The plot displays the following data points:

1. **Qwen2.5-Math-7B-S²R-ORL (ours)** (Green Circle):

    * **Trend:** This point sits in the upper-left region of the plot, indicating the highest accuracy at one of the smallest data sizes.

    * **Approximate Coordinates:** Data Size (log10) ≈ 3.9, Accuracy (%) ≈ 84.5

2. **Qwen2.5-Math-7B-Instruct** (Pink Circle):

    * **Trend:** This point sits in the upper-right region of the plot, showing high accuracy at a much larger data size.

    * **Approximate Coordinates:** Data Size (log10) ≈ 6.5, Accuracy (%) ≈ 83.5

3. **Eurus-2-7B-PRIME** (Orange Circle):

    * **Trend:** This point sits in the lower-middle region of the plot, indicating moderate accuracy at a medium data size.

    * **Approximate Coordinates:** Data Size (log10) ≈ 5.5, Accuracy (%) ≈ 79.5

4. **rStar-Math-7B** (Blue Circle):

    * **Trend:** This point sits in the lower-right region, indicating low accuracy despite the largest data size of the five models.

    * **Approximate Coordinates:** Data Size (log10) ≈ 7.0, Accuracy (%) ≈ 78.2

5. **Qwen2.5-7B-SimpleRL-Zero** (Dark Purple Circle):

    * **Trend:** This point sits at the bottom-left, showing the lowest accuracy among the plotted models at a small data size.

    * **Approximate Coordinates:** Data Size (log10) ≈ 4.0, Accuracy (%) ≈ 77.0
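Because the x-axis is in log10 units, the gap between points is easy to underestimate. The following is a minimal Python sketch that converts the approximate readings above back to raw data sizes. The names `POINTS` and `raw_data_size` are hypothetical, the values are visual estimates from the plot rather than exact figures, and the unit of "data size" is not stated in the figure.

```python
# Approximate readings from the plot (visual estimates, not exact values).
# Each entry maps a model label to (log10 data size, accuracy in %).
POINTS = {
    "Qwen2.5-Math-7B-S²R-ORL (ours)": (3.9, 84.5),
    "Qwen2.5-Math-7B-Instruct": (6.5, 83.5),
    "Eurus-2-7B-PRIME": (5.5, 79.5),
    "rStar-Math-7B": (7.0, 78.2),
    "Qwen2.5-7B-SimpleRL-Zero": (4.0, 77.0),
}


def raw_data_size(log10_size: float) -> float:
    """Undo the log10 transform used on the x-axis."""
    return 10 ** log10_size


for name, (log_size, accuracy) in POINTS.items():
    size = raw_data_size(log_size)
    print(f"{name}: data size ~{size:,.0f} (log10 = {log_size}), accuracy ~{accuracy}%")
```

One unit on the x-axis is a factor of ten in data size, so a point at 7.0 uses roughly a thousand times more data than a point at 4.0.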

### Key Observations

* The model "Qwen2.5-Math-7B-S²R-ORL (ours)" achieves the highest accuracy (approximately 84.5%) among the plotted models, despite having one of the smallest data sizes (log10 ≈ 3.9).

* "Qwen2.5-Math-7B-Instruct" also reaches high accuracy (approximately 83.5%), but at a much larger data size (log10 ≈ 6.5).

* "Qwen2.5-7B-SimpleRL-Zero" has the lowest accuracy (approximately 77.0%) at a small data size (log10 ≈ 4.0).

* "rStar-Math-7B" has the largest data size (log10 ≈ 7.0) but a lower accuracy (approximately 78.2%) than "Eurus-2-7B-PRIME" (approximately 79.5% at log10 ≈ 5.5).
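These comparisons can be checked mechanically. The sketch below, in Python, finds the highest- and lowest-accuracy models and computes how many times more data one model uses than another. The names `points` and `size_ratio` are hypothetical, and the coordinates are the approximate readings listed in the analysis, so the results are only as precise as those estimates.

```python
# Approximate (log10 data size, accuracy %) readings from the plot.
points = {
    "Qwen2.5-Math-7B-S²R-ORL (ours)": (3.9, 84.5),
    "Qwen2.5-Math-7B-Instruct": (6.5, 83.5),
    "Eurus-2-7B-PRIME": (5.5, 79.5),
    "rStar-Math-7B": (7.0, 78.2),
    "Qwen2.5-7B-SimpleRL-Zero": (4.0, 77.0),
}

# Models with the highest and lowest accuracy.
best = max(points, key=lambda model: points[model][1])
worst = min(points, key=lambda model: points[model][1])


def size_ratio(model_a: str, model_b: str) -> float:
    """How many times more data model_a uses than model_b.

    A difference of d on the log10 axis is a factor of 10**d in raw size.
    """
    return 10 ** (points[model_a][0] - points[model_b][0])


ratio = size_ratio("Qwen2.5-Math-7B-Instruct", best)
print(f"Highest accuracy: {best}")
print(f"Lowest accuracy: {worst}")
print(f"Qwen2.5-Math-7B-Instruct uses ~{ratio:.0f}x the data of {best}")
```

A gap of 2.6 on the log10 axis, as between the two highest-accuracy models, corresponds to a factor of several hundred in raw data size.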

### Interpretation

This scatter plot suggests that a larger data size does not by itself translate into higher accuracy on MATH500, and that other factors, such as model architecture and training method, play a crucial role. The "Qwen2.5-Math-7B-S²R-ORL (ours)" model stands out as highly data-efficient, achieving the top accuracy with a comparatively small data footprint. This could imply a more effective learning process or better generalization. Conversely, "rStar-Math-7B" shows that increasing data size alone does not guarantee superior performance: it has a larger data size but lower accuracy than "Eurus-2-7B-PRIME". The "Qwen2.5" family of models shows varying performance depending on the specific training (e.g., Instruct vs. SimpleRL-Zero vs. S²R-ORL), highlighting the impact of fine-tuning and reinforcement learning techniques. The plot allows a quick comparison of the trade-off between performance and data requirements on the MATH500 benchmark.
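One rough way to quantify the weak relationship between data size and accuracy is a Pearson correlation over the plotted points. The Python sketch below computes it by hand from the approximate coordinates. The helper `pearson_r` is hypothetical, the inputs are visual estimates, and with only five points the result is illustrative rather than statistically meaningful.

```python
import math

# Approximate readings from the plot, in the order the models are listed.
log10_sizes = [3.9, 6.5, 5.5, 7.0, 4.0]
accuracies = [84.5, 83.5, 79.5, 78.2, 77.0]


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of products of deviations, and sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


r = pearson_r(log10_sizes, accuracies)
print(f"Pearson r between log10 data size and accuracy: {r:.3f}")
```

With these five estimated points the coefficient comes out close to zero, which is consistent with the reading that data size alone explains little of the accuracy difference in this plot.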