## Grouped Bar Chart: Accuracy Comparison of Human and AI Models on Attribute Tasks

### Overview

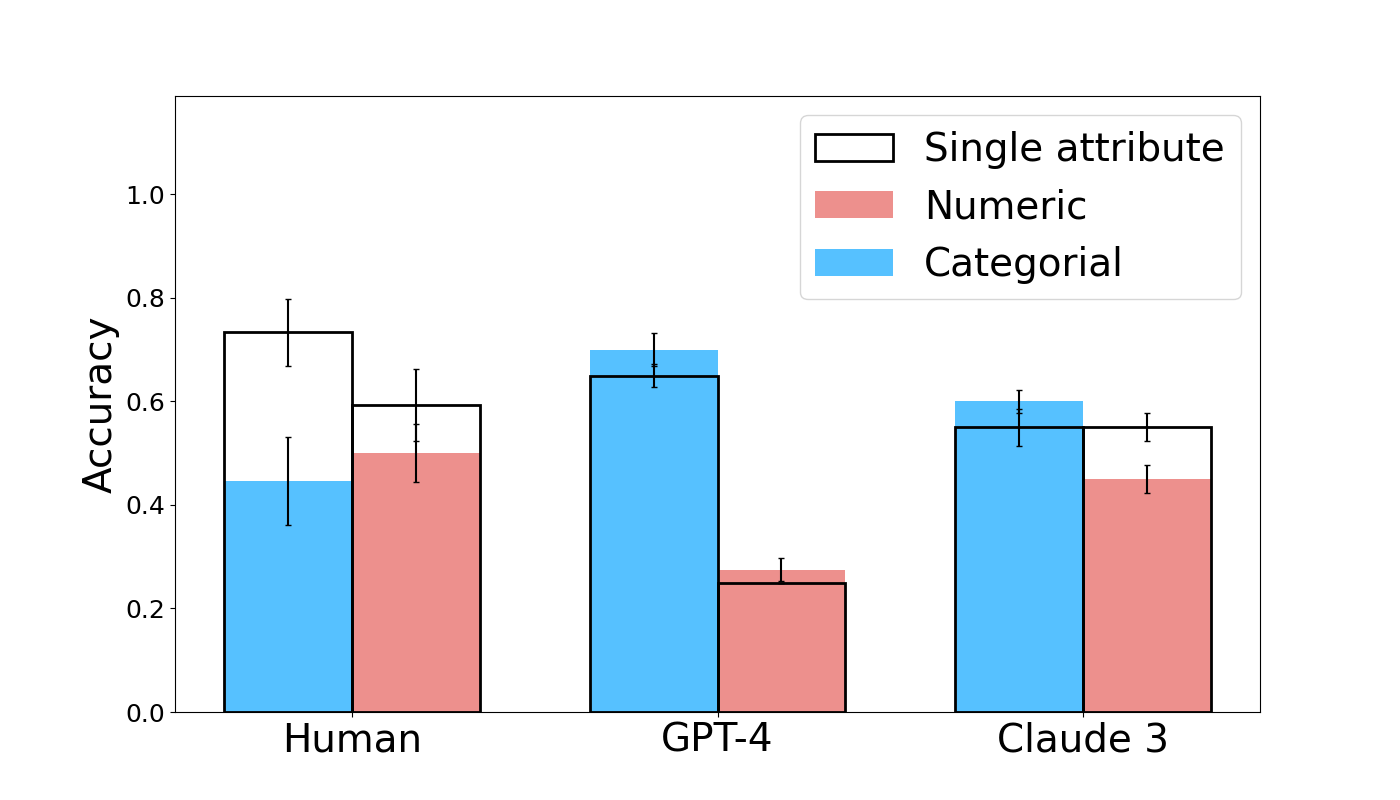

The image is a grouped bar chart comparing the accuracy of three groups (Human, GPT-4, and Claude 3) on three task types: "Single attribute," "Numeric," and "Categorical." Accuracy is plotted on the y-axis. Each bar carries an error bar, indicating variability or a confidence interval.

### Components/Axes

- **Chart Type**: Grouped bar chart with error bars.

- **Y-Axis**: Labeled "Accuracy," with a linear scale from 0.0 to 1.0, marked at intervals of 0.2 (0.0, 0.2, 0.4, 0.6, 0.8, 1.0).

- **X-Axis**: Three categorical groups: "Human," "GPT-4," and "Claude 3."

- **Legend**: Located in the top-right corner of the chart area. It defines three bar types:

  - **Single attribute**: White bar with a black outline.

  - **Numeric**: Solid pink/salmon-colored bar.

  - **Categorical**: Solid light blue bar.

- **Data Series**: Each group (Human, GPT-4, Claude 3) contains two or three bars, representing the attribute types. The "Single attribute" bar appears only for Human and Claude 3, not for GPT-4.

### Detailed Analysis

**Human Group (Leftmost Cluster):**

- **Categorical (Blue Bar)**: Height is approximately 0.45. The error bar extends from roughly 0.36 to 0.53.

- **Numeric (Pink Bar)**: Height is approximately 0.50. The error bar extends from roughly 0.44 to 0.56.

- **Single attribute (White Bar)**: Height is approximately 0.73. The error bar extends from roughly 0.67 to 0.80.

**GPT-4 Group (Center Cluster):**

- **Categorical (Blue Bar)**: Height is approximately 0.70. The error bar extends from roughly 0.63 to 0.74.

- **Numeric (Pink Bar)**: Height is approximately 0.25. The error bar extends from roughly 0.22 to 0.29.

- **Single attribute**: No bar is present for this category.

**Claude 3 Group (Rightmost Cluster):**

- **Categorical (Blue Bar)**: Height is approximately 0.60. The error bar extends from roughly 0.52 to 0.62.

- **Numeric (Pink Bar)**: Height is approximately 0.45. The error bar extends from roughly 0.42 to 0.47.

- **Single attribute (White Bar)**: Height is approximately 0.55. The error bar extends from roughly 0.51 to 0.58.
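
The readings above can be collected into a small data structure to make comparisons easier. The following is a minimal sketch, assuming the approximate values listed in this section; the names `READINGS`, `categorical_minus_numeric`, and `error_bar_widths` are illustrative and not part of the original figure or its source data.

```python
# Approximate values read off the chart: (bar height, error-bar low, error-bar high).
# These are visual estimates taken from the description, not published data.
READINGS = {
    "Human": {
        "Categorical": (0.45, 0.36, 0.53),
        "Numeric": (0.50, 0.44, 0.56),
        "Single attribute": (0.73, 0.67, 0.80),
    },
    "GPT-4": {
        # No "Single attribute" bar is shown for GPT-4.
        "Categorical": (0.70, 0.63, 0.74),
        "Numeric": (0.25, 0.22, 0.29),
    },
    "Claude 3": {
        "Categorical": (0.60, 0.52, 0.62),
        "Numeric": (0.45, 0.42, 0.47),
        "Single attribute": (0.55, 0.51, 0.58),
    },
}


def categorical_minus_numeric(group):
    """Signed gap between the Categorical and Numeric bar heights for one group."""
    bars = READINGS[group]
    return round(bars["Categorical"][0] - bars["Numeric"][0], 2)


def error_bar_widths():
    """Map (group, task) to the full width of its error bar (high minus low)."""
    return {
        (group, task): round(high - low, 2)
        for group, bars in READINGS.items()
        for task, (_, low, high) in bars.items()
    }


if __name__ == "__main__":
    for group in READINGS:
        print(group, categorical_minus_numeric(group))
    # List error bars from widest to narrowest.
    for key, width in sorted(error_bar_widths().items(), key=lambda kv: -kv[1]):
        print(key, width)
```

Because the inputs are estimates to roughly two decimal places, the derived gaps and widths carry the same imprecision and should be treated as approximate.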

### Key Observations

1. **Performance Disparity by Task Type**: There is a clear divergence in performance between Numeric and Categorical tasks for the AI models. GPT-4 shows the largest gap, with high Categorical accuracy (~0.70) but very low Numeric accuracy (~0.25). Humans show a smaller gap, with Numeric (~0.50) slightly outperforming Categorical (~0.45).

2. **Human Superiority on Single Attribute Tasks**: Humans achieve the highest accuracy on the chart (~0.73) on the "Single attribute" task. Claude 3's accuracy on this task (~0.55) is notably lower, and the two error bars do not overlap. GPT-4 has no bar for this task, so it cannot be compared.

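3. **Model Comparison**: GPT-4 has the highest Categorical accuracy on the chart (~0.70), ahead of Claude 3 (~0.60) and Humans (~0.45). Claude 3 shows a smaller gap between Categorical and Numeric accuracy (~0.15) than GPT-4 (~0.45), and its Numeric accuracy (~0.45) is well above GPT-4's (~0.25) and close to the Human value (~0.50).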

4. **Error Bar Variability**: The error bars for Human performance are the widest on the chart, particularly for Categorical tasks (roughly 0.36 to 0.53), suggesting higher uncertainty or variability in those measurements. The error bars for Claude 3 and for GPT-4's Numeric bar are comparatively tight.
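
One rough way to judge whether two bars differ meaningfully is to check whether their error bars overlap. The sketch below applies that heuristic to a few of the approximate ranges from the Detailed Analysis. It is not a significance test, and the chart does not state what the error bars represent, so the results are only indicative. The helper name `intervals_overlap` and the variable names are illustrative.

```python
def intervals_overlap(a, b):
    """Return True if closed intervals a=(low, high) and b=(low, high) share any point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Approximate error-bar ranges (low, high), read visually from the chart.
human_numeric = (0.44, 0.56)
human_categorical = (0.36, 0.53)
human_single = (0.67, 0.80)
claude_single = (0.51, 0.58)
gpt4_numeric = (0.22, 0.29)
gpt4_categorical = (0.63, 0.74)

if __name__ == "__main__":
    print("Human Numeric vs Categorical overlap:",
          intervals_overlap(human_numeric, human_categorical))
    print("Human vs Claude 3 Single attribute overlap:",
          intervals_overlap(human_single, claude_single))
    print("GPT-4 Numeric vs Categorical overlap:",
          intervals_overlap(gpt4_numeric, gpt4_categorical))
```

Under these estimates, the Human Numeric and Categorical ranges overlap, so the small Human gap may not be meaningful, while the GPT-4 Numeric and Categorical ranges and the Human and Claude 3 "Single attribute" ranges do not overlap.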

### Interpretation

This chart suggests that humans and current large language models (LLMs) differ in how their accuracy varies across task types. Humans show a balanced profile across Numeric and Categorical tasks (~0.50 and ~0.45), though at moderate accuracy, and reach their highest accuracy (~0.73) on "Single attribute" tasks.

The LLMs, however, show a pronounced specialization or weakness. GPT-4's profile indicates a strong capability for categorical reasoning (e.g., classifying, sorting) but a significant deficit in numeric reasoning (e.g., arithmetic, quantitative comparison). Claude 3 mitigates this weakness somewhat, achieving a more even performance profile, but at the cost of lower peak accuracy in its stronger category compared to GPT-4.

The absence of a "Single attribute" bar for GPT-4 is notable. It could imply that this specific benchmark was not run for GPT-4, or that the task was not applicable to its evaluation framework. The data show that the models can exceed human accuracy on specific task types (both GPT-4 and Claude 3 on Categorical tasks) while falling short elsewhere: GPT-4 on Numeric tasks and Claude 3 on "Single attribute" tasks. Neither model matches the balance humans show between Numeric and Categorical tasks. The error bars also indicate uncertainty in each estimate, so small differences between bars should be read with caution.