TECHNICAL ASSET FINGERPRINT

f41c5329c5f1b27616eff048

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Diagram: LeanAgent Workflow

### Overview

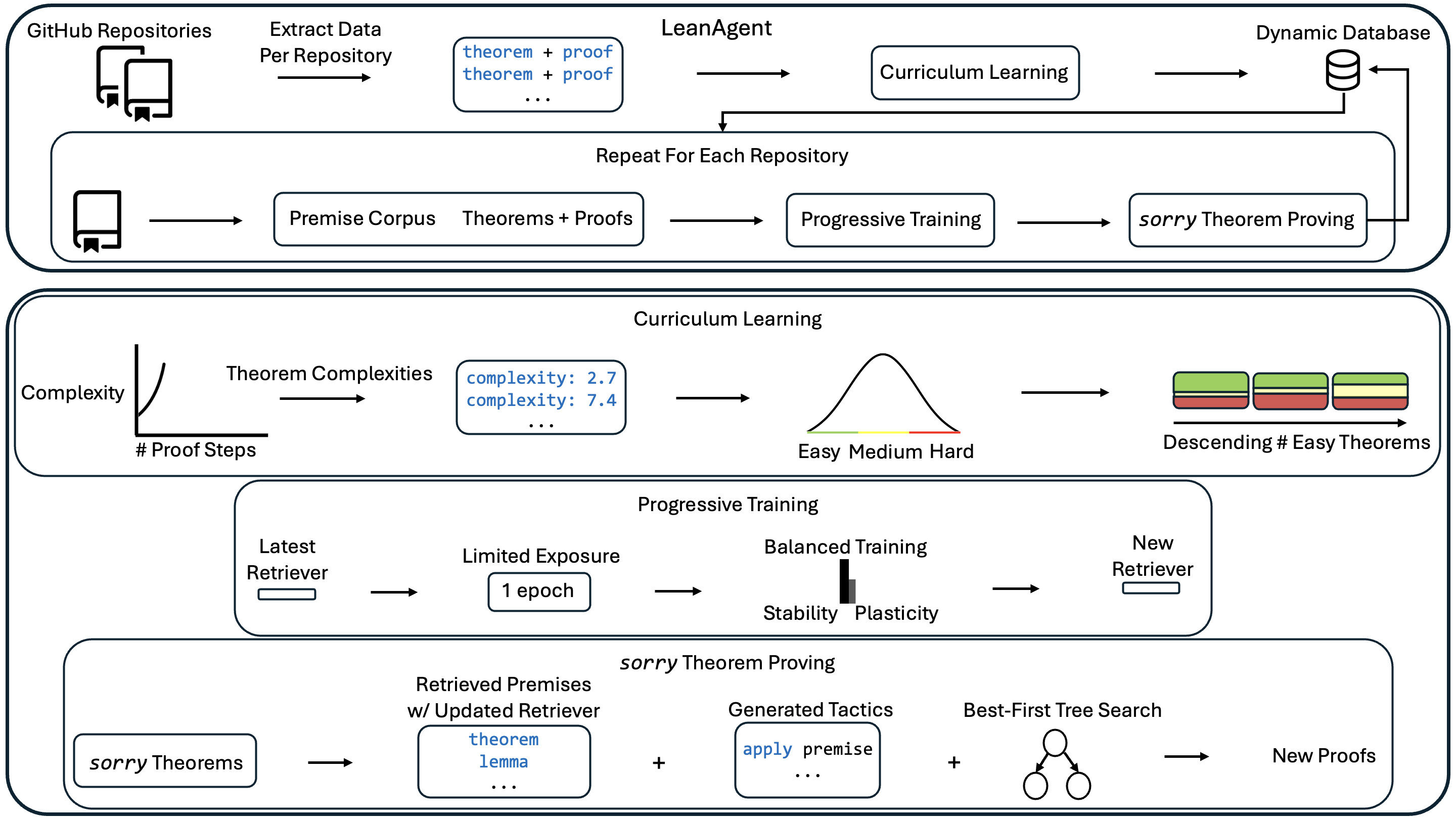

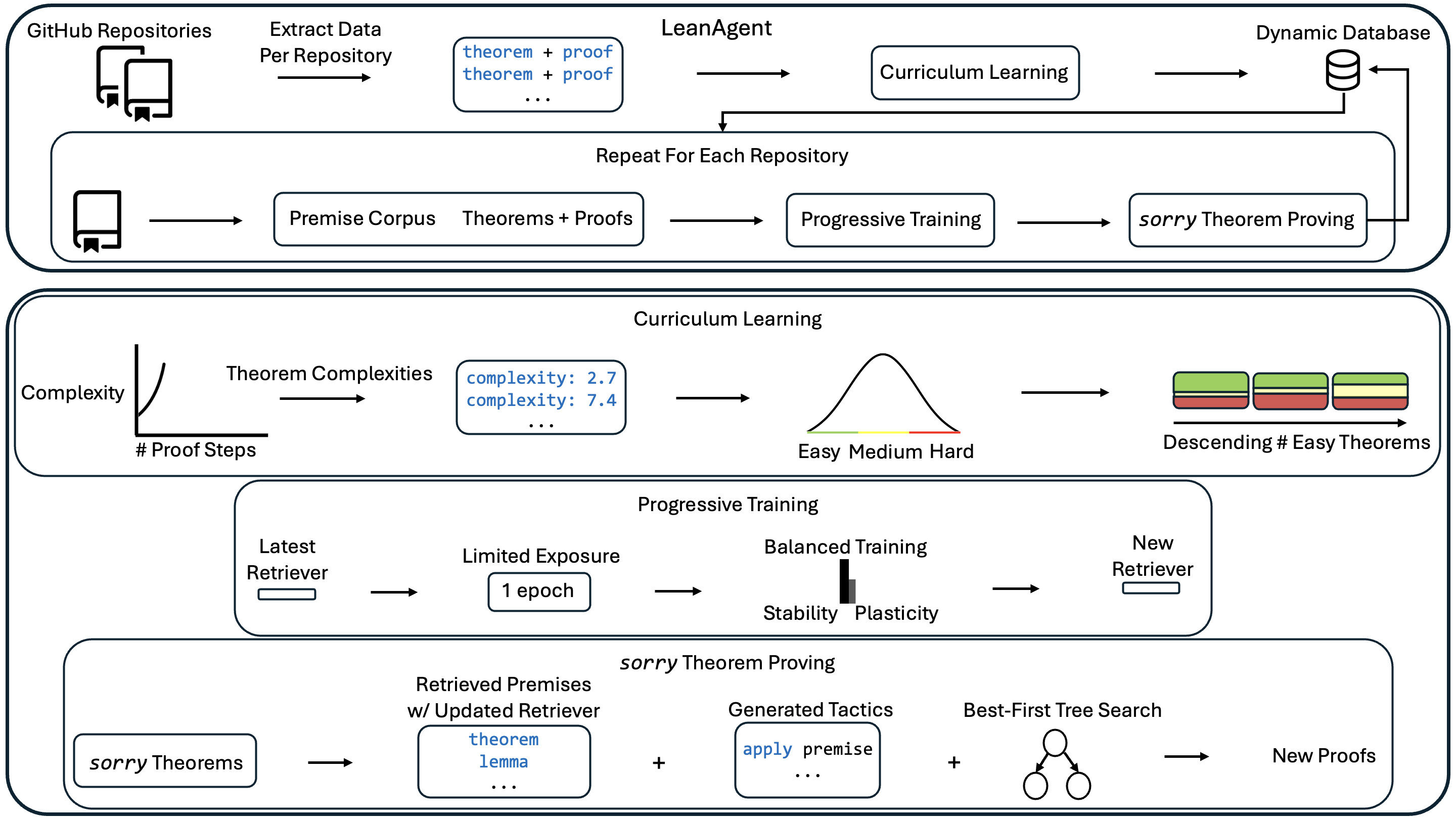

The image is a diagram illustrating the workflow of LeanAgent, a system for automated theorem proving. The diagram is divided into several stages, including data extraction from GitHub repositories, curriculum learning, progressive training, and theorem proving. The workflow involves iterative processes and feedback loops.

### Components/Axes

* **GitHub Repositories:** Represents the source of theorem and proof data.

* **Extract Data Per Repository:** Process of extracting theorem and proof data from GitHub repositories.

* **LeanAgent:** The overall system for automated theorem proving.

* **Dynamic Database:** A database used to store and retrieve theorems and proofs.

* **Curriculum Learning:** A stage that organizes the training data by theorem difficulty (easy, medium, hard).

* **Repeat For Each Repository:** Indicates an iterative process.

* **Premise Corpus Theorems + Proofs:** A collection of premises, theorems, and proofs.

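* **Progressive Training:** A training stage that turns the latest retriever into a new retriever through limited exposure (1 epoch) and balanced training.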

* **sorry Theorem Proving:** The final stage, which attempts to prove the "sorry" theorems and produces new proofs.

* **Complexity vs. # Proof Steps:** A graph showing the relationship between theorem complexity and the number of proof steps. The y-axis is labeled "Complexity" and the x-axis is labeled "# Proof Steps". The graph shows a curve that increases rapidly.

* **Theorem Complexities:** Indicates the complexity of theorems. Examples given are "complexity: 2.7" and "complexity: 7.4".

* **Easy Medium Hard:** Labels indicating the difficulty levels in curriculum learning. These are associated with a bell curve.

* **Descending # Easy Theorems:** Indicates a descending order of easy theorems. The blocks are colored green, yellow, and red.

* **Latest Retriever:** The most recent retriever model.

* **Limited Exposure:** A stage with limited exposure, indicated as "1 epoch".

* **Balanced Training:** A training stage with balanced stability and plasticity.

* **Stability Plasticity:** Labels indicating the balance between stability and plasticity.

* **New Retriever:** The updated retriever model.

* **sorry Theorems:** Theorems that need to be proven.

* **Retrieved Premises w/ Updated Retriever:** Premises retrieved using an updated retriever. Examples given are "theorem" and "lemma".

* **Generated Tactics:** Tactics generated for theorem proving. Example given is "apply premise".

* **Best-First Tree Search:** A search algorithm used for theorem proving.

* **New Proofs:** The resulting proofs.

### Detailed Analysis

* **Data Extraction:** The process starts with extracting data from GitHub repositories. The extracted data includes theorems and proofs.

* **LeanAgent Core:** The extracted data is fed into the LeanAgent system.

* **Curriculum Learning Loop:** The system uses curriculum learning, which involves a dynamic database. The process is repeated for each repository.

* **Complexity Graph:** The graph shows that as the number of proof steps increases, the complexity of the theorem also increases.

* **Curriculum Stages:** The curriculum learning stage involves easy, medium, and hard difficulty levels. The number of easy theorems decreases.

* **Progressive Training Stages:** The progressive training stage involves a latest retriever, limited exposure (1 epoch), and balanced training.

* **Theorem Proving Stages:** The theorem proving stage involves retrieved premises, generated tactics, and a best-first tree search.
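
The stages listed above can be read as one loop over repositories. The following Python sketch is only an illustration of that reading: the diagram gives no implementation details, so `Theorem`, `DynamicDatabase`, `run_workflow`, and the `train_step` and `prove` callables are all hypothetical names, and the "retriever" is whatever object the caller passes in.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Theorem:
    name: str
    proof: Optional[str] = None  # None stands for a `sorry` (unproven) theorem


@dataclass
class DynamicDatabase:
    # Hypothetical stand-in for the "Dynamic Database" box in the diagram.
    repos: Dict[str, List[Theorem]] = field(default_factory=dict)

    def add_repository(self, repo: str, theorems: List[Theorem]) -> None:
        self.repos[repo] = list(theorems)

    def sorry_theorems(self, repo: str) -> List[Theorem]:
        return [t for t in self.repos[repo] if t.proof is None]


def run_workflow(extracted, train_step, prove, retriever):
    """extracted maps repo name -> theorems, assumed to be in curriculum order."""
    db = DynamicDatabase()
    for repo, theorems in extracted.items():       # "Repeat For Each Repository"
        db.add_repository(repo, theorems)
        retriever = train_step(retriever, db.repos[repo])  # progressive training
        for thm in db.sorry_theorems(repo):        # sorry theorem proving
            thm.proof = prove(thm, retriever)      # stays None if no proof is found
    return db, retriever
```

Passing the training and proving steps in as callables keeps the sketch independent of any particular model or prover.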

### Key Observations

* The diagram illustrates a cyclical workflow, with feedback loops between different stages.

* The curriculum learning stage involves a progression from easy to hard difficulty levels.

* The progressive training stage aims to balance stability and plasticity.

* The theorem proving stage uses a best-first tree search algorithm.

### Interpretation

The diagram provides a high-level overview of the LeanAgent workflow for automated theorem proving. The system leverages data from GitHub repositories, employs curriculum learning and progressive training techniques, and uses a best-first tree search algorithm for theorem proving. The iterative nature of the workflow suggests a continuous learning and improvement process. The balance between stability and plasticity in the progressive training stage is crucial for adapting to new data while maintaining existing knowledge. The complexity graph indicates that more complex theorems require more proof steps.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: LeanAgent Training Pipeline

### Overview

This diagram illustrates the training pipeline for a "LeanAgent" system, likely an AI agent designed for theorem proving. The pipeline involves extracting data from GitHub repositories, utilizing curriculum learning and progressive training techniques, and ultimately generating new proofs. The diagram is segmented into four main sections, visually separated by colored backgrounds: Data Extraction, Curriculum Learning, Progressive Training, and sorry Theorem Proving. Arrows indicate the flow of data and processes.

### Components/Axes

The diagram contains the following key components:

* **Data Sources:** GitHub Repositories, Premise Corpus

* **Processes:** Extract Data Per Repository, Curriculum Learning, Progressive Training, sorry Theorem Proving, Best-First Tree Search

* **Data Representations:** theorem + proof, Theorems + Proofs, Theorem Complexities, Retrieved Premises w/ Updated Retriever, Generated Tactics

* **Metrics:** Complexity, # Proof Steps

* **Databases:** Dynamic Database

* **Visualizations:** Distribution of Theorem Complexities (Easy, Medium, Hard), Descending # of Easy Theorems.

* **Parameters:** 1 epoch, Stability, Plasticity

### Detailed Analysis or Content Details

**1. Data Extraction (Top Section - Light Orange)**

* Data is extracted from "GitHub Repositories" and a "Premise Corpus".

* The extracted data consists of "theorem + proof" pairs.

* This data feeds into both "Curriculum Learning" and "Progressive Training".

* The process is repeated for each repository.

**2. Curriculum Learning (Second Section - Light Green)**

* **Complexity vs. # Proof Steps:** A visual representation shows the relationship between theorem complexity and the number of proof steps.

* The x-axis is labeled "# Proof Steps".

* The y-axis is labeled "Complexity".

* A data point is shown with "complexity: 2.7" and another with "complexity: 7.4". The ellipsis ("...") indicates more data points exist.

* **Theorem Complexity Distribution:** A bell curve represents the distribution of theorem complexities, categorized as "Easy", "Medium", and "Hard".

* **Descending # of Easy Theorems:** A horizontal bar chart shows a descending number of easy theorems. The bars are colored green, yellow, and red.
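
The curriculum steps above can be sketched in Python. The diagram does not state how complexity is computed; the example values 2.7 and 7.4 happen to match e^1 and e^2, so this sketch assumes complexity = exp(# proof steps). The 33rd/67th percentile cut-offs for Easy/Medium/Hard and all function names are likewise assumptions made for illustration.

```python
import math


def complexity(num_proof_steps):
    # Assumption: exponential in the number of proof steps (e^1 ~ 2.7, e^2 ~ 7.4).
    return math.exp(num_proof_steps)


def percentile(sorted_values, q):
    # Nearest-rank percentile on an already sorted, non-empty list.
    rank = max(1, math.ceil(q / 100 * len(sorted_values)))
    return sorted_values[rank - 1]


def label(c, easy_max, medium_max):
    if c <= easy_max:
        return "easy"
    if c <= medium_max:
        return "medium"
    return "hard"


def order_repositories(repos):
    """repos maps a repository name to a list of proof-step counts, one per theorem."""
    ordered = sorted(complexity(s) for steps in repos.values() for s in steps)
    easy_max, medium_max = percentile(ordered, 33), percentile(ordered, 67)
    easy_counts = {
        name: sum(1 for s in steps if label(complexity(s), easy_max, medium_max) == "easy")
        for name, steps in repos.items()
    }
    # Curriculum order: repositories with the most easy theorems come first.
    return sorted(repos, key=lambda name: easy_counts[name], reverse=True)


print(order_repositories({"a": [1, 1, 1, 5], "b": [1, 6, 7], "c": [8, 9]}))
# ['a', 'b', 'c']
```

Computing the thresholds over all repositories together, rather than per repository, makes the "easy" counts comparable across repositories.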

**3. Progressive Training (Third Section - Light Blue)**

* **Latest Retriever -> Limited Exposure:** Data flows from the "Latest Retriever" to "Limited Exposure" (1 epoch).

* **Limited Exposure -> Balanced Training:** "Limited Exposure" feeds into "Balanced Training", which has parameters "Stability" and "Plasticity".

* **Balanced Training -> New Retriever:** "Balanced Training" outputs a "New Retriever".

**4. sorry Theorem Proving (Bottom Section - Light Purple)**

* **sorry Theorems -> Retrieved Premises:** "sorry Theorems" are input into a process that retrieves premises with an updated retriever. The retrieved premises include "theorem" and "lemma".

* **Retrieved Premises + Generated Tactics -> Best-First Tree Search:** Retrieved premises are combined with "Generated Tactics" (e.g., "apply premise").

* **Best-First Tree Search -> New Proofs:** The combined data is processed by "Best-First Tree Search" to generate "New Proofs".
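
A generic best-first search over proof states can be sketched as follows. This is a toy under stated assumptions, not the system's prover: the scoring rule (cumulative log-probability of the tactics), the `expand` and `is_proved` callbacks, and the `TOY_TACTICS` table are all hypothetical stand-ins for a real tactic generator and proof checker.

```python
import heapq
import itertools
import math


def best_first_search(initial_state, expand, is_proved, max_expansions=1000):
    """expand(state) yields (tactic, next_state, probability) candidates."""
    tie = itertools.count()  # tie-breaker so the heap never compares states
    # heapq is a min-heap, so the negated cumulative log-probability is stored.
    frontier = [(0.0, next(tie), initial_state, [])]
    visited = set()
    for _step in range(max_expansions):
        if not frontier:
            return None
        neg_logp, _order, state, tactics = heapq.heappop(frontier)
        if is_proved(state):
            return tactics
        if state in visited:
            continue
        visited.add(state)
        for tactic, nxt, prob in expand(state):
            entry = (neg_logp - math.log(prob), next(tie), nxt, tactics + [tactic])
            heapq.heappush(frontier, entry)
    return None


# Toy tactic table: a state is a tuple of open goals, and each hypothetical
# candidate closes or rewrites the first goal with a made-up probability.
TOY_TACTICS = {
    "a = a": [("rfl", (), 0.9)],
    "a + 0 = a": [("simp", (), 0.6), ("rw [add_zero]", ("a = a",), 0.4)],
}


def expand(state):
    first, rest = state[0], state[1:]
    for tactic, new_goals, prob in TOY_TACTICS.get(first, []):
        yield tactic, new_goals + rest, prob


proof = best_first_search(("a + 0 = a", "a = a"), expand, lambda s: len(s) == 0)
print(proof)  # ['simp', 'rfl']
```

The search always expands the most probable partial proof so far, which is what distinguishes it from depth-first or breadth-first exploration of the same tree.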

### Key Observations

* The pipeline is iterative, with the "New Retriever" from Progressive Training feeding back into the "sorry Theorem Proving" stage.

* The Curriculum Learning section emphasizes the importance of complexity and proof step length in organizing the training data.

* The Progressive Training section highlights the balance between stability and plasticity in the learning process.

* The diagram uses visual cues (color-coding, arrows) to clearly indicate the flow of data and processes.

* The "sorry Theorems" component suggests the system is initially dealing with incomplete or unproven theorems.

### Interpretation

The diagram depicts an AI training pipeline designed to learn theorem proving. The use of curriculum learning suggests a strategy of starting with simpler theorems and gradually increasing complexity. Progressive training, with its emphasis on stability and plasticity, likely aims to prevent catastrophic forgetting while still allowing the agent to adapt to new information. The iterative nature of the pipeline, with the "New Retriever" feeding back into the system, indicates a continuous learning process. The "Best-First Tree Search" component indicates that a search algorithm is used to explore potential proof paths. The overall goal appears to be to automate theorem proving by leveraging data from existing repositories and continuously refining the agent's learning capabilities. The "sorry Theorems" input indicates that the system targets theorems whose proofs have been left open, since `sorry` is the placeholder Lean uses for an omitted proof. The diagram provides a high-level overview of the system's architecture and training methodology, without delving into the specific algorithms or implementation details.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## System Architecture Diagram: LeanAgent - Automated Theorem Proving Pipeline

### Overview

This image is a technical system architecture diagram illustrating a multi-stage pipeline for automated theorem proving, specifically within the Lean formal proof assistant ecosystem. The diagram is divided into three primary, nested functional blocks that describe a continuous learning and data processing loop. The overall flow depicts how data is extracted from repositories, processed through curriculum learning and progressive training, and used to prove previously unproven ("sorry") theorems, with the results feeding back into a dynamic database.

### Components/Axes

The diagram is structured into three main rounded rectangular containers, each representing a major subsystem or process:

1. **Top Container (LeanAgent Core Loop):**

    * **Input:** "GitHub Repositories" (represented by a folder icon).

    * **Process Flow:** "Extract Data Per Repository" → A data box containing "theorem + proof" entries (in blue text) → "LeanAgent" → "Curriculum Learning" → "Dynamic Database" (represented by a cylinder icon).

    * **Feedback Loop:** A large arrow labeled "Repeat For Each Repository" encloses a secondary process: A repository icon → "Premise Corpus" and "Theorems + Proofs" → "Progressive Training" → "*sorry* Theorem Proving" → which feeds back into the "Dynamic Database".

2. **Middle Container (Curriculum Learning Subsystem):**

    * **Input Graph:** A small line graph with Y-axis "Complexity" and X-axis "# Proof Steps", showing a positive correlation.

    * **Process Flow:** "Theorem Complexities" → A data box with example values: "complexity: 2.7", "complexity: 7.4" (in blue text) → A bell curve distribution labeled "Easy Medium Hard" on its X-axis → A set of three stacked, colored blocks (green, yellow, red) with an arrow pointing to the label "Descending # Easy Theorems".

3. **Bottom Container (Progressive Training & Proving Subsystems):**

    * **Progressive Training Sub-block:**

        * "Latest Retriever" (represented by a small rectangle) → "Limited Exposure" with a box labeled "1 epoch" → "Balanced Training" (represented by a bar chart icon comparing "Stability" and "Plasticity") → "New Retriever".

    * **sorry Theorem Proving Sub-block:**

        * "*sorry* Theorems" → "Retrieved Premises w/ Updated Retriever" (a data box containing "theorem", "lemma" in blue text) + "Generated Tactics" (a data box containing "apply premise" in blue text) + "Best-First Tree Search" (represented by a tree search icon) → "New Proofs".

### Detailed Analysis

* **Data Flow & Iteration:** The system is fundamentally iterative. It processes one GitHub repository at a time ("Repeat For Each Repository"), extracting formal mathematical theorems and their proofs. This data is used to train and update the system, which then attempts to prove new theorems. The results ("New Proofs") are stored in a "Dynamic Database," which presumably informs future training cycles.

* **Curriculum Learning Mechanism:** This component organizes training data by difficulty. Theorems are assigned a complexity score (e.g., 2.7, 7.4) likely based on the number of proof steps. They are then categorized into a distribution (Easy, Medium, Hard). The training strategy involves presenting theorems in "Descending # Easy Theorems," suggesting a schedule that starts with many easy problems and gradually introduces harder ones.

* **Progressive Training Mechanism:** This focuses on updating a "Retriever" model (likely a component that finds relevant premises/lemmas). It uses "Limited Exposure" (1 epoch) to prevent catastrophic forgetting, followed by "Balanced Training" to manage the trade-off between "Stability" (retaining old knowledge) and "Plasticity" (learning new information).

* **Theorem Proving Engine:** The "*sorry* Theorem Proving" module takes theorems that are incomplete (marked with `sorry` in Lean). It uses the updated retriever to find relevant premises, generates potential proof tactics (e.g., `apply premise`), and employs a "Best-First Tree Search" algorithm to explore the proof space and generate "New Proofs."
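
For readers unfamiliar with Lean, a minimal Lean 4 illustration of a `sorry` theorem and one way it could be closed is given below. The theorem names `toy_add_zero` and `toy_add_zero'` are made up for this example and do not come from the diagram; `Nat.add_zero` is an existing lemma in Lean's core library, playing the role of a retrieved premise.

```lean
-- Before: the proof is omitted with `sorry`. Lean accepts the statement
-- but warns that the declaration uses `sorry`.
theorem toy_add_zero (n : Nat) : n + 0 = n := by
  sorry

-- After: one possible proof, obtained by applying an existing premise.
theorem toy_add_zero' (n : Nat) : n + 0 = n := by
  apply Nat.add_zero
```

The second proof mirrors the "apply premise" tactic shown in the diagram's "Generated Tactics" box: the tactic is only useful once a relevant premise has been found.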

### Key Observations

* **Closed-Loop System:** The architecture is a closed feedback loop where outputs (new proofs) become inputs for future learning, enabling continuous improvement.

* **Explicit Handling of Complexity:** The system doesn't treat all theorems equally. It explicitly measures complexity and structures learning accordingly (Curriculum Learning).

* **Focus on Incremental Updates:** The "Progressive Training" block highlights a concern for efficiently updating the AI model without retraining from scratch, emphasizing stability-plasticity balance.

* **Integration of Symbolic and Neural Methods:** The pipeline combines neural/retrieval components ("Retriever," "Generated Tactics") with classical symbolic AI search ("Best-First Tree Search").

### Interpretation

This diagram outlines a sophisticated, autonomous AI system designed to advance the state of automated mathematical reasoning. The core innovation appears to be the integration of three key machine learning paradigms into a single, continuous pipeline:

1. **Self-Improvement via Data Flywheel:** By mining public code repositories (GitHub), the system bootstraps its own training data, creating a virtuous cycle where more data leads to better proving, which in turn generates more data (new proofs).

2. **Pedagogically-Structured Learning:** The "Curriculum Learning" module mimics human education, suggesting that organizing training data from simple to complex is crucial for efficient learning in formal domains. This likely helps the model build foundational knowledge before tackling advanced concepts.

3. **Efficient Lifelong Learning:** The "Progressive Training" module addresses a key challenge in AI: updating a model with new information without forgetting old knowledge. This is essential for a system that must continuously learn from an ever-growing database of theorems.

The ultimate goal is to reduce the need for human-written proofs (the `sorry` placeholders) by having the AI agent autonomously discover and formalize proofs. This has significant implications for formal verification, mathematical research, and the development of more reliable software systems. The architecture suggests a move away from static, one-time trained models towards dynamic, self-improving agents that operate continuously on live data sources.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Diagram: Automated Theorem Proving System Architecture

### Overview

The diagram illustrates a multi-stage automated theorem proving system with iterative learning and curriculum adaptation. It shows data flow from GitHub repositories through LeanAgent, a curriculum learning framework, progressive training mechanisms, and specialized handling of "sorry Theorems" (theorems whose proofs are left as `sorry` placeholders in Lean).

### Components/Axes

**Key Components:**

1. **Input Layer**

    - GitHub Repositories (source of theorems/proofs)

    - Extract Data Per Repository → Premise Corpus + Theorems + Proofs

2. **Core Processing**

    - LeanAgent (theorem proving engine)

    - Curriculum Learning (complexity-based adaptation)

    - Progressive Training (retriever evolution)

3. **Output Layer**

    - Dynamic Database (knowledge repository)

    - sorry Theorem Proving (specialized handling)

**Visual Elements:**

- Color-coded complexity levels (green=low, yellow=medium, red=high)

- Feedback loops between components

- Iterative refinement arrows

### Detailed Analysis

**1. Data Ingestion Pipeline**

- GitHub repositories → Extract Data Per Repository

- Creates: Premise Corpus + Theorems + Proofs

**2. Curriculum Learning System**

- Complexity → Theorem Complexities (e.g., 2.7, 7.4)

- Complexity graph shows:

    - "# Proof Steps" vs. "Complexity"

- Distribution: Easy (green) → Medium (yellow) → Hard (red)

- Descending # Easy Theorems over time

**3. Progressive Training Mechanism**

- Latest Retriever → Limited Exposure (1 epoch)

- Balanced Training (Stability vs Plasticity)

- New Retriever generation
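
The stability/plasticity trade-off behind "Limited Exposure (1 epoch)" can be illustrated with a deliberately tiny model. This is not the retriever from the diagram: the one-parameter model `y = w * x`, the two synthetic tasks, the learning rate, and the `forgetting`/`gain` measures are all assumptions chosen to make the effect visible.

```python
def mse(w, data):
    # Mean squared error of the one-parameter model y = w * x.
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def train(w, data, epochs, lr=0.01):
    # Plain SGD: one pass over the data per epoch.
    for _ in range(epochs):
        for x, y in data:
            w -= lr * 2 * x * (w * x - y)  # gradient of (w*x - y)^2 w.r.t. w
    return w


old_task = [(x, 2.0 * x) for x in (1.0, 2.0, 3.0)]  # what the "latest" model already fits
new_task = [(x, 3.0 * x) for x in (1.0, 2.0, 3.0)]  # data from a newly added repository

latest_w = 2.0  # perfectly fitted to the old task
for epochs in (1, 10):
    new_w = train(latest_w, new_task, epochs)
    forgetting = mse(new_w, old_task) - mse(latest_w, old_task)  # lower = more stable
    gain = mse(latest_w, new_task) - mse(new_w, new_task)        # higher = more plastic
    print(f"epochs={epochs}: forgetting={forgetting:.3f}, gain={gain:.3f}")
```

In this toy, more epochs on the new data improve the fit to the new task but move the model further from the old one, which is the tension the "Stability vs Plasticity" label refers to.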

**4. sorry Theorem Proving**

- Input: sorry Theorems

- Process:

    - Retrieved Premises (updated retriever)

    - Generated Tactics

    - Best-First Tree Search

- Output: New Proofs

### Key Observations

1. **Iterative Learning**: Feedback loops between components suggest continuous improvement

2. **Complexity Adaptation**: Curriculum learning adjusts difficulty based on proof complexity metrics

3. **Retriever Evolution**: Progressive training creates increasingly sophisticated theorem retrieval systems

4. **Specialized Handling**: sorry theorems go through a distinct processing pipeline that combines premise retrieval, tactic generation, and best-first tree search

### Interpretation

This architecture demonstrates a sophisticated approach to automated theorem proving that:

1. **Adapts Difficulty**: Uses complexity metrics to create personalized learning paths

2. **Balances Stability/Plasticity**: Progressive training maintains core knowledge while enabling adaptation

3. **Handles Open Proofs**: Specialized sorry Theorem Proving targets theorems whose proofs are still `sorry` placeholders

4. **Leverages Community Knowledge**: GitHub repositories serve as initial knowledge base

The system appears designed to create self-improving theorem provers that can handle increasingly complex mathematical problems through iterative learning and curriculum adaptation. The "sorry Theorem" handling suggests a focus on filling in proofs that were previously left open, using retrieval and search.
