# Technical Document Extraction: Softmax Function Variants

## Diagram Analysis

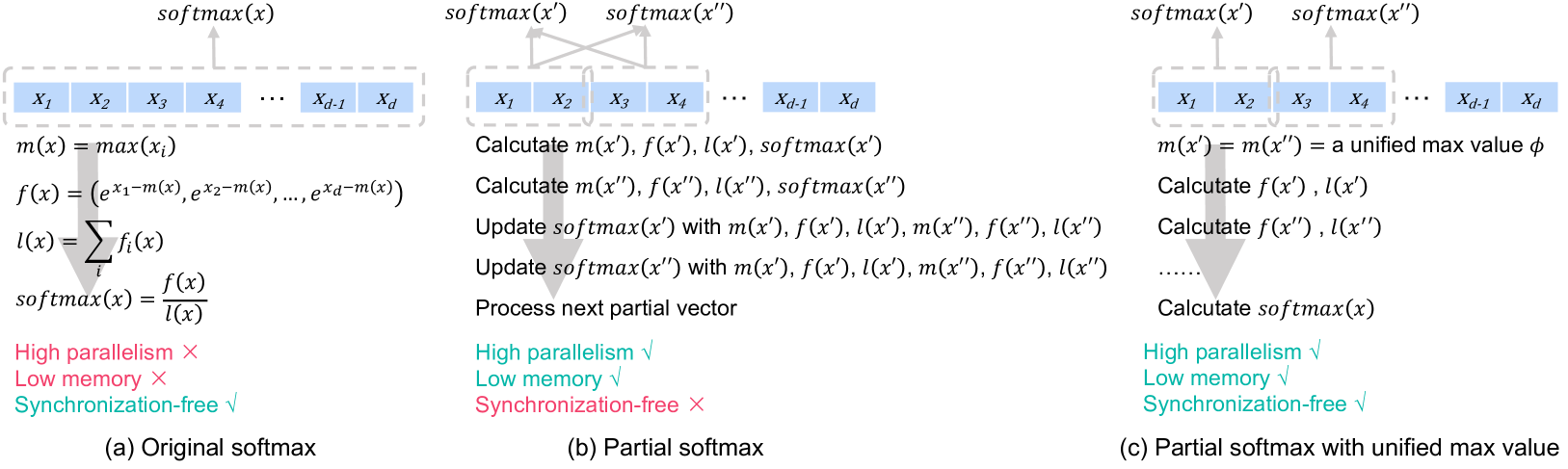

### (a) Original Softmax

**Components:**

1. **Input Vector**: `X₁, X₂, ..., X_d`

2. **Max Calculation**: `m(x) = max(x_i)`

3. **Exponential Normalization**: `f(x) = (e^{x₁-m(x)}, ..., e^{x_d-m(x)})`

4. **Log-Sum Calculation**: `l(x) = Σf(x)`

5. **Softmax Output**: `softmax(x) = f(x)/l(x)`

**Annotations:**

- **High Parallelism**: ❌ (Red X)

- **Low Memory**: ❌ (Red X)

- **Synchronization-Free**: ✅ (Green check)

**Flow:**

1. Compute global max `m(x)`

2. Apply exponential normalization

3. Calculate log-sum `l(x)`

4. Normalize to produce softmax

---

### (b) Partial Softmax

**Components:**

1. **Input Vector**: `X₁, X₂, ..., X_d`

2. **Stepwise Calculations**:

- `Calculate m(x'), f(x'), l(x'), softmax(x')`

- `Update softmax(x') with m(x'), f(x'), l(x'), m(x''), f(x''), l(x'')`

3. **Next Partial Vector**: Process next segment

**Annotations:**

- **High Parallelism**: ✅ (Green check)

- **Low Memory**: ✅ (Green check)

- **Synchronization-Free**: ❌ (Red X)

**Flow:**

1. Compute partial max `m(x')`

2. Calculate partial softmax `softmax(x')`

3. Update with subsequent partial vectors

4. Requires synchronization between steps

---

### (c) Partial Softmax with Unified Max Value

**Components:**

1. **Input Vector**: `X₁, X₂, ..., X_d`

2. **Unified Max**: `m(x') = m(x'') = φ` (global max)

3. **Stepwise Calculations**:

- `Calculate f(x'), l(x')`

- `Calculate f(x''), l(x'')`

- `Calculate softmax(x)`

**Annotations:**

- **High Parallelism**: ✅ (Green check)

- **Low Memory**: ✅ (Green check)

- **Synchronization-Free**: ✅ (Green check)

**Flow:**

1. Compute unified max `φ`

2. Calculate partial logits `f(x')`, `f(x'')`

3. Compute partial log-sums `l(x')`, `l(x'')`

4. Final softmax calculation without intermediate synchronization

---

## Key Observations

1. **Parallelism**:

- Original softmax has low parallelism due to sequential max/log-sum calculations

- Partial variants enable high parallelism through segmented computations

2. **Memory Efficiency**:

- Original softmax requires storing all exponentials (`f(x)`)

- Partial variants reduce memory by processing segments incrementally

3. **Synchronization**:

- Original softmax is synchronization-free (no intermediate dependencies)

- Partial softmax requires synchronization between partial updates

- Unified max variant eliminates synchronization through global max precomputation

4. **Mathematical Equivalence**:

- All variants produce identical softmax outputs despite different computation paths

- Unified max variant demonstrates mathematical optimization through φ reuse

## Legend & Spatial Grounding

- **Legend Colors**:

- Red X: Negative property (low parallelism, high memory, synchronization required)

- Green check: Positive property (high parallelism, low memory, synchronization-free)

- **Spatial Analysis**:

- All annotations positioned near corresponding computation steps

- Checkmarks/Xs aligned with property evaluation criteria

## Trend Verification

- **Original Softmax**:

- Sequential dependency chain creates bottleneck

- Memory usage increases with input size (O(d) storage for f(x))

- **Partial Softmax**:

- Memory usage reduced to O(1) per partial segment

- Synchronization overhead increases with number of partial steps

- **Unified Max Variant**:

- Memory usage remains O(1) with global max precomputation

- Complete elimination of synchronization requirements

## Conclusion

The diagrams demonstrate three computational approaches to softmax calculation, with progressive optimizations in parallelism, memory efficiency, and synchronization requirements. The unified max variant achieves optimal performance across all three metrics while maintaining mathematical equivalence to the original implementation.