
## Density Plots: Saliency Score Distributions for Llama-3 Models

### Overview

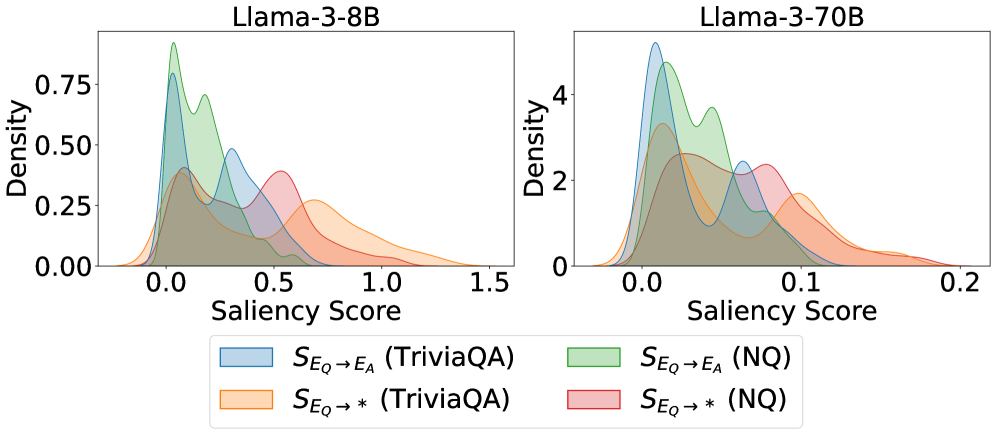

The image shows two side-by-side kernel density estimation (KDE) plots comparing the distribution of saliency scores for two sizes of language model (Llama-3-8B and Llama-3-70B) on two question-answering datasets (TriviaQA and Natural Questions, NQ). Each curve shows the distribution of one saliency measure (attribution target) on one dataset.
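For readers unfamiliar with KDE plots, the sketch below shows how a density curve of this kind is typically built: one Gaussian bump is placed on each score and the bumps are averaged. The figure does not state its kernel or bandwidth, so the Gaussian kernel and Scott's rule (`h = sd * n ** (-1/5)`) are assumptions here, and the scores are synthetic.

```python
import math
import statistics


def scott_bandwidth(samples):
    """Scott's rule of thumb for a 1-D Gaussian KDE: h = sd * n ** (-1/5)."""
    return statistics.stdev(samples) * len(samples) ** (-0.2)


def gaussian_kde(samples, bandwidth=None):
    """Return a function x -> estimated density: the mean of one Gaussian bump per sample."""
    h = bandwidth if bandwidth is not None else scott_bandwidth(samples)
    if h <= 0:
        raise ValueError("bandwidth must be positive")
    norm = 1.0 / (len(samples) * h * math.sqrt(2.0 * math.pi))

    def density(x):
        return norm * sum(math.exp(-0.5 * ((x - s) / h) ** 2) for s in samples)

    return density


# Synthetic, right-skewed scores for illustration only (not data from the figure).
scores = [0.05, 0.1, 0.1, 0.2, 0.3, 0.6, 1.2]
f = gaussian_kde(scores)
grid = [i / 100 for i in range(151)]  # 0.00 .. 1.50, the 8B panel's x-range
curve = [f(x) for x in grid]
```

Because Gaussian kernels have unbounded support, a curve built this way can place some density below 0.0 even when every score is nonnegative, and the apparent number of peaks depends on the chosen bandwidth.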

### Components/Axes

* **Titles:**
  * Left plot: `Llama-3-8B`
  * Right plot: `Llama-3-70B`

* **Axes:**
  * **X-axis (both plots):** `Saliency Score`
    * Llama-3-8B scale: 0.0 to 1.5, with major ticks at 0.0, 0.5, 1.0, 1.5.
    * Llama-3-70B scale: 0.0 to 0.2, with major ticks at 0.0, 0.1, 0.2. **Note the significant difference in scale between the two models.**
  * **Y-axis (both plots):** `Density`
    * Llama-3-8B scale: 0.00 to 0.75, with major ticks at 0.00, 0.25, 0.50, 0.75.
    * Llama-3-70B scale: 0 to 4, with major ticks at 0, 2, 4.

* **Legend:** Located at the bottom center, spanning both plots. It defines four data series by color and label:
  1. **Light Blue:** `S_{E_Q -> E_A} (TriviaQA)` - saliency from the question embedding to the answer embedding on TriviaQA.
  2. **Light Orange:** `S_{E_Q -> *} (TriviaQA)` - saliency from the question embedding to all tokens (`*`) on TriviaQA.
  3. **Light Green:** `S_{E_Q -> E_A} (NQ)` - saliency from the question embedding to the answer embedding on Natural Questions (NQ).
  4. **Light Red/Pink:** `S_{E_Q -> *} (NQ)` - saliency from the question embedding to all tokens (`*`) on NQ.
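The legend does not say how the saliency scores are computed. As a purely hypothetical illustration of the difference between a targeted score (`S_{E_Q -> E_A}`) and a diffuse one (`S_{E_Q -> *}`), the sketch below assumes a token-by-token saliency matrix and treats `E_Q` and `E_A` as sets of token positions. The function `flow_score`, the matrix convention, and the toy values are all invented for this example and are not taken from the figure.

```python
from statistics import mean


def flow_score(saliency, sources, targets):
    """Mean saliency over (target, source) pairs.

    Hypothetical convention: saliency[t][s] is the importance of source position s
    for target position t. Pairs with s > t are skipped, as in a causal LM where a
    position can only draw on itself and earlier positions.
    """
    pairs = [saliency[t][s] for t in targets for s in sources if s <= t]
    return mean(pairs) if pairs else 0.0


# Toy 5-token example: positions 0-1 play the role of E_Q, position 4 of E_A.
sal = [
    [0.0, 0.0, 0.0, 0.0, 0.0],
    [0.2, 0.0, 0.0, 0.0, 0.0],
    [0.1, 0.3, 0.0, 0.0, 0.0],
    [0.1, 0.1, 0.2, 0.0, 0.0],
    [0.4, 0.6, 0.1, 0.1, 0.0],
]
q_positions, a_positions = [0, 1], [4]
s_q_to_a = flow_score(sal, q_positions, a_positions)        # targeted: answer rows only
s_q_to_all = flow_score(sal, q_positions, range(len(sal)))  # diffuse: every row
```

Averaging over every target row mixes the answer positions with many unrelated ones, which is one way a diffuse score can end up distributed differently from a targeted one.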

### Detailed Analysis

**Llama-3-8B (Left Plot):**

* **Trend Verification:** All four distributions are right-skewed, with the bulk of density concentrated at lower saliency scores (0.0 to 0.75) and long tails extending to higher values.

* **Data Series Analysis:**
  * `S_{E_Q -> E_A} (TriviaQA)` (Blue): Has the highest peak density (~0.8) at a very low score (~0.05), with a smaller secondary peak around 0.3.
  * `S_{E_Q -> E_A} (NQ)` (Green): Has the second-highest peak (~0.75), also near 0.05. Its distribution is slightly broader than the blue series, with a notable shoulder around 0.2.
  * `S_{E_Q -> *}` (TriviaQA & NQ) (Orange & Red): These distributions are much flatter and broader. Their peaks are lower (~0.4 for Orange, ~0.35 for Red) and occur at higher saliency scores (around 0.5-0.6). They have much longer and heavier tails extending past 1.0.
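The "right-skewed, long tail" reading can be checked numerically rather than by eye. A minimal sketch, assuming the raw per-example scores are available (the figure shows only smoothed curves): the Fisher-Pearson skewness coefficient is positive for a longer right tail, and `tail_fraction` (a hypothetical helper) measures how much mass lies beyond a cutoff such as 1.0. The scores are synthetic.

```python
def sample_skewness(xs):
    """Fisher-Pearson coefficient g1 = m3 / m2 ** 1.5 (positive = longer right tail)."""
    n = len(xs)
    mu = sum(xs) / n
    m2 = sum((x - mu) ** 2 for x in xs) / n  # second central moment
    m3 = sum((x - mu) ** 3 for x in xs) / n  # third central moment
    return m3 / m2 ** 1.5


def tail_fraction(xs, threshold):
    """Share of scores strictly above `threshold` (e.g. 1.0 for the 8B panel)."""
    return sum(x > threshold for x in xs) / len(xs)


# Synthetic scores (not from the figure): most mass is low, with a few large values.
scores = [0.05, 0.1, 0.1, 0.2, 0.3, 0.6, 1.2]
g1 = sample_skewness(scores)
beyond_one = tail_fraction(scores, 1.0)
```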

**Llama-3-70B (Right Plot):**

* **Trend Verification:** Compared with the 8B model, the distributions are more sharply peaked, confined to a much narrower range (0.0 to 0.2), and less skewed.

* **Data Series Analysis:**
  * `S_{E_Q -> E_A} (TriviaQA)` (Blue): Exhibits the highest and sharpest peak (density >4) at a very low score (~0.02).
  * `S_{E_Q -> E_A} (NQ)` (Green): Has the second-highest peak (~3.8) at a similarly low score (~0.03). It shows a distinct secondary peak around 0.08.
  * `S_{E_Q -> *}` (TriviaQA & NQ) (Orange & Red): These are again broader and flatter than the `E_A` series. Their peaks are lower (density ~2.5-3) and occur at slightly higher scores (around 0.05-0.07). Their tails are shorter, mostly contained below 0.15.
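Because the 8B and 70B panels use x-axes that differ by a factor of 7.5, a narrower absolute range does not by itself show that one model's scores are more consistent. One scale-free option, sketched here as an assumption rather than as the analysis behind the figure, is to compare dispersion relative to location: the coefficient of variation (sd / mean) and the quartile coefficient of dispersion ((Q3 - Q1) / (Q3 + Q1)) are both unchanged when every score is multiplied by the same positive constant. The data are synthetic.

```python
import statistics


def coefficient_of_variation(xs):
    """Sample standard deviation divided by the mean (mean must be nonzero)."""
    return statistics.stdev(xs) / statistics.mean(xs)


def quartile_dispersion(xs):
    """(Q3 - Q1) / (Q3 + Q1): a robust, scale-free spread for positive scores."""
    q1, _, q3 = statistics.quantiles(xs, n=4)
    return (q3 - q1) / (q3 + q1)


# Synthetic check: shrinking every score by the same factor leaves both measures
# unchanged, even though the absolute standard deviation shrinks by that factor.
wide = [0.05, 0.1, 0.1, 0.2, 0.3, 0.6, 1.2]
narrow = [x / 7.5 for x in wide]
```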

### Key Observations

1. **Model Size Effect:** The 70B model's saliency scores are roughly an order of magnitude smaller (x-axis max 0.2 vs. 1.5, a factor of 7.5) and more densely concentrated (y-axis max 4 vs. 0.75) than the 8B model's. This suggests the larger model's attributions are more focused and consistent.

2. **Attribution Target Effect:** For both models and both datasets, the saliency from Question to Answer (`S_{E_Q -> E_A}`) distributions are sharper and peak at lower scores than the saliency from Question to all tokens (`S_{E_Q -> *}`). This indicates that attribution to the specific answer is more concentrated than diffuse attribution to the entire output.

3. **Dataset Effect:** The difference between datasets (TriviaQA vs. NQ) is less pronounced than the difference between models or attribution targets. For the `S_{E_Q -> E_A}` metric, the NQ distribution (Green) has a distinct secondary hump in the 70B plot (~0.08) and a shoulder in the 8B plot (~0.2), although the 8B TriviaQA series (Blue) also shows a smaller hump near 0.3.
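The higher density values in the 70B panel are partly a mechanical consequence of its narrower x-range. Every density curve integrates to 1, so if `Y = c * X` with `c > 0`, then `f_Y(y) = f_X(y / c) / c`: compressing the scores by a factor `c < 1` multiplies every density value, including the peak, by `1 / c`. The sketch below checks this with the standard library's `statistics.NormalDist`; the distribution parameters and the helper `scaled_density` are arbitrary choices for illustration.

```python
from statistics import NormalDist


def scaled_density(pdf, c):
    """Density of Y = c * X, given the density `pdf` of X and a constant c > 0."""
    return lambda y: pdf(y / c) / c


wide = NormalDist(mu=0.4, sigma=0.3)  # arbitrary "wide" score distribution
c = 0.1                               # compress every score by a factor of 10
narrow_pdf = scaled_density(wide.pdf, c)

# The peak (at the mean) becomes 1/c = 10 times taller after compression.
ratio = narrow_pdf(wide.mean * c) / wide.pdf(wide.mean)
```

Peak heights across the two panels are therefore only comparable after the difference in x-scale has been taken into account.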

### Interpretation

This visualization provides a technical comparison of model interpretability metrics. The data suggests that **larger models (70B) develop more precise and consistent internal attribution pathways** (lower, narrower saliency score distributions) compared to smaller models (8B), which have more variable and diffuse attributions.

Furthermore, the analysis distinguishes between **targeted attribution** (to the answer) and **diffuse attribution** (to all tokens). The consistently sharper peaks for `S_{E_Q -> E_A}` suggest that when saliency is measured specifically on the answer-generating process, the signal is cleaner and more localized. The broader `S_{E_Q -> *}` distributions reflect the noisier, more distributed nature of attention across an entire generated sequence.

The secondary humps, most distinct in the 70B NQ `S_{E_Q -> E_A}` distribution, may indicate a sub-population of questions or answer types for which the model's saliency pattern differs systematically. This could be an avenue for further investigation into dataset properties or model behavior. The stark contrast in scale between the 8B and 70B models is the most striking finding and may reflect a shift in how larger models process and attribute information.
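A secondary hump corresponds to a second local maximum in the density curve. The sketch below (hypothetical helper `count_local_maxima`, arbitrary parameters) shows that a mixture of a large and a small sub-population produces such a second peak, while a single population does not.

```python
from statistics import NormalDist


def count_local_maxima(curve):
    """Number of interior grid points strictly higher than both neighbours."""
    return sum(
        1 for i in range(1, len(curve) - 1) if curve[i - 1] < curve[i] > curve[i + 1]
    )


main = NormalDist(mu=0.03, sigma=0.01)   # hypothetical majority of questions
minor = NormalDist(mu=0.08, sigma=0.01)  # hypothetical sub-population

grid = [i / 1000 for i in range(151)]    # 0.000 .. 0.150
single = [main.pdf(x) for x in grid]
mixture = [0.8 * main.pdf(x) + 0.2 * minor.pdf(x) for x in grid]
```

On a KDE estimated from real scores, the number of local maxima also depends on the bandwidth, so a hump is worth checking at several bandwidths before it is read as a distinct sub-population.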