
## Bar Chart: Refusal Rate of Language Models

### Overview

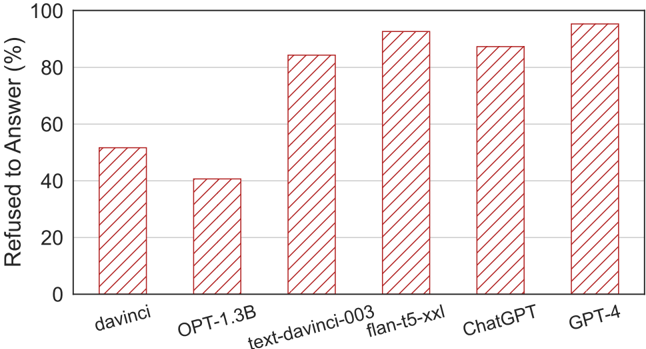

This bar chart shows how often each of six language models refused to answer, expressed as a percentage. The x-axis lists the models, and the y-axis gives the refusal rate. Each bar corresponds to one model.

### Components/Axes

* **X-axis:** Language Model (davinci, OPT-1.3B, text-davinci-003, flan-t5-xxl, ChatGPT, GPT-4)

* **Y-axis:** Refused to Answer (%) - Scale ranges from 0 to 100, with increments of 20.

* **Bars:** Represent the refusal rate for each language model. All bars have a red and white striped pattern.

### Detailed Analysis

Refusal rates generally rise from left to right, but not monotonically: OPT-1.3B sits below davinci, and ChatGPT sits below flan-t5-xxl.

* **davinci:** The refusal rate is approximately 52%.

* **OPT-1.3B:** The refusal rate is approximately 40%.

* **text-davinci-003:** The refusal rate is approximately 84%.

* **flan-t5-xxl:** The refusal rate is approximately 92%.

* **ChatGPT:** The refusal rate is approximately 88%.

* **GPT-4:** The refusal rate is approximately 98%.

The bars are positioned sequentially along the x-axis, with equal spacing between them. The height of each bar corresponds to the refusal rate indicated on the y-axis.

### Key Observations

* GPT-4 exhibits the highest refusal rate, nearly reaching 100%.

* davinci (about 52%) and OPT-1.3B (about 40%) have much lower refusal rates than the other four models, all of which exceed 80%.

* The largest jump between adjacent bars is from OPT-1.3B to text-davinci-003, roughly 44 percentage points.

* The refusal rates for text-davinci-003, flan-t5-xxl, and ChatGPT lie within about 8 percentage points of each other (84% to 92%).
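
The observations above can be checked with a few lines of arithmetic. The sketch below is only an illustration: the values are the approximate readings from the chart, not exact data, and the variable names are chosen here for convenience.

```python
# Approximate refusal rates read off the chart (percent), in x-axis order.
refusal_rates = {
    "davinci": 52,
    "OPT-1.3B": 40,
    "text-davinci-003": 84,
    "flan-t5-xxl": 92,
    "ChatGPT": 88,
    "GPT-4": 98,
}

models = list(refusal_rates)
rates = list(refusal_rates.values())

# Model with the highest refusal rate.
highest = max(refusal_rates, key=refusal_rates.get)

# Change in percentage points between each pair of adjacent bars.
steps = {
    f"{a} -> {b}": refusal_rates[b] - refusal_rates[a]
    for a, b in zip(models, models[1:])
}
largest_step = max(steps, key=steps.get)

# Spread among the three models with similar rates.
middle = [refusal_rates[m] for m in ("text-davinci-003", "flan-t5-xxl", "ChatGPT")]
middle_spread = max(middle) - min(middle)

# Whether the bars rise strictly from left to right.
strictly_increasing = all(b > a for a, b in zip(rates, rates[1:]))

print(highest)                            # GPT-4
print(largest_step, steps[largest_step])  # OPT-1.3B -> text-davinci-003 44
print(middle_spread)                      # 8
print(strictly_increasing)                # False
```

Because the inputs are visual estimates, the differences are accurate only to within a few percentage points.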

### Interpretation

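The data suggests that text-davinci-003, flan-t5-xxl, ChatGPT, and GPT-4 are far more likely to refuse to answer than davinci and OPT-1.3B. The first four are instruction-tuned or chat-tuned models, whereas davinci and OPT-1.3B are base models without such tuning, so the split may track alignment tuning more than size or release date. The chart alone does not establish a cause, but several factors could contribute: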

* **Increased Safety Measures:** Newer models may have more robust safety mechanisms in place to prevent them from generating harmful or inappropriate responses.

* **Improved Understanding of Context:** More advanced models may be better at identifying potentially problematic questions and refusing to answer them.

* **Alignment with Ethical Guidelines:** Newer models may be more closely aligned with ethical guidelines and principles, leading them to refuse to answer questions that violate those principles.

The generally higher refusal rates of the more capable models may reflect a trade-off between capability and safety: more powerful and versatile models also call for more careful control to ensure they are used responsibly. The chart does not say what the questions were, so it cannot show on its own whether a high refusal rate is desirable. GPT-4's rate of roughly 98% is consistent with OpenAI having prioritized safety and ethical considerations in its development.

The gap between davinci and OPT-1.3B on one side and the remaining four models on the other could stem from differences in training data and in the safety protocols applied during development. In particular, the jump from OPT-1.3B to text-davinci-003 suggests a substantial change in the safety mechanisms or training data used for the latter model.