
## Diagram: Agent Self-Evolution Process

### Overview

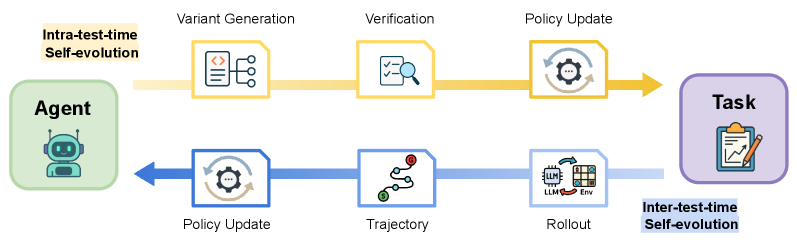

The diagram illustrates an agent's self-evolution process, divided into two loops: intra-test-time self-evolution (yellow) and inter-test-time self-evolution (blue). It shows the flow of information and actions between the agent and a task through five intermediate steps: variant generation, verification, policy update, trajectory, and rollout.

### Components

The diagram consists of the following components:

* **Agent:** Represented by a robot icon, positioned on the left side.

* **Task:** Represented by a clipboard with a graph, positioned on the right side.

* **Intra-test-time Self-evolution:** A yellow loop connecting the Agent to the Task, with steps: Variant Generation, Verification, and Policy Update.

* **Inter-test-time Self-evolution:** A blue loop connecting the Task back to the Agent, with steps: Policy Update, Trajectory, and Rollout.

* **Variant Generation:** Icon of code snippets.

* **Verification:** Icon of a magnifying glass.

* **Policy Update:** Icon of gears.

* **Trajectory:** Icon of a winding path with markers.

* **Rollout:** Icon of a stack of blocks with "LLM" and "Env" labels.

### Detailed Analysis

The diagram illustrates a cyclical process.

1. **Intra-test-time Self-evolution (Yellow Loop):**

   * The Agent initiates the process by generating variants.

   * These variants are then verified.

   * Based on the verification results, the policy is updated.

   * The updated policy is then applied to the Task.

2. **Inter-test-time Self-evolution (Blue Loop):**

   * The Task provides feedback, leading to a policy update.

   * A trajectory is generated based on the updated policy.

   * The trajectory is rolled out using a Large Language Model (LLM) and an Environment (Env).

   * The rollout results are fed back to the Agent, completing the loop.

The "LLM" and "Env" are contained within the Rollout icon. The trajectory icon shows a winding path with circular markers. The policy update icons (both yellow and blue) are identical.
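The yellow loop's steps (variant generation, verification, policy update, application to the task) can be illustrated with a small Python sketch. This is a toy under stated assumptions, not the method behind the figure: the `Task` class, its `target` and `score`, and all function names are hypothetical, the policy is reduced to a single number, and the "policy update" is simply a greedy pick of the best-scoring variant.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical task: the agent should output a value close to `target`."""
    target: float

    def score(self, answer: float) -> float:
        # Higher is better; 0.0 means the answer matches the target exactly.
        return -abs(answer - self.target)


def generate_variants(policy: float, step: float) -> list[float]:
    # Variant generation: propose small perturbations of the current policy.
    return [policy - step, policy, policy + step]


def verify(task: Task, variants: list[float]) -> list[tuple[float, float]]:
    # Verification: attach a score to every candidate variant.
    return [(variant, task.score(variant)) for variant in variants]


def update_policy(verified: list[tuple[float, float]]) -> float:
    # Policy update (assumed greedy): keep the best-scoring variant.
    return max(verified, key=lambda pair: pair[1])[0]


def intra_test_time_evolve(
    task: Task, policy: float, step: float = 1.0, iterations: int = 10
) -> float:
    # Repeat the yellow loop a fixed number of times within one test.
    for _ in range(iterations):
        variants = generate_variants(policy, step)
        verified = verify(task, variants)
        policy = update_policy(verified)
    return policy  # the updated policy is what gets applied to the task
```

With these toy definitions, `intra_test_time_evolve(Task(target=5.0), policy=0.0)` moves the policy from 0.0 to 5.0 in five unit steps and then stays there for the remaining iterations.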

### Key Observations

The diagram depicts a continuous self-improvement cycle for the agent. The split into intra- and inter-test-time evolution suggests adaptation at two timescales: within a single test and across tests. The "LLM" and "Env" labels inside the Rollout icon indicate that both components take part in the rollout step of the agent's learning process.

### Interpretation

The diagram appears to represent an iterative optimization process, possibly reinforcement learning, in which an agent improves on a task through repeated cycles of action, evaluation, and adaptation. The intra-test-time loop plausibly corresponds to fast, local adjustments made while a single task is being executed; the inter-test-time loop corresponds to slower, more global policy refinement between tests, drawing on accumulated experience. The "LLM" and "Env" labels in the Rollout icon suggest that rollouts involve a language model interacting with an environment, though the diagram does not indicate whether that environment is simulated or real. Taken together, the two loops suggest a hierarchical, two-timescale learning structure.
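The slower, between-test loop can be sketched in the same spirit. The Python below is one plausible reading, not the figure's actual algorithm: `rollout` is a hypothetical stand-in for the LLM and Env interaction, the policy is a single number carried from one test to the next, and the update rule (move the policy toward the mean of the final trajectory states) is an assumption made purely for illustration.

```python
from statistics import mean


def rollout(policy: float, target: float, steps: int = 3) -> list[float]:
    """Hypothetical stand-in for an LLM acting in an Env.

    Starting from the policy's initial guess, each step moves halfway
    toward the task's target; the visited states form the trajectory.
    """
    trajectory = [policy]
    for _ in range(steps):
        current = trajectory[-1]
        trajectory.append(current + 0.5 * (target - current))
    return trajectory


def update_policy_from_trajectories(
    policy: float, trajectories: list[list[float]], lr: float = 0.5
) -> float:
    # Assumed update rule: nudge the policy toward the average final state
    # reached across all trajectories collected so far.
    final_states = [trajectory[-1] for trajectory in trajectories]
    return policy + lr * (mean(final_states) - policy)


def inter_test_time_evolve(
    policy: float, targets: list[float], lr: float = 0.5
) -> tuple[float, list[list[float]]]:
    # One pass of the blue loop per test: roll out, record the trajectory,
    # then update the policy that the next test will start from.
    trajectories: list[list[float]] = []
    for target in targets:
        trajectories.append(rollout(policy, target))
        policy = update_policy_from_trajectories(policy, trajectories, lr)
    return policy, trajectories
```

With `policy=0.0` and `targets=[8.0, 8.0]`, the first trajectory is `[0.0, 4.0, 6.0, 7.0]`, and the second test starts from the updated policy 3.5 rather than from 0.0, which is the sense in which experience carries over between tests in this sketch.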