## Diagram Type: Query-Response Logic Flow

### Overview

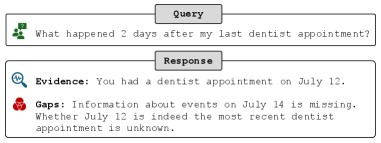

This image displays a user interface (UI) component representing a structured interaction between a user and an information retrieval system (likely an AI assistant). It illustrates a "reasoning" process where a natural language query is analyzed, and the response is broken down into verified evidence and identified missing information.

### Components/Axes

The image is divided into two vertically stacked blocks: the input on top and the output below.

* **Header Labels:** Both main blocks feature a centered, grey rectangular label at the top of their respective borders.

    * **Top Label:** "Query"

    * **Bottom Label:** "Response"

* **Icons:**

    * **Query Icon (Top-Left):** A green icon depicting a person's silhouette with a speech bubble containing a question mark.

    * **Evidence Icon (Middle-Left):** A blue circular icon containing a magnifying glass with a pulse/waveform line inside.

    * **Gaps Icon (Bottom-Left):** A red icon consisting of three interlocking circles, similar to a Venn diagram.

* **Text Blocks:**

    * **Query Text:** Located in the top box.

    * **Evidence Text:** Located in the upper half of the bottom box.

    * **Gaps Text:** Located in the lower half of the bottom box.

### Content Details

The text within the diagram is transcribed exactly as follows:

* **Query Section:**

    * "What happened 2 days after my last dentist appointment?"

* **Response Section:**

    * **Evidence:** "You had a dentist appointment on July 12."

    * **Gaps:** "Information about events on July 14 is missing. Whether July 12 is indeed the most recent dentist appointment is unknown."

### Key Observations

* **Temporal Reasoning:** The system demonstrates the ability to perform date arithmetic. It identifies a "dentist appointment" on July 12 and correctly calculates that "2 days after" corresponds to July 14.

* **Uncertainty Handling:** The system explicitly identifies two types of missing information:

    1. **Data Void:** No records exist for the calculated target date (July 14).

    2. **Contextual Ambiguity:** The system cannot verify if the July 12 appointment is the *absolute* last one in the user's history, acknowledging a potential limitation in its data access or the user's records.

* **Color Coding:** The use of blue for "Evidence" suggests a neutral or positive confirmation of facts, while red for "Gaps" serves as a warning or indicator of missing critical data.

### Interpretation

This diagram demonstrates a "Transparent Reasoning" or "Grounded" AI response model. Instead of providing a simple "I don't know" or potentially hallucinating an answer, the system exposes its internal logic to the user.

1. **Fact Retrieval:** It successfully finds a relevant anchor point (July 12).

2. **Logic Application:** It applies the user's requested offset (+2 days).

3. **Validation:** It checks its database for the resulting date (July 14) and finds nothing.

4. **Critical Thinking:** It questions its own premise: is July 12 actually the "last" appointment?
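The four steps above can be sketched in a few lines of Python. This is only an illustration of the logic the diagram implies, not the system's actual implementation: the `Response` class, the `answer` function, the `records` dictionary, and the `history_complete` flag are all hypothetical names, and the year 2024 is an assumption, since the image gives only a month and day.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Response:
    """Hypothetical container mirroring the diagram's Evidence/Gaps split."""
    evidence: list[str] = field(default_factory=list)
    gaps: list[str] = field(default_factory=list)


def fmt(d: date) -> str:
    """Format a date as in the diagram, e.g. 'July 12'."""
    return f"{d:%B} {d.day}"


def answer(records: dict[date, list[str]], keyword: str, offset_days: int,
           history_complete: bool = False) -> Response:
    response = Response()

    # 1. Fact retrieval: find the latest known date with a matching event.
    matches = [d for d, events in records.items()
               if any(keyword in e for e in events)]
    if not matches:
        response.gaps.append(f"No {keyword} is on record.")
        return response
    anchor = max(matches)
    response.evidence.append(f"You had a {keyword} on {fmt(anchor)}.")

    # 2. Logic application: apply the requested offset to the anchor date.
    target = anchor + timedelta(days=offset_days)

    # 3. Validation: look up the target date in the available records.
    events = records.get(target, [])
    if events:
        response.evidence.extend(f"On {fmt(target)}: {e}" for e in events)
    else:
        response.gaps.append(
            f"Information about events on {fmt(target)} is missing.")

    # 4. Premise check: the anchor is only the latest *known* match.
    if not history_complete:
        response.gaps.append(
            f"Whether {fmt(anchor)} is indeed the most recent {keyword} is unknown.")
    return response


# Assumed example data: a single record, with an assumed year of 2024.
records = {date(2024, 7, 12): ["dentist appointment"]}
result = answer(records, "dentist appointment", offset_days=2)
print(result.evidence)
print(result.gaps)
```

With this single assumed record, the sketch produces one evidence sentence and two gap sentences matching the text in the diagram. Keeping `evidence` and `gaps` as separate lists is what lets the UI render them in distinct, color-coded sections.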

This approach is characteristic of systems designed for high-stakes personal data management (such as health or scheduling), where accuracy and the ability to audit the system's "thought process" matter more than a concise but potentially misleading answer. Loosely, the layout can be read in Peircean terms: the raw query as "Firstness", the retrieved data and its gaps as "Secondness", and the structured explanation that mediates between the two as "Thirdness".