## Grouped Bar Chart: Accuracy Comparison of Qwen2.5 Models with Different Methods

### Overview

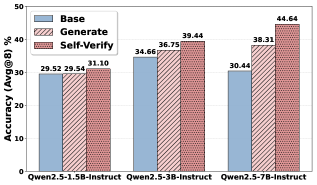

The image is a grouped bar chart comparing the performance (accuracy) of three different Qwen2.5 language model variants on the ArguBench (ArguB) dataset. For each model variant, three methods are evaluated: Base, Generate, and Self-Verify. The chart demonstrates the impact of these methods on model accuracy.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **Y-Axis:**

  * **Label:** `Accuracy (ArguB) %`

  * **Scale:** Linear scale from 0 to 50, with major tick marks at intervals of 10 (0, 10, 20, 30, 40, 50).

* **X-Axis:**

  * **Label:** Model variants.

  * **Categories (Groups):** Three distinct model variants are listed:

    1. `Qwen2.5-1.5B-Instruct`

    2. `Qwen2.5-3B-Instruct`

    3. `Qwen2.5-7B-Instruct`

* **Legend:**

  * **Position:** Top-left corner of the chart area.

  * **Content:** Defines the three bar patterns/colors corresponding to the methods:

    * `Base`: Solid light blue bar.

    * `Generate`: Light blue bar with diagonal hatching (lines sloping down from left to right).

    * `Self-Verify`: Reddish-brown bar with a dense cross-hatch pattern.

* **Data Labels:** Each bar has its exact accuracy value printed directly above it.

### Detailed Analysis

The chart presents accuracy percentages for each method across the three model sizes. The data is as follows:

**1. Qwen2.5-1.5B-Instruct (Leftmost Group):**

* **Trend:** Accuracy is essentially flat from Base to Generate, then rises with Self-Verify.

* **Data Points:**

  * Base: 29.52%

  * Generate: 29.54% (a negligible increase of 0.02 percentage points over Base)

  * Self-Verify: 31.10% (an increase of 1.56 percentage points over Generate)

**2. Qwen2.5-3B-Instruct (Center Group):**

* **Trend:** A clear, steady upward trend from Base to Generate to Self-Verify.

* **Data Points:**

  * Base: 34.66%

  * Generate: 36.73% (an increase of 2.07 percentage points over Base)

  * Self-Verify: 39.44% (an increase of 2.71 percentage points over Generate)

**3. Qwen2.5-7B-Instruct (Rightmost Group):**

* **Trend:** A very strong upward trend, with the largest gains of any model group.

* **Data Points:**

  * Base: 30.44%

  * Generate: 38.33% (a substantial increase of 7.89 percentage points over Base)

  * Self-Verify: 44.64% (a further large increase of 6.31 percentage points over Generate)
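The differences quoted above can be recomputed directly from the data labels. The following is a minimal sketch, assuming the values were read correctly from the chart; the names `ACCURACY`, `step_gains`, and `GAINS` are illustrative and do not come from the source.

```python
# Accuracy values (%) as printed above each bar in the chart.
ACCURACY = {
    "Qwen2.5-1.5B-Instruct": {"Base": 29.52, "Generate": 29.54, "Self-Verify": 31.10},
    "Qwen2.5-3B-Instruct": {"Base": 34.66, "Generate": 36.73, "Self-Verify": 39.44},
    "Qwen2.5-7B-Instruct": {"Base": 30.44, "Generate": 38.33, "Self-Verify": 44.64},
}


def step_gains(scores):
    """Return gains between methods, in percentage points, rounded to 2 decimals."""
    return {
        "Generate vs Base": round(scores["Generate"] - scores["Base"], 2),
        "Self-Verify vs Generate": round(scores["Self-Verify"] - scores["Generate"], 2),
        "Self-Verify vs Base": round(scores["Self-Verify"] - scores["Base"], 2),
    }


# Hypothetical helper table: one row of gains per model variant.
GAINS = {model: step_gains(scores) for model, scores in ACCURACY.items()}

for model, gains in GAINS.items():
    print(model, gains)
```

Rounding to two decimals avoids floating-point noise in the subtraction, since the chart itself reports two decimal places.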

### Key Observations

1. **Consistent Improvement with Advanced Methods:** For all three model sizes, the `Self-Verify` method yields the highest accuracy, followed by `Generate`, with `Base` performing the worst. For the 1.5B model, however, `Generate` leads `Base` by only 0.02 percentage points, so the two are effectively tied.

2. **Scaling Effect:** The performance gain from applying the `Generate` and `Self-Verify` methods is not uniform across model sizes. The largest model (7B) shows the most dramatic improvement, especially between its `Base` (30.44%) and `Self-Verify` (44.64%) scores, a total gain of 14.20 percentage points.

3. **Anomaly in Base Performance:** The `Base` accuracy does not increase monotonically with model size. The 3B model (34.66%) outperforms both the smaller 1.5B (29.52%) and the larger 7B (30.44%) models when using only the `Base` method. This suggests factors beyond pure parameter count influence baseline performance on this task.

4. **Method Efficacy:** The gain from `Self-Verify` over `Generate` grows with model size: +1.56 percentage points for 1.5B, +2.71 for 3B, and +6.31 for 7B. This could indicate that the self-verification capability benefits more from the model's increased capacity.
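The four observations above can be checked mechanically against the data labels. This is a minimal sketch under the assumption that the values were read correctly from the chart; all names (`ACCURACY`, `METHODS`, `is_strictly_increasing`, and the result variables) are hypothetical.

```python
# Accuracy values (%) as printed above each bar, keyed by model (smallest first).
ACCURACY = {
    "Qwen2.5-1.5B-Instruct": {"Base": 29.52, "Generate": 29.54, "Self-Verify": 31.10},
    "Qwen2.5-3B-Instruct": {"Base": 34.66, "Generate": 36.73, "Self-Verify": 39.44},
    "Qwen2.5-7B-Instruct": {"Base": 30.44, "Generate": 38.33, "Self-Verify": 44.64},
}
METHODS = ["Base", "Generate", "Self-Verify"]
MODELS = list(ACCURACY)  # dicts preserve insertion order, so this is sorted by size


def is_strictly_increasing(values):
    """True if every value is larger than the one before it."""
    return all(a < b for a, b in zip(values, values[1:]))


# Observation 1: Base < Generate < Self-Verify within every model group.
method_order_holds = all(
    is_strictly_increasing([ACCURACY[m][k] for k in METHODS]) for m in MODELS
)

# Observation 3: Base accuracy is not monotonic in model size; 3B is the best.
base_is_monotonic = is_strictly_increasing([ACCURACY[m]["Base"] for m in MODELS])
best_base_model = max(MODELS, key=lambda m: ACCURACY[m]["Base"])

# Observations 2 and 4: the Self-Verify gain over Generate grows with size.
verify_gain = [
    round(ACCURACY[m]["Self-Verify"] - ACCURACY[m]["Generate"], 2) for m in MODELS
]
verify_gain_grows = is_strictly_increasing(verify_gain)

print(method_order_holds, base_is_monotonic, best_base_model, verify_gain)
```

A strict comparison is used deliberately: it still passes for the 1.5B model, but only by the 0.02-point margin between `Base` and `Generate`.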

### Interpretation

The data suggests that for the ArguBench benchmark, simply scaling up the base model size does not guarantee better performance: `Base` accuracy rises from 29.52% (1.5B) to 34.66% (3B), then drops to 30.44% (7B), leaving the 7B model less than one percentage point ahead of the 1.5B model. However, when combined with more sophisticated inference methods like `Generate` and particularly `Self-Verify`, larger models can leverage their increased capacity to achieve substantially higher accuracy.

The `Self-Verify` method appears to be an effective technique for improving reasoning or argumentative performance on this benchmark. Its advantage grows with model scale, implying it may be a key method for unlocking the potential of larger language models. The near-identical `Base` and `Generate` scores for the 1.5B model suggest that the `Generate` method alone provides little benefit at that model size, although `Self-Verify` still adds 1.56 percentage points.

In summary, the chart suggests that methodological improvements (like `Self-Verify`) can be as important as, or even more important than, raw model scale for achieving high performance on specific tasks. The most effective strategy appears to be combining larger models with advanced verification techniques.
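One rough way to compare the effect of scale with the effect of method is a two-by-two comparison between the smallest and largest models under `Base` and `Self-Verify`. The sketch below is an illustrative decomposition, not an analysis taken from the chart; the variable names and the "interaction" term (the part of the combined gain not explained by adding the two individual gains) are assumptions introduced here.

```python
# Corner values (%) from the chart: smallest and largest model, Base and Self-Verify.
small_base = 29.52    # Qwen2.5-1.5B-Instruct, Base
small_verify = 31.10  # Qwen2.5-1.5B-Instruct, Self-Verify
large_base = 30.44    # Qwen2.5-7B-Instruct, Base
large_verify = 44.64  # Qwen2.5-7B-Instruct, Self-Verify

# Gain from scale alone (1.5B -> 7B, both with Base).
scale_gain = large_base - small_base
# Gain from method alone (Base -> Self-Verify, both at 1.5B).
method_gain = small_verify - small_base
# Gain from changing both at once.
combined_gain = large_verify - small_base
# Whatever the two individual gains do not account for when simply added.
interaction = combined_gain - scale_gain - method_gain

summary = {
    "scale_gain": round(scale_gain, 2),
    "method_gain": round(method_gain, 2),
    "combined_gain": round(combined_gain, 2),
    "interaction": round(interaction, 2),
}
print(summary)
```

Under these numbers, scale alone and method alone each contribute under two percentage points, while the combination contributes over fifteen, which is consistent with the reading that the two work best together.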