
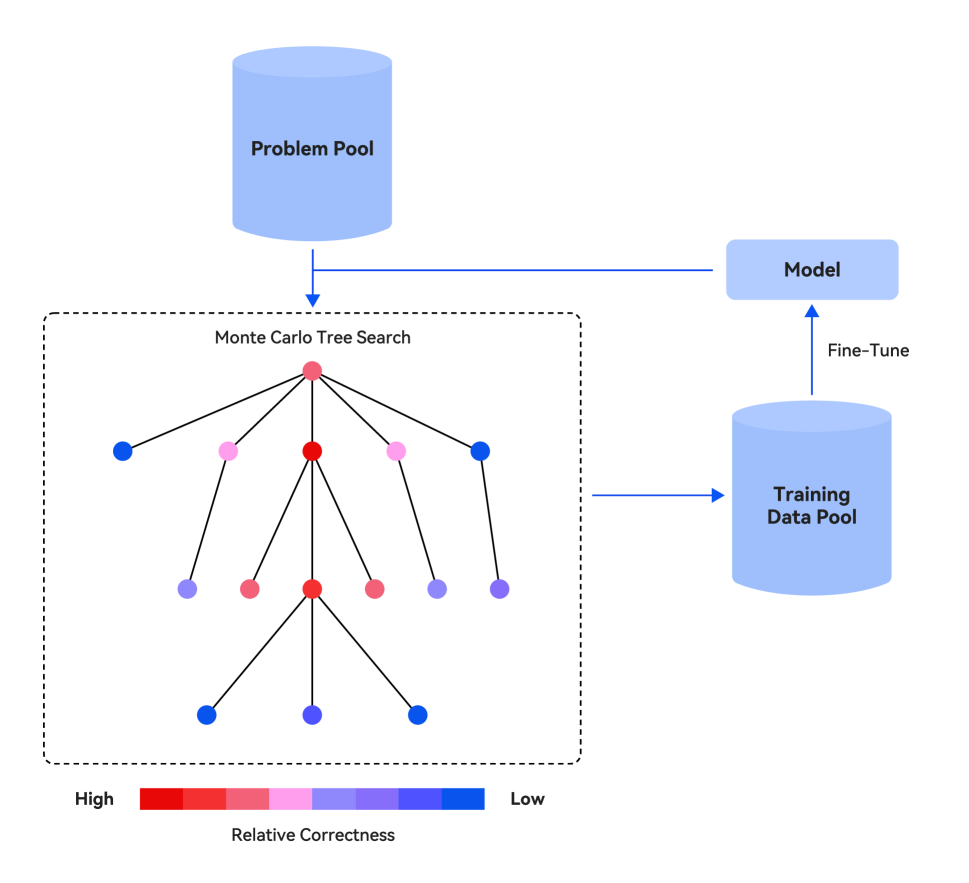

## Diagram: Iterative Model Training via Monte Carlo Tree Search

### Overview

The image is a technical flowchart illustrating a cyclical process for training or fine-tuning a machine learning model. The core of the process uses a Monte Carlo Tree Search (MCTS) algorithm to explore a problem space, evaluate the correctness of different solution paths, and generate training data to improve the model. The diagram depicts a closed-loop system where the model's outputs feed back into the process.

### Components

The diagram consists of four primary components connected by directional arrows indicating data flow:

1. **Problem Pool** (Top-Center): A blue cylinder icon representing a repository or source of problems.

2. **Monte Carlo Tree Search (MCTS)** (Left-Center): A dashed rectangular box containing a tree structure. The tree has a root node and multiple levels of child nodes connected by lines. The nodes are colored circles.

3. **Training Data Pool** (Right-Center): A blue cylinder icon representing a repository for collected training examples.

4. **Model** (Top-Right): A blue rounded rectangle representing the machine learning model being trained.

**Legend** (Bottom-Left):

* **Title**: "Relative Correctness"

* **Scale**: A horizontal color bar ranging from **High** (left) to **Low** (right).

* **Colors**: The scale shows a gradient from **Red** (High correctness) through **Pink** and **Purple** to **Blue** (Low correctness).

### Detailed Analysis

**Flow and Relationships:**

1. The process begins with the **Problem Pool**. An arrow points downward from it into the **Monte Carlo Tree Search** block.

2. Inside the MCTS block, a tree structure is shown. The **root node** is colored **red** (indicating High relative correctness). It branches into five first-level nodes. From left to right, their colors are: **blue**, **pink**, **red**, **pink**, **blue**.

3. The central **red** first-level node branches further into three second-level nodes: **purple**, **red**, **pink**.

4. The central **red** second-level node branches into three third-level (leaf) nodes: **blue**, **purple**, **blue**.

5. An arrow exits the right side of the MCTS dashed box and points to the **Training Data Pool**. This indicates that data produced by the search (explored paths and their correctness evaluations) is collected in the training pool.

6. An arrow labeled **"Fine-Tune"** points upward from the **Training Data Pool** to the **Model**. This shows the model is updated using the data.

7. An arrow points from the **Model** back to the **Problem Pool**, completing the cycle. This suggests the model may generate new problems or select problems from the pool for the next iteration.
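The flow above can be sketched as a short loop. This is a toy illustration only: `ToyModel`, `search`, `fine_tune`, and `run_cycle` are hypothetical names, the search is a random placeholder rather than a real MCTS, and "fine-tuning" is reduced to nudging a single number. The diagram does not specify any of these details.

```python
import random
from dataclasses import dataclass


@dataclass
class ToyModel:
    """Hypothetical stand-in for the model; `skill` is a toy proxy for its weights."""
    skill: float


def search(problem: str, model: ToyModel, rng: random.Random, n_paths: int = 4):
    """Placeholder for the MCTS block: return (path_id, relative_correctness) pairs."""
    return [(f"{problem}/path{i}", rng.random() * model.skill) for i in range(n_paths)]


def fine_tune(model: ToyModel, training_pool: list) -> ToyModel:
    """Placeholder for the Fine-Tune arrow: nudge skill by the pool's mean score."""
    mean_score = sum(score for _, score in training_pool) / len(training_pool)
    return ToyModel(skill=min(1.0, model.skill + 0.1 * mean_score))


def run_cycle(model, problem_pool, training_pool, rng):
    """One pass around the loop: Problem Pool -> MCTS -> Training Data Pool -> Model."""
    for problem in problem_pool:
        training_pool.extend(search(problem, model, rng))
    return fine_tune(model, training_pool)


rng = random.Random(0)
problem_pool = ["p1", "p2", "p3"]
training_pool = []
model = ToyModel(skill=0.5)
for _ in range(3):  # three iterations of the closed loop
    model = run_cycle(model, problem_pool, training_pool, rng)
```

Each call to `run_cycle` corresponds to one trip around the diagram; the updated model returned by `fine_tune` is the one used in the next iteration.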

**Node Color Distribution (Relative Correctness):**

* **High Correctness (Red)**: 1 root node, 1 first-level node, 1 second-level node. Total: 3 nodes.

* **Medium-High Correctness (Pink)**: 2 first-level nodes, 1 second-level node. Total: 3 nodes.

* **Medium-Low Correctness (Purple)**: 1 second-level node, 1 third-level node. Total: 2 nodes.

* **Low Correctness (Blue)**: 2 first-level nodes, 2 third-level nodes. Total: 4 nodes.
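As a cross-check, the tree can be written down as nested data and tallied programmatically. The sketch below encodes the tree exactly as enumerated in the flow steps (one root, five first-level nodes, three second-level nodes, three leaves); the `(color, children)` tuple layout and the `tally` helper are illustrative choices, not part of the diagram.

```python
from collections import Counter

# Each node is (color, children), following the left-to-right order in the flow steps.
TREE = ("red", [
    ("blue", []),
    ("pink", []),
    ("red", [
        ("purple", []),
        ("red", [("blue", []), ("purple", []), ("blue", [])]),
        ("pink", []),
    ]),
    ("pink", []),
    ("blue", []),
])


def tally(node, depth=0, counts=None):
    """Count nodes keyed by (color, depth) with a depth-first walk."""
    counts = Counter() if counts is None else counts
    color, children = node
    counts[(color, depth)] += 1
    for child in children:
        tally(child, depth + 1, counts)
    return counts


by_level = tally(TREE)

# Collapse the per-level counts into per-color totals.
by_color = Counter()
for (color, _depth), n in by_level.items():
    by_color[color] += n

print(dict(by_color))
```

A tally like this makes it easy to compare the per-color totals against the enumerated tree.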

### Key Observations

1. **Central Path Emphasis**: The most developed branch in the tree stems from the central **red** (high correctness) node at the first level, which itself leads to another **red** node. This visually suggests the search algorithm is focusing exploration on promising, high-correctness paths.

2. **Correctness Gradient**: All red nodes lie on the central path from the root, while the outermost first-level nodes and two of the three deepest leaf nodes are blue (lower correctness). Higher-correctness nodes are therefore concentrated along one expanded path rather than spread evenly across the tree, which is consistent with the typical MCTS goal of identifying and refining good solutions.

3. **Closed-Loop System**: The diagram explicitly shows a feedback loop: Model -> Problem Pool -> MCTS -> Training Data -> Fine-Tune -> Model. This represents an iterative, self-improving training paradigm.

4. **Data Generation Source**: The arrow from the MCTS block to the Training Data Pool implies that the *process of search and evaluation itself* generates valuable training data, not just the final solutions.
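The focus on promising branches noted above is what a standard MCTS selection rule produces. The sketch below uses the common UCT (UCB1 applied to trees) formula as an example; the diagram does not say which selection rule is actually used, and the dictionary fields `value` and `visits` are hypothetical.

```python
import math


def uct_score(total_value, visits, parent_visits, c=math.sqrt(2)):
    """UCT: mean value (exploitation) plus a bonus for rarely visited nodes (exploration)."""
    if visits == 0:
        return math.inf  # unvisited children are always tried first
    return total_value / visits + c * math.sqrt(math.log(parent_visits) / visits)


def select_child(children, parent_visits):
    """Pick the child with the highest UCT score."""
    return max(children, key=lambda ch: uct_score(ch["value"], ch["visits"], parent_visits))


children = [
    {"name": "left", "value": 1.0, "visits": 10},    # low mean value
    {"name": "center", "value": 9.0, "visits": 10},  # high mean value
    {"name": "right", "value": 0.0, "visits": 0},    # never visited
]

first_pick = select_child(children, parent_visits=20)
pick_among_visited = select_child(children[:2], parent_visits=20)
```

With these numbers the unvisited child is selected first; among children with equal visit counts, the one with the higher mean value wins, which is how search effort ends up concentrated on a high-correctness branch while weaker branches still receive some visits.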

### Interpretation

This diagram illustrates a sophisticated **self-play or iterative improvement framework** for AI models, likely in domains like game playing, theorem proving, or complex problem-solving.

* **What it demonstrates**: The system uses Monte Carlo Tree Search not just to solve a single problem, but as a **data engine**. By exploring a problem space (from the Problem Pool) and evaluating the relative correctness of different reasoning steps (visualized by node colors), it curates a dataset of high-quality reasoning traces (sent to the Training Data Pool). This dataset is then used to fine-tune the original Model, making it better at solving such problems in the next cycle.

* **Relationships**: The Model is both the **beneficiary** (it gets fine-tuned) and a **participant** (it likely guides the MCTS or selects problems). The Problem Pool is the **input source**, the MCTS is the **exploration and evaluation engine**, and the Training Data Pool is the **curated output** of that engine.

* **Notable Insight**: The color-coding reveals the **internal state of the search algorithm**. The concentration of red nodes along a central path suggests the algorithm has identified a high-quality solution trajectory. The presence of blue nodes shows it also explores less promising paths, which helps avoid local optima and can yield more diverse training data. The overall process can be read as loosely analogous to a **Peircean abductive reasoning cycle**: candidate paths are proposed as hypotheses, tested via search (MCTS), evaluated (correctness coloring), and the best-supported findings are used to update the model (fine-tuning).
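One way the "data engine" idea could work in practice is to walk the scored tree and turn the best path into a training example. The sketch below is an assumption-laden illustration: the `Node` class, the numeric scores, and the greedy "follow the highest-scoring child" rule are all hypothetical, and the diagram does not specify how paths are actually selected for the Training Data Pool.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    step: str
    score: float  # hypothetical relative-correctness value in [0, 1]
    children: list["Node"] = field(default_factory=list)


def best_trace(root: Node) -> list[Node]:
    """Greedily follow the highest-scoring child from the root down to a leaf."""
    trace = [root]
    node = root
    while node.children:
        node = max(node.children, key=lambda child: child.score)
        trace.append(node)
    return trace


def to_example(problem: str, trace: list[Node]) -> dict:
    """Package a trace as a training record (the record layout is illustrative)."""
    return {
        "problem": problem,
        "steps": [n.step for n in trace[1:]],   # skip the root placeholder
        "min_score": min(n.score for n in trace),
    }


root = Node("root", 1.0, [
    Node("a", 0.2),
    Node("b", 0.9, [Node("b1", 0.4), Node("b2", 0.8)]),
])

example = to_example("p1", best_trace(root))
```

Other curation rules are equally plausible, for instance keeping every root-to-leaf path above a score threshold, or keeping low-scoring paths as negative examples.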