## [Multi-Panel Bar Chart]: LLM Performance Comparison Across Datasets

### Overview

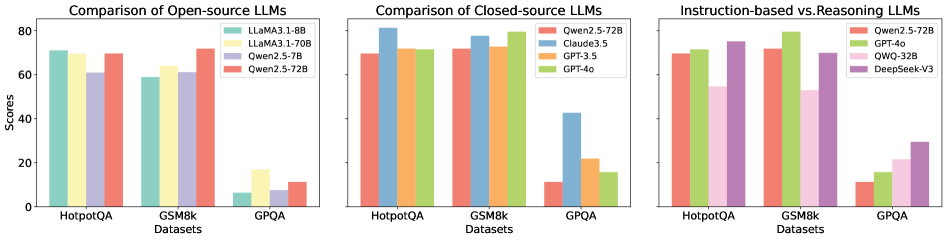

The image displays three horizontally arranged bar charts comparing the performance of various Large Language Models (LLMs) on three benchmark datasets: HotpotQA, GSM8k, and GPQA. The charts are segmented by model type: open-source, closed-source, and instruction-based vs. reasoning models. All charts share a common y-axis labeled "Scores" ranging from 0 to 80, and an x-axis labeled "Datasets".

### Components/Axes

* **Overall Layout:** Three distinct bar charts arranged side-by-side.

* **Common Y-Axis:** Labeled "Scores", with major tick marks at 0, 20, 40, 60, and 80.

* **Common X-Axis:** Labeled "Datasets", with three categorical tick marks: "HotpotQA", "GSM8k", and "GPQA".

* **Chart 1 (Left):** Title: "Comparison of Open-source LLMs". Legend (top-right corner): LLaMA3.1-8B (teal), LLaMA3.1-70B (light yellow), Qwen2.5-7B (light purple), Qwen2.5-72B (salmon).

* **Chart 2 (Center):** Title: "Comparison of Closed-source LLMs". Legend (top-right corner): Qwen2.5-72B (salmon), Claude3.5 (blue), GPT-3.5 (orange), GPT-4o (green).

* **Chart 3 (Right):** Title: "Instruction-based vs. Reasoning LLMs". Legend (top-right corner): Qwen2.5-72B (salmon), GPT-4o (green), QWQ-32B (pink), DeepSeek-V3 (purple).

### Detailed Analysis

**Chart 1: Comparison of Open-source LLMs**

* **HotpotQA:** LLaMA3.1-8B (~70), LLaMA3.1-70B (~68), Qwen2.5-7B (~60), Qwen2.5-72B (~70).

* **GSM8k:** LLaMA3.1-8B (~58), LLaMA3.1-70B (~62), Qwen2.5-7B (~60), Qwen2.5-72B (~72).

* **GPQA:** LLaMA3.1-8B (~5), LLaMA3.1-70B (~18), Qwen2.5-7B (~8), Qwen2.5-72B (~12).

* **Trend:** Scores cluster between roughly 58 and 72 on HotpotQA and GSM8k but fall below 20 for every model on GPQA. Qwen2.5-72B is the top performer on GSM8k (~72) and ties LLaMA3.1-8B on HotpotQA (~70); LLaMA3.1-70B leads on GPQA (~18).

**Chart 2: Comparison of Closed-source LLMs**

* **HotpotQA:** Qwen2.5-72B (~70), Claude3.5 (~80), GPT-3.5 (~72), GPT-4o (~72).

* **GSM8k:** Qwen2.5-72B (~72), Claude3.5 (~78), GPT-3.5 (~72), GPT-4o (~80).

* **GPQA:** Qwen2.5-72B (~12), Claude3.5 (~42), GPT-3.5 (~22), GPT-4o (~16).

* **Trend:** Claude3.5 leads on HotpotQA (~80) and GPT-4o leads on GSM8k (~80), with Claude3.5 close behind at ~78. Claude3.5 is a significant outlier on GPQA, achieving a score (~42) nearly double that of the next closest model in this chart (GPT-3.5 at ~22).

**Chart 3: Instruction-based vs. Reasoning LLMs**

* **HotpotQA:** Qwen2.5-72B (~70), GPT-4o (~72), QWQ-32B (~55), DeepSeek-V3 (~75).

* **GSM8k:** Qwen2.5-72B (~72), GPT-4o (~80), QWQ-32B (~52), DeepSeek-V3 (~72).

* **GPQA:** Qwen2.5-72B (~12), GPT-4o (~16), QWQ-32B (~22), DeepSeek-V3 (~30).

* **Trend:** GPT-4o leads on GSM8k. DeepSeek-V3 shows the strongest performance on HotpotQA and GPQA among this group. QWQ-32B scores lowest in the group on HotpotQA (~55) and GSM8k (~52) but ranks second on GPQA (~22), ahead of both Qwen2.5-72B and GPT-4o.
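The per-dataset leaders described in the trends above can be checked mechanically. The following is a minimal sketch, assuming the approximate bar heights listed in this section; the dictionary keys (`open_source`, `closed_source`, `instruction_vs_reasoning`) and the helper names are illustrative, not taken from the figure.

```python
# Approximate bar heights as read from the figure (estimates, not exact scores).
# The chart keys below are hypothetical labels chosen for this sketch.
CHARTS = {
    "open_source": {
        "HotpotQA": {"LLaMA3.1-8B": 70, "LLaMA3.1-70B": 68, "Qwen2.5-7B": 60, "Qwen2.5-72B": 70},
        "GSM8k": {"LLaMA3.1-8B": 58, "LLaMA3.1-70B": 62, "Qwen2.5-7B": 60, "Qwen2.5-72B": 72},
        "GPQA": {"LLaMA3.1-8B": 5, "LLaMA3.1-70B": 18, "Qwen2.5-7B": 8, "Qwen2.5-72B": 12},
    },
    "closed_source": {
        "HotpotQA": {"Qwen2.5-72B": 70, "Claude3.5": 80, "GPT-3.5": 72, "GPT-4o": 72},
        "GSM8k": {"Qwen2.5-72B": 72, "Claude3.5": 78, "GPT-3.5": 72, "GPT-4o": 80},
        "GPQA": {"Qwen2.5-72B": 12, "Claude3.5": 42, "GPT-3.5": 22, "GPT-4o": 16},
    },
    "instruction_vs_reasoning": {
        "HotpotQA": {"Qwen2.5-72B": 70, "GPT-4o": 72, "QWQ-32B": 55, "DeepSeek-V3": 75},
        "GSM8k": {"Qwen2.5-72B": 72, "GPT-4o": 80, "QWQ-32B": 52, "DeepSeek-V3": 72},
        "GPQA": {"Qwen2.5-72B": 12, "GPT-4o": 16, "QWQ-32B": 22, "DeepSeek-V3": 30},
    },
}


def leaders(scores):
    """Return every model tied for the highest score, sorted by name."""
    best = max(scores.values())
    return sorted(model for model, score in scores.items() if score == best)


def summarize(charts):
    """Map each chart and dataset to its list of leading models."""
    return {
        chart: {dataset: leaders(scores) for dataset, scores in datasets.items()}
        for chart, datasets in charts.items()
    }


SUMMARY = summarize(CHARTS)

for chart, datasets in SUMMARY.items():
    for dataset, models in datasets.items():
        print(f"{chart:26s} {dataset:9s} {', '.join(models)}")
```

Because the inputs are visual estimates, ties and leads of only a few points (such as the HotpotQA tie in the first chart) should be read as approximate.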

### Key Observations

1. **Dataset Difficulty:** GPQA is universally the most challenging dataset: every model scores below 45, and five of the nine distinct models score below 20.

2. **Model Standouts:** Claude3.5 demonstrates exceptional performance on the difficult GPQA dataset. GPT-4o is at or near the top on GSM8k (~80) and HotpotQA (~72) in every chart where it appears, but its GPQA score (~16) is lower than that of GPT-3.5, QWQ-32B, and DeepSeek-V3.

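3. **Open vs. Closed:** Qwen2.5-72B, the strongest open-source model on HotpotQA (tied with LLaMA3.1-8B) and GSM8k, trails the top closed-source model by roughly 8 to 10 points on those two datasets. On GPQA the gap is far larger: the best open-source score in the first chart (LLaMA3.1-70B at ~18) is less than half of Claude3.5's ~42.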

4. **Cross-Chart Reference:** Qwen2.5-72B and GPT-4o appear in multiple charts, providing a direct performance bridge between the different model categories.
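The GPQA figures behind these observations are simple arithmetic on the approximate readings. A minimal sketch, assuming the values listed in the Detailed Analysis and pooling each model once across the three charts (the variable names are illustrative):

```python
# Approximate GPQA bar heights, one entry per distinct model (visual estimates).
GPQA = {
    "LLaMA3.1-8B": 5, "LLaMA3.1-70B": 18, "Qwen2.5-7B": 8, "Qwen2.5-72B": 12,
    "Claude3.5": 42, "GPT-3.5": 22, "GPT-4o": 16,
    "QWQ-32B": 22, "DeepSeek-V3": 30,
}

# Models grouped as they appear in the first two charts.
OPEN_SOURCE = ["LLaMA3.1-8B", "LLaMA3.1-70B", "Qwen2.5-7B", "Qwen2.5-72B"]
CLOSED_SOURCE = ["Claude3.5", "GPT-3.5", "GPT-4o"]

# Observation 1: the ceiling and the number of models below 20.
highest = max(GPQA.values())
below_20 = sorted(m for m, s in GPQA.items() if s < 20)

# Chart 2 trend: how far Claude3.5 is ahead of the next closed-source model.
closed_ranked = sorted(CLOSED_SOURCE, key=GPQA.get, reverse=True)
claude_ratio = GPQA[closed_ranked[0]] / GPQA[closed_ranked[1]]

# Observation 3: gap between the best open-source and best closed-source score.
best_open = max(OPEN_SOURCE, key=GPQA.get)
best_closed = max(CLOSED_SOURCE, key=GPQA.get)
open_closed_gap = GPQA[best_closed] - GPQA[best_open]

print(f"highest GPQA score: {highest}")
print(f"models below 20: {len(below_20)} of {len(GPQA)}")
print(f"{closed_ranked[0]} / {closed_ranked[1]} ratio: {claude_ratio:.2f}")
print(f"{best_closed} minus {best_open}: {open_closed_gap} points")
```

With these estimates the ratio comes out slightly under 2, so "nearly double" is the safer wording for Claude3.5's lead over GPT-3.5.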

### Interpretation

The data suggests that benchmark difficulty, more than model category, drives the spread in scores. On HotpotQA and GSM8k most models land between roughly 55 and 80, while on GPQA every model falls below 45, which indicates that this benchmark tests a capability frontier that current models struggle with. Closed-source models lead each dataset in the second chart, but not uniformly: Claude3.5 is the clear standout on GPQA (~42), whereas GPT-4o scores only ~16 there despite leading on GSM8k. Claude3.5's GPQA result may reflect an advantage specific to that type of reasoning, though the chart alone cannot show why. The third chart suggests that the models it groups as reasoning-oriented hold an edge on GPQA, where both QWQ-32B (~22) and DeepSeek-V3 (~30) outscore Qwen2.5-72B and GPT-4o, but this advantage is not universal: GPT-4o leads on GSM8k, and on HotpotQA only DeepSeek-V3 is ahead while QWQ-32B trails. Taken together, the charts show open-source models within about 10 points of the best closed-source model on HotpotQA and GSM8k, with a much larger gap on the most challenging benchmark.
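The third chart's comparison can be made concrete by computing the gap between the two groups per dataset. This is a minimal sketch under a stated assumption: the figure's legend does not label which models belong to which group, so the split below (Qwen2.5-72B and GPT-4o as instruction-based, QWQ-32B and DeepSeek-V3 as reasoning) is inferred from the description and may not match the authors' intent.

```python
from statistics import mean

# Approximate bar heights from the third chart (visual estimates).
CHART3 = {
    "HotpotQA": {"Qwen2.5-72B": 70, "GPT-4o": 72, "QWQ-32B": 55, "DeepSeek-V3": 75},
    "GSM8k": {"Qwen2.5-72B": 72, "GPT-4o": 80, "QWQ-32B": 52, "DeepSeek-V3": 72},
    "GPQA": {"Qwen2.5-72B": 12, "GPT-4o": 16, "QWQ-32B": 22, "DeepSeek-V3": 30},
}

# ASSUMPTION: group membership is inferred, not stated in the figure.
INSTRUCTION = ("Qwen2.5-72B", "GPT-4o")
REASONING = ("QWQ-32B", "DeepSeek-V3")


def group_gap(scores, aggregate):
    """Reasoning-group aggregate minus instruction-group aggregate."""
    reasoning = aggregate([scores[m] for m in REASONING])
    instruction = aggregate([scores[m] for m in INSTRUCTION])
    return reasoning - instruction


# Positive values mean the reasoning group is ahead on that dataset.
BEST_GAP = {ds: group_gap(scores, max) for ds, scores in CHART3.items()}
MEAN_GAP = {ds: group_gap(scores, mean) for ds, scores in CHART3.items()}

for dataset in CHART3:
    print(f"{dataset:9s} best-vs-best: {BEST_GAP[dataset]:+} "
          f"mean-vs-mean: {MEAN_GAP[dataset]:+}")
```

Comparing best against best and mean against mean gives different pictures on HotpotQA, where a single strong model (DeepSeek-V3) puts the reasoning group ahead on the first measure but not the second; GPQA is the only dataset where the group leads on both.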