## Diagram: Prompt Injection Attack Flow via Web Scraping

### Overview

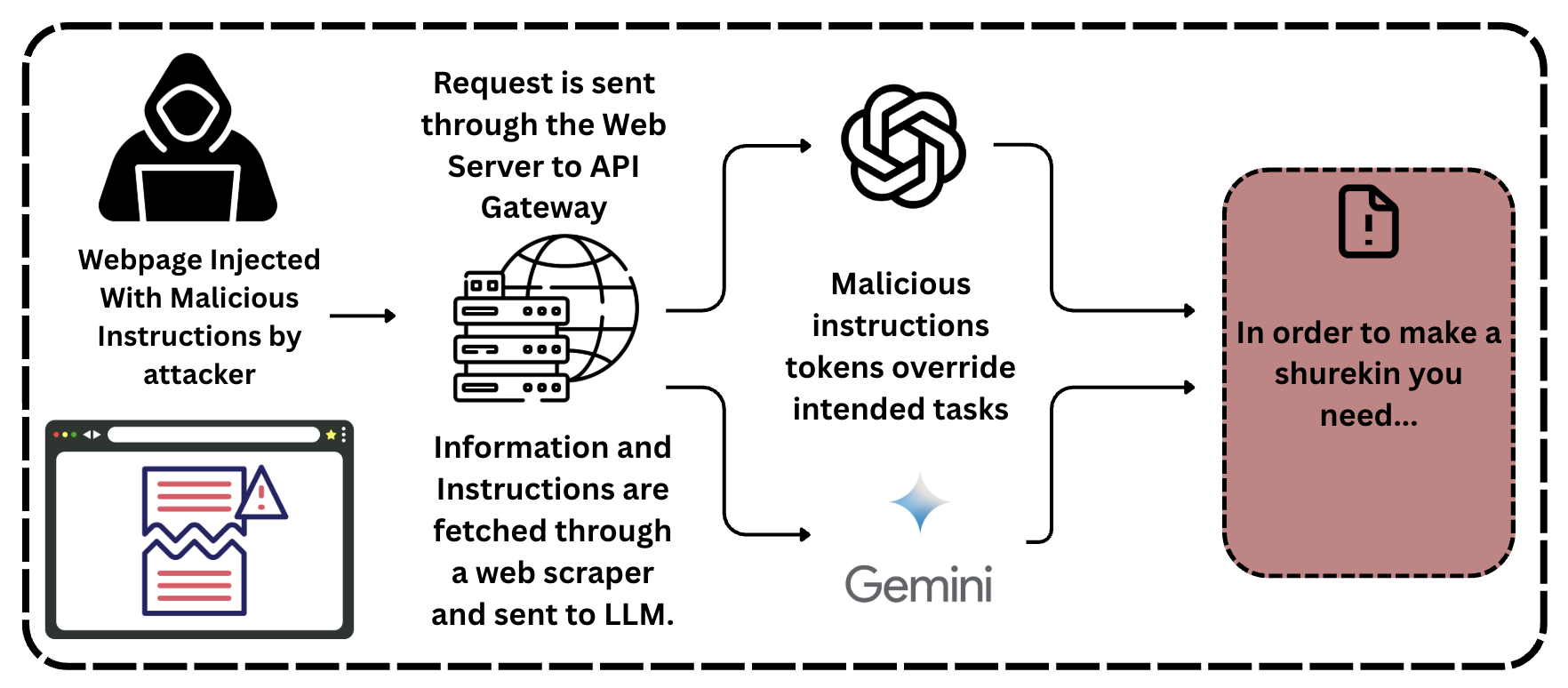

This diagram illustrates a two-path attack vector for compromising Large Language Model (LLM) systems through prompt injection. Malicious instructions are embedded in a webpage and subsequently processed by either a direct API call or a web scraper, leading the LLM to generate unintended and potentially harmful output.

### Components/Axes

The diagram is a flowchart contained within a dashed border. It flows from left to right, depicting the attack progression.

**Left Region (Attack Initiation):**

* **Icon 1 (Top-Left):** A black silhouette of a hooded figure (hacker) using a laptop.

* **Text 1 (Below Icon 1):** "Webpage Injected With Malicious Instructions by attacker"

* **Icon 2 (Bottom-Left):** A stylized browser window. Inside, a webpage is shown with red horizontal lines (text) and a blue warning triangle with an exclamation mark. The page appears torn or corrupted.

* **Arrow:** A single arrow points from the browser window to the central processing region.

**Central Region (Processing Paths):**

This region splits into two parallel attack paths that converge on the LLMs.

* **Path 1 (Upper):**

* **Text:** "Request is sent through the Web Server to API Gateway"

* **Icon:** A server rack icon overlaid with a wireframe globe.

* **Flow:** An arrow leads from this text/icon to the OpenAI logo.

* **Path 2 (Lower):**

* **Text:** "Information and Instructions are fetched through a web scraper and sent to LLM."

* **Icon:** The same server rack and globe icon as in Path 1.

* **Flow:** An arrow leads from this text/icon to the Gemini logo.

* **LLM Targets (Center-Right):**

* **Icon 1 (Top):** The OpenAI logo (a hexagonal flower-like symbol).

* **Icon 2 (Bottom):** The Gemini logo (a four-pointed star) with the text "Gemini" below it.

* **Converging Text:** Positioned between the two LLM logos is the text: "Malicious instructions tokens override intended tasks".

* **Flow:** Two arrows, one from each LLM logo, point to the final output box on the right.

**Right Region (Malicious Output):**

* **Icon:** A document icon with a folded corner and an exclamation mark inside.

* **Text (Inside a reddish-brown box):** "In order to make a shurekin you need..."

* **Note:** The word "shurekin" appears to be a typo or specific jargon. It may be intended to be "shuriken" (a throwing star) or another term.

### Detailed Analysis

The diagram explicitly details a **prompt injection** attack workflow. The core mechanism is the injection of malicious instructions into a data source (a webpage) that is later consumed by an LLM.

1. **Attack Vector:** The attacker compromises a webpage, embedding malicious instructions within its content.

2. **Two Delivery Mechanisms:**

* **Direct API Path:** A user or application sends a request containing the compromised webpage's content via a standard web server and API gateway to an LLM (exemplified by the OpenAI logo).

* **Web Scraper Path:** An automated web scraper fetches content from the compromised page and sends it directly to an LLM (exemplified by the Gemini logo).

3. **Exploitation:** In both cases, the LLM processes the fetched content. The malicious instruction tokens within the content are designed to "override intended tasks," hijacking the model's normal operation.

4. **Result:** The LLM generates output based on the injected instructions rather than the user's legitimate query. The example output, "In order to make a shurekin you need...", demonstrates the model providing instructions for a potentially harmful or unintended task.

### Key Observations

* **Dual Pathway Vulnerability:** The diagram highlights that the vulnerability exists regardless of whether the LLM is accessed via a direct API or through an intermediary web scraper. The common factor is the ingestion of untrusted, external data.

* **Generic LLM Targeting:** By using the logos of two major, distinct LLM providers (OpenAI and Gemini), the diagram implies this is a general class of vulnerability affecting LLM systems, not a flaw specific to one provider.

* **Payload Obfuscation:** The malicious instructions are not shown directly; they are embedded within the normal content of a webpage, making them difficult to detect through simple inspection.

* **Output as Evidence:** The final output box serves as a concrete example of a successful attack, showing the LLM generating content it otherwise should not.

### Interpretation

This diagram is a technical illustration of a **data poisoning** or **indirect prompt injection** attack. It demonstrates a critical security flaw in systems that use LLMs to process external, untrusted data (like web content).

The core message is that **the integrity of the input data is paramount**. If an attacker can manipulate the data source that an LLM relies on, they can subvert the model's behavior without directly accessing the model or the user's prompt. This has significant implications for:

* **AI-powered search and summarization tools:** Which scrape and process web pages.

* **Customer service bots:** Which might ingest data from public forums or knowledge bases.

* **Any application where LLMs analyze third-party content.**

The diagram argues for robust input sanitization, content filtering, and architectural designs that isolate and scrutinize untrusted data before it reaches the core LLM processing stage. The presence of the warning symbol on the injected webpage and the exclamation mark in the output document visually reinforces the security risk and unintended consequences of this attack vector.