## Model Information Card

### Overview

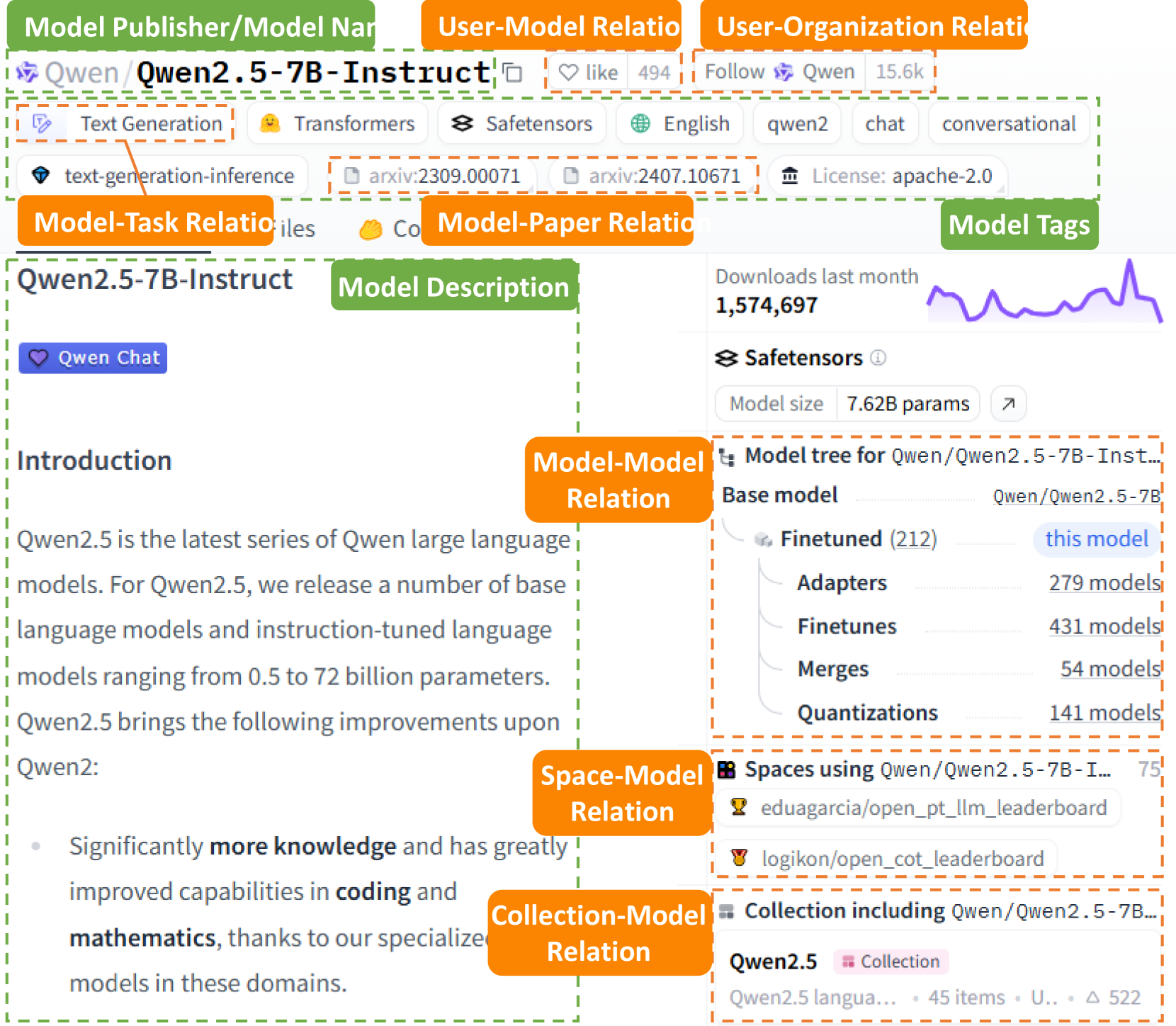

The image is a screenshot of a model information card, likely from a platform like Hugging Face. It shows details of the "Qwen/Qwen2.5-7B-Instruct" model: its tags and description, the related models in its model tree, the Spaces that use it, the collection that includes it, and usage statistics such as downloads and likes.

### Components

* **Header:**

* Model Publisher/Model Name: "Qwen/Qwen2.5-7B-Instruct"

* User-Model Relation: "like 494"

* User-Organization Relation: "Follow Qwen 15.6k"

* **Tags:**

* "Text Generation"

* "Transformers"

* "Safetensors"

* "English"

* "qwen2"

* "chat"

* "conversational"

* "text-generation-inference"

* "arxiv:2309.00071"

* "arxiv:2407.10671"

* "License: apache-2.0"

* **Model Description:**

* Title: "Introduction"

* Text: "Qwen2.5 is the latest series of Qwen large language models. For Qwen2.5, we release a number of base language models and instruction-tuned language models ranging from 0.5 to 72 billion parameters. Qwen2.5 brings the following improvements upon Qwen2: Significantly more knowledge and has greatly improved capabilities in coding and mathematics, thanks to our specialized models in these domains."

* **Model-Model Relation:**

* Downloads last month: 1,574,697. A small line graph shows a general upward trend with fluctuations.

* Safetensors

* Model size: 7.62B params

* Model tree for Qwen/Qwen2.5-7B-Inst...

* Base model: Qwen/Qwen2.5-7B

* Finetuned (212): this model

* Adapters: 279 models

* Finetunes: 431 models

* Merges: 54 models

* Quantizations: 141 models

* **Space-Model Relation:**

* Spaces using Qwen/Qwen2.5-7B-I...: 75

* eduagarcia/open\_pt\_llm\_leaderboard

* logikon/open\_cot\_leaderboard

* **Collection-Model Relation:**

* Collection including Qwen/Qwen2.5-7B...

* Qwen2.5 Collection

* Qwen2.5 langua... 45 items U... Δ 522
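
The header identifier has the form publisher/model name. As a minimal sketch of that convention, the snippet below splits such an identifier into its two parts. The function name `split_repo_id` is hypothetical and chosen for illustration; it is not part of any library.

```python
def split_repo_id(repo_id: str) -> tuple[str, str]:
    """Split a 'publisher/name' identifier into (publisher, name).

    Hypothetical helper for illustration; assumes exactly one '/' separator.
    """
    publisher, sep, name = repo_id.partition("/")
    if not sep or not publisher or not name or "/" in name:
        raise ValueError(f"expected 'publisher/name', got {repo_id!r}")
    return publisher, name


if __name__ == "__main__":
    publisher, name = split_repo_id("Qwen/Qwen2.5-7B-Instruct")
    print(publisher)  # Qwen
    print(name)       # Qwen2.5-7B-Instruct
```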

### Content Details

* **Model Publisher/Model Name:** The model is published by "Qwen" and its name is "Qwen2.5-7B-Instruct".

* **User Interaction:** The model has 494 likes. A "Follow" control for the "Qwen" organization shows 15.6k followers.

* **Model Description:** The description highlights that Qwen2.5 is the latest in the Qwen series, with models ranging from 0.5 to 72 billion parameters. It emphasizes improvements in knowledge, coding, and mathematics.

* **Downloads:** The model was downloaded 1,574,697 times in the last month.

* **Model Size:** The model size is 7.62B parameters.

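* **Model Tree:** The model tree shows how this model relates to its base model and to models derived from it. The base model is "Qwen/Qwen2.5-7B". The entry "Finetuned (212): this model" appears to mean that this model is one of 212 finetunes of that base. The remaining entries list models derived from this one: 279 adapters, 431 finetunes, 54 merges, and 141 quantizations.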

* **Spaces:** The model is used in 75 spaces. Two specific spaces are listed: "eduagarcia/open\_pt\_llm\_leaderboard" and "logikon/open\_cot\_leaderboard".

* **Collections:** The model is included in the "Qwen2.5" collection, which contains 45 items.
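
The parameter count on the card allows a rough estimate of how much memory the weights alone would occupy. The sketch below multiplies 7.62B parameters by an assumed number of bytes per parameter. The storage formats and their widths are assumptions for illustration; the card as described does not state the tensor type, and the estimate excludes activations and any inference cache.

```python
# "Model size: 7.62B params" as shown on the card.
PARAMS = 7.62e9

# Assumed bytes per parameter for common storage formats (not taken from the card).
BYTES_PER_PARAM = {"float32": 4.0, "bfloat16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Return weight storage in gigabytes (10**9 bytes), weights only."""
    return params * bytes_per_param / 1e9


if __name__ == "__main__":
    for dtype, width in BYTES_PER_PARAM.items():
        print(f"{dtype:>8}: {weight_memory_gb(PARAMS, width):.2f} GB")
```

Under these assumptions, 16-bit weights come to roughly 15 GB, which is one reason quantized variants of a model this size are common.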

### Key Observations

* The model is popular: 1,574,697 downloads in the last month and 494 likes.

* Many models are derived from it: 279 adapters, 431 finetunes, 54 merges, and 141 quantizations.

* The model is used in 75 Spaces, including two leaderboards, and is part of the Qwen2.5 collection.
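
To put the model tree counts in proportion, the following sketch totals the four kinds of derived models and computes each kind's share. It assumes the four categories do not overlap, which the card does not confirm; the function name `summarize` is hypothetical.

```python
# Counts of models derived from this model, as read from the card's model tree.
DERIVATIVES = {"adapters": 279, "finetunes": 431, "merges": 54, "quantizations": 141}


def summarize(counts: dict[str, int]) -> tuple[int, dict[str, float]]:
    """Return the total count and each category's fraction of that total."""
    total = sum(counts.values())
    shares = {kind: n / total for kind, n in counts.items()}
    return total, shares


if __name__ == "__main__":
    total, shares = summarize(DERIVATIVES)
    print(f"total derived models: {total}")  # 905
    # List categories from largest to smallest share.
    for kind, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{kind:>14}: {share:.1%}")
```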

### Interpretation

The information card summarizes the "Qwen/Qwen2.5-7B-Instruct" model and how it is used. The download count (1,574,697 in the last month) and 494 likes suggest that it is widely used. The several hundred adapters, finetunes, merges, and quantizations derived from it indicate that the model is frequently adapted to other tasks and deployment settings. Its use in 75 Spaces, two of which are leaderboards, and its place in the Qwen2.5 collection point to regular use in applications and evaluations. The description credits specialized models for gains in coding and mathematics, which suggests the model is intended for tasks in those areas, although the part of the card shown here includes no benchmark results.