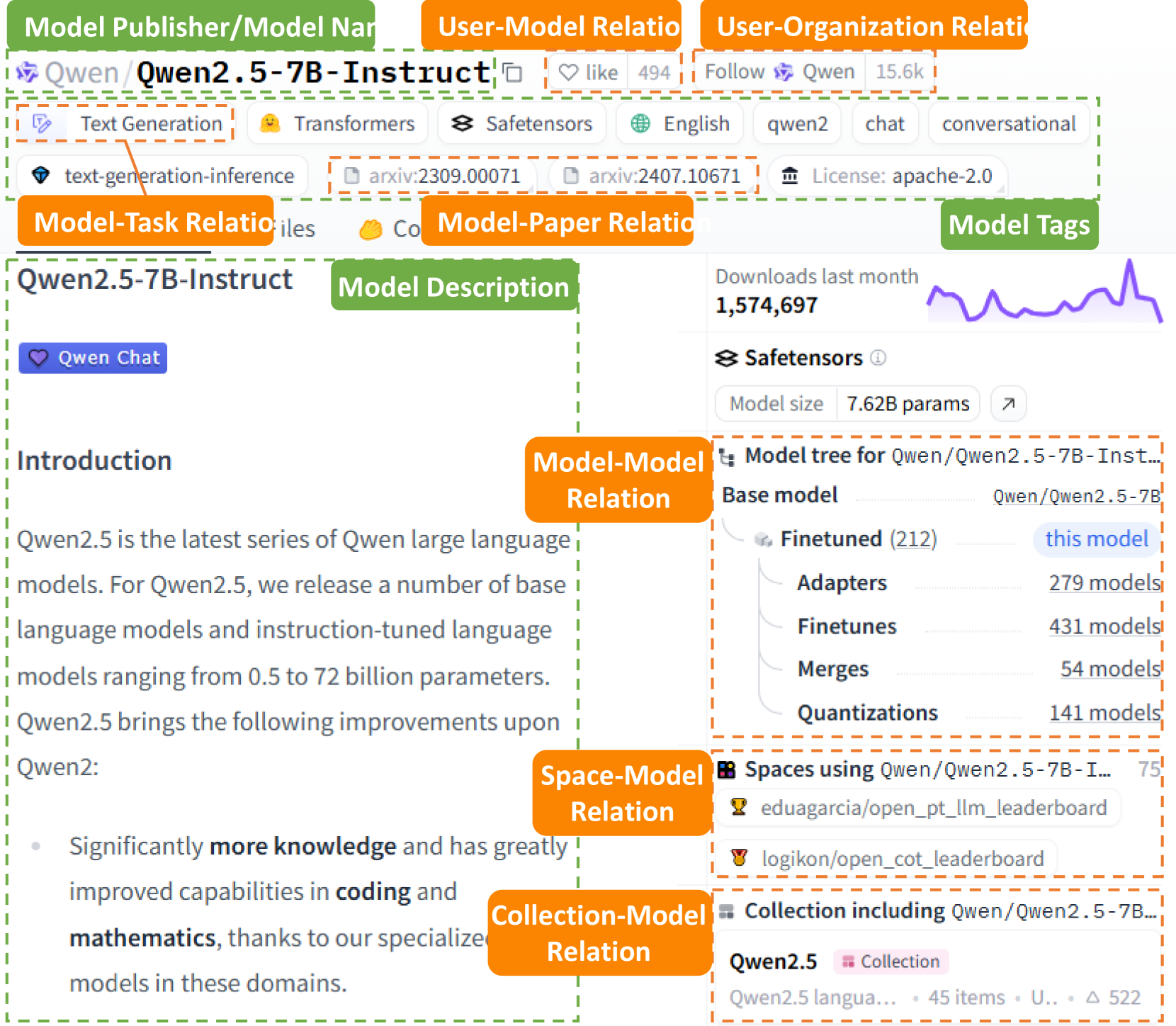

## Diagram: Qwen2.5 Model Information Hub

### Overview

This diagram gives an overview of the Qwen2.5 language model, organized around its relationships to publishers, users, tasks, papers, and spaces. It is structured as a mind map or knowledge graph, with the core model ("Qwen2.5-7B-Instruct") at the center and connections radiating out to related information. The diagram includes textual descriptions, numerical data (downloads, model size, number of derived models), and links to external resources.

### Components/Axes

The diagram is organized into several interconnected sections:

* **Model Publisher/Model Name:** "Qwen (Qwen2.5-7B-Instruct)"

* **User-Model Relation:** Includes "Like" (494), "Follow" (15.6k)

* **User-Organization Relation:** "Qwen"

* **Model-Task Relation:** "Qwen2.5-7B-Instruct"

* **Model-Paper Relation:**

* **Model Tags:** "English", "qwen2", "chat", "conversational"

* **Model Description:** Textual description of Qwen2.5's capabilities.

* **Model-Model Relation:**

* **Space-Model Relation:**

* **Collection-Model Relation:** "Qwen2.5 Collection"

* **Model Tags (Right Side):** Downloads last month (1,574,697), Safetensors information, Model Tree, Spaces using Qwen2.5-7B-Instruct, and related collections.

* **Safetensors:** Model size: 7.62B params.
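
The relation types listed above can be read as typed edges in a small knowledge graph. The following is a minimal sketch of that reading, not a schema taken from the diagram: the relation labels (`publishes`, `has_tag`, and so on) and the helper names `build_index` and `related` are hypothetical, and the `has_task` edge assumes the "Text Generation" category shown on the model card is the model's task.

```python
from collections import defaultdict

# (subject, relation, object) triples transcribed from the diagram's sections.
# Relation labels are illustrative assumptions, not an official schema.
EDGES = [
    ("Qwen", "publishes", "Qwen2.5-7B-Instruct"),
    ("Qwen2.5-7B-Instruct", "has_task", "Text Generation"),  # assumed from the category label
    ("Qwen2.5-7B-Instruct", "has_tag", "English"),
    ("Qwen2.5-7B-Instruct", "has_tag", "qwen2"),
    ("Qwen2.5-7B-Instruct", "has_tag", "chat"),
    ("Qwen2.5-7B-Instruct", "has_tag", "conversational"),
    ("Qwen2.5 Collection", "contains", "Qwen2.5-7B-Instruct"),
    ("logikon/open_cot_leaderboard", "uses", "Qwen2.5-7B-Instruct"),
]


def build_index(edges):
    """Group edges by subject, then by relation, preserving insertion order."""
    index = defaultdict(lambda: defaultdict(list))
    for subject, relation, obj in edges:
        index[subject][relation].append(obj)
    return index


def related(index, node, relation):
    """Return the objects linked to `node` by `relation` (empty list if none)."""
    return list(index.get(node, {}).get(relation, []))


INDEX = build_index(EDGES)
print(related(INDEX, "Qwen2.5-7B-Instruct", "has_tag"))
```

Storing the edges as plain triples keeps the sketch close to the diagram: each labeled arrow becomes one row, and new relation types can be added without changing the lookup code.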

### Detailed Analysis or Content Details

**Model Publisher/Model Name:**

* Model: Qwen2.5-7B-Instruct

* Categories: Text Generation, Transformers

**User-Model Relation:**

* Likes: 494

* Followers: Approximately 15,600 (displayed as "15.6k")
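
The diagram mixes exact counts ("494", "1,574,697") with abbreviated ones ("15.6k"). A minimal sketch of how such display strings could be normalized to integers follows; `parse_count` and the suffix table are hypothetical helpers for illustration, and the set of suffixes is an assumption about how the page abbreviates numbers.

```python
from decimal import Decimal

# Assumed abbreviation suffixes; only "k" actually appears in the diagram.
_SUFFIXES = {"k": 1_000, "m": 1_000_000, "b": 1_000_000_000}


def parse_count(text: str) -> int:
    """Convert a display count such as '15.6k' or '1,574,697' to an integer."""
    cleaned = text.strip().lower().replace(",", "")
    multiplier = 1
    if cleaned and cleaned[-1] in _SUFFIXES:
        multiplier = _SUFFIXES[cleaned[-1]]
        cleaned = cleaned[:-1]
    # Decimal avoids binary floating-point error when scaling values like 15.6.
    return int(Decimal(cleaned) * multiplier)


print(parse_count("15.6k"))
print(parse_count("1,574,697"))
```

An abbreviated value is already rounded, so "15.6k" parsed this way gives the nominal figure rather than the true follower count, which could lie anywhere in the range that rounds to 15.6k.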

**Model Tags:**

* Language: English

* Tags: qwen2, chat, conversational

**Model Description:**

* Qwen2.5 is the latest series of Qwen large language models.

* The series includes a number of base and instruction-tuned language models ranging from 0.5 to 72 billion parameters.

* Qwen2.5 brings the following improvements upon Qwen2:

    * Significantly more knowledge and greatly improved capabilities in coding and mathematics, thanks to specialized models in these domains.

**Safetensors:**

* Model Size: 7.62 Billion parameters.
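
As a rough illustration of what the parameter count implies, the sketch below estimates the storage needed for the weights alone. The diagram does not state the tensor data type, so the bytes-per-parameter table is an assumption, `weight_size_gb` is a hypothetical helper, and 1 GB is taken as 10^9 bytes.

```python
# Assumed storage widths per parameter; the diagram does not state the dtype.
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2, "int8": 1}


def weight_size_gb(params_billions: float, dtype: str) -> float:
    """Approximate weight storage in GB (10^9 bytes) for a given dtype."""
    # (params_billions * 1e9 params) * bytes / 1e9 bytes-per-GB simplifies to this product.
    return params_billions * BYTES_PER_PARAM[dtype]


for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: ~{weight_size_gb(7.62, dtype):.2f} GB")
```

This covers the weights only; activations, caches, and file-format overhead are not included, so real memory use would be higher.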

**Model Tree (Qwen/Qwen2.5-7B-Instruct):**

* Finetuned: 212 models

* Adapters: 279 models

* Finetunes: 431 models

* Merges: 54 models

* Quantizations: 141 models

**Spaces using Qwen/Qwen2.5-7B-Instruct:**

* eduagarcia/open_pt_llm_leaderboard

* logikon/open_cot_leaderboard

**Qwen2.5 Collection:**

* Qwen2.5 language… + 45 items, U… + 522

**Downloads Last Month:**

* 1,574,697 downloads. The graph shows a fluctuating trend with a peak around the middle of the month.

### Key Observations

* The model has a substantial number of downloads (over 1.5 million) in the last month, indicating significant interest.

* The model tree shows a diverse ecosystem of finetuned models, adapters, and quantizations built upon the base Qwen2.5-7B-Instruct model.

* The model description highlights improvements in coding and mathematics capabilities.

* The diagram emphasizes the model's versatility through its connections to various tasks, papers, and spaces.

### Interpretation

The diagram acts as a central hub for information about Qwen2.5-7B-Instruct. The like, follower, and download counts, together with the number of derived models, point to an active community around the model. The model description's emphasis on coding and mathematics suggests targeted improvement in those areas, which may position Qwen2.5 well for specialized applications. The model tree shows how the model is extended in practice through finetunes, adapters, merges, and quantizations. The fluctuations in the download graph may reflect periods of increased interest, possibly linked to releases or announcements, although the diagram itself does not indicate a cause. The mind-map layout makes the connections between publisher, users, tasks, papers, spaces, and collections easy to follow.