## Screenshot: Model Card Page for Qwen2.5-7B-Instruct

### Overview

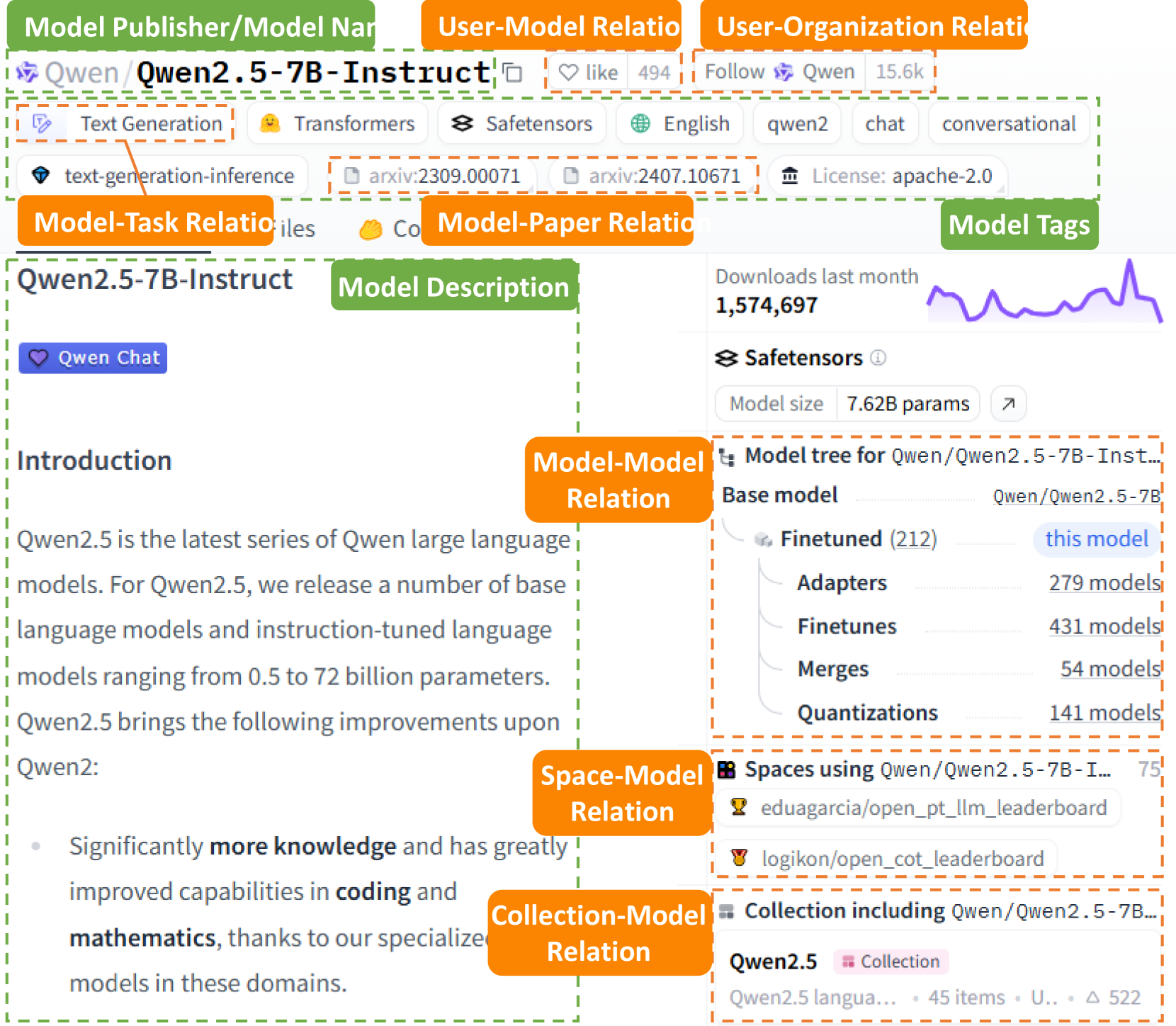

This image is a screenshot of a model card page, likely from a platform like Hugging Face, for the large language model "Qwen2.5-7B-Instruct". The page is densely annotated with orange and green labels that categorize the different informational components and their relationships. The primary language is English, with the model name containing the Chinese characters "通义千问" (Qwen).

### Components

The page is segmented into labeled regions. The key components, identified by their on-screen labels and positions, are:

1. **Top Header Bar (Top of screen):**
   * **Model Publisher/Model Name:** `Qwen/Qwen2.5-7B-Instruct` (with a copy icon).
   * **User-Model Relation:** A "like" button with a count of `494`.
   * **User-Organization Relation:** A "Follow" button for `Qwen` with a count of `15.6k`.

2. **Tag & Metadata Row (Below header):**
   * **Model-Task Relation:** Tags include `Text Generation`, `Transformers`, `Safetensors`, `English`, `qwen2`, `chat`, `conversational`.
   * **Model-Paper Relation:** Links to two arXiv papers: `arxiv:2309.00071` and `arxiv:2407.10671`.
   * **License:** `apache-2.0`.
   * **Model Tags:** A green label grouping the above tags.

3. **Main Content Area (Left Column):**
   * **Model Description:** A green label pointing to the section title `Qwen2.5-7B-Instruct`.
   * **Introduction Text:** A block of text describing the model series.
   * **Qwen Chat:** A blue button/link.

4. **Right Sidebar (Right Column):**
   * **Downloads last month:** A metric showing `1,574,697` with a small purple line chart showing a generally upward trend with a sharp recent spike.
   * **Safetensors:** A section indicating the model format, with a "Model size" of `7.62B params`.
   * **Model-Model Relation:** A section titled "Model tree for Qwen/Qwen2.5-7B-Instruct...".
     * **Base model:** `Qwen/Qwen2.5-7B` (truncated).
     * **Finetuned (212):** Labeled as "this model".
     * **Adapters:** `279 models`.
     * **Finetunes:** `431 models`.
     * **Merges:** `54 models`.
     * **Quantizations:** `141 models`.
   * **Space-Model Relation:** A section titled "Spaces using Qwen/Qwen2.5-7B-I..." with a count of `75`. Lists two example Spaces: `eduagarcia/open_pt_llm_leaderboard` and `logikon/open_cot_leaderboard`.
   * **Collection-Model Relation:** A section titled "Collection including Qwen/Qwen2.5-7B...".
     * **Collection:** `Qwen2.5` (with a pink "Collection" badge).
     * **Description:** `Qwen2.5 langua...` (truncated).
     * **Stats:** `45 items`, `U..` (likely "Updated"), `△ 522`.
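
The groupings in the tag row can be made concrete by sorting the transcribed tags by the relation each one expresses. The sketch below is illustrative only: `classify_tag`, `group_tags`, and the category names are hypothetical helpers, the `arxiv:` prefix rule is inferred from the two paper tags listed above, and recognizing `apache-2.0` by exact match is an assumption rather than a description of how the platform classifies tags.

```python
from collections import defaultdict

# Tags as transcribed from the tag and metadata row of the screenshot.
TAGS = [
    "Text Generation", "Transformers", "Safetensors", "English",
    "qwen2", "chat", "conversational",
    "arxiv:2309.00071", "arxiv:2407.10671", "apache-2.0",
]

# Assumption: only the single license visible in the screenshot is known.
KNOWN_LICENSES = {"apache-2.0"}


def classify_tag(tag: str) -> str:
    """Return a (hypothetical) relation category for one tag."""
    if tag.startswith("arxiv:"):
        return "model-paper"
    if tag in KNOWN_LICENSES:
        return "license"
    return "model-task"


def group_tags(tags):
    """Group tags by category, preserving their on-page order."""
    groups = defaultdict(list)
    for tag in tags:
        groups[classify_tag(tag)].append(tag)
    return dict(groups)


grouped = group_tags(TAGS)
for category, members in grouped.items():
    print(f"{category}: {members}")
```

A prefix-based rule is enough here because the paper tags are the only ones on the page that carry a structured identifier; the remaining tags are free-form labels.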

### Detailed Analysis

**Introduction Text Transcription:**

"Qwen2.5 is the latest series of Qwen large language models. For Qwen2.5, we release a number of base language models and instruction-tuned language models ranging from 0.5 to 72 billion parameters. Qwen2.5 brings the following improvements upon Qwen2:

* Significantly **more knowledge** and has greatly improved capabilities in **coding** and **mathematics**, thanks to our specialized models in these domains."

**Model Tree Data:**

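The model tree places the current model (`Qwen2.5-7B-Instruct`) in its lineage. Upstream, it is listed as one of 212 finetuned versions of the base model `Qwen/Qwen2.5-7B`. Downstream, the tree lists 279 adapters, 431 finetunes, 54 merges, and 141 quantizations. Because the section is titled for `Qwen/Qwen2.5-7B-Instruct`, these four counts most plausibly describe models derived from the current model rather than from the base model.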
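
As a quick arithmetic check on the scale of this ecosystem, the four derivative counts can be totaled and compared. This is a minimal sketch using only the numbers transcribed from the screenshot; the dictionary keys and function names are illustrative, and the 212 "Finetuned" figure is left out because it counts siblings of the current model, not derivatives.

```python
# Derivative counts as transcribed from the "Model tree" section.
MODEL_TREE = {
    "adapters": 279,
    "finetunes": 431,
    "merges": 54,
    "quantizations": 141,
}


def derivative_total(tree: dict) -> int:
    """Sum all derivative model counts."""
    return sum(tree.values())


def derivative_shares(tree: dict) -> dict:
    """Return each category's fraction of the total."""
    total = derivative_total(tree)
    return {kind: count / total for kind, count in tree.items()}


total = derivative_total(MODEL_TREE)   # 279 + 431 + 54 + 141 = 905
shares = derivative_shares(MODEL_TREE)

print(f"total derivatives: {total}")
for kind, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{kind:>14}: {share:.1%}")
```

Finetunes are the largest category, at a little under half of the 905 listed derivatives.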

**Downloads Chart:**

The small line chart in the "Downloads last month" section shows a volatile but generally increasing trend over the period, culminating in a significant peak at the far right of the chart.

### Key Observations

1. **High Popularity:** The model shows substantial engagement: about 1.57 million downloads in the last month (`1,574,697`) and 494 likes. The Qwen organization that publishes it has 15.6k followers.

2. **Rich Ecosystem:** The "Model tree" reveals a very active community, listing hundreds of derivative models (adapters, finetunes, merges, quantizations) in the lineage that starts at the base `Qwen2.5-7B` model and passes through this instruct model.

3. **Clear Annotation Schema:** The image itself is a meta-document, using color-coded labels (orange for relations, green for components) to explicitly map out the information architecture of a model card page.

4. **Documented Improvements:** The introduction text explicitly states the model's key improvements over its predecessor: enhanced knowledge, coding, and mathematics capabilities.

### Interpretation

This screenshot serves as both a specific model card and a generic template for understanding the information structure of open-source AI model repositories. The data presented suggests that `Qwen2.5-7B-Instruct` is a popular, well-supported model within a large and active ecosystem. The high download count and extensive number of derivative models indicate strong community adoption and utility.

The annotated labels (e.g., "Model-Task Relation," "Space-Model Relation") act as an entity-relation schema for reading the page. They name the entities involved (the model, its tasks, its publisher, its academic papers, its users, and its downstream Spaces) and the type of link between each pair. The "Model tree" is particularly insightful: it shows how a single model can spawn a diverse family of adapters, finetunes, merges, and quantizations, highlighting the modular and collaborative nature of modern AI development. The sharp spike in the downloads chart could correlate with a recent release, update, or viral use case, but the screenshot alone does not identify the cause.
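
The labeled relations can be written down as subject-label-object triples, which makes the page's information structure easy to query. The sketch below is one possible encoding, not something shown in the screenshot: the `Relation` type, the direction chosen for each triple, and the helper names are all assumptions, and the base model name is used as transcribed even though it appears truncated on the page.

```python
from typing import NamedTuple


class Relation(NamedTuple):
    subject: str
    label: str
    obj: str


MODEL = "Qwen/Qwen2.5-7B-Instruct"

# One triple per annotated relation visible in the screenshot.
RELATIONS = [
    Relation("user", "User-Model Relation", MODEL),
    Relation("user", "User-Organization Relation", "Qwen"),
    Relation(MODEL, "Model-Task Relation", "Text Generation"),
    Relation(MODEL, "Model-Paper Relation", "arxiv:2309.00071"),
    Relation(MODEL, "Model-Paper Relation", "arxiv:2407.10671"),
    # Base model name as transcribed (truncated in the screenshot).
    Relation(MODEL, "Model-Model Relation", "Qwen/Qwen2.5-7B"),
    Relation("eduagarcia/open_pt_llm_leaderboard", "Space-Model Relation", MODEL),
    Relation("logikon/open_cot_leaderboard", "Space-Model Relation", MODEL),
    Relation("Qwen2.5", "Collection-Model Relation", MODEL),
]


def by_label(label: str) -> list:
    """Return all triples carrying the given relation label."""
    return [r for r in RELATIONS if r.label == label]


def neighbors(entity: str) -> set:
    """Return every entity directly linked to the given one."""
    linked = set()
    for r in RELATIONS:
        if r.subject == entity:
            linked.add(r.obj)
        elif r.obj == entity:
            linked.add(r.subject)
    return linked


papers = [r.obj for r in by_label("Model-Paper Relation")]
spaces = [r.subject for r in by_label("Space-Model Relation")]
print(papers)
print(spaces)
print(sorted(neighbors(MODEL)))
```

Keeping the on-screen label as the relation name means each query maps directly back to an annotated region of the page.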