## Line Charts: Average Attention Weight Comparison (Qwen2.5-7B-Math, Head 22)

### Overview

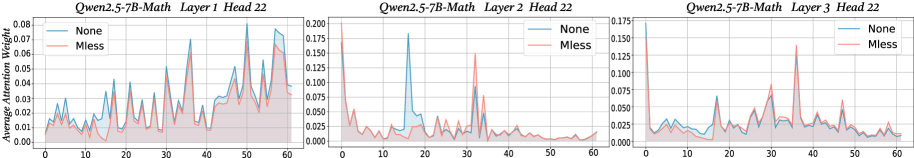

The image shows three line charts arranged side by side. Each plots the "Average Attention Weight" against an index from 0 to 60 for two conditions: "None" (blue line) and "Mless" (red line). The charts cover Layers 1, 2, and 3 of the Qwen2.5-7B-Math model, all for Head 22. The figure appears to compare attention patterns between a baseline ("None") and a modified ("Mless") condition across these three early layers.
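The figure does not state how the "Average Attention Weight" at each index is computed. One plausible reading, sketched here purely as an assumption, is that each key position's weight is averaged over all query positions and over all input samples for the chosen head. The function name `average_attention_per_key` and the toy matrix are hypothetical and are not taken from the source.

```python
def average_attention_per_key(attn_maps):
    """Average the attention weight each key position receives.

    attn_maps: list of attention matrices for ONE head, one per sample,
               each shaped [num_queries][num_keys] with rows summing to 1.
    Returns a list of length num_keys (assumed averaging scheme, see lead-in).
    """
    num_keys = len(attn_maps[0][0])
    totals = [0.0] * num_keys
    row_count = 0
    for attn in attn_maps:
        for row in attn:
            for key_idx, weight in enumerate(row):
                totals[key_idx] += weight
            row_count += 1
    return [total / row_count for total in totals]


# Toy causal attention matrix for a single sample (made-up numbers).
toy_maps = [
    [
        [1.0, 0.0, 0.0],
        [0.5, 0.5, 0.0],
        [0.2, 0.3, 0.5],
    ]
]

avg_weights = average_attention_per_key(toy_maps)
print(avg_weights)
```

Under this scheme the averaged curve sums to 1 across key positions, so a tall spike at one index necessarily comes at the expense of the others. If the original analysis averaged differently (for example, only over the final query token), the curves would have a different normalization.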

### Components/Axes

* **Chart Titles (Top-Center of each subplot):**

    * Left Chart: `Qwen2.5-7B-Math Layer 1 Head 22`

    * Middle Chart: `Qwen2.5-7B-Math Layer 2 Head 22`

    * Right Chart: `Qwen2.5-7B-Math Layer 3 Head 22`

* **Y-Axis Label (Leftmost chart, vertically oriented):** `Average Attention Weight`

* **Y-Axis Scales (Vary per chart):**

    * Layer 1: 0.00 to 0.08 (ticks at 0.00, 0.02, 0.04, 0.06, 0.08)

    * Layer 2: 0.000 to 0.200 (ticks at 0.000, 0.025, 0.050, 0.075, 0.100, 0.125, 0.150, 0.175, 0.200)

    * Layer 3: 0.000 to 0.175 (ticks at 0.000, 0.025, 0.050, 0.075, 0.100, 0.125, 0.150, 0.175)

* **X-Axis (All charts):** Numerical index from 0 to 60, with major ticks at 0, 10, 20, 30, 40, 50, 60. No axis label is shown; the index presumably denotes a position in a sequence (e.g., token position).

* **Legend (Top-right corner of each subplot):**

    * Blue Line: `None`

    * Red Line: `Mless`

### Detailed Analysis

**Layer 1, Head 22 (Left Chart):**

* **Trend Verification:** The "None" (blue) line exhibits high volatility with multiple sharp peaks. The "Mless" (red) line follows a similar pattern but is consistently lower in magnitude, especially at the peaks.

* **Data Points (Approximate):**

    * **Blue ("None"):** Starts near 0.01. Major peaks occur around index ~30 (~0.07), ~40 (~0.08), and ~50 (~0.075). Troughs dip to ~0.01-0.02.

    * **Red ("Mless"):** Follows the blue line's shape with attenuated peaks, each roughly 55-65% of the corresponding blue peak: ~30 (~0.04), ~40 (~0.05), ~50 (~0.045). The baseline sits around 0.01-0.02.

**Layer 2, Head 22 (Middle Chart):**

* **Trend Verification:** The "None" (blue) line shows one extremely dominant peak early on, followed by lower activity. The "Mless" (red) line has a different pattern, with its highest peak occurring later.

* **Data Points (Approximate):**

    * **Blue ("None"):** A very sharp, high peak at index ~15, reaching ~0.175. After this, values drop significantly, fluctuating mostly below 0.05, with a smaller peak around index ~35 (~0.075).

    * **Red ("Mless"):** Does not share the early blue peak. Its highest point is around index ~35 (~0.15). Otherwise, it fluctuates at a lower level, often below the blue line in the first half and above it in the second half.

**Layer 3, Head 22 (Right Chart):**

* **Trend Verification:** The "None" (blue) line shows more frequent, lower-amplitude oscillations than in the earlier layers. The "Mless" (red) line does not share this damping: its peaks are clearly higher than the blue line's in this layer.

* **Data Points (Approximate):**

    * **Blue ("None"):** Oscillates with peaks rarely exceeding 0.05. Notable peaks around index ~10 (~0.04), ~25 (~0.05), ~40 (~0.04).

    * **Red ("Mless"):** Shows more pronounced peaks. A very high initial value at index 0 (~0.17). Other significant peaks at ~25 (~0.075), ~35 (~0.14), and ~45 (~0.06).

### Key Observations

1. **Layer-Dependent Behavior:** The relationship between the "None" and "Mless" conditions changes markedly from layer to layer. In Layer 1, "None" has the higher peaks. In Layer 2, the two conditions peak at different positions (index ~15 versus ~35). In Layer 3, "Mless" has the higher peaks.

2. **Peak Magnitude:** The highest absolute attention weight observed is in Layer 2 for the "None" condition (~0.175). The highest for "Mless" is in Layer 3 at index 0 (~0.17).

3. **Pattern Shift:** The "None" condition's most prominent feature (the huge Layer 2 peak) disappears in the "Mless" condition, suggesting the modification significantly alters attention focus at that specific layer and position.

4. **Increased Volatility in "Mless" for Layer 3:** The "Mless" line in Layer 3 shows sharper, more isolated spikes compared to the smoother oscillations of the "None" line.
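The peak comparisons above can be tabulated with a short script. This is a minimal sketch that uses only the approximate peak values quoted in the Detailed Analysis section; the values are visual estimates from the charts, not raw data, and the helper name `strongest_peak` is illustrative.

```python
# Approximate peaks read off the charts: {index: average attention weight}.
peaks = {
    ("Layer 1", "None"): {30: 0.07, 40: 0.08, 50: 0.075},
    ("Layer 1", "Mless"): {30: 0.04, 40: 0.05, 50: 0.045},
    ("Layer 2", "None"): {15: 0.175, 35: 0.075},
    ("Layer 2", "Mless"): {35: 0.15},
    ("Layer 3", "None"): {10: 0.04, 25: 0.05, 40: 0.04},
    ("Layer 3", "Mless"): {0: 0.17, 25: 0.075, 35: 0.14, 45: 0.06},
}


def strongest_peak(series):
    """Return (index, weight) of the highest listed peak in one series."""
    index = max(series, key=series.get)
    return index, series[index]


summary = {key: strongest_peak(series) for key, series in peaks.items()}

for (layer, condition), (index, weight) in summary.items():
    print(f"{layer} {condition:>5}: strongest peak near index {index} (~{weight})")
```

Listing the strongest peak per layer and condition makes the Layer 2 relocation easy to see: the baseline maximum sits near index 15, while the "Mless" maximum sits near index 35.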

### Interpretation

The figure visualizes the internal attention mechanism of a large language model (Qwen2.5-7B-Math) under two different conditions. "None" likely represents standard, unmodified model inference. The figure does not define "Mless"; it presumably denotes a modified inference technique (e.g., "Memory-less" or another intervention).

The charts suggest that the intervention ("Mless") does not simply scale attention weights up or down uniformly. Instead, it **reconfigures the attention pattern in a layer-specific manner**:

* In Layer 1, it suppresses the magnitude of attention peaks.

* In Layer 2, it shifts the focus of attention away from the position that dominated in the baseline model (index ~15) toward a later one (index ~35).

* In Layer 3, it appears to increase the salience of certain positions, creating sharper, more isolated attention spikes.
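A rough way to check the "not a uniform scaling" claim is to compare the Mless-to-None ratio at positions where the analysis gives an approximate value for both lines. The sketch below is illustrative only: it uses five peak estimates read from the charts, and the 25% tolerance and the function names are arbitrary assumptions rather than part of the original analysis.

```python
# (None, Mless) values at indices where the text reports both, per layer.
paired = {
    "Layer 1": {30: (0.07, 0.04), 40: (0.08, 0.05), 50: (0.075, 0.045)},
    "Layer 2": {35: (0.075, 0.15)},
    "Layer 3": {25: (0.05, 0.075)},
}


def mless_to_none_ratios(pairs):
    """Ratio Mless / None at each index."""
    return {idx: mless / none for idx, (none, mless) in pairs.items()}


def looks_like_uniform_scaling(ratios, rel_tol=0.25):
    """True if every ratio lies within rel_tol of the mean ratio."""
    mean = sum(ratios) / len(ratios)
    return all(abs(r - mean) <= rel_tol * mean for r in ratios)


ratios_by_layer = {layer: mless_to_none_ratios(p) for layer, p in paired.items()}
layer1_ratios = list(ratios_by_layer["Layer 1"].values())
all_ratios = [r for ratios in ratios_by_layer.values() for r in ratios.values()]

print("Layer 1 only:", looks_like_uniform_scaling(layer1_ratios))
print("All layers:  ", looks_like_uniform_scaling(all_ratios))
```

With these estimates, the Layer 1 ratios cluster around 0.6, which is compatible with a simple damping within that layer, whereas the Layer 2 and Layer 3 ratios are about 2.0 and 1.5. No single scale factor fits all three layers, which is consistent with the layer-specific reading above.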

This suggests the "Mless" technique changes how the model allocates attention in its earliest layers, potentially to reduce reliance on certain types of information (e.g., long-range dependencies or specific token memories) or to encourage different reasoning pathways. The shift in Layer 2 is particularly noteworthy: it indicates that this layer may be a point where the intervention most strongly alters the standard model's processing. Because all three charts cover a single head in Layers 1-3, the pattern may not hold for other heads or deeper layers.