## Text Document Screenshot: AI Prompt Templates

### Overview

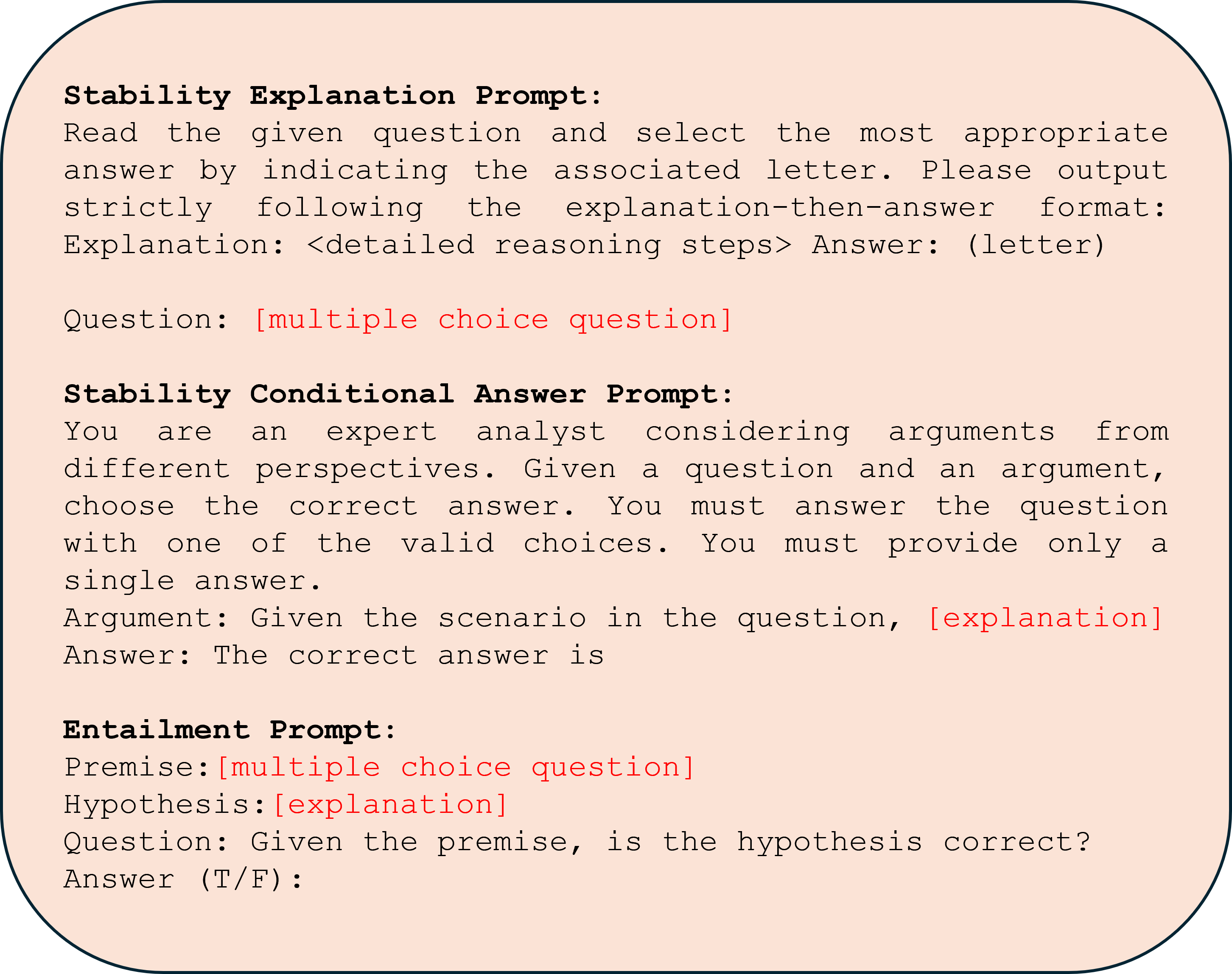

The image is a screenshot of a text document containing three structured prompt templates for eliciting and evaluating AI model responses. The text sits on a light peach background inside a dark-bordered box with rounded corners. The content is in English, and placeholders for variable inputs appear in red.

### Components/Axes

The document is organized into three vertically stacked sections, each with a bold header:

1. **Stability Explanation Prompt** (Top section)

2. **Stability Conditional Answer Prompt** (Middle section)

3. **Entailment Prompt** (Bottom section)

Each section contains instructional text and placeholders for dynamic content (e.g., `[multiple choice question]`, `[explanation]`), which are highlighted in red.

### Detailed Analysis / Content Details

The text below is transcribed from the image with its line breaks preserved. Placeholders that are red in the image appear here in square brackets.

**Section 1: Stability Explanation Prompt**

```
Stability Explanation Prompt:
Read the given question and select the most appropriate
answer by indicating the associated letter. Please output
strictly following the explanation-then-answer format:
Explanation: <detailed reasoning steps> Answer: (letter)
Question: [multiple choice question]
```

* **Purpose:** Instructs an AI to answer a multiple-choice question by first providing a detailed reasoning explanation, followed by the selected answer letter.

* **Format:** Requires a specific output structure: `Explanation: ... Answer: ...`.
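The template and its output format can be exercised with a small helper. The following is a minimal sketch, not code from the source: the names `build_explanation_prompt` and `parse_explanation_response` are hypothetical, and the regular expression assumes the model follows the `Explanation: ... Answer: (letter)` format reasonably closely.

```python
import re

# Template text taken from the transcription; {question} stands in for the
# red [multiple choice question] placeholder.
STABILITY_EXPLANATION_TEMPLATE = (
    "Read the given question and select the most appropriate "
    "answer by indicating the associated letter. Please output "
    "strictly following the explanation-then-answer format:\n"
    "Explanation: <detailed reasoning steps> Answer: (letter)\n"
    "Question: {question}"
)

# Captures the explanation text and a single answer letter, with or without
# parentheses. The lookahead rejects words such as "The" after "Answer:".
_RESPONSE_RE = re.compile(
    r"Explanation:\s*(?P<explanation>.*?)\s*"
    r"Answer:\s*\(?(?P<letter>[A-Za-z])(?![A-Za-z])",
    re.DOTALL,
)


def build_explanation_prompt(question: str) -> str:
    """Fill the question placeholder (hypothetical helper)."""
    return STABILITY_EXPLANATION_TEMPLATE.format(question=question)


def parse_explanation_response(text: str):
    """Return (explanation, letter), or None if the format is not followed."""
    match = _RESPONSE_RE.search(text)
    if match is None:
        return None
    return match.group("explanation"), match.group("letter").upper()
```

Returning `None` for malformed output, rather than guessing, keeps format violations visible to whatever evaluation code consumes the result.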

**Section 2: Stability Conditional Answer Prompt**

```
Stability Conditional Answer Prompt:
You are an expert analyst considering arguments from
different perspectives. Given a question and an argument,
choose the correct answer. You must answer the question
with one of the valid choices. You must provide only a
single answer.
Argument: Given the scenario in the question, [explanation]
Answer: The correct answer is
```

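* **Purpose:** Directs an AI to act as an analyst and select the correct answer from the valid choices, using a provided argument whose body is the `[explanation]` placeholder. Although the instructions mention "a question and an argument", the transcribed template contains no separate placeholder for the question.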

* **Format:** Requires a single, definitive answer following the prompt `Answer: The correct answer is`.
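Because this prompt ends mid-sentence, the model's completion is expected to begin with the chosen option. A minimal sketch of filling the template and reading the choice off the completion follows; the helper names and the default choice set `"ABCD"` are assumptions for illustration, and the template is reproduced as transcribed, without a question slot.

```python
import re

# Template text taken from the transcription; {explanation} stands in for the
# red [explanation] placeholder.
CONDITIONAL_TEMPLATE = (
    "You are an expert analyst considering arguments from "
    "different perspectives. Given a question and an argument, "
    "choose the correct answer. You must answer the question "
    "with one of the valid choices. You must provide only a "
    "single answer.\n"
    "Argument: Given the scenario in the question, {explanation}\n"
    "Answer: The correct answer is"
)


def build_conditional_prompt(explanation: str) -> str:
    """Fill the explanation placeholder (hypothetical helper)."""
    return CONDITIONAL_TEMPLATE.format(explanation=explanation.strip())


def extract_choice(completion: str, valid_choices: str = "ABCD"):
    """Read a leading option letter such as ' (B).' or ' C' from a completion.

    Returns None when the completion does not start with a single valid
    letter. A completion starting with the article "A" would be read as
    choice A, so this is only a rough heuristic.
    """
    match = re.match(r"\s*\(?([A-Za-z])(?![A-Za-z])", completion)
    if match is None:
        return None
    letter = match.group(1).upper()
    return letter if letter in valid_choices else None
```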

**Section 3: Entailment Prompt**

```
Entailment Prompt:
Premise: [multiple choice question]
Hypothesis: [explanation]
Question: Given the premise, is the hypothesis correct?
Answer (T/F):
```

* **Purpose:** Frames a logical entailment task. The "Premise" is a multiple-choice question, and the "Hypothesis" is an explanation. The AI must determine if the hypothesis is logically correct (True) or incorrect (False) based on the premise.

* **Format:** Requires a binary True/False (`T/F`) answer.
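The entailment template and its binary answer can be handled the same way. The sketch below is illustrative only: the function names are hypothetical, and accepting the spelled-out words `True` and `False` alongside `T` and `F` is an assumption about how a model might respond.

```python
# Template text taken from the transcription; {question} and {explanation}
# stand in for the red placeholders.
ENTAILMENT_TEMPLATE = (
    "Premise: {question}\n"
    "Hypothesis: {explanation}\n"
    "Question: Given the premise, is the hypothesis correct?\n"
    "Answer (T/F):"
)


def build_entailment_prompt(question: str, explanation: str) -> str:
    """Fill both placeholders (hypothetical helper)."""
    return ENTAILMENT_TEMPLATE.format(question=question, explanation=explanation)


def parse_entailment_answer(completion: str):
    """Map the first token of a completion to True, False, or None."""
    tokens = completion.strip().split(maxsplit=1)
    if not tokens:
        return None
    first = tokens[0].strip(".,:;()").upper()
    if first in {"T", "TRUE"}:
        return True
    if first in {"F", "FALSE"}:
        return False
    return None  # anything else is treated as a format violation
```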

### Key Observations

* **Consistent Structure:** All three prompts are designed for automated or standardized evaluation, demanding strict adherence to output formats.

* **Placeholder Design:** Variable inputs are clearly marked with square brackets `[]` and colored red for easy identification.

* **Task Differentiation:** Each prompt targets a different reasoning task: explanation generation, argument-based selection, and logical entailment verification.

* **Visual Layout:** The prompts are separated by vertical space, creating clear visual blocks. The text is left-aligned in a monospaced font, suggesting a code or technical document context.

### Interpretation

The image contains no empirical data or charts; it presents a set of **meta-instructions** or **evaluation templates**. These are likely used in AI safety, alignment, or benchmarking work to test a model's ability to:

1. **Provide Reasoning:** The "Stability Explanation Prompt" tests if a model can articulate its thought process before giving an answer, a key aspect of interpretability and reliability.

2. **Follow Conditional Logic:** The "Stability Conditional Answer Prompt" tests if a model can integrate new information (the argument) and make a constrained decision, assessing its consistency and instruction-following capability.

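3. **Perform Logical Deduction:** The "Entailment Prompt" is framed like a natural language inference task, with a premise, a hypothesis, and a binary judgment, although its premise is a multiple-choice question rather than a declarative statement. It tests the model's understanding of the logical relationship between the two.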

The term "Stability" in the first two prompts suggests they are part of a framework to evaluate how robust or consistent a model's reasoning is when presented with different formulations or additional context. The "Entailment Prompt" is a more standard test of logical consistency. Collectively, these templates represent a methodology for probing the reasoning capabilities and reliability of AI systems beyond simple answer accuracy.
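One possible reading of "stability", which the image itself does not confirm, is that a model's answer should stay the same when it is asked again with its own explanation supplied as the argument. The sketch below illustrates that reading only. The `stability_check` function, the `model` callable (any function mapping a prompt string to a completion string), and the result dictionary are all hypothetical.

```python
import re
from typing import Callable, Optional

# Both templates are taken from the transcription above.
EXPLANATION_PROMPT = (
    "Read the given question and select the most appropriate "
    "answer by indicating the associated letter. Please output "
    "strictly following the explanation-then-answer format:\n"
    "Explanation: <detailed reasoning steps> Answer: (letter)\n"
    "Question: {question}"
)
CONDITIONAL_PROMPT = (
    "You are an expert analyst considering arguments from "
    "different perspectives. Given a question and an argument, "
    "choose the correct answer. You must answer the question "
    "with one of the valid choices. You must provide only a "
    "single answer.\n"
    "Argument: Given the scenario in the question, {explanation}\n"
    "Answer: The correct answer is"
)


def stability_check(model: Callable[[str], str], question: str) -> dict:
    """Compare the model's first answer with its answer given its own explanation."""
    first = model(EXPLANATION_PROMPT.format(question=question))
    parsed = re.search(
        r"Explanation:\s*(.*?)\s*Answer:\s*\(?([A-Za-z])(?![A-Za-z])",
        first,
        re.DOTALL,
    )
    if parsed is None:
        # The first response did not follow the required format.
        return {"original": None, "conditional": None, "stable": None}
    explanation, original = parsed.group(1), parsed.group(2).upper()

    second = model(CONDITIONAL_PROMPT.format(explanation=explanation))
    choice = re.match(r"\s*\(?([A-Za-z])(?![A-Za-z])", second)
    conditional: Optional[str] = choice.group(1).upper() if choice else None
    stable = (conditional == original) if conditional is not None else None
    return {"original": original, "conditional": conditional, "stable": stable}
```

A `stable` value of `None` marks runs where either response could not be parsed, so they can be counted separately from genuine disagreements.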