## Horizontal Bar Chart: XAI Paper Filtering Funnel

### Overview

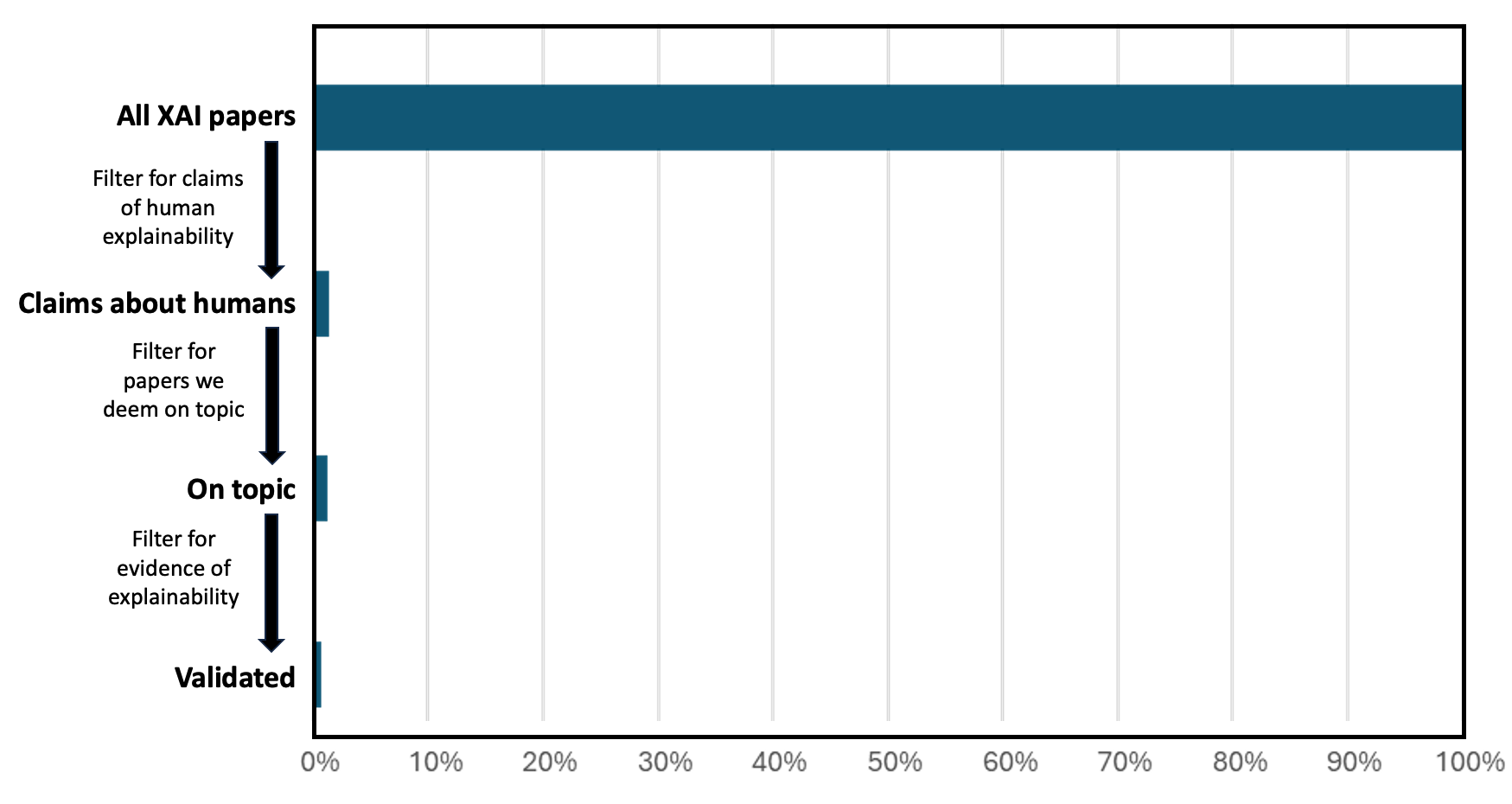

The image displays a horizontal bar chart combined with a funnel diagram on the left side. It illustrates a multi-stage filtering process applied to a corpus of "XAI papers," showing the dramatic reduction in the number of papers at each successive stage of evaluation. The chart visually emphasizes the scarcity of papers that meet rigorous criteria for human explainability.

### Components/Axes

* **Chart Type:** Horizontal bar chart with an integrated funnel/process diagram.

* **Y-Axis (Left Side - Funnel Labels):** The vertical axis lists the stages of the filtering process, from top to bottom:

    1. **All XAI papers**

    2. **Claims about humans**

    3. **On topic**

    4. **Validated**

* **Filter Descriptions (Between Labels):** Text annotations with downward-pointing arrows describe the filtering criteria applied between stages:

    * Between "All XAI papers" and "Claims about humans": **"Filter for claims of human explainability"**

    * Between "Claims about humans" and "On topic": **"Filter for papers we deem on topic"**

    * Between "On topic" and "Validated": **"Filter for evidence of explainability"**

* **X-Axis (Bottom):** A percentage scale running from **0%** to **100%**, with major grid lines and labels at every 10% increment (0%, 10%, 20%, ..., 100%).

* **Data Series:** A single data series represented by dark teal horizontal bars. There is no legend, as all bars represent the same metric (percentage of the initial corpus).

* **Spatial Layout:** The funnel labels and filter text are positioned to the left of the main chart area. The bars originate from the left edge of the chart area and extend rightward. The x-axis labels are centered below the chart.

### Detailed Analysis

The chart quantifies the attrition of papers through the defined filtering pipeline. The length of each bar represents the percentage of the original "All XAI papers" corpus that remains at that stage.

1. **All XAI papers:** The bar extends fully to the **100%** mark. This is the baseline corpus.

2. **Claims about humans:** After filtering for papers that make claims about human explainability, the bar length drops precipitously. Visually, it extends to approximately **5%** of the full scale (±1 percentage point).

3. **On topic:** After a further filter for papers deemed "on topic," the bar looks nearly identical to the previous stage, at an estimated **~5%** (±1 percentage point). This suggests the "on topic" filter removed very few additional papers from the "Claims about humans" subset.

4. **Validated:** After the final filter for "evidence of explainability," the bar shrinks further and is the shortest of the four, at approximately **1-2%** of the original corpus (±0.5 percentage points).

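**Trend Verification:** The visual trend is one of severe, stepwise reduction. The first filter causes a massive drop (from 100% to ~5%). The second filter produces little or no visible change. The third filter causes a further drop, from ~5% to ~1-2%; in relative terms this removes roughly 60-80% of the remaining papers, a smaller relative reduction than the ~95% removed by the first filter.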
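The stage-to-stage arithmetic behind this trend can be made explicit with a short Python sketch. It assumes the midpoints of the visual estimates given for each bar (5%, 5%, and 1.5% of the corpus); these are read off the chart, not values reported by its authors, and the names `STAGES` and `stage_retention` are hypothetical.

```python
# Approximate share of the original corpus remaining at each stage, in percent.
# ASSUMPTION: these are visual estimates read off the chart (midpoints where a
# range was given), NOT exact values reported by the source.
STAGES = [
    ("All XAI papers", 100.0),
    ("Claims about humans", 5.0),
    ("On topic", 5.0),
    ("Validated", 1.5),
]


def stage_retention(stages):
    """Return (label, share_of_corpus, share_of_previous_stage) per stage.

    share_of_previous_stage is the fraction of the previous stage's papers
    that survive this stage's filter; it is None for the baseline stage.
    """
    rows = []
    prev = None
    for label, share in stages:
        kept = None if prev is None else share / prev
        rows.append((label, share, kept))
        prev = share
    return rows


if __name__ == "__main__":
    for label, share, kept in stage_retention(STAGES):
        if kept is None:
            print(f"{label:<20} {share:5.1f}% of corpus (baseline)")
        else:
            print(f"{label:<20} {share:5.1f}% of corpus, "
                  f"{kept:.0%} of previous stage kept")
```

With these assumed midpoints, the first filter keeps about 5% of the corpus, the "on topic" filter keeps essentially all of its input, and the evidence filter keeps about 30% of its input, which is why the last step looks small in absolute terms but is still substantial relative to the papers that reach it.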

### Key Observations

* **Massive Initial Attrition:** The most significant finding is that only a tiny fraction (≈5%) of all XAI papers even make claims about human explainability.

* **Stability in the Middle Stage:** The "On topic" filter does not substantially reduce the number of papers further, indicating that most papers making claims about human explainability are deemed on topic by the chart's authors.

* **Final Validation is Rare:** The process of finding papers with validated evidence of explainability is highly selective, yielding only about 1-2% of the original literature.

* **Visual Emphasis:** The funnel diagram on the left, with its bold labels and arrows, explicitly narrates the logical flow of the filtering process, which the bar chart then quantifies.

### Interpretation

This visualization presents a critical, investigative look at the state of research in Explainable AI (XAI). It suggests a significant gap between the volume of published work and the subset that provides robust, validated evidence for its claims about human explainability.

The data implies that the field may be saturated with papers that propose methods, but only a small share make claims about human explainability, and a vanishingly small proportion provide the rigorous evaluation necessary to validate those claims. The near-plateau between "Claims about humans" and "On topic" suggests that relevance is not the primary bottleneck; rather, the largest losses occur at (1) the initial formulation of human-centric explainability claims and, more critically, (2) the subsequent generation of empirical evidence to support them.

This chart likely serves as a call to action for the research community to prioritize methodological rigor, empirical validation, and a focus on human-centric outcomes over the mere proliferation of new techniques. It highlights a potential credibility gap in the XAI literature.