## Bar Chart: Accuracy Comparison on Video Datasets

### Overview

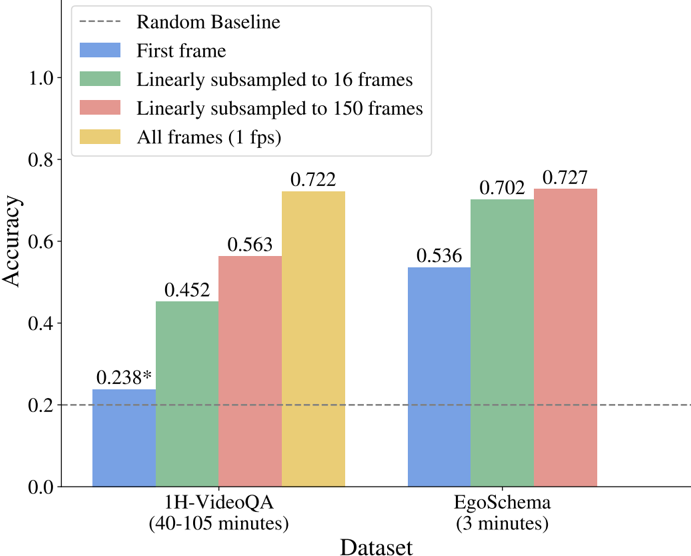

The image is a bar chart comparing the accuracy of different video frame sampling strategies on two datasets: 1H-VideoQA and EgoSchema. The chart shows the accuracy achieved using the first frame, linearly subsampled frames (16 and 150), and all frames (1 fps). A random baseline is also indicated.

### Components/Axes

* **X-axis:** Dataset (1H-VideoQA (40-105 minutes), EgoSchema (3 minutes))

* **Y-axis:** Accuracy (scale from 0.0 to 1.0, with increments of 0.2)

* **Legend (top-left):**

    * Random Baseline (dashed gray line)

    * First frame (blue)

    * Linearly subsampled to 16 frames (green)

    * Linearly subsampled to 150 frames (red)

    * All frames (1 fps) (yellow)

### Detailed Analysis

**1H-VideoQA Dataset:**

* **First frame (blue):** Accuracy of 0.238. The value is marked with an asterisk.

* **Linearly subsampled to 16 frames (green):** Accuracy of 0.452.

* **Linearly subsampled to 150 frames (red):** Accuracy of 0.563.

* **All frames (1 fps) (yellow):** Accuracy of 0.722.

**EgoSchema Dataset:**

* **First frame (blue):** Accuracy of 0.536.

* **Linearly subsampled to 16 frames (green):** Accuracy of 0.702.

* **Linearly subsampled to 150 frames (red):** Accuracy of 0.727.

* **All frames (1 fps) (yellow):** No bar is present for this category.

**Random Baseline:**

* A dashed gray line is present at the 0.2 accuracy level.
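To make the comparison against the random baseline concrete, the values listed above can be tabulated and the margin over chance computed for each bar. This is a minimal sketch: the dictionary keys and the helper name `margin_over_baseline` are illustrative choices, not names from the chart, and the numbers are transcribed from the values above.

```python
# Accuracy values transcribed from the chart description above.
# Key names are illustrative labels, not identifiers from the source.
RANDOM_BASELINE = 0.2

accuracy = {
    "1H-VideoQA": {
        "first_frame": 0.238,
        "16_frames": 0.452,
        "150_frames": 0.563,
        "all_frames_1fps": 0.722,
    },
    "EgoSchema": {
        "first_frame": 0.536,
        "16_frames": 0.702,
        "150_frames": 0.727,
        # No "all frames (1 fps)" bar is shown for EgoSchema.
    },
}


def margin_over_baseline(scores, baseline=RANDOM_BASELINE):
    """Return accuracy minus the random baseline for each strategy."""
    return {name: round(value - baseline, 3) for name, value in scores.items()}


margins = {dataset: margin_over_baseline(scores) for dataset, scores in accuracy.items()}

for dataset, per_strategy in margins.items():
    for strategy, margin in per_strategy.items():
        print(f"{dataset:12s} {strategy:16s} +{margin:.3f}")
```

On these numbers, the first-frame bar for 1H-VideoQA sits only 0.038 above the baseline, while every EgoSchema bar is at least 0.336 above it.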

### Key Observations

* On 1H-VideoQA, using all frames (1 fps) yields the highest accuracy (0.722). EgoSchema has no all-frames bar, so its best reported result is linear subsampling to 150 frames (0.727).

* Using only the first frame gives the lowest accuracy on both datasets. On 1H-VideoQA it is barely above the random baseline (0.238 vs. 0.2), whereas on EgoSchema it reaches 0.536.

* Accuracy increases with the number of frames on both datasets. The gain from 16 to 150 frames is much smaller on EgoSchema (0.702 to 0.727) than on 1H-VideoQA (0.452 to 0.563).

* EgoSchema shows higher accuracy than 1H-VideoQA for each of the three sampling methods reported on both datasets (first frame, 16 frames, 150 frames).
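The observations above can be checked with a little arithmetic on the reported values. The sketch below computes the accuracy gain at each step up in frame count; the list layout and the helper name `step_gains` are assumptions made for illustration, and the figures are those listed under Detailed Analysis.

```python
# Reported accuracies, ordered from fewest to most frames.
# EgoSchema has no "all frames (1 fps)" entry in the chart.
results = {
    "1H-VideoQA": [
        ("first frame", 0.238),
        ("16 frames", 0.452),
        ("150 frames", 0.563),
        ("all frames (1 fps)", 0.722),
    ],
    "EgoSchema": [
        ("first frame", 0.536),
        ("16 frames", 0.702),
        ("150 frames", 0.727),
    ],
}


def step_gains(ordered_scores):
    """Return (from_label, to_label, gain) for each consecutive pair."""
    gains = []
    for (prev_label, prev_acc), (next_label, next_acc) in zip(
        ordered_scores, ordered_scores[1:]
    ):
        gains.append((prev_label, next_label, round(next_acc - prev_acc, 3)))
    return gains


gains = {dataset: step_gains(scores) for dataset, scores in results.items()}

for dataset, steps in gains.items():
    for prev_label, next_label, gain in steps:
        print(f"{dataset}: {prev_label} -> {next_label}: +{gain:.3f}")
```

Every step is positive, which matches the trend described in the list. The smallest step is 16 to 150 frames on EgoSchema (+0.025) and the largest is first frame to 16 frames on 1H-VideoQA (+0.214).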

### Interpretation

The bar chart shows how the choice of video frame sampling strategy affects question-answering accuracy. On both datasets, using more frames leads to better performance, but the size of the benefit depends on the dataset: on EgoSchema the gain from 16 to 150 frames is small, while on 1H-VideoQA accuracy keeps rising substantially up to all frames at 1 fps. The higher accuracy on EgoSchema could be attributed to its shorter videos (3 minutes) compared to 1H-VideoQA (40-105 minutes), which may make it easier to extract relevant information from fewer frames. The random baseline at 0.2 provides a reference point for how far each sampling method improves over chance. The chart does not explain the asterisk next to the "First frame" accuracy for 1H-VideoQA. It may mark a footnote or a specific condition attached to that value, and interpreting it requires further context.