TECHNICAL ASSET FINGERPRINT

f5ff5c02a662eab5114b2b59

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Comparison of Agent Exploration and Path Generation Methods

### Overview

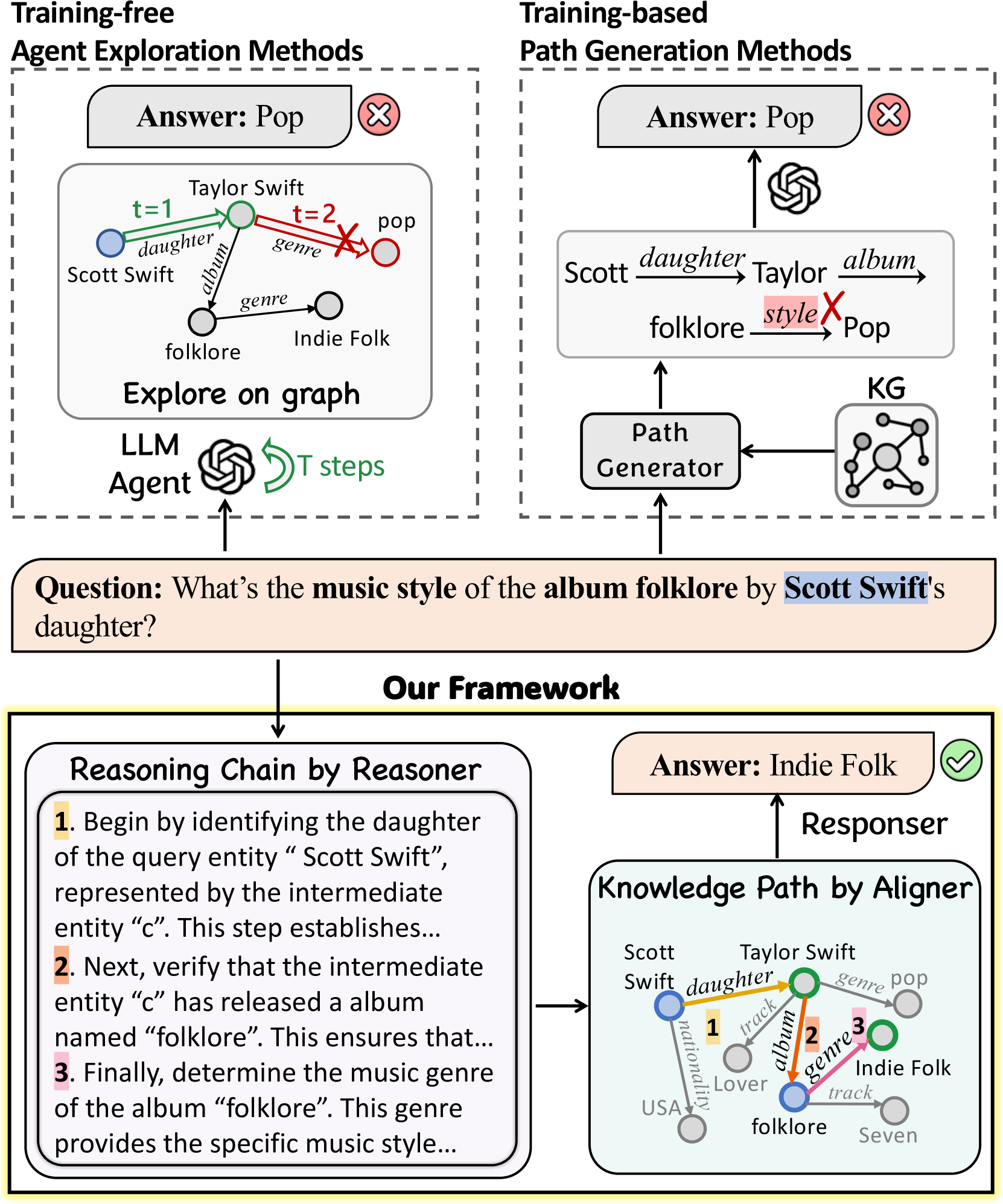

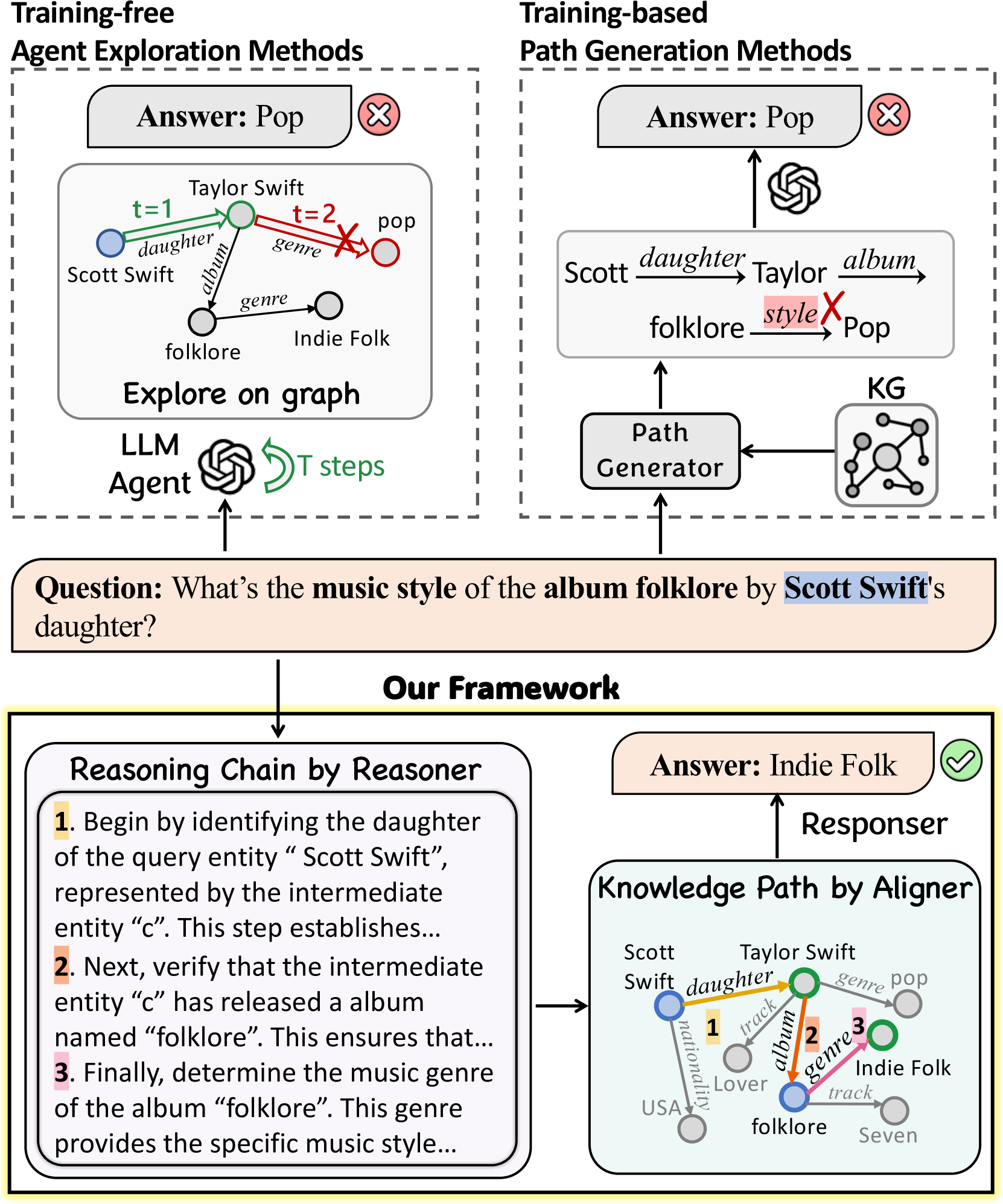

The image is a comparative diagram of two existing approaches to a multi-hop question about music style: "What's the music style of the album folklore by Scott Swift's daughter?" The left side depicts "Training-free Agent Exploration Methods," and the right side shows "Training-based Path Generation Methods." Below them, "Our Framework" combines a "Reasoning Chain by Reasoner" with a "Knowledge Path by Aligner" and answers the question correctly.

### Components/Axes

**Top Section:**

* **Titles:** "Training-free Agent Exploration Methods" (left), "Training-based Path Generation Methods" (right).

* **Answer Boxes:** Each method has an "Answer" box. Both state "Pop" and carry a red "X" marking the answer as incorrect.

* **Question:** "What's the music style of the album folklore by Scott Swift's daughter?"

**Left Side (Training-free Agent Exploration):**

* **Graph:** A simple graph with nodes representing entities (Scott Swift, Taylor Swift, Pop, Indie Folk, folklore) and edges representing relationships (daughter, album, genre).

* Scott Swift (blue node) is connected to Taylor Swift (green node) via "daughter" (t=1, green arrow).

* Taylor Swift (green node) is connected to Pop (red node) via "genre" (t=2, red arrow with an X).

* Taylor Swift (green node) is connected to folklore (grey node) via "album" (black arrow).

* folklore (grey node) is connected to Indie Folk (grey node) via "genre" (black arrow).

* **LLM Agent:** An icon representing a Large Language Model Agent.

* **T steps:** A green arrow indicating the agent takes T steps.

* **Explore on graph:** Label below the graph.
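The failure on the left can be reproduced with a toy Python sketch. It assumes a hypothetical agent policy, not the one used by any specific method. At each step, the agent answers as soon as it sees an edge of the answer type (`genre`); otherwise, it follows an edge whose relation is mentioned in the question. The triples are transcribed from the graph above. `greedy_explore` and its parameters are illustrative names.

```python
# Toy KG as (head, relation, tail) triples, transcribed from the left-hand graph.
TRIPLES = [
    ("Scott Swift", "daughter", "Taylor Swift"),
    ("Taylor Swift", "genre", "Pop"),
    ("Taylor Swift", "album", "folklore"),
    ("folklore", "genre", "Indie Folk"),
]


def neighbors(entity):
    """Return the outgoing (relation, tail) pairs of an entity."""
    return [(r, t) for h, r, t in TRIPLES if h == entity]


def greedy_explore(start, question_relations, answer_relation, max_steps=5):
    """Hypothetical myopic agent: stop at the first edge of the answer type."""
    current, trace = start, []
    for _ in range(max_steps):
        edges = neighbors(current)
        # Answer immediately if any edge has the answer relation (e.g. "genre").
        for rel, tail in edges:
            if rel == answer_relation:
                trace.append((current, rel, tail))
                return tail, trace
        # Otherwise take the first edge whose relation appears in the question.
        step = next(((r, t) for r, t in edges if r in question_relations), None)
        if step is None:
            return None, trace
        trace.append((current, *step))
        current = step[1]
    return None, trace


answer, trace = greedy_explore("Scott Swift", {"daughter", "album"}, "genre")
print(answer)  # Pop
for t, hop in enumerate(trace, start=1):
    print(f"t={t}: {hop}")
```

Taylor Swift already has a `genre` edge, so this agent stops at t=2 and never reaches `folklore`. That matches the red arrow in the figure.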

**Right Side (Training-based Path Generation):**

* **Graph:** A graph with nodes representing entities (Scott, Taylor, folklore, Pop) and edges representing relationships (daughter, album, style).

* Scott is connected to Taylor via "daughter".

* Taylor is connected to folklore via "album".

* folklore is connected to Pop via "style" (red X over style).

* **KG:** An icon representing a Knowledge Graph.

* **Path Generator:** A box labeled "Path Generator".

* **Arrow Flow:** Arrows indicate the flow of information from the question to the Path Generator, from the KG to the Path Generator, from the Path Generator to the graph, and from the graph to the "Answer" box.
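A simple guard against the red-X hop on the right is to check every hop of a generated path against the KG before passing the path on. The sketch below makes two assumptions. First, it uses full entity names, although the figure abbreviates them to "Scott" and "Taylor". Second, it relies on hypothetical helpers, `is_connected` and `unsupported_hops`. It illustrates the check and is not a component of the methods shown.

```python
# KG edges transcribed from the figure, as (head, relation, tail) triples.
KG = {
    ("Scott Swift", "daughter", "Taylor Swift"),
    ("Taylor Swift", "album", "folklore"),
    ("Taylor Swift", "genre", "Pop"),
    ("folklore", "genre", "Indie Folk"),
}

# The path shown on the right, with entity names expanded (an assumption).
generated = [
    ("Scott Swift", "daughter", "Taylor Swift"),
    ("Taylor Swift", "album", "folklore"),
    ("folklore", "style", "Pop"),
]


def is_connected(path):
    """Each hop must start at the entity where the previous hop ended."""
    return all(prev[2] == nxt[0] for prev, nxt in zip(path, path[1:]))


def unsupported_hops(path, kg):
    """Return the hops of a generated path that are not triples in the KG."""
    return [hop for hop in path if hop not in kg]


print(is_connected(generated))          # True
print(unsupported_hops(generated, KG))  # [('folklore', 'style', 'Pop')]
```

The path is a well-formed chain, so a connectivity check alone would accept it. Only the membership check catches the fabricated `style` hop.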

**Bottom Section (Our Framework):**

* **Title:** "Our Framework"

* **Left Box (Reasoning Chain by Reasoner):**

* **Title:** "Reasoning Chain by Reasoner"

* **Steps:**

1. "Begin by identifying the daughter of the query entity 'Scott Swift', represented by the intermediate entity 'c'. This step establishes..."

2. "Next, verify that the intermediate entity 'c' has released a album named 'folklore'. This ensures that..."

3. "Finally, determine the music genre of the album 'folklore'. This genre provides the specific music style..."

* **Right Box (Knowledge Path by Aligner):**

* **Title:** "Knowledge Path by Aligner"

* **Graph:** A graph with nodes representing entities (Scott Swift, Taylor Swift, Pop, Indie Folk, folklore, USA, Lover, Seven) and edges representing relationships (daughter, album, genre, nationality, track).

* Scott Swift (blue node) is connected to Taylor Swift (green node) via "daughter" (orange arrow labeled "1").

* folklore (blue node) is connected to Indie Folk (green node) via "genre" (pink arrow labeled "3").

* Taylor Swift (green node) is connected to folklore (blue node) via "album" (orange arrow labeled "2").

* Scott Swift (blue node) is connected to USA (grey node) via "nationality".

* Taylor Swift (green node) is connected to Lover (grey node) via "track".

* folklore (blue node) is connected to Seven (grey node) via "track".

* Taylor Swift (green node) is connected to Pop (green node) via "genre".

* **Responser:** Label above the "Answer" box.

* **Answer Box:** "Answer: Indie Folk" with a green checkmark indicating the correct answer.
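The reasoning chain above names an intermediate entity "c", which suggests reading the chain as a conjunctive query over triples. The Python sketch below assumes that reading:

* Each step becomes a triple pattern with `?`-prefixed variables.
* A small backtracking matcher grounds the patterns in the edges of the "Knowledge Path by Aligner" graph.
* The `genre` edge to Indie Folk is attached to folklore, as step 3 of the reasoning chain implies.

`PLAN` and `solve` are hypothetical names. The actual Aligner may ground steps quite differently (for example, with a learned model).

```python
# Edges of the "Knowledge Path by Aligner" graph as (head, relation, tail) triples.
TRIPLES = [
    ("Scott Swift", "daughter", "Taylor Swift"),
    ("Scott Swift", "nationality", "USA"),
    ("Taylor Swift", "album", "folklore"),
    ("Taylor Swift", "genre", "Pop"),
    ("Taylor Swift", "track", "Lover"),
    ("folklore", "genre", "Indie Folk"),
    ("folklore", "track", "Seven"),
]

# The three reasoning steps as triple patterns; "?"-prefixed terms are variables.
PLAN = [
    ("Scott Swift", "daughter", "?c"),  # step 1: daughter of the query entity
    ("?c", "album", "folklore"),        # step 2: c released the album folklore
    ("folklore", "genre", "?answer"),   # step 3: genre of folklore
]


def is_var(term):
    return term.startswith("?")


def match(pattern, triple, binding):
    """Extend binding so that pattern equals triple, or return None."""
    new = dict(binding)
    for p, v in zip(pattern, triple):
        if is_var(p):
            # Bind an unbound variable, or reject a conflicting binding.
            if new.setdefault(p, v) != v:
                return None
        elif p != v:
            return None
    return new


def solve(plan, triples, binding=None):
    """Yield every variable binding that grounds all steps of the plan in the KG."""
    binding = binding or {}
    if not plan:
        yield binding
        return
    for triple in triples:
        extended = match(plan[0], triple, binding)
        if extended is not None:
            yield from solve(plan[1:], triples, extended)


solutions = list(solve(PLAN, TRIPLES))
print(solutions)  # [{'?c': 'Taylor Swift', '?answer': 'Indie Folk'}]
```

Under this reading, the distractor edges (nationality, track, Taylor Swift's own genre) never match a pattern, so they never enter the answer.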

### Detailed Analysis

* **Incorrect Answers:** Both the "Training-free" and "Training-based" methods initially provide the incorrect answer "Pop."

* **Correct Answer:** "Our Framework" correctly identifies the answer as "Indie Folk."

* **Reasoning Chain:** The "Reasoning Chain by Reasoner" outlines the steps taken to arrive at the correct answer.

* **Knowledge Path:** The "Knowledge Path by Aligner" visually represents the connections between entities that lead to the correct answer.

### Key Observations

* The diagram contrasts two kinds of methods. In training-free methods, an LLM agent explores the graph step by step. In training-based methods, a trained generator produces a complete path.

* The "Our Framework" section answers the same question correctly where both baselines fail.

* The use of color and arrows helps to visually represent the flow of information and relationships between entities.

### Interpretation

The diagram argues that the proposed framework handles this multi-hop question better than either exploration or path generation alone. Both baselines output "Pop". In the graph, Pop is Taylor Swift's general genre, not the genre of the album in question. "Our Framework" pairs an explicit reasoning chain with a knowledge path aligned to the graph. It grounds each step of that chain in turn (the daughter, then the album, then the album's genre) and returns the correct answer, "Indie Folk." This suggests that combining structured reasoning with knowledge graph traversal is more reliable for questions whose answer depends on a specific intermediate entity, here the album folklore.

EXPERT: gemma-3-27b-it-free VERSION 2

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Knowledge Graph Reasoning Framework Comparison

### Overview

The image is a comparative diagram of two approaches to answering a knowledge-based question: "What's the music style of the album folklore by Scott Swift's daughter?" The two approaches are "Training-free Agent Exploration Methods" and "Training-based Path Generation Methods". They are contrasted with "Our Framework", which combines elements of both. Each approach is visualized with knowledge graphs and flow diagrams.

### Components/Axes

The diagram is divided into three main sections:

1. **Training-free Agent Exploration Methods** (Top-Left)

2. **Training-based Path Generation Methods** (Top-Right)

3. **Our Framework** (Bottom) - further divided into Reasoning Chain by Reasoner and Knowledge Path by Aligner.

Each section includes:

* A knowledge graph representing relationships between entities (Scott Swift, Taylor Swift, folklore, pop, Indie Folk).

* A flow diagram illustrating the process of arriving at an answer.

* A "Question" box.

* An "Answer" box.

The knowledge graphs use nodes to represent entities and edges to represent relationships. The edges are labeled with relationship types (e.g., "daughter", "album", "genre", "style").

### Detailed Analysis or Content Details

**1. Training-free Agent Exploration Methods (Top-Left)**

* **Question:** "What's the music style of the album folklore by Scott Swift's daughter?"

* **Answer:** "Pop" (marked with a red 'X')

* **Knowledge Graph:**

* Nodes: Scott Swift, Taylor Swift, folklore, pop, Indie Folk.

* Edges:

* Scott Swift -> daughter -> Taylor Swift

* Taylor Swift -> album -> folklore

* folklore -> genre -> Indie Folk

* Taylor Swift -> genre -> pop (marked with a red 'X')

* **Flow Diagram:**

* LLM Agent -> Explore on graph -> T steps

* Time steps are indicated: t=1 and t=2.

* At t=1, the agent moves Scott Swift -> daughter -> Taylor Swift.

* At t=2, it follows Taylor Swift -> genre -> pop, which yields the incorrect answer.

**2. Training-based Path Generation Methods (Top-Right)**

* **Question:** "What's the music style of the album folklore by Scott Swift's daughter?"

* **Answer:** "Pop" (marked with a red 'X')

* **Knowledge Graph:**

* Nodes: Scott Swift, Taylor Swift, folklore, Pop ("album" and "style" are edge labels).

* Edges:

* Scott Swift -> daughter -> Taylor Swift

* Taylor Swift -> album -> folklore

* folklore -> style -> Pop (incorrect)

* **Flow Diagram:**

* Path Generator -> KG (Knowledge Graph)

* A circular arrow indicates iterative path generation.

**3. Our Framework (Bottom)**

* **Reasoning Chain by Reasoner:**

* Steps:

1. "Begin by identifying the daughter of the query entity “Scott Swift”, represented by the intermediate entity “c”. This step establishes…"

2. "Next, verify that the intermediate entity “c” has released an album named “folklore”. This ensures that…"

3. "Finally, determine the music genre of the album “folklore”. This genre provides the specific music style…"

* **Knowledge Path by Aligner:**

* **Answer:** "Indie Folk"

* **Knowledge Graph:**

* Nodes: Scott Swift, Taylor Swift, folklore, Indie Folk, Seven, USA, Lover.

* Edges:

* Scott Swift -> daughter -> Taylor Swift

* Taylor Swift -> album -> folklore

* folklore -> track -> Seven

* folklore -> genre -> Indie Folk

* Taylor Swift -> track -> Lover

* Taylor Swift -> location -> USA

* Numbered edges: 1, 2, and 3, marking the daughter, album, and genre hops.

### Key Observations

* Both the "Training-free" and "Training-based" methods initially arrive at the incorrect answer "Pop".

* "Our Framework" correctly identifies "Indie Folk" as the music style.

* The "Reasoning Chain" section outlines a logical, step-by-step approach to answering the question.

* The "Knowledge Path" section demonstrates a more complete knowledge graph with additional relationships.

* The red 'X' consistently marks incorrect paths or answers.

* "Our Framework" uses a more detailed knowledge graph that also includes tracks and locations.

### Interpretation

The diagram demonstrates the limitations of simpler knowledge graph traversal methods (Training-free and Training-based) and highlights the benefits of a framework that incorporates reasoning and alignment. The incorrect answers from the first two methods suggest a weakness: following locally plausible edges can lead to errors when several entities carry relations of the same kind, such as a "genre" edge on both the artist and the album. "Our Framework" addresses this in two ways. A reasoning chain guides the exploration, and an alignment process grounds each step of that chain in the graph. The additional nodes in the "Knowledge Path" graph (tracks, location) are not needed for the answer. Instead, they show that the aligner must select the relevant path from a richer neighborhood. The diagram illustrates a progression from naive knowledge graph querying to a more reliable reasoning system. The red 'X' marks make the errors of the initial methods, and hence the improvement achieved by "Our Framework", easy to see.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparison of Knowledge Graph Question Answering Methods

### Overview

This diagram illustrates and compares three different approaches for answering a complex, multi-hop question using a knowledge graph (KG). The central question is: **"What's the music style of the album folklore by Scott Swift's daughter?"** The diagram contrasts two existing methods (Training-free and Training-based) that produce incorrect answers with a proposed new framework ("Our Framework") that arrives at the correct answer. The visual narrative emphasizes the reasoning process and knowledge path alignment required for accurate multi-hop reasoning.

### Components/Axes

The diagram is divided into three primary sections, each enclosed in a dashed or solid border:

1. **Top-Left Section: "Training-free Agent Exploration Methods"**

* **Header:** "Training-free Agent Exploration Methods"

* **Main Element:** A box labeled "Explore on graph" containing a small knowledge graph snippet.

* **Graph Nodes & Edges:**

* Nodes: `Scott Swift` (blue), `Taylor Swift` (green), `folklore` (gray), `pop` (red), `Indie Folk` (gray).

* Edges: `daughter` (from Scott to Taylor), `album` (from Taylor to folklore), `genre` (from Taylor to pop, and from folklore to Indie Folk).

* **Process Indicators:**

* `t=1` (green arrow) points from Scott Swift to Taylor Swift.

* `t=2` (red arrow with an 'X') points from Taylor Swift to pop.

* **Output:** A box labeled "Answer: Pop" with a red 'X' icon.

* **Agent Icon:** An icon labeled "LLM Agent" with a circular arrow labeled "T steps".

2. **Top-Right Section: "Training-based Path Generation Methods"**

* **Header:** "Training-based Path Generation Methods"

* **Main Element:** A box showing a generated path: `Scott --daughter--> Taylor --album--> folklore --style--> Pop`, with a red 'X' over `style`.

* **Process Flow:**

* A "Path Generator" box receives input from a "KG" (Knowledge Graph) icon.

* The Path Generator outputs the path to an LLM icon (OpenAI logo).

* The LLM outputs the answer.

* **Output:** A box labeled "Answer: Pop" with a red 'X' icon.

3. **Bottom Section: "Our Framework"**

* **Header:** "Our Framework" (in a yellow-highlighted box).

* **Input Question:** A large box contains the full question text: "Question: What's the **music style** of the **album folklore** by **Scott Swift's** daughter?"

* **Two Parallel Components:**

* **Left: "Reasoning Chain by Reasoner"**

* A numbered list outlining a logical, step-by-step reasoning process:

1. "Begin by identifying the daughter of the query entity 'Scott Swift', represented by the intermediate entity 'c'. This step establishes..."

2. "Next, verify that the intermediate entity 'c' has released a album named 'folklore'. This ensures that..."

3. "Finally, determine the music genre of the album 'folklore'. This genre provides the specific music style..."

* **Right: "Knowledge Path by Aligner"**

* A more detailed knowledge graph snippet showing the correct path.

* **Nodes:** `Scott Swift` (blue), `Taylor Swift` (green), `folklore` (blue), `Lover` (gray), `Seven` (gray), `USA` (gray), `pop` (gray), `Indie Folk` (green).

* **Edges & Path:** A highlighted path with numbered steps:

1. `daughter` (orange arrow from Scott to Taylor).

2. `album` (orange arrow from Taylor to folklore).

3. `genre` (orange arrow from folklore to Indie Folk).

* Other edges shown: `nationality` (Scott to USA), `track` (Taylor to Lover, folklore to Seven), `genre` (Taylor to pop).

* **Output:** An arrow from the "Knowledge Path" box points to a "Responser" label, which outputs a box labeled "Answer: Indie Folk" with a green checkmark icon.

### Detailed Analysis

The diagram presents a comparative analysis of methodologies for the same task.

* **Training-free Agent Exploration:** This method uses an LLM agent to explore the knowledge graph step-by-step (`t=1`, `t=2`). The visual shows it correctly identifies Taylor Swift as the daughter (`t=1`, green arrow) but then makes an incorrect jump from Taylor Swift directly to the genre "pop" (`t=2`, red arrow with 'X'), missing the crucial intermediate step of identifying the specific album "folklore". This leads to the wrong answer, "Pop".

* **Training-based Path Generation:** This method uses a dedicated "Path Generator" module to create a reasoning path from the KG. The generated path shown is `Scott -> daughter -> Taylor -> album -> folklore -> style -> Pop`. The error is embedded in the final edge: it incorrectly links the album "folklore" to the genre "Pop" (marked with a red 'X'). This flawed path is fed to an LLM, which outputs the same incorrect answer, "Pop".

* **Our Framework:** This proposed solution decouples the process into two specialized components:

1. **Reasoner:** Generates a high-level, logical reasoning chain (the three-step list) that correctly structures the problem: find the daughter, find her album, find that album's genre.

2. **Aligner:** Grounds this reasoning in the actual knowledge graph, constructing the precise, correct path: `Scott Swift --daughter--> Taylor Swift --album--> folklore --genre--> Indie Folk`. The graph also shows related but irrelevant information (e.g., Taylor's other album "Lover", the track "Seven", her nationality "USA", her general genre "pop") which the framework correctly ignores.

The aligned path is then passed to a "Responser" to generate the final, correct answer: "Indie Folk".

### Key Observations

1. **Error Source:** Both failing methods end at Taylor Swift's predominant genre (Pop) instead of the genre of the specific album in question ("folklore"). The training-free agent jumps from Taylor Swift directly to "pop". The path generator does reach "folklore" but then attaches "Pop" to it through an unsupported "style" edge.

2. **Visual Coding:** Colors are used meaningfully:
    * Green marks correct intermediate and answer entities (Taylor Swift, Indie Folk).
    * Red marks errors (the wrong "pop" node and the incorrect edge leading to it in the top-left panel).
    * Blue marks the entities named in the question (Scott Swift and, in the bottom panel, folklore).

3. **Complexity of the KG:** The "Knowledge Path by Aligner" graph reveals the complexity of the underlying data, showing multiple possible paths and relationships. The framework's success lies in selecting the single correct path relevant to the specific question.

4. **Process vs. Output:** The diagram emphasizes that the *process* of reasoning and path construction is as important as the final answer. The "Our Framework" section dedicates equal space to the reasoning chain and the knowledge path.
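Point 3 can be made concrete. The sketch below enumerates every relation path of up to three hops from `Scott Swift` in the aligner's graph, then filters the paths by the question's constraints. It is a minimal illustration under two assumptions: the edges are those listed in the "Knowledge Path by Aligner" description, and the question maps to the relation sequence `daughter, album, genre`. `enumerate_paths` and the filter are illustrative, not the framework's actual search procedure.

```python
from collections import defaultdict

# Edges of the "Knowledge Path by Aligner" graph as (head, relation, tail) triples.
TRIPLES = [
    ("Scott Swift", "daughter", "Taylor Swift"),
    ("Scott Swift", "nationality", "USA"),
    ("Taylor Swift", "album", "folklore"),
    ("Taylor Swift", "genre", "Pop"),
    ("Taylor Swift", "track", "Lover"),
    ("folklore", "genre", "Indie Folk"),
    ("folklore", "track", "Seven"),
]


def build_index(triples):
    """Map each head entity to its outgoing (relation, tail) pairs."""
    index = defaultdict(list)
    for h, r, t in triples:
        index[h].append((r, t))
    return index


def enumerate_paths(index, start, max_hops):
    """Depth-first enumeration of every path of 1..max_hops hops from start."""
    paths = []

    def dfs(node, path):
        if path:
            paths.append(list(path))
        if len(path) == max_hops:
            return
        for rel, tail in index.get(node, []):
            path.append((node, rel, tail))
            dfs(tail, path)
            path.pop()

    dfs(start, [])
    return paths


candidates = enumerate_paths(build_index(TRIPLES), "Scott Swift", max_hops=3)
genre_paths = [p for p in candidates if p[-1][1] == "genre"]
# Question constraints: relations daughter -> album -> genre, with album = folklore.
matching = [
    p for p in genre_paths
    if [r for _, r, _ in p] == ["daughter", "album", "genre"] and p[1][2] == "folklore"
]

print(len(candidates), len(genre_paths), len(matching))  # 7 2 1
print(matching[0][-1][2])  # Indie Folk
```

Two of the seven candidate paths end in a `genre` edge. Only the one that passes through `folklore` survives the filter, and that is the distinction both baselines miss.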

### Interpretation

This diagram argues for a modular, neuro-symbolic approach to complex question answering over knowledge graphs. It demonstrates that:

* **Pure LLM exploration** (Training-free) can be myopic, taking locally correct steps but missing the global logical structure.

* **Pure path generation** (Training-based) can be brittle, generating plausible but factually incorrect relationships if not properly constrained.

* The proposed **decoupled framework** succeeds by first establishing a correct, high-level reasoning plan (the "what to do") and then grounding each step in the factual knowledge graph (the "how to do it"). This separation of concerns between logical reasoning and factual retrieval/alignment appears to be the key innovation for handling multi-hop queries where intermediate steps are critical. The diagram serves as a motivating example for why this integrated yet modular design should yield more accurate knowledge-grounded reasoning.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Agent Exploration Methods for Knowledge Graph Query Resolution

### Overview

The diagram compares three approaches to resolving a knowledge graph (KG) query: "What's the music style of the album folklore by Scott Swift's daughter?" It contrasts training-free agent exploration methods, training-based path generation methods, and a proposed framework combining reasoning chains with knowledge path alignment. The correct answer ("Indie Folk") is highlighted through structured reasoning and KG alignment.

---

### Components/Axes

1. **Left Panel (Training-free Agent Exploration Methods)**:

- **LLM Agent**: Explores a graph with nodes (entities) and edges (relationships).

- **Graph Structure**:

- Nodes: Taylor Swift, Scott Swift, folklore, Indie Folk, pop.

- Edges:

- `daughter` (Scott Swift → Taylor Swift, t=1)

- `genre` (Taylor Swift → pop, t=2)

- `album` (Taylor Swift → folklore)

- `genre` (folklore → Indie Folk)

- **Incorrect Answer**: "Pop" (marked with red X).

- **Process**: "Explore on graph" with 2 steps (t=1, t=2).

2. **Right Panel (Training-based Path Generation Methods)**:

- **Path Generator**: Constructs a path: Scott → daughter → Taylor → album → folklore → style → Pop.

- **KG (Knowledge Graph)**: Visualized as a network with nodes (entities) and edges (relationships).

- **Incorrect Answer**: "Pop" (marked with red X).

- **Components**: Path Generator → KG.

3. **Bottom Panel (Proposed Framework)**:

- **Question**: "What's the music style of the album folklore by Scott Swift's daughter?"

- **Reasoning Chain by Reasoner**:

1. Identify daughter of "Scott Swift" (intermediate entity "c").

2. Verify "c" released album "folklore".

3. Determine genre of "folklore" (music style).

- **Knowledge Path by Aligner**:

- Visualizes correct path: Scott → daughter → Taylor → album → folklore → genre → Indie Folk.

- **Correct Answer**: "Indie Folk" (marked with green checkmark).

---

### Detailed Analysis

#### Training-free Methods

- **Graph Exploration**:

- The LLM Agent moves from Scott Swift to Taylor Swift at t=1 and then incorrectly follows "genre: pop" at t=2, ignoring the album "folklore" and its actual genre.

- Spatial grounding: The red X is positioned near the "pop" node, indicating the error.

#### Training-based Methods

- **Path Generation**:

- The Path Generator produces a path whose final "style" edge leads to "Pop" instead of "Indie Folk."

- The generated path therefore contains a direct edge from "folklore" to "Pop," which is factually wrong.

#### Proposed Framework

- **Reasoning Chain**:

- Step 1: Identifies Scott Swift's daughter as the intermediate entity "c" (Taylor Swift in the aligned path).

- Step 2: Verifies that "c" released "folklore."

- Step 3: Asks for the genre of "folklore." The aligned knowledge path supplies the answer, "Indie Folk."

- **Knowledge Path Alignment**:

- The Aligner grounds each step in existing KG edges. It follows the "genre" edge from "folklore" (Indie Folk) rather than the one from Taylor Swift (pop).

- The green checkmark emphasizes the validated answer.

---

### Key Observations

1. **Incorrect Assumptions in Existing Methods**:

- Both training-free and training-based methods fail due to:

- Overlooking the album "folklore" (Training-free).

- Generating a "style" edge from "folklore" to "Pop" that the KG does not contain (Training-based).

2. **Framework Advantages**:

- Structured reasoning ensures intermediate steps are validated.

- Knowledge path alignment keeps the answer tied to relations that actually exist in the KG.

---

### Interpretation

The diagram illustrates the limitations of unguided exploration (Training-free) and trained path generation (Training-based) in KG query resolution. The proposed framework addresses these issues by:

1. **Decomposing the Query**: Breaking the problem into verifiable steps (Reasoner).

2. **Leveraging KG Structure**: Using the Aligner to ground each reasoning step in edges that exist in the KG.

3. **Validation**: Verifying intermediate steps, for example that "c" released "folklore". The diagram underlines this contrast with the baselines by marking their answers with a red X and the framework's answer with a green checkmark.

This approach underscores the importance of combining logical reasoning with KG alignment for multi-hop questions, where the answer depends on correctly resolving each intermediate entity.
