# Technical Document: Attention Backward Speed Analysis

## Chart Title

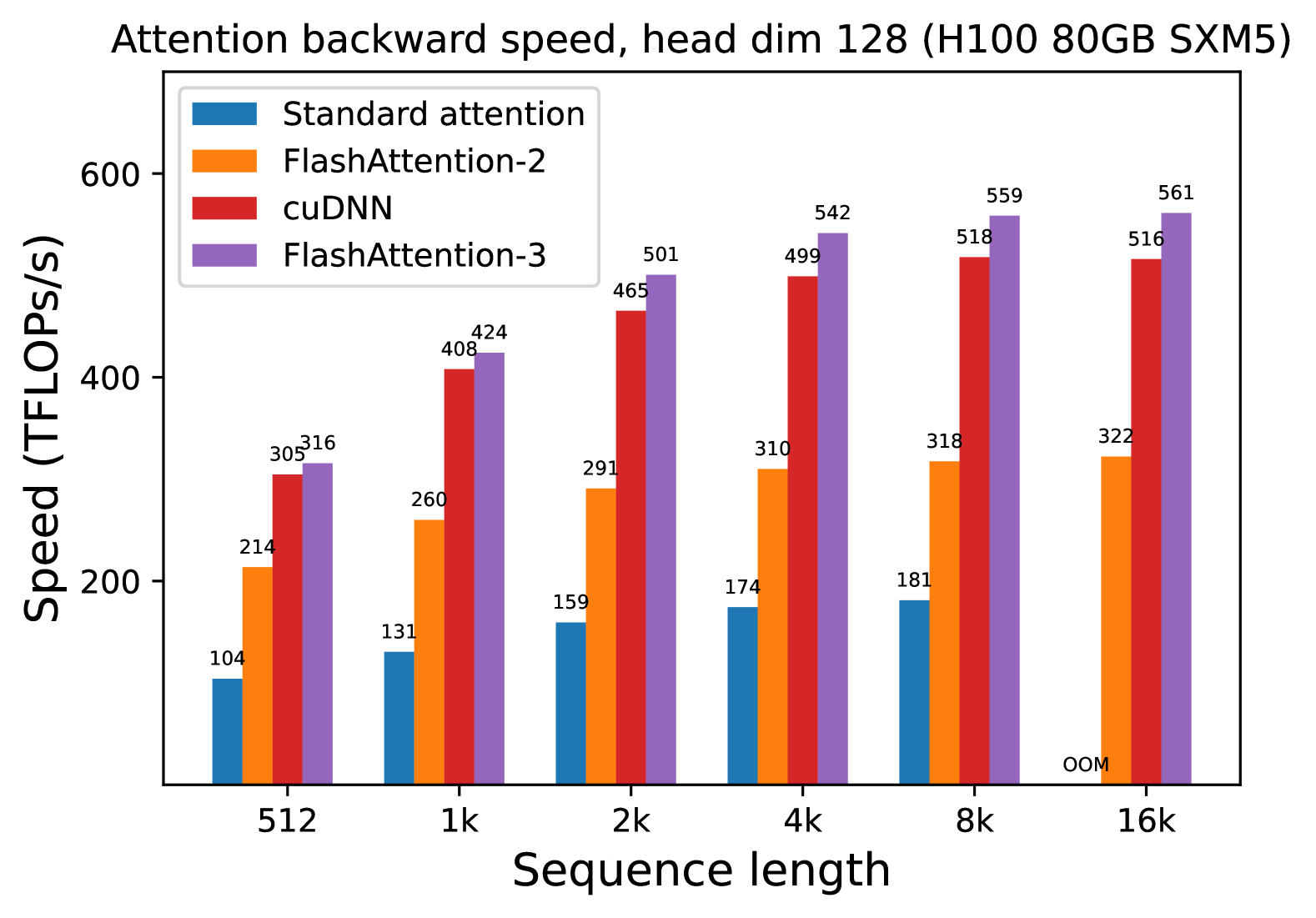

**Attention backward speed, head dim 128 (H100 80GB SXM5)**

---

### Axis Labels

- **X-axis**: Sequence length (categories: 512, 1k, 2k, 4k, 8k, 16k)

- **Y-axis**: Speed (TFLOPs/s)

---

### Legend

| Color | Method |

|-------------|----------------------|

| Blue | Standard attention |

| Orange | FlashAttention-2 |

| Red | cuDNN |

| Purple | FlashAttention-3 |

---

### Data Points (Speed in TFLOPs/s)

| Sequence Length | Standard attention | FlashAttention-2 | cuDNN | FlashAttention-3 |

|-----------------|--------------------|------------------|-------|------------------|

| 512 | 104 | 214 | 305 | 316 |

| 1k | 131 | 260 | 408 | 424 |

| 2k | 159 | 291 | 465 | 501 |

| 4k | 174 | 310 | 499 | 542 |

| 8k | 181 | 318 | 518 | 559 |

| 16k | OOM | 322 | 516 | 561 |

---

### Key Observations

1. **Performance Trends**:

- **FlashAttention-3** consistently achieves the highest speed across all sequence lengths (316–561 TFLOPs/s).

- **cuDNN** outperforms **FlashAttention-2** and **Standard attention** at all sequence lengths (305–518 TFLOPs/s).

- **Standard attention** shows diminishing returns and becomes **out of memory (OOM)** at 16k sequence length.

2. **Scalability**:

- All methods exhibit increased speed with longer sequence lengths, except Standard attention at 16k.

- FlashAttention-3 demonstrates the most significant performance improvement (e.g., +245 TFLOPs/s from 512 to 16k).

3. **Hardware Context**:

- Benchmarked on **H100 80GB SXM5** GPU with head dimension 128.

---

### Notes

- **OOM**: Indicates "out of memory" error for Standard attention at 16k sequence length.

- **Head dim 128**: Refers to the dimensionality of attention heads in the model architecture.