## Bar Chart: Performance on MATH Benchmark with and without SHEPHERD Augmentation

### Overview

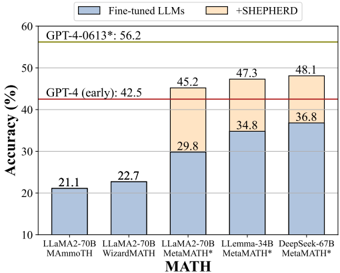

The image is a grouped, stacked bar chart comparing the accuracy of various Large Language Models (LLMs) on the "MATH" benchmark. It specifically contrasts the performance of models that have been fine-tuned on mathematical tasks ("Fine-tuned LLMs") against the performance of those same models when augmented with a method called "SHEPHERD" ("+SHEPHERD"). Two horizontal reference lines indicate the performance of GPT-4 variants.

### Components/Axes

* **Chart Type:** Grouped, stacked bar chart.

* **Y-Axis:** Labeled "Accuracy (%)". Scale runs from 10 to 60, with major tick marks every 10 units.

* **X-Axis:** Labeled "MATH". It lists five distinct model configurations:

    1. `LLaMA2-70B MAMoTH`

    2. `WizardMATH`

    3. `LLaMA2-70B MetaMath*`

    4. `Llemma-34B MetaMath*`

    5. `DeepSeek-67B MetaMath*`

* **Legend:** Located at the top center of the chart area.

    * A blue rectangle corresponds to "Fine-tuned LLMs".

    * An orange rectangle corresponds to "+SHEPHERD".

* **Reference Lines:**

    * A solid red horizontal line at 42.5% accuracy, labeled "GPT-4 (early): 42.5".

    * A solid yellow-green horizontal line at 56.2% accuracy, labeled "GPT-4-0613*: 56.2".

### Detailed Analysis

The chart presents data for five model configurations. Each bar is stacked, with the blue segment representing the base fine-tuned model's accuracy and the orange segment representing the additional accuracy gained by applying SHEPHERD.

1. **LLaMA2-70B MAMoTH:**
    * **Fine-tuned LLMs (Blue):** 21.1%
    * **+SHEPHERD (Orange):** Not shown (no orange segment is visible).
    * **Total Accuracy:** 21.1%

2. **WizardMATH:**
    * **Fine-tuned LLMs (Blue):** 22.7%
    * **+SHEPHERD (Orange):** Not shown (no orange segment is visible).
    * **Total Accuracy:** 22.7%

3. **LLaMA2-70B MetaMath*:**
    * **Fine-tuned LLMs (Blue):** 29.8%
    * **+SHEPHERD (Orange):** 15.4 percentage points (calculated as 45.2% total - 29.8% base).
    * **Total Accuracy:** 45.2%

4. **Llemma-34B MetaMath*:**
    * **Fine-tuned LLMs (Blue):** 34.8%
    * **+SHEPHERD (Orange):** 12.5 percentage points (calculated as 47.3% total - 34.8% base).
    * **Total Accuracy:** 47.3%

5. **DeepSeek-67B MetaMath*:**
    * **Fine-tuned LLMs (Blue):** 36.8%
    * **+SHEPHERD (Orange):** 11.3 percentage points (calculated as 48.1% total - 36.8% base).
    * **Total Accuracy:** 48.1%
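The orange-segment values above are derived by subtraction rather than read directly from the chart. A minimal Python sketch of that arithmetic follows; the dictionary layout and the names `results` and `shepherd_gain` are illustrative assumptions, and the numbers are simply the values transcribed in this section.

```python
# Accuracy values (in %) transcribed from the chart description.
# `total` is None where no +SHEPHERD segment is visible.
results = {
    "LLaMA2-70B MAMoTH": {"base": 21.1, "total": None},
    "WizardMATH": {"base": 22.7, "total": None},
    "LLaMA2-70B MetaMath*": {"base": 29.8, "total": 45.2},
    "Llemma-34B MetaMath*": {"base": 34.8, "total": 47.3},
    "DeepSeek-67B MetaMath*": {"base": 36.8, "total": 48.1},
}


def shepherd_gain(base, total):
    """Return the +SHEPHERD gain in percentage points, or None if not shown."""
    if total is None:
        return None
    # Round to one decimal to match the precision of the chart labels.
    return round(total - base, 1)


gains = {
    name: shepherd_gain(values["base"], values["total"])
    for name, values in results.items()
}

for name, gain in gains.items():
    label = "not shown" if gain is None else f"+{gain} points"
    print(f"{name}: {label}")
```

Keeping the missing bars as `None` rather than `0` separates "no gain" from "not reported", which is the distinction the chart itself leaves open.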

**Trend Verification:**

* **Base Models (Blue Segments):** Accuracy rises from left to right, from 21.1% (LLaMA2-70B MAMoTH) to 36.8% (DeepSeek-67B MetaMath*), suggesting that both the choice of base model and its fine-tuning method (MAMoTH vs. WizardMATH vs. MetaMath*) affect baseline performance.

* **SHEPHERD Augmentation (Orange Segments):** An orange segment appears only for the last three models (those using MetaMath* fine-tuning). For these, SHEPHERD adds a positive boost in every case, and the size of the boost shrinks as the base model's accuracy increases (from 15.4 to 12.5 to 11.3 percentage points).
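Both trend claims can be checked mechanically. The sketch below assumes the values listed in this section; the helper names are hypothetical. It also computes the gain relative to each baseline, which the chart does not report and which is derived here only for illustration.

```python
# Base accuracies in left-to-right chart order (in %).
base = [21.1, 22.7, 29.8, 34.8, 36.8]
# +SHEPHERD gains for the three MetaMath* models (percentage points).
gains = [15.4, 12.5, 11.3]


def strictly_increasing(values):
    """True if every value is larger than the one before it."""
    return all(a < b for a, b in zip(values, values[1:]))


def strictly_decreasing(values):
    """True if every value is smaller than the one before it."""
    return all(a > b for a, b in zip(values, values[1:]))


base_rising = strictly_increasing(base)
gain_shrinking = strictly_decreasing(gains)

# Gain as a percentage of each MetaMath* baseline (the last three base values).
relative_gain = [round(100 * g / b, 1) for g, b in zip(gains, base[2:])]

print("Base accuracy rises left to right:", base_rising)
print("SHEPHERD gain shrinks as base rises:", gain_shrinking)
print("Relative gain over baseline (%):", relative_gain)
```

The relative figures fall more steeply than the absolute ones, because a shrinking gain is being divided by a growing baseline.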

### Key Observations

1. **SHEPHERD's Impact:** The SHEPHERD method provides a substantial and consistent accuracy improvement for models fine-tuned with MetaMath*, boosting performance by between 11.3 and 15.4 percentage points.

2. **Model Hierarchy:** Among the tested configurations, `DeepSeek-67B MetaMath* + SHEPHERD` achieves the highest accuracy at 48.1%. All three SHEPHERD-augmented configurations (45.2%, 47.3%, and 48.1%) surpass the `GPT-4 (early)` reference line (42.5%), while none of the fine-tuned models reaches it without SHEPHERD.

3. **Benchmark Gap:** All tested model configurations, even the best-performing one (48.1%), remain below the performance of `GPT-4-0613*` (56.2%).

4. **Fine-tuning Method Matters:** Models fine-tuned with MetaMath* (columns 3-5) show significantly higher baseline performance (29.8%-36.8%) compared to those fine-tuned with MAMoTH or WizardMATH (21.1%-22.7%).
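The comparisons against the two GPT-4 reference lines reduce to simple thresholds. The following sketch uses the best reported accuracy for each configuration as listed above; the variable names are assumptions made for illustration.

```python
# Reference lines from the chart (accuracy in %).
GPT4_EARLY = 42.5
GPT4_0613 = 56.2

# Best reported accuracy per configuration (with SHEPHERD where shown).
best_accuracy = {
    "LLaMA2-70B MAMoTH": 21.1,
    "WizardMATH": 22.7,
    "LLaMA2-70B MetaMath* + SHEPHERD": 45.2,
    "Llemma-34B MetaMath* + SHEPHERD": 47.3,
    "DeepSeek-67B MetaMath* + SHEPHERD": 48.1,
}

# Configurations that clear the GPT-4 (early) line.
above_early = [
    name for name, acc in best_accuracy.items() if acc > GPT4_EARLY
]

# Remaining gap to GPT-4-0613*, in percentage points.
gap_to_0613 = {
    name: round(GPT4_0613 - acc, 1) for name, acc in best_accuracy.items()
}

top_model = max(best_accuracy, key=best_accuracy.get)

print("Above GPT-4 (early):", above_early)
print("Top configuration:", top_model)
print("Gap from top configuration to GPT-4-0613*:", gap_to_0613[top_model])
```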

### Interpretation

This chart indicates that the SHEPHERD augmentation technique improves mathematical reasoning accuracy for the models it is applied to. Because gains are shown only for MetaMath* fine-tuned models, the data supports reading SHEPHERD as a complementary method that builds on a strong fine-tuned foundation, not as a standalone solution.

The rising blue segments suggest that the base model (e.g., DeepSeek vs. LLaMA) and the fine-tuning method (MetaMath* vs. others) both drive baseline performance. SHEPHERD then adds a further 11.3 to 15.4 percentage points on top of the MetaMath* baselines; the gain is additive and shrinks as the baseline rises, so it is not a constant multiplier.

The best composite model (48.1%) still falls 8.1 percentage points short of GPT-4-0613* (56.2%), which highlights the remaining gap between specialized, open-weight models and large, proprietary systems on complex reasoning tasks. However, the chart also shows a promising trajectory: by combining strong fine-tuning (MetaMath*) with targeted augmentation (SHEPHERD), open-weight models surpass GPT-4 (early) (42.5%). This points to a viable pathway for developing more accessible high-performance AI systems for specialized domains like mathematics.