## Horizontal Bar Chart: AI Model Mean Accuracy Comparison

### Overview

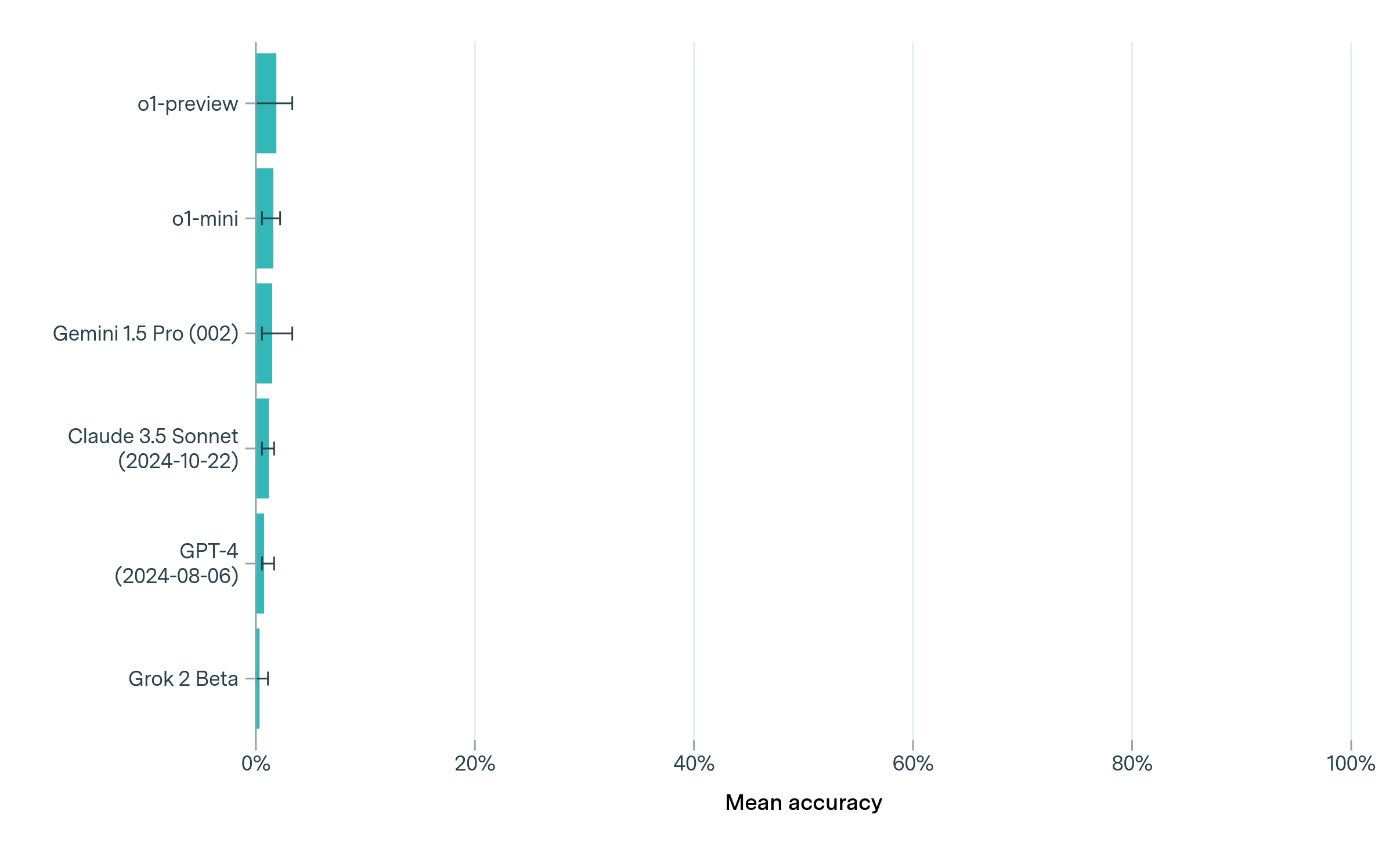

The image displays a horizontal bar chart comparing the mean accuracy of six different large language models (LLMs). The chart uses a single metric, "Mean accuracy," measured on a percentage scale from 0% to 100%. All models show very low accuracy scores, with bars clustered near the 0% mark. Each bar is accompanied by an error bar, indicating the variability or confidence interval of the measurement.

### Components/Axes

* **Vertical Axis (Y-axis):** Lists the names of the AI models being compared. From top to bottom:
    1. `o1-preview`
    2. `o1-mini`
    3. `Gemini 1.5 Pro (002)`
    4. `Claude 3.5 Sonnet (2024-10-22)`
    5. `GPT-4 (2024-08-06)`
    6. `Grok 2 Beta`

* **Horizontal Axis (X-axis):** Labeled "Mean accuracy". It has major tick marks and labels at 0%, 20%, 40%, 60%, 80%, and 100%. Vertical grid lines extend from these ticks across the chart area.

* **Data Series:** Represented by teal-colored horizontal bars. Each bar's length corresponds to the model's mean accuracy score.

* **Error Bars:** Thin black horizontal lines extending from the end of each teal bar, capped with small vertical lines. These represent the uncertainty or variance in the accuracy measurement.

### Detailed Analysis

**Trend Verification:** All data series show the same visual trend: extremely short bars originating from the 0% baseline, indicating uniformly low mean accuracy across all listed models. Differences in bar length are slight, with only the bottom bar (`Grok 2 Beta`) visibly shorter than the rest, so performance is clustered within a narrow, low range.

**Data Point Extraction (Approximate Values; error bars in percentage points):**

* **o1-preview:** The bar extends slightly further than the others. Estimated mean accuracy: **~3-4%**. The error bar spans approximately ±1%.

* **o1-mini:** Bar length is very similar to o1-preview, possibly marginally shorter. Estimated mean accuracy: **~3%**. Error bar: ±1%.

* **Gemini 1.5 Pro (002):** Bar length appears consistent with the top two. Estimated mean accuracy: **~3%**. Error bar: ±1%.

* **Claude 3.5 Sonnet (2024-10-22):** Bar length is consistent. Estimated mean accuracy: **~3%**. Error bar: ±1%.

* **GPT-4 (2024-08-06):** Bar length is consistent. Estimated mean accuracy: **~2-3%**. Error bar: ±1%.

* **Grok 2 Beta:** This is the shortest bar, barely visible past the axis line. Estimated mean accuracy: **~1% or less**. Error bar: ±0.5%.
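To make the comparison between these readings concrete, the estimates above can be turned into intervals and checked for overlap. The following is a minimal sketch under stated assumptions: the numbers are the visual estimates from this list (not published figures), ranges such as "~3-4%" are represented by their midpoint, "~1% or less" is taken as 1.0, and the names `ESTIMATES`, `interval`, `overlaps`, and `separated_pairs` are hypothetical helpers introduced only for illustration.

```python
# Visual estimates read off the chart: (mean, error-bar half-width),
# both in percentage points. These are approximations, not source data.
# Assumption: "~3-4%" -> 3.5, "~2-3%" -> 2.5, "~1% or less" -> 1.0.
ESTIMATES = {
    "o1-preview": (3.5, 1.0),
    "o1-mini": (3.0, 1.0),
    "Gemini 1.5 Pro (002)": (3.0, 1.0),
    "Claude 3.5 Sonnet (2024-10-22)": (3.0, 1.0),
    "GPT-4 (2024-08-06)": (2.5, 1.0),
    "Grok 2 Beta": (1.0, 0.5),
}


def interval(mean, half_width):
    """Return (low, high) in percentage points, with the low end clipped at 0."""
    return (max(0.0, mean - half_width), mean + half_width)


def overlaps(a, b):
    """Return True if two closed intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


def separated_pairs(intervals):
    """Return the pairs of models whose intervals do not overlap."""
    names = list(intervals)
    return [
        (x, y)
        for i, x in enumerate(names)
        for y in names[i + 1:]
        if not overlaps(intervals[x], intervals[y])
    ]


INTERVALS = {name: interval(*values) for name, values in ESTIMATES.items()}

for name, (low, high) in INTERVALS.items():
    print(f"{name}: {low:.1f}% to {high:.1f}%")

for pair in separated_pairs(INTERVALS):
    print("non-overlapping:", pair)
```

Under these assumed values, the intervals of the top five models all overlap one another, and the only non-overlapping pairs involve `Grok 2 Beta`. Because the inputs are eyeballed from a compressed axis, the result is illustrative rather than a statistical test.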

**Spatial Grounding:** The model names (the y-axis category labels) are positioned on the left side, each aligned with the start of its bar. The "Mean accuracy" axis label is centered at the bottom. The error bars are positioned at the right end of each data bar.

### Key Observations

1. **Uniformly Low Performance:** The most striking observation is that all six models, including recent and advanced versions, achieve a mean accuracy of less than 5% on the evaluated task. This suggests the task is exceptionally difficult or the evaluation metric is very stringent.

2. **Minimal Differentiation:** There is very little visual separation between the models' performance. `o1-preview` and `o1-mini` appear to have a very slight edge, while `Grok 2 Beta` shows the lowest measured accuracy.

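3. **Consistent Uncertainty:** The error bars for all models are small in absolute terms (roughly ±1 percentage point or less) and of similar magnitude, indicating consistent measurement variance across the board. Relative to the bars themselves, however, they are large, which limits how finely the models can be ranked.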

4. **Chart Scale:** The choice of a 0-100% scale, while standard, visually minimizes the already small differences between the models because all data is compressed into the first 5% of the chart's width.
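The scale effect in the last observation can be quantified with a small calculation. The sketch below assumes the upper ends of each bar plus error bar are the visual estimates given earlier in this description (they are approximations, not source data); `axis_fraction_used` and `zoomed_limit` are hypothetical helper names, and the 5-point rounding step is an arbitrary illustrative choice.

```python
import math

AXIS_MAX = 100.0  # the chart's x-axis runs from 0% to 100%

# Assumed right-hand ends of bar plus error bar, in percentage points,
# taken from the approximate readings in this description (top to bottom).
UPPER_ENDS = [4.5, 4.0, 4.0, 4.0, 3.5, 1.5]


def axis_fraction_used(upper_ends, axis_max=AXIS_MAX):
    """Fraction of the axis width occupied by the longest bar and its error bar."""
    return max(upper_ends) / axis_max


def zoomed_limit(upper_ends, step=5.0):
    """Smallest multiple of `step` that still contains every bar and error bar."""
    return math.ceil(max(upper_ends) / step) * step


print(f"axis width used: {axis_fraction_used(UPPER_ENDS):.1%}")
print(f"suggested zoomed axis maximum: {zoomed_limit(UPPER_ENDS):.0f}%")
```

With these assumed values, all of the data sits in under 5% of the plotted width. A zoomed axis would make the differences between models easier to see, at the cost of hiding how far every model is from 100%.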

### Interpretation

This chart presents a snapshot of a specific benchmark or evaluation on which current leading AI models perform poorly. The data suggests one of several possibilities:

* The task measured is at the extreme edge of current LLM capabilities, possibly involving complex reasoning, specialized knowledge, or a novel format the models are not trained for.

* The "Mean accuracy" metric might be defined in a particularly rigorous way (e.g., requiring perfect, multi-step solutions).

* The models are being tested on a domain or problem type that is a known weakness for this class of AI.

The near-identical, low scores indicate a common performance ceiling. The slight lead of the `o1` models could hint at architectural or training differences that provide a marginal advantage on this specific challenge, although the gap is comparable in size to the estimated error bars and should not be over-read. The overarching conclusion is therefore not about ranking these models, but about the significant gap between their current abilities and the demands of the task represented by this chart. The visualization effectively communicates that, for this particular measure, all models are struggling similarly.