## Horizontal Grouped Bar Chart: AI Model Reflection Percentages Across Datasets

### Overview

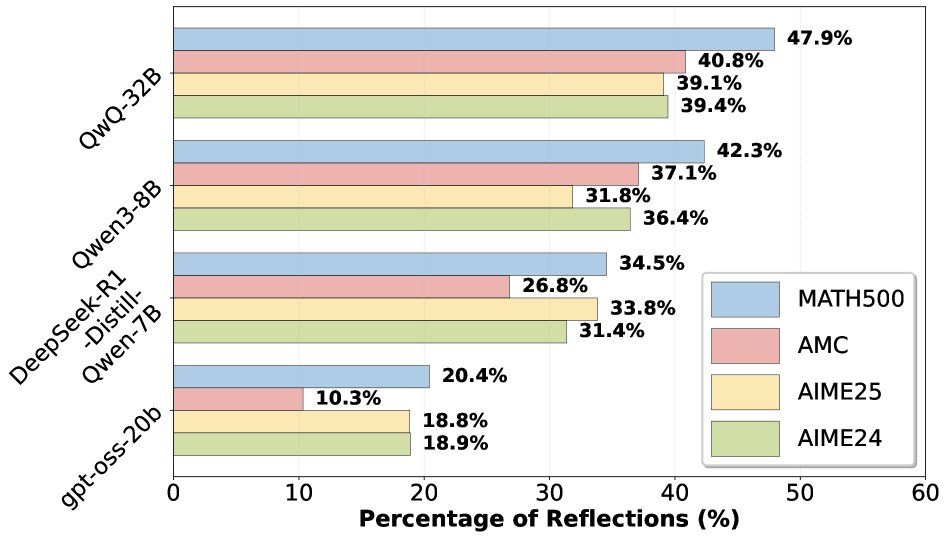

The image displays a horizontal grouped bar chart comparing four AI models on four mathematical reasoning datasets. The metric is "Percentage of Reflections (%)". The chart does not define it, but the name suggests it measures how often each model exhibits self-reflection, so a higher value indicates more frequent reflection rather than necessarily higher accuracy. Models are on the vertical axis and percentage values on the horizontal axis.

### Components/Axes

* **Chart Type:** Horizontal Grouped Bar Chart.

* **Y-Axis (Vertical):** Lists four AI models. From top to bottom:

    1. `QwQ-32B`

    2. `Qwen3-8B`

    3. `DeepSeek-R1-Distill-Qwen-7B`

    4. `gpt-oss-20b`

* **X-Axis (Horizontal):** Labeled "Percentage of Reflections (%)". The scale runs from 0 to 60, with major tick marks at intervals of 10 (0, 10, 20, 30, 40, 50, 60).

* **Legend:** Positioned in the bottom-right corner of the chart area. It defines four datasets, each associated with a specific color:

    * **Light Blue:** `MATH500`

    * **Light Red/Pink:** `AMC`

    * **Light Yellow:** `AIME25`

    * **Light Green:** `AIME24`

* **Data Series:** For each model, there are four horizontal bars, one for each dataset, grouped together. The bars are ordered from top to bottom within each group as: MATH500 (blue), AMC (red), AIME25 (yellow), AIME24 (green).
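To make the layout concrete, the following Python sketch prints a rough text-mode version of the chart: one group per model in y-axis order, bars in the legend's order within each group, scaled to the 0 to 60 axis. The values are transcribed from the bar labels listed in the Detailed Analysis; the names `SCORES`, `bar`, and `render` are illustrative and not taken from any published code.

```python
# Values transcribed from the chart's bar labels (percentage of reflections).
# Bar order within each group, top to bottom, as described above.
DATASETS = ["MATH500", "AMC", "AIME25", "AIME24"]
SCORES = {  # models in y-axis order, top to bottom
    "QwQ-32B": [47.9, 40.8, 39.1, 39.4],
    "Qwen3-8B": [42.3, 37.1, 31.8, 36.4],
    "DeepSeek-R1-Distill-Qwen-7B": [34.5, 26.8, 33.8, 31.4],
    "gpt-oss-20b": [20.4, 10.3, 18.8, 18.9],
}

AXIS_MAX = 60  # the x-axis runs from 0 to 60
WIDTH = 30     # characters used to draw the full 0-60 range


def bar(value: float) -> str:
    """Scale a percentage onto WIDTH characters, rounding to the nearest cell."""
    return "#" * round(value / AXIS_MAX * WIDTH)


def render() -> list[str]:
    """Return one header line per model followed by one line per dataset bar."""
    lines = []
    for model, values in SCORES.items():
        lines.append(model)
        for dataset, value in zip(DATASETS, values):
            lines.append(f"  {dataset:<8}|{bar(value):<{WIDTH}}| {value:.1f}")
    return lines


if __name__ == "__main__":
    print("\n".join(render()))
```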

### Detailed Analysis

**Model: QwQ-32B (Top Group)**

* **Trend:** This model has the highest reflection percentage on every dataset.

* **Data Points:**

    * MATH500 (Blue): **47.9%** (Longest bar in the group and in the entire chart).

    * AMC (Red): **40.8%**

    * AIME25 (Yellow): **39.1%**

    * AIME24 (Green): **39.4%**

**Model: Qwen3-8B (Second Group)**

* **Trend:** Percentages are lower than QwQ-32B's on every dataset but follow a similar pattern: MATH500 is highest and AIME25 lowest.

* **Data Points:**

    * MATH500 (Blue): **42.3%**

    * AMC (Red): **37.1%**

    * AIME25 (Yellow): **31.8%**

    * AIME24 (Green): **36.4%**

**Model: DeepSeek-R1-Distill-Qwen-7B (Third Group)**

* **Trend:** Shows a different pattern: AMC, rather than AIME25, is the lowest, well below the other three datasets in this group.

* **Data Points:**

    * MATH500 (Blue): **34.5%**

    * AMC (Red): **26.8%** (Noticeably shorter bar than the others in this group).

    * AIME25 (Yellow): **33.8%**

    * AIME24 (Green): **31.4%**

**Model: gpt-oss-20b (Bottom Group)**

* **Trend:** This model has the lowest reflection percentage on every dataset, and its AMC value is particularly low.

* **Data Points:**

    * MATH500 (Blue): **20.4%**

    * AMC (Red): **10.3%** (The shortest bar in the entire chart).

    * AIME25 (Yellow): **18.8%**

    * AIME24 (Green): **18.9%**
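The per-group trends above can be checked with a short Python sketch that finds each model's highest and lowest dataset. The dictionary only transcribes the values listed above; `extremes` is a hypothetical helper name chosen for illustration.

```python
# Reflection percentages transcribed from the chart's bar labels.
SCORES: dict[str, dict[str, float]] = {
    "QwQ-32B": {"MATH500": 47.9, "AMC": 40.8, "AIME25": 39.1, "AIME24": 39.4},
    "Qwen3-8B": {"MATH500": 42.3, "AMC": 37.1, "AIME25": 31.8, "AIME24": 36.4},
    "DeepSeek-R1-Distill-Qwen-7B": {"MATH500": 34.5, "AMC": 26.8, "AIME25": 33.8, "AIME24": 31.4},
    "gpt-oss-20b": {"MATH500": 20.4, "AMC": 10.3, "AIME25": 18.8, "AIME24": 18.9},
}


def extremes(scores: dict[str, float]) -> tuple[str, str]:
    """Return (highest-scoring dataset, lowest-scoring dataset) for one model."""
    highest = max(scores, key=lambda d: scores[d])
    lowest = min(scores, key=lambda d: scores[d])
    return highest, lowest


if __name__ == "__main__":
    for model, scores in SCORES.items():
        high, low = extremes(scores)
        print(f"{model}: highest {high} ({scores[high]}%), lowest {low} ({scores[low]}%)")
```

Run as-is, it reports MATH500 as the highest bar for all four models, with AIME25 lowest for `QwQ-32B` and `Qwen3-8B` and AMC lowest for the other two.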

### Key Observations

1. **Consistent Leader:** `QwQ-32B` achieves the highest percentage on all four datasets.

2. **Dataset Difficulty:** For every model, the `MATH500` dataset (blue bar) yields the highest percentage, which may suggest it is the "easiest" for these models under this metric, although the chart does not establish a link between reflection frequency and difficulty. The lowest bar is less consistent: it is `AMC` (red) for `DeepSeek-R1-Distill-Qwen-7B` and `gpt-oss-20b`, but `AIME25` (yellow) for `QwQ-32B` and `Qwen3-8B`, where `AMC` is actually the second-highest value.

3. **Model Ranking:** The ordering QwQ-32B > Qwen3-8B > DeepSeek-R1-Distill-Qwen-7B > gpt-oss-20b holds on MATH500, AMC, and AIME24. On AIME25 the middle two swap places: `DeepSeek-R1-Distill-Qwen-7B` (33.8%) edges out `Qwen3-8B` (31.8%).

4. **Notable Outlier:** `DeepSeek-R1-Distill-Qwen-7B` scores 26.8% on `AMC`, well below its other three datasets (all above 31%). Because those three values span only about 3 points (31.4% to 34.5%), the AMC dip stands out clearly.

5. **Scale of Difference:** On the `AMC` dataset, the highest-scoring model (`QwQ-32B`, 40.8%) records nearly 4 times the percentage of the lowest-scoring model (`gpt-oss-20b`, 10.3%), a ratio of about 3.96.
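The cross-model observations (the ordering on each dataset and the AMC ratio) reduce to simple arithmetic on the transcribed values. The Python sketch below computes them directly; `ranking` is a hypothetical helper name.

```python
# Reflection percentages transcribed from the chart's bar labels.
DATASETS = ["MATH500", "AMC", "AIME25", "AIME24"]
SCORES: dict[str, dict[str, float]] = {
    "QwQ-32B": {"MATH500": 47.9, "AMC": 40.8, "AIME25": 39.1, "AIME24": 39.4},
    "Qwen3-8B": {"MATH500": 42.3, "AMC": 37.1, "AIME25": 31.8, "AIME24": 36.4},
    "DeepSeek-R1-Distill-Qwen-7B": {"MATH500": 34.5, "AMC": 26.8, "AIME25": 33.8, "AIME24": 31.4},
    "gpt-oss-20b": {"MATH500": 20.4, "AMC": 10.3, "AIME25": 18.8, "AIME24": 18.9},
}


def ranking(dataset: str) -> list[str]:
    """Model names sorted from highest to lowest percentage on one dataset."""
    return sorted(SCORES, key=lambda m: SCORES[m][dataset], reverse=True)


# Ratio between the top and bottom AMC values.
amc_ratio = SCORES["QwQ-32B"]["AMC"] / SCORES["gpt-oss-20b"]["AMC"]

if __name__ == "__main__":
    for dataset in DATASETS:
        print(f"{dataset}: " + " > ".join(ranking(dataset)))
    print(f"AMC ratio, QwQ-32B / gpt-oss-20b: {amc_ratio:.2f}")
```

Printing one ranking per dataset makes any swap in the ordering easy to spot, and computing the ratio avoids estimating it from bar lengths.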

### Interpretation

This chart compares how often AI models exhibit the behavior measured as "Percentage of Reflections" on mathematical reasoning tasks. The data shows a clear stratification among the tested models, with `QwQ-32B` recording the highest percentage on every problem set (MATH500, AMC, AIME24, AIME25).

The consistent pattern of `MATH500` scoring highest could imply that this dataset aligns well with the models' training data or that its problems more readily trigger the "reflection" behavior being measured. The low `AMC` values for `gpt-oss-20b` and `DeepSeek-R1-Distill-Qwen-7B` may point to differences in how these two models handle certain types of mathematical problems or reasoning chains.

The large gap between the top and bottom models (e.g., ~48% vs. ~20% on MATH500) likely reflects differences in model architecture, training, or scale. The chart also suggests that model size alone is not the sole determinant of this metric: both `Qwen3-8B` and `DeepSeek-R1-Distill-Qwen-7B` score higher than `gpt-oss-20b` on every dataset despite their smaller nominal size. This invites further investigation into the qualitative nature of the "reflections" being measured and the specific design of each model.
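To examine the size point numerically, the Python sketch below parses the nominal parameter count from each model name and compares it with the model's mean percentage across the four datasets. Treating the number in a name as the model's size is a simplifying assumption (`gpt-oss-20b`, for example, is a mixture-of-experts model, so far fewer parameters are active per token). `size_in_billions` and `smaller_but_higher` are hypothetical helper names.

```python
import re
from statistics import mean

# Reflection percentages transcribed from the chart's bar labels.
SCORES: dict[str, dict[str, float]] = {
    "QwQ-32B": {"MATH500": 47.9, "AMC": 40.8, "AIME25": 39.1, "AIME24": 39.4},
    "Qwen3-8B": {"MATH500": 42.3, "AMC": 37.1, "AIME25": 31.8, "AIME24": 36.4},
    "DeepSeek-R1-Distill-Qwen-7B": {"MATH500": 34.5, "AMC": 26.8, "AIME25": 33.8, "AIME24": 31.4},
    "gpt-oss-20b": {"MATH500": 20.4, "AMC": 10.3, "AIME25": 18.8, "AIME24": 18.9},
}


def size_in_billions(model: str) -> int:
    """Parse the nominal size suffix from a model name, e.g. 'Qwen3-8B' -> 8 (assumption)."""
    match = re.search(r"(\d+)[bB]\b", model)
    if match is None:
        raise ValueError(f"no size suffix in {model!r}")
    return int(match.group(1))


def smaller_but_higher(reference: str) -> list[str]:
    """Models with a smaller nominal size than `reference` but a higher mean percentage."""
    ref_size = size_in_billions(reference)
    ref_mean = mean(SCORES[reference].values())
    return [
        m for m in SCORES
        if size_in_billions(m) < ref_size and mean(SCORES[m].values()) > ref_mean
    ]


if __name__ == "__main__":
    for model in sorted(SCORES, key=size_in_billions, reverse=True):
        print(f"{model}: {size_in_billions(model)}B nominal, mean {mean(SCORES[model].values()):.2f}%")
    print("Smaller than gpt-oss-20b but higher mean:", smaller_but_higher("gpt-oss-20b"))
```

With the transcribed values, `smaller_but_higher("gpt-oss-20b")` returns both the 8B and 7B models.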