## Diagram: LLM Explainability Barrier

### Overview

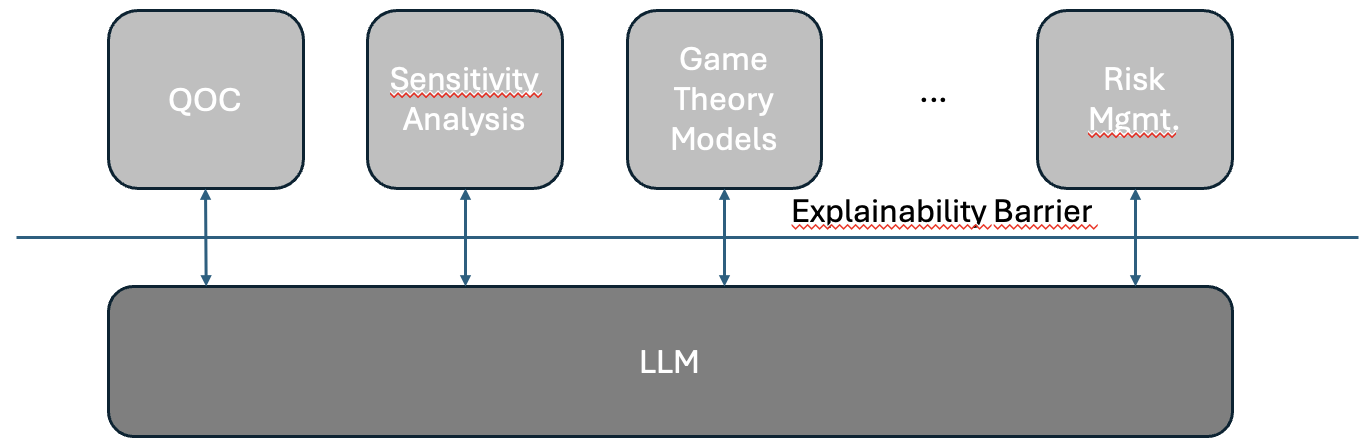

The image is a conceptual diagram illustrating the relationship between a Large Language Model (LLM) and various analytical or decision-making frameworks. It visually represents a barrier to explainability that exists between the LLM and these external systems.

### Components

The diagram is composed of two primary layers separated by a horizontal line.

1. **Top Layer (Analytical Frameworks):**

   * A row of four rounded rectangular boxes, colored light gray with dark borders, plus an ellipsis between the third and fourth boxes.

   * From left to right, the elements are labeled:

     * **QOC** (Position: Top-left)

     * **Sensitivity Analysis** (Position: Top-center-left). The text has a red, wavy underline, most likely a spell-check marker.

     * **Game Theory Models** (Position: Top-center-right)

     * **...** (Ellipsis, indicating additional, unspecified frameworks)

     * **Risk Mgmt.** (Position: Top-right). The text "Mgmt." has a red, wavy underline.

   * A vertical, double-headed arrow extends downward from each box.

2. **Separating Element:**

   * A solid, horizontal blue line spans the width of the diagram.

   * Centered above this line is the label **"Explainability Barrier"**. The text has a red, wavy underline.

3. **Bottom Layer (Core Model):**

   * A single, wide rounded rectangular box, colored dark gray with a dark border.

   * It is labeled **"LLM"** in white text.

   * This box is positioned centrally at the bottom of the diagram.

### Detailed Analysis

* **Flow and Relationships:** The double-headed arrows connect each top-layer analytical framework to the single LLM box below. Every arrow crosses the horizontal "Explainability Barrier" line.

* **Spatial Grounding:** The "Explainability Barrier" label is centered horizontally along the dividing line. The LLM box is the foundational element, centered at the bottom. The analytical frameworks are distributed evenly across the top.

* **Text Transcription:** All text is in English. The red underlines on "Sensitivity", "Analysis", "Mgmt.", and "Barrier" are visual artifacts within the image itself, not part of the conceptual labels.
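The structure described above can be written down as a small data model, which makes the "every arrow crosses the barrier" relationship explicit. This is only an illustrative sketch: the names `Edge`, `TOP_LAYER`, and `build_diagram` are hypothetical and do not come from the diagram, and the `...` placeholder is kept as-is rather than filled with guessed frameworks.

```python
from dataclasses import dataclass

# Labels transcribed from the diagram, left to right. The "..." entry is the
# diagram's own ellipsis and is deliberately not expanded.
TOP_LAYER = ["QOC", "Sensitivity Analysis", "Game Theory Models", "...", "Risk Mgmt."]
BARRIER = "Explainability Barrier"
BOTTOM_LAYER = "LLM"


@dataclass(frozen=True)
class Edge:
    """One double-headed arrow between a framework box and the LLM box (hypothetical type)."""
    framework: str
    model: str = BOTTOM_LAYER
    bidirectional: bool = True    # arrows are double-headed
    crosses_barrier: bool = True  # every arrow passes through the barrier line


def build_diagram():
    """Return the layers, the barrier label, and one edge per labeled box."""
    boxes = [label for label in TOP_LAYER if label != "..."]
    return {
        "top_layer": TOP_LAYER,
        "barrier": BARRIER,
        "bottom_layer": BOTTOM_LAYER,
        "edges": [Edge(framework=label) for label in boxes],
    }


diagram = build_diagram()
for edge in diagram["edges"]:
    print(f"{edge.framework} <-> {edge.model} (crosses '{diagram['barrier']}': {edge.crosses_barrier})")
```

Modeling the ellipsis as a layer entry without an edge reflects the description, in which arrows are attached only to the four labeled boxes.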

### Key Observations

1. **Central Barrier:** The "Explainability Barrier" is the diagram's focal point, explicitly named as the obstacle between the LLM and external systems.

2. **Bidirectional Interaction:** The double-headed arrows imply a two-way flow of information or requests between the LLM and the frameworks, not a one-way output.

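3. **Heterogeneous Top Layer:** The top layer spans diverse fields (QOC, Sensitivity Analysis, Game Theory, Risk Management), suggesting the explainability challenge applies across different types of analysis. The diagram does not expand "QOC"; in a decision-analysis context it most plausibly denotes the Questions, Options, and Criteria design-rationale notation.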

4. **Ellipsis Implication:** The "..." indicates the list of affected frameworks is not exhaustive; the problem extends to other similar systems.

### Interpretation

This diagram depicts a core challenge in AI deployment. It argues that although LLMs can interact with and inform sophisticated analytical models (the top layer), an "Explainability Barrier" impedes clear, interpretable communication between them.

* **What it Suggests:** The LLM's internal reasoning is opaque. When it provides outputs to a Sensitivity Analysis or a Game Theory Model, the "why" behind its conclusions is obscured. The barrier represents the gap between the model's complex, high-dimensional computations and the human-understandable logic or causal relationships required by traditional analytical frameworks.

* **Relationships:** The LLM is positioned as the central engine (the dark, foundational box), and the analytical frameworks both consume its outputs and send it queries. Although the barrier is drawn as a solid line, every arrow crosses it, so it does not block interaction outright. It is better read as a filter that obscures or distorts what passes through, which complicates the integration of LLM capabilities into established decision-making processes.

* **Anomaly/Notable Point:** The bidirectional arrows are critical. They suggest the barrier impedes not only the frameworks' ability to interpret the LLM's answers but also their ability to *query* or *interrogate* it effectively, which makes explainability a two-way problem. The red underlines are most likely spell-check artifacts and carry no conceptual meaning, although they happen to fall on terms ("Sensitivity Analysis", "Risk Mgmt.") whose methods depend heavily on explainable inputs.
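One way to picture the two-way problem is a framework that can only probe the model through its inputs and outputs. The sketch below runs a one-at-a-time sensitivity analysis against a stand-in function. Everything in it is an assumption made for illustration: `opaque_model` is a hypothetical placeholder, not a real LLM call, and the input names `demand`, `cost`, and `risk` are invented.

```python
from typing import Callable, Dict


def opaque_model(inputs: Dict[str, float]) -> float:
    """Hypothetical stand-in for an LLM-backed scorer.

    The caller is assumed to see only the returned number, never this formula.
    """
    return 2.0 * inputs["demand"] - 0.5 * inputs["cost"] + inputs["demand"] * inputs["risk"]


def one_at_a_time_sensitivity(
    model: Callable[[Dict[str, float]], float],
    baseline: Dict[str, float],
    delta: float = 0.1,
) -> Dict[str, float]:
    """Estimate each input's local effect by perturbing it alone and re-querying the model."""
    base_output = model(baseline)
    effects = {}
    for name, value in baseline.items():
        perturbed = dict(baseline)        # copy so other inputs stay at baseline
        perturbed[name] = value + delta   # nudge a single input
        effects[name] = (model(perturbed) - base_output) / delta
    return effects


baseline = {"demand": 1.0, "cost": 2.0, "risk": 0.5}
for name, effect in one_at_a_time_sensitivity(opaque_model, baseline).items():
    print(f"{name}: {effect:+.2f} change in output per unit change in input")
```

The analysis recovers how strongly each input moves the output near the baseline, but not why: the interaction between `demand` and `risk` inside the stand-in stays hidden unless the framework issues further queries. That gap between observable behavior and underlying reasoning is what the barrier in the diagram represents.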