TECHNICAL ASSET FINGERPRINT

f66dbd75d0972b50689d13d3

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Structure-Aware Premise and Hypothesis Flow

### Overview

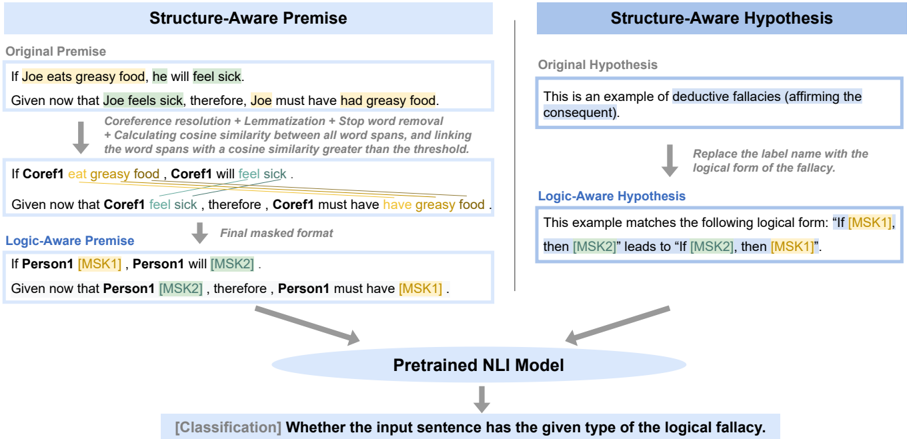

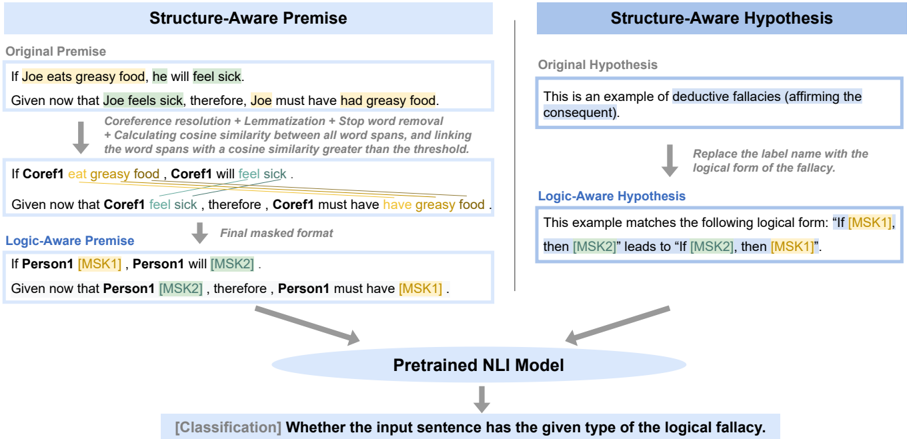

The image presents a diagram illustrating the transformation of an original premise and hypothesis into logic-aware forms using a structure-aware approach. It shows the steps involved in processing the premise and hypothesis, including coreference resolution, lemmatization, and masking, before feeding them into a pretrained NLI model for classification.

### Components/Axes

* **Titles:** "Structure-Aware Premise" (left), "Structure-Aware Hypothesis" (right)

* **Sections:**

* Original Premise/Hypothesis

* Intermediate Processing Steps (Coreference resolution, etc.)

* Logic-Aware Premise/Hypothesis

* Pretrained NLI Model

* Classification Output

* **Arrows:** Indicate the flow of information and transformations.

### Detailed Analysis

**1. Structure-Aware Premise (Left Side):**

* **Original Premise:**

* "If Joe eats greasy food, he will feel sick." (Highlighted: "eats greasy food" in yellow, "feel sick" in light green)

* "Given now that Joe feels sick, therefore, Joe must have had greasy food." (Highlighted: "feels sick" in light green, "had greasy food" in yellow)

* **Processing Steps:**

* "Coreference resolution + Lemmatization + Stop word removal"

* "+ Calculating cosine similarity between all word spans, and linking the word spans with a cosine similarity greater than the threshold."

* **Intermediate Premise:**

* "If Coref1 eat greasy food, Coref1 will feel sick." (Highlighted: "eat greasy food" in yellow, "feel sick" in light green)

* "Given now that Coref1 feel sick, therefore, Coref1 must have have greasy food." (Highlighted: "feel sick" in light green, "have greasy food" in yellow)

* Lines connect "eat greasy food" to "have greasy food" and "feel sick" to "feel sick".

* **Logic-Aware Premise:**

* "If Person1 [MSK1], Person1 will [MSK2]."

* "Given now that Person1 [MSK2], therefore, Person1 must have [MSK1]."
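
The masking step from the intermediate premise to the logic-aware premise can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the function name `mask_linked_spans`, the hand-supplied span groups, and the `Coref1` to `Person1` mapping are assumptions chosen to reproduce the example in the diagram.

```python
import re

def mask_linked_spans(text, span_groups, entity_map=None):
    """Replace each group of linked spans with a shared [MSKn] token.

    span_groups and entity_map are supplied by hand here (an assumption);
    in the diagram they come from similarity linking and coreference resolution.
    """
    for old, new in (entity_map or {}).items():
        text = re.sub(rf"\b{re.escape(old)}\b", new, text)
    for index, group in enumerate(span_groups, start=1):
        # Replace longer spans first so a shorter span never clips a longer one.
        for span in sorted(group, key=len, reverse=True):
            text = re.sub(rf"\b{re.escape(span)}\b", f"[MSK{index}]", text)
    return text

intermediate = (
    "If Coref1 eat greasy food, Coref1 will feel sick. "
    "Given now that Coref1 feel sick, therefore, Coref1 must have have greasy food."
)
groups = [["eat greasy food", "have greasy food"], ["feel sick"]]
masked = mask_linked_spans(intermediate, groups, {"Coref1": "Person1"})
print(masked)
```

Because both spans in the first group map to `[MSK1]`, the two sentences end up sharing mask tokens, which is what exposes the "If A then B; B; therefore A" shape.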

**2. Structure-Aware Hypothesis (Right Side):**

* **Original Hypothesis:**

* "This is an example of deductive fallacies (affirming the consequent)."

* **Processing Step:**

* "Replace the label name with the logical form of the fallacy."

* **Logic-Aware Hypothesis:**

* "This example matches the following logical form: "If [MSK1], then [MSK2]" leads to "If [MSK2], then [MSK1]"." (Highlighted: "[MSK1]" in yellow, "[MSK2]" in light blue)
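
The label replacement on the hypothesis side amounts to a lookup from fallacy name to logical form. The sketch below assumes a hypothetical table `LOGICAL_FORMS` and helper `logic_aware_hypothesis`; only the "affirming the consequent" entry is taken from the diagram.

```python
# Hypothetical lookup table; only this one entry appears in the diagram.
LOGICAL_FORMS = {
    "affirming the consequent":
        '"If [MSK1], then [MSK2]" leads to "If [MSK2], then [MSK1]"',
}

def logic_aware_hypothesis(label):
    """Build the hypothesis sentence from the logical form of a fallacy label."""
    form = LOGICAL_FORMS[label]
    return f"This example matches the following logical form: {form}."

hypothesis = logic_aware_hypothesis("affirming the consequent")
print(hypothesis)
```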

**3. Pretrained NLI Model (Bottom Center):**

* **Text:** "Pretrained NLI Model"

* **Input:** Arrows from both Logic-Aware Premise and Logic-Aware Hypothesis point to this block.

* **Output:** An arrow points downwards from this block.

**4. Classification Output (Bottom):**

* **Text:** "[Classification] Whether the input sentence has the given type of the logical fallacy."

### Key Observations

* The diagram illustrates a process of transforming natural language premises and hypotheses into a masked, logic-aware format.

* Coreference resolution, lemmatization, and cosine similarity calculations are used to identify relationships between words and phrases.

* The masked format replaces specific phrases with generic placeholders like "[MSK1]" and "[MSK2]".

* The transformed premise and hypothesis are fed into a pretrained NLI model for classification.
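
The span-linking idea (link two word spans when their cosine similarity exceeds a threshold) can be illustrated with a toy example. The diagram does not say which embedding or threshold is used, so this sketch substitutes bag-of-words count vectors and an arbitrary threshold of 0.6; both are assumptions for illustration only.

```python
import math
from collections import Counter
from itertools import combinations

def cosine(a, b):
    """Cosine similarity between bag-of-words count vectors (a stand-in embedding)."""
    va, vb = Counter(a.split()), Counter(b.split())
    dot = sum(va[w] * vb[w] for w in va)
    norm = (math.sqrt(sum(c * c for c in va.values()))
            * math.sqrt(sum(c * c for c in vb.values())))
    return dot / norm if norm else 0.0

def link_spans(spans, threshold):
    """Return every pair of spans whose similarity exceeds the threshold."""
    return [(a, b) for a, b in combinations(spans, 2) if cosine(a, b) > threshold]

# Spans from the lemmatized premise; "feel sick" occurs once in each sentence.
spans = ["eat greasy food", "feel sick", "feel sick", "have greasy food"]
links = link_spans(spans, threshold=0.6)
print(links)
```

With these toy vectors, "eat greasy food" and "have greasy food" share two of three words (similarity 2/3) and are linked, while neither is linked to "feel sick".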

### Interpretation

The diagram describes a method for analyzing logical fallacies in natural language. The key idea is to convert the original statements into a more structured, logic-aware representation before feeding them to a machine learning model. This involves identifying coreferences, simplifying the language, and masking specific parts of the sentence. By doing so, the model can focus on the underlying logical structure rather than the specific words used. The NLI model then classifies whether the input sentence exhibits a particular type of logical fallacy. The highlighting of phrases and the connecting lines visually emphasize the relationships and transformations occurring during the process.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Structure-Aware Premise and Hypothesis Flow

### Overview

This diagram illustrates a process for converting a natural language premise and hypothesis into logic-aware forms, which a pretrained Natural Language Inference (NLI) model then uses to identify logical fallacies. The diagram is split into two main columns: "Structure-Aware Premise" on the left and "Structure-Aware Hypothesis" on the right, both feeding into a central "Pretrained NLI Model" component.

### Components/Axes

The diagram consists of several text blocks within rectangular boxes, connected by arrows indicating the flow of information. Key components include:

* **Original Premise:** A natural language statement.

* **Given that…:** The second sentence of the original premise, which draws the conclusion.

* **Coreference resolution + Lemmatization + Stop word removal + Calculating cosine similarity between all word spans, and linking the word spans with a cosine similarity greater than the threshold:** A description of the processing steps.

* **Final masked format:** The output of the processing steps.

* **Logic-Aware Premise:** A masked version of the premise.

* **Original Hypothesis:** A natural language statement.

* **Logic-Aware Hypothesis:** A logical form of the hypothesis.

* **Pretrained NLI Model:** The core component performing the classification.

* **[Classification] Whether the input sentence has the given type of the logical fallacy:** The output of the NLI model.

### Detailed Analysis

**Structure-Aware Premise (Left Column):**

* **Original Premise:** "If Joe eats greasy food, he will feel sick."

* **Given that:** "Given now that Joe feels sick, therefore, Joe must have had greasy food."

* **Processing Steps:** "Coreference resolution + Lemmatization + Stop word removal + Calculating cosine similarity between all word spans, and linking the word spans with a cosine similarity greater than the threshold."

* **Intermediate Result:** "If Coref1 eat greasy food, Coref1 will feel sick."

* **Given that:** "Given now that Coref1 feel sick, therefore, Coref1 must have have greasy food." (The doubled "have" results from lemmatizing "had".)

* **Final masked format:** "If Person1 [MSK1], Person1 will [MSK2]. Given now that Person1 [MSK2], therefore, Person1 must have [MSK1]."

**Structure-Aware Hypothesis (Right Column):**

* **Original Hypothesis:** "This is an example of deductive fallacies (affirming the consequent)."

* **Replace the label name with the logical form of the fallacy:** (Implied action)

* **Logic-Aware Hypothesis:** "This example matches the following logical form: 'If [MSK1], then [MSK2]' leads to 'If [MSK2], then [MSK1]'."

**Central Component:**

* **Pretrained NLI Model:** Receives input from both the Structure-Aware Premise and Structure-Aware Hypothesis.

* **Output:** "[Classification] Whether the input sentence has the given type of the logical fallacy."

### Key Observations

The diagram highlights a transformation process. Natural language statements are converted into a masked, logic-aware format. The use of "Coref1" and "Person1" suggests a coreference resolution step. The masking with "[MSK1]" and "[MSK2]" indicates a generalization or abstraction of the original statements. The diagram emphasizes the use of an NLI model for classifying logical fallacies.

### Interpretation

The diagram demonstrates a method for identifying logical fallacies in natural language using a combination of linguistic processing and machine learning. The process involves converting natural language into a more structured, logic-based representation that can be readily analyzed by an NLI model. The coreference resolution, lemmatization, and stop word removal steps aim to reduce noise and improve the accuracy of the analysis. The masking of specific terms with "[MSK1]" and "[MSK2]" suggests an attempt to generalize the logical structure of the statements, allowing the model to identify fallacies across different contexts. The final classification step determines whether the input sentence exhibits a specific type of logical fallacy. The diagram suggests a pipeline for automated fallacy detection, potentially useful in areas like argument mining, critical thinking education, and misinformation detection. The use of "affirming the consequent" as an example suggests the system is designed to identify common deductive fallacies.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Structure-Aware Logical Fallacy Detection Process

### Overview

The image is a technical flowchart illustrating a two-path process for transforming natural language text into a standardized, logic-aware format for input into a Pretrained Natural Language Inference (NLI) model. The goal is to classify whether an input sentence contains a specific type of logical fallacy. The diagram is divided into two main vertical columns: "Structure-Aware Premise" on the left and "Structure-Aware Hypothesis" on the right, which converge at the bottom into a central model.

### Components/Axes

The diagram has no traditional chart axes. Its components are text boxes, arrows, and a central processing node.

**1. Main Columns:**

* **Left Column Header:** "Structure-Aware Premise" (blue background, white text).

* **Right Column Header:** "Structure-Aware Hypothesis" (blue background, white text).

**2. Central Processing Node:**

* A light blue oval at the bottom center labeled "Pretrained NLI Model".

* An arrow points from this oval to a final output box.

**3. Final Output:**

* A light blue box at the very bottom labeled: "[Classification] Whether the input sentence has the given type of the logical fallacy."

**4. Flow Arrows:** Grey arrows indicate the direction of data transformation and flow between steps.

### Detailed Analysis

#### **Left Column: Structure-Aware Premise**

This column details the transformation of a premise statement.

* **Step 1: Original Premise**

* **Text:** "If Joe eats greasy food, he will feel sick. Given now that Joe feels sick, therefore, Joe must have had greasy food."

* **Note:** The words "Joe" (first instance), "he", "Joe" (second instance), "Joe" (third instance), and "had" are highlighted in yellow.

* **Step 2: Transformation Process (Arrow Label)**

* **Text:** "Coreference resolution + Lemmatization + Stop word removal + Calculating cosine similarity between all word spans, and linking the word spans with a cosine similarity greater than the threshold."

* **Step 3: Intermediate Output**

* **Text:** "If Coref1 eat greasy food, Coref1 will feel sick. Given now that Coref1 feel sick, therefore, Coref1 must have have greasy food."

* **Note:** "Coref1" (all instances), "eat", "feel sick" (first instance), "feel sick" (second instance), and "have" are highlighted in yellow. This shows the result of coreference resolution (replacing "Joe"/"he" with "Coref1") and lemmatization (e.g., "eats" -> "eat", "had" -> "have").

* **Step 4: Final Transformation (Arrow Label)**

* **Text:** "Final masked format"

* **Step 5: Logic-Aware Premise**

* **Text:** "If Person1 [MSK1], Person1 will [MSK2]. Given now that Person1 [MSK2], therefore, Person1 must have [MSK1]."

* **Note:** "Person1" (all instances), "[MSK1]" (both instances), and "[MSK2]" (both instances) are highlighted in green. This is the final, abstracted format where specific actions/states are replaced with generic masks ([MSK1], [MSK2]) and the entity is generalized to "Person1".
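
The coreference and lemmatization steps can be mimicked on this one example with hand-written lookup tables. This is only a toy sketch: a real pipeline would presumably use an NLP library for both steps, and the tables `COREF_CLUSTER` and `LEMMAS` below are assumptions covering just the words in the diagram.

```python
import re

# Hand-written stand-ins (assumptions) for a coreference resolver and a lemmatizer.
COREF_CLUSTER = {"Joe": "Coref1", "he": "Coref1"}
LEMMAS = {"eats": "eat", "feels": "feel", "had": "have"}

def normalize(text):
    """Replace mentions with their cluster token and inflected verbs with lemmas."""
    def swap(match):
        word = match.group(0)
        return COREF_CLUSTER.get(word) or LEMMAS.get(word, word)
    # Match whole alphabetic tokens so "he" inside "therefore" is left alone.
    return re.sub(r"[A-Za-z]+", swap, text)

original = (
    "If Joe eats greasy food, he will feel sick. "
    "Given now that Joe feels sick, therefore, Joe must have had greasy food."
)
normalized = normalize(original)
print(normalized)
```

Lemmatizing "had" to "have" is what produces the doubled "have have" seen in the intermediate output.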

#### **Right Column: Structure-Aware Hypothesis**

This column details the transformation of a hypothesis statement that labels the fallacy.

* **Step 1: Original Hypothesis**

* **Text:** "This is an example of deductive fallacies (affirming the consequent)."

* **Note:** The phrase "deductive fallacies (affirming the consequent)" is highlighted in blue.

* **Step 2: Transformation Process (Arrow Label)**

* **Text:** "Replace the label name with the logical form of the fallacy."

* **Step 3: Logic-Aware Hypothesis**

* **Text:** "This example matches the following logical form: "If [MSK1], then [MSK2]" leads to "If [MSK2], then [MSK1]"."

* **Note:** The entire logical form string is highlighted in green. This step replaces the specific fallacy name with its abstract logical structure, using the same mask tokens ([MSK1], [MSK2]) as the premise path.

#### **Convergence and Classification**

* Two grey arrows, one from the "Logic-Aware Premise" box and one from the "Logic-Aware Hypothesis" box, point to the "Pretrained NLI Model" oval.

* This indicates that the two standardized, logic-aware texts are fed as a pair into the NLI model.

* The model's output is the final classification decision.
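
The pairing step can be sketched as an interface in which the NLI model is a pluggable scoring function. Everything here is hypothetical: `has_fallacy`, the 0.5 cutoff, and in particular `stub_scorer`, which is a placeholder that only checks for shared mask tokens and does not perform any inference. How the actual system turns NLI output into a decision is not stated in the diagram.

```python
from typing import Callable

def has_fallacy(premise: str, hypothesis: str,
                entailment_prob: Callable[[str, str], float],
                cutoff: float = 0.5) -> bool:
    """Return True when the scorer rates the (premise, hypothesis) pair above the cutoff."""
    return entailment_prob(premise, hypothesis) > cutoff

def stub_scorer(premise: str, hypothesis: str) -> float:
    # Placeholder only: a real system would call a pretrained NLI model here.
    # Returns 1.0 when both texts mention both mask tokens, else 0.0.
    masks = ("[MSK1]", "[MSK2]")
    return 1.0 if all(m in premise and m in hypothesis for m in masks) else 0.0

premise = ("If Person1 [MSK1], Person1 will [MSK2]. "
           "Given now that Person1 [MSK2], therefore, Person1 must have [MSK1].")
hypothesis = ('This example matches the following logical form: '
              '"If [MSK1], then [MSK2]" leads to "If [MSK2], then [MSK1]".')
decision = has_fallacy(premise, hypothesis, stub_scorer)
print(decision)
```

Passing the scorer as an argument keeps the sketch independent of any particular model or library.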

### Key Observations

1. **Parallel Transformation:** The process applies analogous transformations to both the premise (the example text) and the hypothesis (the fallacy label), converting them into a shared, abstract language of masks ([MSK1], [MSK2]) and generic entities ("Person1").

2. **Color-Coding:** The diagram uses color highlights to track elements through transformation: yellow for original content being processed, blue for the original fallacy label, and green for the final masked/logical form elements.

3. **Standardization Goal:** The entire pipeline aims to remove lexical and coreference variability, presenting the NLI model with a pure logical structure to evaluate.

4. **Spatial Layout:** The left (premise) path is more complex, involving multiple NLP steps. The right (hypothesis) path is a single substitution step. Both are given equal weight, feeding centrally into the model.

### Interpretation

This diagram outlines a methodology for improving the detection of logical fallacies in text using pretrained NLI models. The core innovation is a **structure-aware preprocessing pipeline** that decouples the logical form from the specific wording.

* **What it demonstrates:** It shows how to convert a real-world example of a fallacy ("affirming the consequent") and its definition into a canonical form. By replacing specific actions ("eat greasy food", "feel sick") with masks ([MSK1], [MSK2]) and resolving pronouns, the system forces the model to focus on the flawed logical pattern ("If P then Q; Q; therefore P") rather than the content.

* **How elements relate:** The "Premise" path provides the *instance* of reasoning to be judged. The "Hypothesis" path provides the *abstract pattern* of the fallacy. The NLI model is then tasked with determining whether the instance matches the pattern, which is arguably a more straightforward inference problem than detecting the fallacy in raw text.

* **Significance:** This approach likely makes fallacy detection more robust and generalizable. A model trained on such paired, logic-aware examples should be better at identifying the same fallacy in different contexts (e.g., "If it rains, the ground is wet. The ground is wet. Therefore, it rained.") because it has learned the abstract structure, not just keyword associations. The "Pretrained NLI Model" is leveraged for its understanding of textual entailment, repurposed here for logical form matching.
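
To see why "If P then Q; Q; therefore P" is a flawed pattern, one can enumerate all truth assignments and look for a case where both premises hold but the conclusion fails. The short check below does this and contrasts the pattern with modus ponens; the variable names are illustrative.

```python
from itertools import product

def implies(p, q):
    """Material implication: P -> Q."""
    return (not p) or q

# Affirming the consequent: from (P -> Q) and Q, conclude P.
# A counterexample is an assignment where the premises hold but P is false.
counterexamples = [
    (p, q) for p, q in product([True, False], repeat=2)
    if implies(p, q) and q and not p
]

# Modus ponens for contrast: from (P -> Q) and P, conclude Q.
modus_ponens_counterexamples = [
    (p, q) for p, q in product([True, False], repeat=2)
    if implies(p, q) and p and not q
]

print(counterexamples)                # [(False, True)]
print(modus_ponens_counterexamples)   # []
```

The single counterexample (P false, Q true) corresponds to Joe feeling sick for some reason other than greasy food.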

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Structure-Aware NLI Model Pipeline

### Overview

The diagram illustrates a pipeline for processing natural language inputs through a pretrained Natural Language Inference (NLI) model. It demonstrates how raw text is transformed into structured formats for logical fallacy classification, focusing on two key components: **Structure-Aware Premise** and **Structure-Aware Hypothesis**, with a final classification task.

---

### Components/Axes

1. **Structure-Aware Premise**

- **Original Premise**: "If Joe eats greasy food, he will feel sick."

- **Given Statement**: "Given now that Joe feels sick, therefore, Joe must have had greasy food."

- **Processing Steps**:

- Coreference resolution + Lemmatization + Stop word removal

- Calculating cosine similarity between word spans

- Threshold-based linking (cosine similarity > threshold)

- **Transformed Premise**: "If Coref1 eat greasy food, Coref1 will feel sick. Given now that Coref1 feel sick, therefore, Coref1 must have have greasy food."

- **Logic-Aware Premise (final masked format)**: "If Person1 [MSK1], Person1 will [MSK2]. Given now that Person1 [MSK2], therefore, Person1 must have [MSK1]."

2. **Structure-Aware Hypothesis**

- **Original Hypothesis**: "This is an example of deductive fallacies (affirming the consequent)."

- **Logical Form Transformation**:

- Replace the label name with the logical form of the fallacy: "If [MSK1], then [MSK2]" leads to "If [MSK2], then [MSK1]".

- **Logic-Aware Hypothesis**: "This example matches the following logical form: 'If [MSK1], then [MSK2]' leads to 'If [MSK2], then [MSK1]'."

3. **Pretrained NLI Model**

- **Classification Task**: Determine whether the input sentence has the given type of logical fallacy (e.g., affirming the consequent).

---

### Detailed Analysis

#### Structure-Aware Premise

- **Original Premise**: Directly quoted as "If Joe eats greasy food, he will feel sick."

- **Given Statement**: Directly quoted as "Given now that Joe feels sick, therefore, Joe must have had greasy food."

- **Processing Steps**:

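- Coreference resolution replaces "Joe" and "he" with "Coref1."

- Lemmatization reduces inflected verbs to their base forms (e.g., "eats" → "eat", "had" → "have"); stop word removal is listed alongside it as a simplification step.

- Word spans whose cosine similarity exceeds the threshold are linked (e.g., "eat greasy food" ↔ "have greasy food", "feel sick" ↔ "feel sick").

- **Final Masked Format**: Replaces each group of linked spans with a shared placeholder (`[MSK1]`, `[MSK2]`) and the resolved entity with "Person1".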

#### Structure-Aware Hypothesis

- **Original Hypothesis**: Labels the example as a deductive fallacy ("affirming the consequent").

- **Logical Form**: Transforms the hypothesis into a structured logical statement: "If [MSK1], then [MSK2]" leads to "If [MSK2], then [MSK1]".

- **Key Insight**: Demonstrates how the model identifies and categorizes logical fallacies by mapping natural language to formal logic.

#### Pretrained NLI Model

- **Classification**: The model evaluates whether an input sentence aligns with a predefined logical fallacy type (e.g., deductive fallacy).

- **Input**: Masked sentences (e.g., "Given that Person1 [MSK2], therefore Person1 must have [MSK1]").

---

### Key Observations

1. **Coreference and Simplification**: The pipeline replaces every mention of the same entity (e.g., "Joe", "he") with a shared token ("Coref1") and lemmatizes the text to focus on semantic relationships.

2. **Logical Form Mapping**: The hypothesis section explicitly links natural language to formal logic (e.g., "If A, then B" → "If [MSK1], then [MSK2]").

3. **Masked Placeholders**: `[MSK1]` and `[MSK2]` stand in for the linked word spans (here, actions and states), so the model sees the logical structure rather than the specific content.

4. **Threshold-Based Linking**: Cosine similarity thresholds ensure only semantically relevant word spans are connected.

---

### Interpretation

This diagram outlines a framework for using a pretrained NLI model to detect logical fallacies in text. By transforming raw sentences into structured formats (masked premises and hypotheses), the pipeline lets the model classify inputs based on whether they match a formal logical pattern. Coreference resolution and threshold-based span linking are intended to reduce sensitivity to variations in natural language expression. If the approach generalizes across diverse inputs, it could be applicable to scenarios such as legal document analysis or automated fact-checking.
