
## Diagram: Structure-Aware Premise to Logic-Aware Hypothesis Flow

### Overview

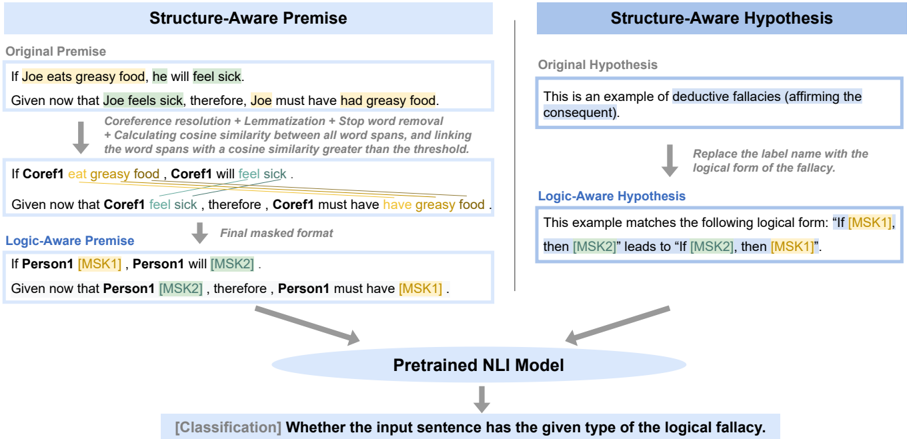

This diagram illustrates a pipeline that rewrites a natural-language argument (the premise) and a fallacy label (the hypothesis) into structure-aware, masked forms. A pretrained Natural Language Inference (NLI) model then uses these forms to decide whether the argument contains a given logical fallacy. The diagram has two main columns, "Structure-Aware Premise" on the left and "Structure-Aware Hypothesis" on the right, both feeding into a central "Pretrained NLI Model" component.

### Components/Axes

The diagram consists of several text blocks within rectangular boxes, connected by arrows indicating the flow of information. Key components include:

* **Original Premise:** A natural language statement.

* **Given that…:** The second sentence of the argument, which draws a conclusion from the original premise.

* **Coreference resolution + Lemmatization + Stop word removal + Calculating cosine similarity between all word spans, and linking the word spans with a cosine similarity greater than the threshold:** A description of the processing steps.

* **Final masked format:** The output of the processing steps.

* **Logic-Aware Premise:** A masked version of the premise.

* **Original Hypothesis:** A natural language statement.

* **Logic-Aware Hypothesis:** A logical form of the hypothesis.

* **Pretrained NLI Model:** The core component performing the classification.

* **[Classification] Whether the input sentence has the given type of the logical fallacy:** The output of the NLI model.
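
The linking step in the processing box can be written as a thresholded cosine similarity. In the notation below, which is ours rather than the diagram's, $s_i$ and $s_j$ are word spans, $\mathbf{u}_i$ and $\mathbf{u}_j$ are their vector representations, and $\tau$ is the threshold. The diagram does not say how spans are vectorized or what $\tau$ is. As a worked example, assuming plain bag-of-words count vectors after lemmatization and stop-word removal, "eat greasy food" and "greasy food" share two words:

$$
\cos(\mathbf{u}_i, \mathbf{u}_j) = \frac{\mathbf{u}_i \cdot \mathbf{u}_j}{\lVert \mathbf{u}_i \rVert \, \lVert \mathbf{u}_j \rVert},
\qquad
s_i \sim s_j \iff \cos(\mathbf{u}_i, \mathbf{u}_j) > \tau
$$

$$
\cos(\text{eat greasy food},\ \text{greasy food}) = \frac{2}{\sqrt{3}\,\sqrt{2}} = \frac{2}{\sqrt{6}} \approx 0.816
$$

With an illustrative threshold such as $\tau = 0.5$, the two spans are linked. "Feel sick" shares no words with either span, so its similarity to them is 0.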

### Detailed Analysis or Content Details

**Structure-Aware Premise (Left Column):**

* **Original Premise:** "If Joe eats greasy food, he will feel sick."

* **Given that:** "Given now that Joe feels sick, therefore, Joe must have had greasy food."

* **Processing Steps:** "Coreference resolution + Lemmatization + Stop word removal + Calculating cosine similarity between all word spans, and linking the word spans with a cosine similarity greater than the threshold."

* **Intermediate Result:** "If Coref1 eat greasy food, Coref1 will feel sick."

* **Given that:** "Given now that Coref1 feel sick, therefore, Coref1 must have greasy food."

* **Final masked format:** "If Person1 [MSK1], Person1 will [MSK2]. Given now that Person1 [MSK2], therefore, Person1 must have [MSK1]."
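
The left column can be sketched end to end in Python. This is a minimal, self-contained illustration under heavy assumptions, not the authors' implementation. Coreference resolution is replaced by a hand-supplied list of mentions, and lemmatization by a tiny lookup table (`LEMMAS`). The stop-word list (`STOP_WORDS`) is a toy. Candidate spans are passed in rather than enumerated, and spans are compared as bag-of-words vectors against an illustrative threshold of 0.5. All function and variable names are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical toy resources; a real pipeline would use a coreference model,
# a proper lemmatizer, and a full stop-word list.
LEMMAS = {"eats": "eat", "feels": "feel", "had": "have"}
STOP_WORDS = {"if", "will", "given", "now", "that", "therefore", "must", "have"}


def resolve_coreference(text, mentions, label="Coref1"):
    """Replace each hand-supplied mention of one entity with a shared label."""
    for mention in mentions:
        text = re.sub(rf"\b{re.escape(mention)}\b", label, text)
    return text


def lemmatize(text):
    """Map each alphabetic word to its lemma using the toy LEMMAS table."""
    return re.sub(r"[A-Za-z]+", lambda m: LEMMAS.get(m.group(0), m.group(0)), text)


def bag_of_words(span):
    """Lowercased word counts of a span, with stop words removed."""
    return Counter(w for w in span.lower().split() if w not in STOP_WORDS)


def cosine(a, b):
    """Cosine similarity of two word-count vectors (0.0 if either is empty)."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def mask_spans(text, spans, threshold=0.5):
    """Greedily group spans whose similarity exceeds the threshold, then mask each group."""
    groups = []
    for span in spans:
        for group in groups:
            if any(cosine(bag_of_words(span), bag_of_words(other)) > threshold for other in group):
                group.append(span)
                break
        else:
            groups.append([span])
    # Number the masks by where each group first appears in the text.
    groups.sort(key=lambda g: min(text.find(s) for s in g))
    replacements = [(s, f"[MSK{i}]") for i, g in enumerate(groups, 1) for s in g]
    # Replace longer spans first so a short span is never masked inside a longer one.
    for span, mask in sorted(replacements, key=lambda r: -len(r[0])):
        text = text.replace(span, mask)
    return text


def structure_aware_premise(text, mentions, spans, threshold=0.5):
    """Coreference -> lemmatization -> span linking and masking -> entity renaming."""
    text = mask_spans(lemmatize(resolve_coreference(text, mentions)), spans, threshold)
    return text.replace("Coref1", "Person1")


PREMISE = ("If Joe eats greasy food, he will feel sick. Given now that Joe feels sick, "
           "therefore, Joe must have had greasy food.")
SPANS = ["eat greasy food", "feel sick", "have greasy food"]
print(structure_aware_premise(PREMISE, ["Joe", "he"], SPANS))
```

On the example premise, this sketch reproduces the final masked format shown above. The toy lemmatizer's intermediate text differs slightly from the diagram's ("must have have greasy food"), but the "have greasy food" span absorbs the duplicate. A production system would more likely compare contextual embeddings of every span pair instead of bag-of-words vectors over hand-picked spans.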

**Structure-Aware Hypothesis (Right Column):**

* **Original Hypothesis:** "This is an example of deductive fallacies (affirming the consequent)."

* **Replace the label name with the logical form of the fallacy:** The transformation applied to the original hypothesis to produce the logic-aware hypothesis.

* **Logic-Aware Hypothesis:** "This example matches the following logical form: 'If [MSK1], then [MSK2]' leads to '[MSK2], then [MSK1]'."
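
The right column's step, replacing the fallacy's label name with its logical form, amounts to a template lookup. The following is a minimal sketch built on a hypothetical `LOGICAL_FORMS` table keyed by label. Only the entry shown in the diagram is filled in, and other fallacy types would need their own logical forms.

```python
# Hypothetical table mapping a fallacy label to its logical form. Only the
# entry from the diagram is given; other fallacy types would need their own.
LOGICAL_FORMS = {
    "affirming the consequent": "'If [MSK1], then [MSK2]' leads to '[MSK2], then [MSK1]'",
}


def original_hypothesis(label: str, category: str = "deductive fallacies") -> str:
    """Label-based hypothesis, as in the diagram's 'Original Hypothesis' box."""
    return f"This is an example of {category} ({label})."


def logic_aware_hypothesis(label: str) -> str:
    """Swap the label name for its logical form, reusing the premise's mask tokens."""
    return f"This example matches the following logical form: {LOGICAL_FORMS[label]}."


print(original_hypothesis("affirming the consequent"))
print(logic_aware_hypothesis("affirming the consequent"))
```

Because the logic-aware hypothesis reuses the same `[MSK1]` and `[MSK2]` tokens as the masked premise, the NLI model can presumably align the two by mask identity rather than by topic words.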

**Central Component:**

* **Pretrained NLI Model:** Receives input from both the Structure-Aware Premise and Structure-Aware Hypothesis.

* **Output:** "[Classification] Whether the input sentence has the given type of the logical fallacy."
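
The central step pairs the logic-aware premise with the logic-aware hypothesis and asks the NLI model whether the first entails the second. The diagram does not specify how the model's output becomes a yes/no answer. The sketch below assumes the scorer returns probabilities over the standard entailment/neutral/contradiction labels, and it treats an entailment probability above a hypothetical `threshold` of 0.5 as "the fallacy is present". `NLIScorer` and `stub_nli` are placeholders for a real pretrained model, not an actual library API.

```python
from typing import Callable, Dict

# Assumed interface: (premise, hypothesis) -> probabilities over NLI labels.
NLIScorer = Callable[[str, str], Dict[str, float]]


def has_fallacy(premise: str, hypothesis: str, nli: NLIScorer, threshold: float = 0.5) -> bool:
    """True if the model judges that the premise entails the fallacy hypothesis."""
    probs = nli(premise, hypothesis)
    return probs["entailment"] > threshold


def stub_nli(premise: str, hypothesis: str) -> Dict[str, float]:
    """Placeholder standing in for a pretrained NLI model; returns fixed scores."""
    return {"entailment": 0.8, "neutral": 0.15, "contradiction": 0.05}


premise = ("If Person1 [MSK1], Person1 will [MSK2]. Given now that Person1 [MSK2], "
           "therefore, Person1 must have [MSK1].")
hypothesis = ("This example matches the following logical form: "
              "'If [MSK1], then [MSK2]' leads to '[MSK2], then [MSK1]'.")
print(has_fallacy(premise, hypothesis, stub_nli))  # True with the stub's fixed scores
```

To use a real model, `stub_nli` would be replaced by a function that runs a pretrained NLI checkpoint on the pair and applies a softmax to its logits. Taking the argmax label instead of thresholding is an equally plausible reading of the diagram.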

### Key Observations

The diagram highlights a transformation process in which natural-language statements are converted into a masked, logic-aware format. Coreference resolution links "Joe" and "he" to a single entity, first labeled "Coref1" and renamed "Person1" in the final masked format. The masks "[MSK1]" and "[MSK2]" abstract away the content of the linked word spans: "[MSK1]" replaces the spans about eating greasy food, and "[MSK2]" replaces "feel sick". Classification of the logical fallacy is left to the NLI model.
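
The abstraction can be made concrete with a toy check that inspects only the order of the mask tokens. In the masked argument, the conditional mentions `[MSK1]` before `[MSK2]` and the conclusion reverses them, which is the shape of affirming the consequent. This is a hypothetical rule-based contrast, not part of the diagrammed method, which delegates the judgment to the NLI model. The negation list and sentence splitting are simplistic assumptions. The negation guard exists only so that modus tollens, a valid form that also reverses the order, is not flagged.

```python
import re

# Toy negation list (assumption); real text needs far more careful handling.
NEGATIONS = {"not", "no", "never"}


def mask_order(sentence):
    """Mask tokens in the order they appear in one sentence."""
    return re.findall(r"\[MSK\d+\]", sentence)


def matches_affirming_consequent(masked_text):
    """Toy check for 'If [A], [B]. Given [B], therefore [A].' in a masked argument."""
    sentences = [s for s in re.split(r"(?<=\.)\s+", masked_text.strip()) if s]
    if len(sentences) != 2 or not sentences[0].lower().startswith("if "):
        return False
    conditional = mask_order(sentences[0])
    conclusion = mask_order(sentences[1])
    words = set(re.findall(r"[a-z']+", masked_text.lower()))
    return (
        len(conditional) == 2
        and conditional[0] != conditional[1]
        and conclusion == conditional[::-1]  # consequent affirmed, antecedent concluded
        and not (words & NEGATIONS)  # excludes negated forms such as modus tollens
    )


masked = ("If Person1 [MSK1], Person1 will [MSK2]. Given now that Person1 [MSK2], "
          "therefore, Person1 must have [MSK1].")
print(matches_affirming_consequent(masked))  # True
```

A rule like this breaks as soon as the wording drifts from the template, which is one motivation for handing the masked pair to an NLI model instead.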

### Interpretation

The diagram demonstrates a method for identifying logical fallacies in natural language by combining linguistic preprocessing with a pretrained NLI model. The argument is converted into a more structured, logic-based representation that the NLI model can compare against a hypothesis stating the fallacy's logical form. Coreference resolution, lemmatization, and stop-word removal normalize surface variation so that related spans, such as "eat greasy food" and "greasy food", can be linked by cosine similarity. Masking the linked spans with "[MSK1]" and "[MSK2]" generalizes the logical structure of the argument, which should let the model recognize the same fallacy across different topics. The final classification step decides whether the input exhibits the given type of logical fallacy.

Such a pipeline could support automated fallacy detection in areas like argument mining, critical-thinking education, and misinformation detection. The example uses affirming the consequent, whose logical form ("If A, then B" leads to "B, then A") is spelled out in the logic-aware hypothesis. This suggests the system targets common deductive fallacies whose forms can be written out explicitly.