## Diagram: Structure-Aware NLI Model Pipeline

### Overview

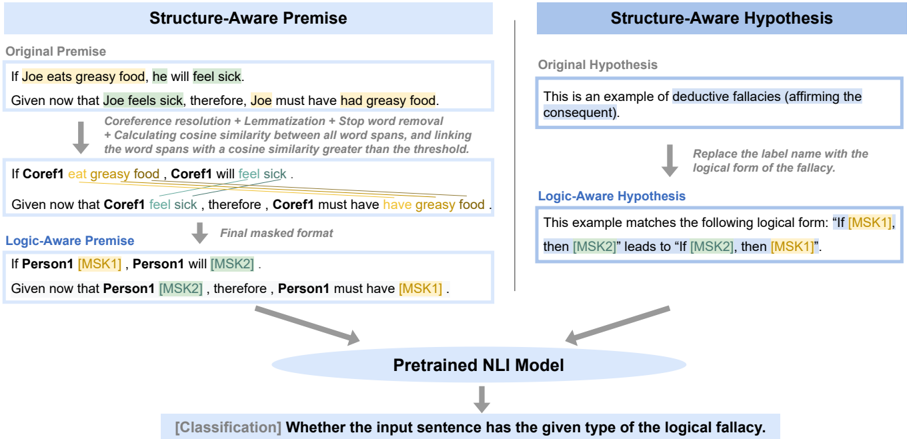

The diagram illustrates a pipeline that reformats a natural-language argument before passing it to a pretrained Natural Language Inference (NLI) model for logical fallacy classification. Two components build the model's inputs, the **Structure-Aware Premise** and the **Structure-Aware Hypothesis**, and the NLI model then scores each premise-hypothesis pair.

---

### Components

1. **Structure-Aware Premise**

   - **Original Premise**: "If Joe eats greasy food, he will feel sick."

   - **Given Statement**: "Given that Joe feels sick, therefore Joe must have had greasy food." Together with the premise, this sentence completes the argument.

   - **Processing Steps**:

     - Coreference resolution, lemmatization, and stop-word removal

     - Cosine similarity computed between word spans

     - Threshold-based linking (spans are linked when their cosine similarity exceeds a threshold)

   - **Transformed Premise**: "If Coref1 eat greasy food, Coref1 will feel sick."

   - **Logic-Aware Premise**: "If Person1 [MSK1], Person1 will [MSK2]."

   - **Final Masked Format** (the masked Given Statement): "Given that Person1 [MSK2], therefore Person1 must have [MSK1]."

2. **Structure-Aware Hypothesis**

   - **Original Hypothesis**: "This is an example of deductive fallacies (affirming the consequent)."

   - **Logical Form Transformation**:

     - Replace the label name with its logical form: "If [MSK1], then [MSK2] leads to [MSK1]."

   - **Logic-Aware Hypothesis**: "This example matches the following logical form: 'If [MSK1], then [MSK2] leads to [MSK1].'"

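3. **Pretrained NLI Model**

   - **Classification Task**: Given the logic-aware premise and a logic-aware hypothesis, decide whether the premise entails the hypothesis, i.e., whether the argument matches that fallacy type's logical form (e.g., deductive fallacy).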
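The normalization steps of the Structure-Aware Premise (coreference resolution, lemmatization, stop-word removal) can be sketched in Python as follows. This is a toy illustration under stated assumptions, not the pipeline's actual implementation: the coreference clusters are passed in by hand instead of coming from a coreference model, and `LEMMAS` and `STOP_WORDS` are small hypothetical tables chosen only to cover this one example.

```python
import re

# Hypothetical toy resources for this one example; a real pipeline would use a
# coreference model, a full lemmatizer, and a standard stop-word list.
LEMMAS = {"eats": "eat", "feels": "feel"}
STOP_WORDS = {"if", "will", "given", "that", "therefore", "must", "have", "had"}


def resolve_coreference(text: str, clusters: list[list[str]]) -> str:
    """Replace every mention in the i-th cluster with the placeholder Coref<i>."""
    for i, mentions in enumerate(clusters, start=1):
        pattern = r"\b(?:" + "|".join(re.escape(m) for m in mentions) + r")\b"
        text = re.sub(pattern, f"Coref{i}", text)
    return text


def lemmatize(text: str) -> str:
    """Replace each word found in LEMMAS with its lemma; other words are unchanged."""
    return re.sub(r"[A-Za-z]+", lambda m: LEMMAS.get(m.group(0), m.group(0)), text)


def content_tokens(text: str) -> list[str]:
    """Lowercase, split into alphanumeric words, and drop stop words."""
    return [w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOP_WORDS]


if __name__ == "__main__":
    clusters = [["Joe", "he"]]  # supplied by hand in place of a coreference model
    for sentence in ("If Joe eats greasy food, he will feel sick.",
                     "Given that Joe feels sick, therefore Joe must have had greasy food."):
        normalized = lemmatize(resolve_coreference(sentence, clusters))
        print(normalized, content_tokens(normalized))
```

Applied to the first sentence, `lemmatize(resolve_coreference(...))` yields "If Coref1 eat greasy food, Coref1 will feel sick.", matching the transformed premise. The stop words are dropped only in `content_tokens`, i.e., in the reduced token lists used for comparison, which is consistent with the transformed premise still containing "If" and "will".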

---

### Detailed Analysis

#### Structure-Aware Premise

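- **Original Premise**: "If Joe eats greasy food, he will feel sick." This sentence states the conditional.

- **Given Statement**: "Given that Joe feels sick, therefore Joe must have had greasy food." This is not a rephrasing of the premise: it asserts the consequent ("Joe feels sick") and infers the antecedent ("Joe had greasy food"), which is the affirming-the-consequent pattern.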

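- **Processing Steps**:

  - Coreference resolution links "Joe" and "he" to the same entity and replaces both with "Coref1".

  - Lemmatization reduces inflected forms (e.g., "eats" → "eat"). Stop-word removal drops function words, apparently only for span comparison, since the transformed premise still contains words such as "If" and "will".

  - Spans whose cosine similarity exceeds the threshold are linked, e.g., "feel sick" in the premise with "feels sick" in the given statement, and "eat greasy food" with "had greasy food".

- **Final Masked Format**: Each linked span pair shares one placeholder: `[MSK1]` replaces "eat greasy food"/"had greasy food", and `[MSK2]` replaces "feel sick"/"feels sick". The resolved entity "Coref1" becomes "Person1".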
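A minimal sketch of threshold-based span linking and masking follows. It assumes the text has already been coreference-resolved and lemmatized, and that candidate spans for each sentence are supplied by hand; the diagram does not show how the real pipeline extracts candidate spans. Cosine similarity is computed over bag-of-words counts as a simple stand-in for whatever span representation the pipeline actually uses, and the default threshold of 0.5 is a hypothetical value.

```python
import math
import re
from collections import Counter

# Hypothetical stop-word list, used only for the similarity computation.
STOP_WORDS = {"if", "will", "given", "that", "therefore", "must", "have", "had"}


def bag_of_words(span: str) -> Counter:
    """Lowercased content-word counts for a span (stand-in for a span embedding)."""
    return Counter(w for w in re.findall(r"[a-z0-9]+", span.lower()) if w not in STOP_WORDS)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def link_spans(spans_a: list[str], spans_b: list[str],
               threshold: float = 0.5) -> list[tuple[str, str]]:
    """Pair spans from two sentences whose cosine similarity exceeds the threshold."""
    return [(sa, sb) for sa in spans_a for sb in spans_b
            if cosine(bag_of_words(sa), bag_of_words(sb)) > threshold]


def mask_text(text: str, links: list[tuple[str, str]], entities: dict[str, str]) -> str:
    """Give each linked pair a shared [MSKk] tag, then rename resolved entities."""
    for k, (sa, sb) in enumerate(links, start=1):
        text = text.replace(sa, f"[MSK{k}]").replace(sb, f"[MSK{k}]")
    for old, new in entities.items():
        text = text.replace(old, new)
    return text


if __name__ == "__main__":
    text = ("If Coref1 eat greasy food, Coref1 will feel sick. "
            "Given that Coref1 feel sick, therefore Coref1 must have had greasy food.")
    # Candidate spans per sentence, supplied by hand for this example.
    links = link_spans(["eat greasy food", "feel sick"], ["feel sick", "had greasy food"])
    print(mask_text(text, links, {"Coref1": "Person1"}))
    # If Person1 [MSK1], Person1 will [MSK2]. Given that Person1 [MSK2], therefore Person1 must have [MSK1].
```

Here "eat greasy food" links to "had greasy food" (similarity 2/√6 ≈ 0.82, because "had" is treated as a stop word) and "feel sick" links to its lemmatized counterpart (1.0), so the masked output reproduces the diagram's logic-aware premise. Raising the threshold above 0.82 would leave the food spans unlinked, which shows why the threshold value matters.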

#### Structure-Aware Hypothesis

- **Original Hypothesis**: Names the label directly: a deductive fallacy of the affirming-the-consequent type.

- **Logical Form**: Replaces the label name with a masked logical form, "If [MSK1], then [MSK2] leads to [MSK1].", which uses the same placeholders as the masked premise.

- **Key Insight**: Because the hypothesis shares placeholders with the premise, the NLI model is meant to compare the argument's structure against the fallacy's logical form instead of relying on the label name alone.
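Building the logic-aware hypothesis amounts to string templating. In this sketch, `LOGICAL_FORMS` is a hypothetical lookup table containing only the deductive-fallacy form shown in the diagram; other fallacy types would need their own forms, which the diagram does not give. The exact template wording is likewise an assumption modeled on the diagram's text.

```python
# Hypothetical lookup table: only the deductive-fallacy form shown in the diagram
# is filled in; other fallacy types would need forms the diagram does not give.
LOGICAL_FORMS = {
    "deductive fallacy": "If [MSK1], then [MSK2] leads to [MSK1].",
}


def label_hypothesis(label: str) -> str:
    """Baseline hypothesis that mentions the label name directly."""
    return f"This is an example of {label}."


def logic_aware_hypothesis(label: str) -> str:
    """Hypothesis in which the label name is replaced by the label's logical form."""
    return f"This example matches the following logical form: '{LOGICAL_FORMS[label]}'"


if __name__ == "__main__":
    print(label_hypothesis("deductive fallacy"))
    print(logic_aware_hypothesis("deductive fallacy"))
```

Keeping the forms in a table separate from the template means a new fallacy type only needs a new entry, not a new hypothesis format.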

#### Pretrained NLI Model

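- **Classification**: The model scores whether the logic-aware premise entails the logic-aware hypothesis for a given fallacy type (e.g., deductive fallacy).

- **Input**: A premise-hypothesis pair, e.g., the masked premise "If Person1 [MSK1], Person1 will [MSK2]. Given that Person1 [MSK2], therefore Person1 must have [MSK1]." paired with the logic-aware hypothesis for that fallacy type.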
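One common way to cast classification as NLI is to build one hypothesis per candidate label and pick the label whose hypothesis the premise most strongly entails. The sketch below shows only that selection step, under stated assumptions: `EntailmentScorer` is a hypothetical interface that a real pretrained NLI model would sit behind, and `toy_scorer` is a deliberately crude stand-in (it only checks which mask tags the premise contains) so the example runs without a model. The "placeholder label" candidate is also made up for illustration.

```python
from typing import Callable, Dict

# Hypothetical interface: (premise, hypothesis) -> entailment probability.
# A real system would wrap a pretrained NLI model behind this signature.
EntailmentScorer = Callable[[str, str], float]


def classify(premise: str, hypotheses: Dict[str, str], score: EntailmentScorer) -> str:
    """Return the label whose hypothesis receives the highest entailment score."""
    scores = {label: score(premise, hyp) for label, hyp in hypotheses.items()}
    return max(scores, key=scores.get)


def toy_scorer(premise: str, hypothesis: str) -> float:
    """Toy stand-in for an NLI model (NOT real inference): the fraction of
    mask tags in the hypothesis that also occur in the premise."""
    tags = [t for t in ("[MSK1]", "[MSK2]", "[MSK3]") if t in hypothesis]
    return sum(t in premise for t in tags) / len(tags) if tags else 0.0


if __name__ == "__main__":
    premise = ("If Person1 [MSK1], Person1 will [MSK2]. "
               "Given that Person1 [MSK2], therefore Person1 must have [MSK1].")
    hypotheses = {
        "deductive fallacy": "This example matches the following logical form: "
                             "'If [MSK1], then [MSK2] leads to [MSK1].'",
        # Hypothetical second candidate, included only so there is something to compare.
        "placeholder label": "This example matches the following logical form: '[MSK3]'",
    }
    print(classify(premise, hypotheses, toy_scorer))  # deductive fallacy
```

Passing the scorer in as a function keeps the selection logic independent of any particular NLI model or library.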

---

### Key Observations

1. **Coreference and Simplification**: The pipeline replaces coreferent mentions (e.g., "Joe" and "he") with a single placeholder ("Coref1") and removes stop words to focus on semantic relationships.

2. **Logical Form Mapping**: The hypothesis section explicitly links natural language to formal logic (e.g., "If A, then B" → "If [MSK1], then [MSK2]").

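3. **Masked Placeholders**: `[MSK1]` and `[MSK2]` stand for linked content spans (here, "eat greasy food" and "feel sick"), while "Person1" anonymizes the entity. Abstracting away these specifics lets arguments with the same structure map to the same masked form, which is intended to help the model generalize across inputs.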

4. **Threshold-Based Linking**: Only span pairs whose cosine similarity exceeds the threshold are linked, so spans that are not semantically related do not share a placeholder.

---

### Interpretation

This diagram outlines a framework for using a pretrained NLI model to detect logical fallacies in text. By transforming raw sentences into masked premises and logic-aware hypotheses, it casts fallacy classification as deciding whether an argument entails the logical form associated with a fallacy type. Coreference resolution and cosine-similarity linking are meant to make the masking robust to surface variation in natural language, such as "feel sick" versus "feels sick". The approach is intended to generalize across diverse inputs, which could make it useful for real-world tasks such as legal document analysis or automated fact-checking.