## Text-Based Prompt Template: Top-K Confidence Prompt

### Overview

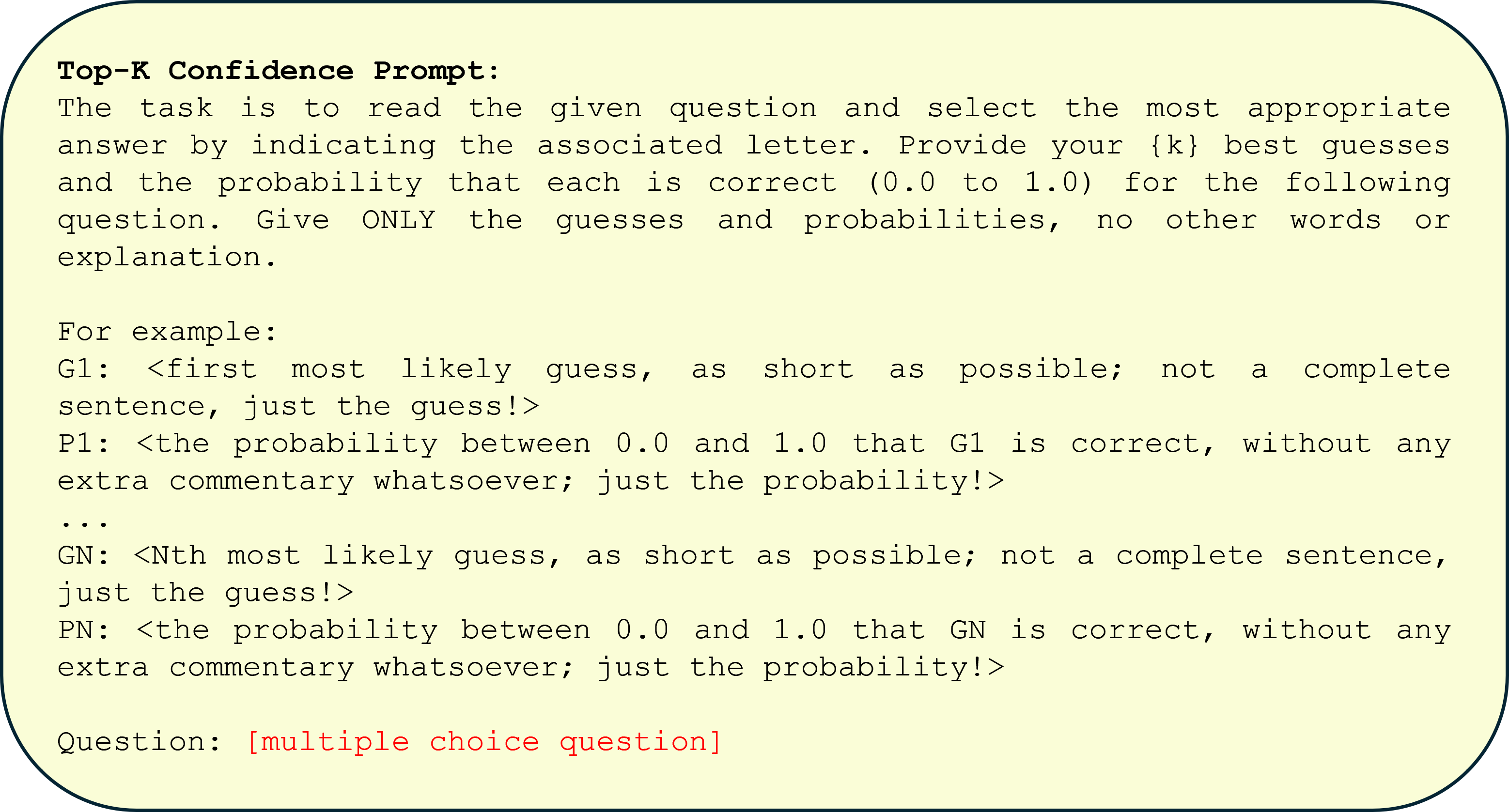

The image shows a prompt template for answering multiple-choice questions. It instructs the respondent (most likely a language model) to return its `{k}` best guesses, each paired with the probability that the guess is correct. The text is set in a monospaced font on a light yellow background with a dark border, resembling a code block or an instruction card.

### Components

The image contains only textual elements, structured as follows:

1. **Title/Header:** "Top-K Confidence Prompt:" (bolded).

2. **Task Description:** A paragraph explaining the core task.

3. **Example Section:** A formatted example showing the required output structure.

4. **Question Placeholder:** A line indicating where the actual question to be answered would be placed.

### Content Details

**Full Transcription of Text:**

```text

Top-K Confidence Prompt:

The task is to read the given question and select the most appropriate answer by indicating the associated letter. Provide your {k} best guesses and the probability that each is correct (0.0 to 1.0) for the following question. Give ONLY the guesses and probabilities, no other words or explanation.

For example:

G1: <first most likely guess, as short as possible; not a complete sentence, just the guess!>

P1: <the probability between 0.0 and 1.0 that G1 is correct, without any extra commentary whatsoever; just the probability!>

...

GN: <Nth most likely guess, as short as possible; not a complete sentence, just the guess!>

PN: <the probability between 0.0 and 1.0 that GN is correct, without any extra commentary whatsoever; just the probability!>

Question: [multiple choice question]

```

**Key Structural Elements:**

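* **Variable Placeholder:** `{k}` appears in the task description as a parameter for the number of guesses to return. The example labels its last pair `GN`/`PN` rather than using `{k}`, so the two notations presumably refer to the same count.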

* **Output Format:** The example defines a strict format:

    * `G1`, `G2`, ... `GN`: Lines for the ranked guesses.

    * `P1`, `P2`, ... `PN`: Lines for the corresponding probabilities.

    * The instructions emphasize brevity ("as short as possible") and prohibit explanatory text.

* **Question Placeholder:** The final line, "Question: [multiple choice question]", is highlighted in red text in the image, marking the insertion point for the actual query.
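To make the two placeholders concrete, here is a minimal sketch of how the template might be filled in. The function name `build_prompt` and the `{question}` marker (standing in for `[multiple choice question]`) are assumptions made for illustration; the image does not show any filling code. The sketch also omits the "Top-K Confidence Prompt:" title, since it is unclear whether that line is part of the text sent to the respondent.

```python
# Template body copied from the transcription above. "{question}" is a
# hypothetical marker that replaces "[multiple choice question]".
TOP_K_TEMPLATE = (
    "The task is to read the given question and select the most appropriate "
    "answer by indicating the associated letter. Provide your {k} best guesses "
    "and the probability that each is correct (0.0 to 1.0) for the following "
    "question. Give ONLY the guesses and probabilities, no other words or "
    "explanation.\n\n"
    "For example:\n\n"
    "G1: <first most likely guess, as short as possible; not a complete "
    "sentence, just the guess!>\n\n"
    "P1: <the probability between 0.0 and 1.0 that G1 is correct, without any "
    "extra commentary whatsoever; just the probability!>\n\n"
    "...\n\n"
    "GN: <Nth most likely guess, as short as possible; not a complete "
    "sentence, just the guess!>\n\n"
    "PN: <the probability between 0.0 and 1.0 that GN is correct, without any "
    "extra commentary whatsoever; just the probability!>\n\n"
    "Question: {question}"
)


def build_prompt(question: str, k: int) -> str:
    """Fill the two template variables (hypothetical helper)."""
    if k < 1:
        raise ValueError("k must be at least 1")
    # str.replace is used instead of str.format so that any braces inside
    # the question text are left untouched.
    return TOP_K_TEMPLATE.replace("{k}", str(k)).replace("{question}", question)


if __name__ == "__main__":
    print(build_prompt("Which planet is largest? (A) Mars (B) Jupiter", k=2))
```

Replacing `{k}` before `{question}` means a literal `{k}` inside the question is never substituted.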

### Key Observations

- **Strict Formatting:** The prompt is highly prescriptive, demanding a specific, minimal output format (only `G#` and `P#` lines).

- **Confidence Quantification:** It explicitly requires the quantification of uncertainty through probabilities (0.0 to 1.0) for each guess.

- **Ranking Mechanism:** The example describes `G1` as the "first most likely guess" and `GN` as the "Nth most likely guess", so the guesses are expected in order from most to least likely.

- **Template Nature:** The text is a generic template, not a specific question. The `{k}` and `[multiple choice question]` are variables to be filled.
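Because the output is restricted to `G#` and `P#` lines, a response can be read with a small line-oriented parser. The sketch below is one possible approach, not something shown in the image; the name `parse_top_k` and the decision to silently skip malformed lines are assumptions.

```python
import re

# Matches lines such as "G1: B" or "P2: 0.25" (leading/trailing spaces allowed).
_LINE = re.compile(r"^\s*([GP])(\d+)\s*:\s*(.+?)\s*$")


def parse_top_k(response: str) -> list[tuple[str, float]]:
    """Return (guess, probability) pairs ordered by their index (hypothetical helper)."""
    guesses: dict[int, str] = {}
    probs: dict[int, float] = {}
    for line in response.splitlines():
        match = _LINE.match(line)
        if match is None:
            continue  # tolerate blank lines and stray text
        kind, index, value = match.group(1), int(match.group(2)), match.group(3)
        if kind == "G":
            guesses[index] = value
        else:
            try:
                prob = float(value)
            except ValueError:
                continue  # e.g. "P1: high" is not a usable probability
            if 0.0 <= prob <= 1.0:
                probs[index] = prob
    # Keep only indices that have both a guess and a valid probability.
    return [(guesses[i], probs[i]) for i in sorted(guesses) if i in probs]


if __name__ == "__main__":
    print(parse_top_k("G1: B\nP1: 0.7\n\nG2: A\nP2: 0.2\n"))
```

A stricter variant could raise an error on any line that does not match, which may be preferable when measuring how often a model violates the format.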

### Interpretation

This image depicts a **prompt engineering template** designed to elicit structured, quantified uncertainty from a language model or respondent. Its purpose is to move beyond a single "best guess" answer and instead capture a ranked distribution of plausible answers with associated confidence scores.

* **What it demonstrates:** It formalizes a method for probing a system's knowledge or reasoning. By asking for multiple ranked guesses and probabilities, it can reveal if the correct answer is among the top contenders even if not first, and how "confused" the system is between options.

* **How elements relate:** The task description sets the goal, the example provides the exact syntactic contract for the response, and the question placeholder is the input. The structure enforces discipline in the output, making it machine-readable and easy to parse or evaluate.

* **Potential Use Cases:** This template is valuable for:

    * **Model Evaluation:** Assessing not just accuracy but the calibration of a model's confidence.

    * **Decision Support:** In scenarios where multiple options are viable, presenting a ranked list with confidence can be more informative than a single answer.

    * **Active Learning:** Identifying questions where the model is uncertain (e.g., probabilities are spread out) to target for human review or additional training.

* **Notable Design Choice:** The instruction to give "no other words or explanation" is critical. It prioritizes clean, structured data over interpretability of the model's reasoning, suggesting the output is intended for automated processing or direct comparison against a ground truth.
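The evaluation and active-learning use cases above can be illustrated with two standard measures. The sketch below computes expected calibration error (ECE) over top-guess confidences and a normalized entropy over one question's reported probabilities. Both are common choices rather than anything the image prescribes, and the function names, the bin count, and the renormalization step are assumptions.

```python
import math


def expected_calibration_error(records, n_bins=10):
    """ECE over (confidence, was_correct) pairs, e.g. one (P1, G1 == answer) per question."""
    bins = [[] for _ in range(n_bins)]
    for conf, correct in records:
        # Equal-width bins over [0, 1]; a confidence of 1.0 goes in the last bin.
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, 1.0 if correct else 0.0))
    total = sum(len(b) for b in bins)
    if total == 0:
        raise ValueError("no records")
    ece = 0.0
    for b in bins:
        if not b:
            continue
        avg_conf = sum(c for c, _ in b) / len(b)
        accuracy = sum(a for _, a in b) / len(b)
        # Weight each bin's confidence/accuracy gap by its share of the data.
        ece += (len(b) / total) * abs(avg_conf - accuracy)
    return ece


def normalized_entropy(probs):
    """Entropy of the renormalized probabilities, scaled to [0, 1]."""
    positive = [p for p in probs if p > 0]
    if len(positive) < 2:
        return 0.0  # all mass on one guess (or none reported)
    total = sum(positive)
    entropy = -sum((p / total) * math.log(p / total) for p in positive)
    return entropy / math.log(len(positive))


if __name__ == "__main__":
    print(expected_calibration_error([(0.9, True), (0.9, False), (0.6, True)]))
    print(normalized_entropy([0.4, 0.35, 0.25]))
```

The reported probabilities need not sum to 1, so the entropy helper renormalizes them first. A value near 1 indicates probabilities spread almost evenly across the guesses, which is the kind of question one might route to human review.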