
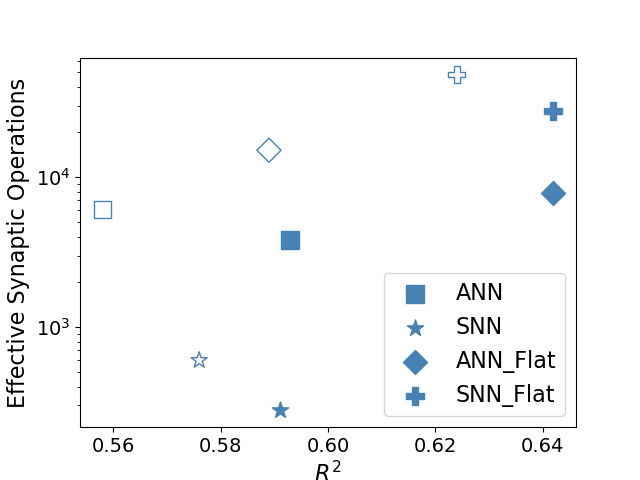

## Scatter Plot: Effective Synaptic Operations vs. R²

### Overview

This image presents a scatter plot comparing the Effective Synaptic Operations against the R² value for four different neural network configurations: ANN, SNN, ANN_Flat, and SNN_Flat. The plot visualizes the trade-off between model accuracy (R²) and computational cost (Effective Synaptic Operations).

### Components/Axes

* **X-axis:** R² (ranging approximately from 0.56 to 0.65)

* **Y-axis:** Effective Synaptic Operations (logarithmic scale, spanning roughly 10³ to 10⁴, with the lowest and highest readings falling slightly outside that range)

* **Legend:** Located in the bottom-right corner, defining the data series:
    * ANN (Blue Square)
    * SNN (Blue Star)
    * ANN_Flat (Gray Diamond)
    * SNN_Flat (Blue Plus Sign)

### Detailed Analysis

The plot contains data points for each of the four network types. The approximate readings below are given as (R², Effective Synaptic Operations):

* **ANN (Blue Square):** The trend is upward.
    * (0.60, ~3000)
    * (0.64, ~8000)

* **SNN (Blue Star):** The trend is downward.
    * (0.58, ~1000)
    * (0.60, ~600)

* **ANN_Flat (Gray Diamond):** The trend is approximately flat.
    * (0.56, ~10000)
    * (0.59, ~10000)

* **SNN_Flat (Blue Plus Sign):** The trend is slightly upward.
    * (0.62, ~10000)
    * (0.65, ~12000)
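
The trend direction of each series can be checked from the readings listed above. The following is a minimal sketch, assuming those approximate coordinates are representative of the plot. The `log_slope` and `classify` helpers and the `tol` threshold are illustrative choices, not part of the original analysis. Because the Y-axis is logarithmic, the slope is measured in log10(operations) per unit of R².

```python
import math

# Approximate readings transcribed from the list above (not exact data).
points = {
    "ANN": [(0.60, 3000), (0.64, 8000)],
    "SNN": [(0.58, 1000), (0.60, 600)],
    "ANN_Flat": [(0.56, 10000), (0.59, 10000)],
    "SNN_Flat": [(0.62, 10000), (0.65, 12000)],
}


def log_slope(series):
    """Change in log10(operations) per unit of R², first point to last."""
    (r2_a, ops_a), (r2_b, ops_b) = series[0], series[-1]
    return (math.log10(ops_b) - math.log10(ops_a)) / (r2_b - r2_a)


def classify(slope, tol=1.0):
    """Label a slope; tol is an arbitrary threshold chosen for illustration."""
    if slope > tol:
        return "upward"
    if slope < -tol:
        return "downward"
    return "flat"


trends = {name: classify(log_slope(series)) for name, series in points.items()}

for name, trend in trends.items():
    print(f"{name}: {trend}")
```

With only two readings per series, the slope is a direction indicator rather than a fitted trend.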

### Key Observations

* SNN and ANN have lower Effective Synaptic Operations than their "Flat" counterparts at every listed point. Because the R² ranges of each pair do not overlap, the comparison is not at a matched R² value.

* ANN_Flat and SNN_Flat consistently exhibit the highest Effective Synaptic Operations.

* The ANN series shows a relatively consistent increase in Effective Synaptic Operations as R² increases.

* The SNN series shows a decrease in Effective Synaptic Operations as R² increases.

### Interpretation

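The data suggests that flattening the neural network architecture (indicated by the "\_Flat" suffix) increases the computational cost: both "Flat" series sit at roughly 10⁴ Effective Synaptic Operations or more, above every non-flat point. The effect on R² is mixed. SNN_Flat reaches a higher R² (0.62 to 0.65) than SNN (0.58 to 0.60), whereas ANN_Flat reaches a lower R² (0.56 to 0.59) than ANN (0.60 to 0.64). A trade-off between accuracy (R²) and computational cost is therefore visible for the SNN pair but not for the ANN pair.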

The SNN series exhibits a somewhat counterintuitive trend, where the point with higher R² has *fewer* effective synaptic operations. This could indicate a more efficient learning process or a different optimization landscape for SNNs compared to ANNs, although the listed readings are too few to confirm either explanation.

The difference between ANN and SNN suggests that spiking neural networks (SNNs) can reach comparable performance at a lower computational cost: at R² ≈ 0.60, SNN requires about 600 operations against about 3000 for ANN. ANN still attains the higher peak R² (0.64), at about 8000 operations. The "Flat" versions behave differently from each other. SNN_Flat gains accuracy over SNN at roughly ten times the computational cost, while ANN_Flat costs more than ANN without a gain in R². The higher cost could be due to increased model complexity or to the larger number of parameters in a flattened architecture.
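
The cost gaps discussed above can be made concrete with a small calculation. This is a rough sketch that assumes the approximate readings from the Detailed Analysis section. Summarizing each series by the geometric mean of its operations is an illustrative choice (it suits a logarithmic axis), not a method taken from the source.

```python
from statistics import geometric_mean

# Approximate (R², Effective Synaptic Operations) readings from the plot.
points = {
    "ANN": [(0.60, 3000), (0.64, 8000)],
    "SNN": [(0.58, 1000), (0.60, 600)],
    "ANN_Flat": [(0.56, 10000), (0.59, 10000)],
    "SNN_Flat": [(0.62, 10000), (0.65, 12000)],
}


def typical_ops(name):
    """Geometric mean of the operation counts listed for one series."""
    return geometric_mean(ops for _, ops in points[name])


# Cost of each "Flat" variant relative to its non-flat counterpart.
flat_cost_ratio = {
    base: typical_ops(f"{base}_Flat") / typical_ops(base)
    for base in ("ANN", "SNN")
}

# ANN versus SNN at the one R² value both series share (0.60).
ann_ops_at_060 = dict(points["ANN"])[0.60]
snn_ops_at_060 = dict(points["SNN"])[0.60]
ann_to_snn_ratio = ann_ops_at_060 / snn_ops_at_060

for base, ratio in flat_cost_ratio.items():
    print(f"{base}_Flat costs about {ratio:.1f}x {base}")
print(f"At R² = 0.60, ANN costs about {ann_to_snn_ratio:.1f}x SNN")
```

Under these readings, the flat penalty is far larger for SNN than for ANN, which matches the visual gap between the series on the logarithmic axis.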