
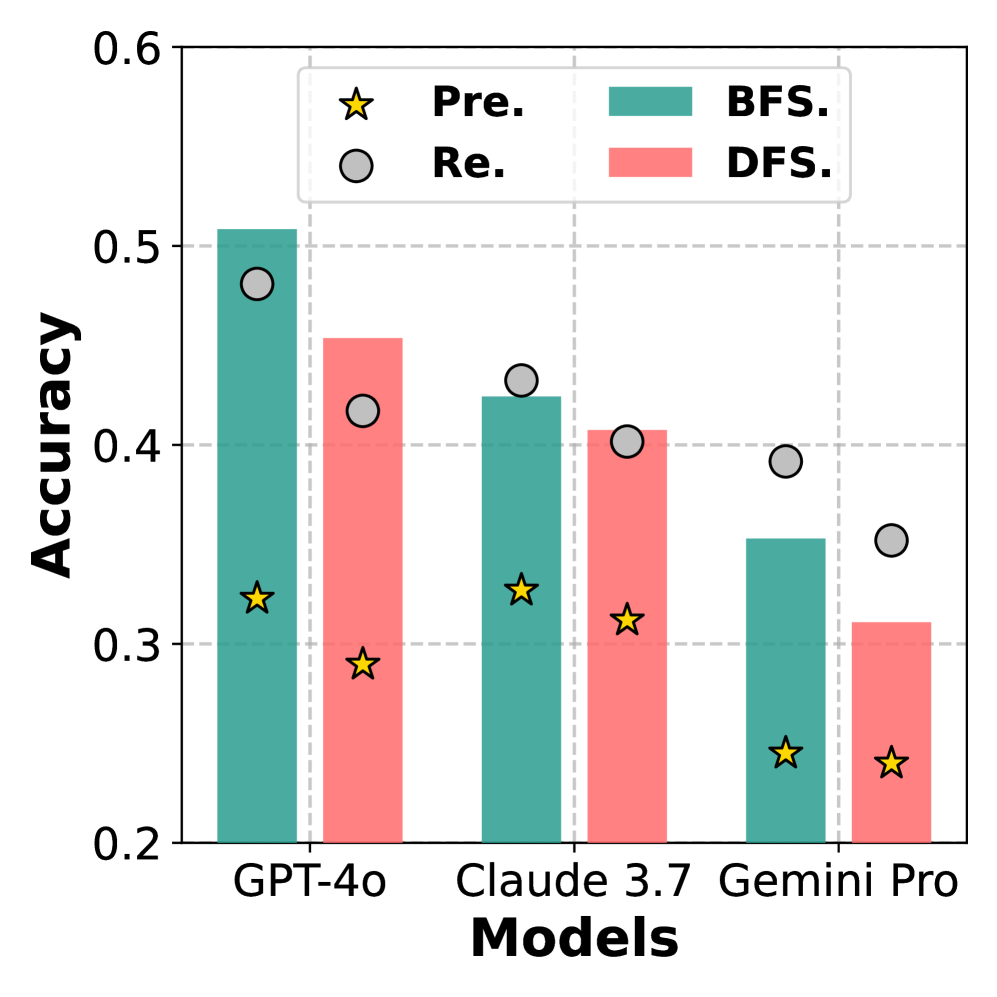

## Bar Chart: Model Accuracy Comparison

### Overview

This bar chart compares the accuracy of three large language models (GPT-4o, Claude 3.7, and Gemini Pro) under two search strategies: Breadth-First Search (BFS) and Depth-First Search (DFS). Models are displayed on the x-axis and accuracy on the y-axis. Each model's group contains one bar per strategy, and each bar carries two markers, "Pre." (precision) and "Re." (recall).

### Components/Axes

* **X-axis:** "Models" with categories: GPT-4o, Claude 3.7, Gemini Pro.

* **Y-axis:** "Accuracy" ranging from approximately 0.2 to 0.6.

* **Legend:**
    * Green bar: BFS (Breadth-First Search)
    * Red bar: DFS (Depth-First Search)
    * Yellow star: Pre. (Precision)
    * White circle: Re. (Recall)

### Detailed Analysis

The chart consists of three groups of bars, one for each model. Within each group, there is a green bar for BFS and a red bar for DFS. Superimposed on each bar are a yellow star ("Pre.") and a white circle ("Re."), read against the same y-axis.

**GPT-4o:**

* BFS: The green bar reaches approximately 0.52 accuracy. The recall marker (white circle) sits at approximately 0.48, and the precision marker (yellow star) at approximately 0.32.

* DFS: The red bar reaches approximately 0.47 accuracy. The recall marker (white circle) sits at approximately 0.42, and the precision marker (yellow star) at approximately 0.30.

**Claude 3.7:**

* BFS: The green bar reaches approximately 0.44 accuracy. The recall marker (white circle) sits at approximately 0.43, and the precision marker (yellow star) at approximately 0.32.

* DFS: The red bar reaches approximately 0.38 accuracy. The recall marker (white circle) sits at approximately 0.40, and the precision marker (yellow star) at approximately 0.32.

**Gemini Pro:**

* BFS: The green bar reaches approximately 0.34 accuracy. The recall marker (white circle) sits at approximately 0.40, and the precision marker (yellow star) at approximately 0.26.

* DFS: The red bar reaches approximately 0.32 accuracy. The recall marker (white circle) sits at approximately 0.36, and the precision marker (yellow star) at approximately 0.24.
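
The readings above can be collected into a small data structure so that differences between strategies are easy to compute. The following is a minimal Python sketch: the names `READINGS` and `bfs_dfs_gap` are illustrative assumptions, and every number is a visual estimate from the chart, not exact data.

```python
# Approximate values read off the chart; all are visual estimates.
# Keys: "acc" = bar height, "re" = recall marker, "pre" = precision marker.
READINGS = {
    "GPT-4o": {
        "BFS": {"acc": 0.52, "re": 0.48, "pre": 0.32},
        "DFS": {"acc": 0.47, "re": 0.42, "pre": 0.30},
    },
    "Claude 3.7": {
        "BFS": {"acc": 0.44, "re": 0.43, "pre": 0.32},
        "DFS": {"acc": 0.38, "re": 0.40, "pre": 0.32},
    },
    "Gemini Pro": {
        "BFS": {"acc": 0.34, "re": 0.40, "pre": 0.26},
        "DFS": {"acc": 0.32, "re": 0.36, "pre": 0.24},
    },
}


def bfs_dfs_gap(readings):
    """Return BFS accuracy minus DFS accuracy per model, rounded to 2 places."""
    return {
        model: round(by_strategy["BFS"]["acc"] - by_strategy["DFS"]["acc"], 2)
        for model, by_strategy in readings.items()
    }


if __name__ == "__main__":
    for model, gap in bfs_dfs_gap(READINGS).items():
        print(f"{model}: BFS - DFS = {gap:.2f}")
```

Because the inputs are estimates, differences of one or two hundredths should not be treated as meaningful.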

### Key Observations

* GPT-4o consistently demonstrates the highest accuracy for both BFS and DFS strategies.

* BFS outperforms DFS for all three models. By the approximate readings above, the gap is about 0.05 for GPT-4o, 0.06 for Claude 3.7, and 0.02 for Gemini Pro, so it is clearly smallest for Gemini Pro.

* The "Re." (Recall) values are consistently higher than the "Pre." (Precision) values for each model and search strategy.

* Gemini Pro exhibits the lowest accuracy for both search strategies.
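
These observations can be checked mechanically against the approximate readings. The sketch below is one way to do so in Python: the names `ROWS`, `rank_by_accuracy`, and `recall_exceeds_precision` are hypothetical, and the values are the visual estimates listed in the Detailed Analysis, not exact data.

```python
# Each row: (model, strategy, accuracy, recall, precision).
# All values are approximate readings from the chart.
ROWS = [
    ("GPT-4o", "BFS", 0.52, 0.48, 0.32),
    ("GPT-4o", "DFS", 0.47, 0.42, 0.30),
    ("Claude 3.7", "BFS", 0.44, 0.43, 0.32),
    ("Claude 3.7", "DFS", 0.38, 0.40, 0.32),
    ("Gemini Pro", "BFS", 0.34, 0.40, 0.26),
    ("Gemini Pro", "DFS", 0.32, 0.36, 0.24),
]


def rank_by_accuracy(rows, strategy):
    """Return model names for one strategy, highest accuracy first."""
    picked = [row for row in rows if row[1] == strategy]
    ordered = sorted(picked, key=lambda row: row[2], reverse=True)
    return [row[0] for row in ordered]


def recall_exceeds_precision(rows):
    """Return True if the recall marker is above the precision marker in every row."""
    return all(recall > precision for _, _, _, recall, precision in rows)


if __name__ == "__main__":
    for strategy in ("BFS", "DFS"):
        print(strategy, rank_by_accuracy(ROWS, strategy))
    print("recall > precision everywhere:", recall_exceeds_precision(ROWS))
```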

### Interpretation

The data suggests that GPT-4o is the most accurate of the three models under both search strategies. BFS scores higher than DFS for every model, which suggests that a broader search is more effective for the task being evaluated, although the margin for Gemini Pro (about 0.02) is small. The "Re." markers sit above the "Pre." markers for every model and strategy, indicating a general tendency towards higher recall at the expense of precision: the models recover a larger share of the relevant items but also include irrelevant ones. Gemini Pro's comparatively low accuracy suggests that it is less suited to this particular task or may require further optimization.