## Diagram: Knowledge Graph Query Processing

### Overview

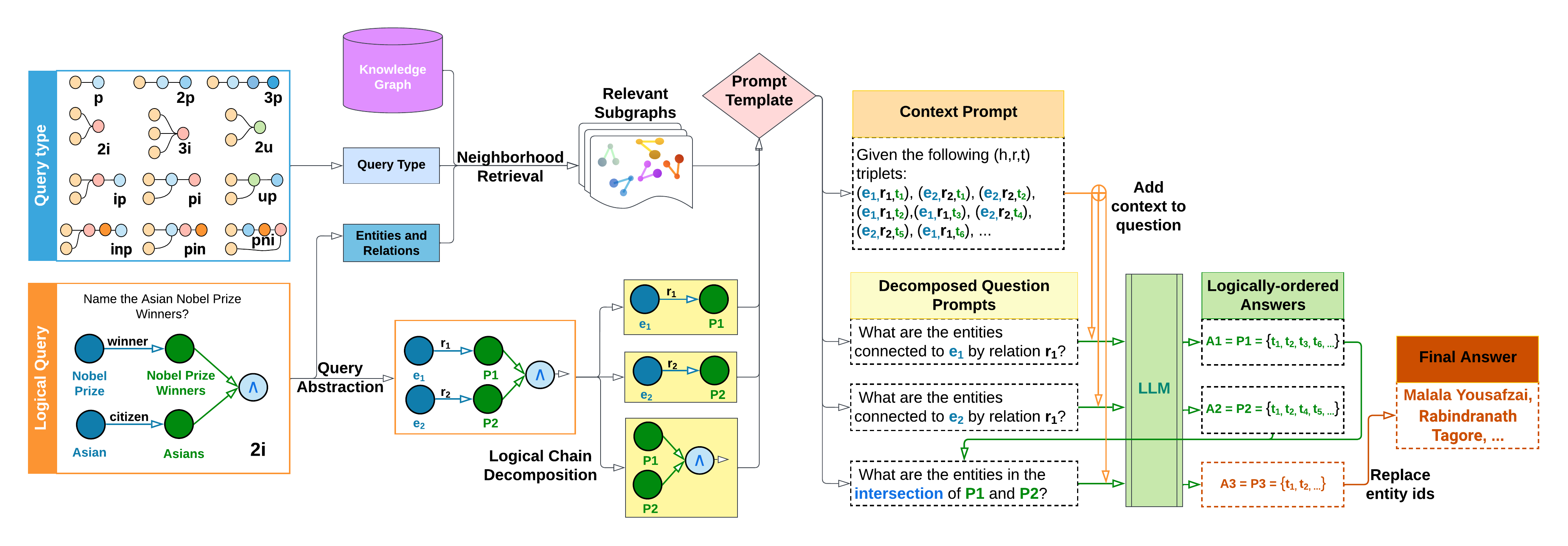

The image is a flowchart of a pipeline for answering a logical query over a knowledge graph. The query is abstracted into entities and relations and decomposed into logical chains, each of which becomes a sub-question. Relevant subgraphs are retrieved from the knowledge graph and passed through a prompt template to form context prompts. A Large Language Model (LLM) uses these prompts to generate logically-ordered answers, which are combined to produce a final answer.

### Components/Axes

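* **Top-Left**: "Query Type" - A table showing different query types represented as graphs. The labels follow the common shorthand for logical queries: p = projection, i = intersection, u = union, n = negation.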

* Row 1: p, 2i, ip, inp

* Row 2: 2p, 3i, pi, pin

* Row 3: 3p, 2u, up, pni

* **Top-Center**: "Knowledge Graph" - A purple cylinder representing the knowledge graph.

* **Top-Center**: "Neighborhood Retrieval" - A process of retrieving relevant subgraphs from the knowledge graph.

* **Top-Center**: "Relevant Subgraphs" - A collection of subgraphs.

* **Top-Right**: "Prompt Template" - A diamond shape representing the prompt template.

* **Center-Left**: "Logical Query" - An orange box containing the question "Name the Asian Nobel Prize Winners?". It includes a graph with nodes labeled "Nobel Prize" (blue), "Nobel Prize Winners" (green), "citizen" (blue), and "Asians" (green), connected by edges labeled "winner".

* **Center-Left**: "Query Abstraction" - A process of abstracting the logical query into a graph representation.

* **Center-Left**: "Entities and Relations" - A blue box.

* **Center**: "Logical Chain Decomposition" - A process of decomposing the query into logical chains.

* **Center**: "Decomposed Question Prompts" - A yellow box containing questions derived from the logical chains.

* **Center-Right**: "Context Prompt" - A yellow box containing a set of (h,r,t) triplets.

* **Center-Right**: "LLM" - A green box representing the Large Language Model.

* **Right**: "Logically-ordered Answers" - A green box containing logically-ordered answers.

* **Right**: "Final Answer" - An orange box containing the final answer: "Malala Yousafzai, Rabindranath Tagore, ...".
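The diagram does not say how "Neighborhood Retrieval" selects the relevant subgraphs. One common reading is a k-hop expansion around the query entities, and the sketch below illustrates that reading only. The function name `retrieve_neighborhood`, the `hops` parameter, and the toy triples are all hypothetical.

```python
from collections import defaultdict

def retrieve_neighborhood(triples, seeds, hops=1):
    """Return the triples reachable within `hops` steps of any seed entity."""
    # Index every triple under both endpoints so edges are followed in either direction.
    by_entity = defaultdict(list)
    for h, r, t in triples:
        by_entity[h].append((h, r, t))
        by_entity[t].append((h, r, t))

    frontier = set(seeds)
    visited = set(seeds)
    subgraph = set()
    for _ in range(hops):
        next_frontier = set()
        for entity in frontier:
            for h, r, t in by_entity[entity]:
                subgraph.add((h, r, t))
                for node in (h, t):
                    if node not in visited:
                        visited.add(node)
                        next_frontier.add(node)
        frontier = next_frontier
    return subgraph

# Toy knowledge graph (assumed data, not taken from the diagram).
kg = [
    ("e1", "r1", "t1"),
    ("e2", "r2", "t1"),
    ("t1", "r3", "x1"),
    ("x1", "r4", "x2"),
    ("e9", "r1", "x9"),
]
one_hop = retrieve_neighborhood(kg, {"e1", "e2"}, hops=1)
two_hop = retrieve_neighborhood(kg, {"e1", "e2"}, hops=2)
```

With `hops=1` only the triples that touch `e1` or `e2` are kept. Raising `hops` widens the context given to the LLM at the cost of a longer prompt.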

### Detailed Analysis

1. **Query Type**:

* p: Two nodes connected by an edge.

* 2p: Three nodes, two connected to the central node.

* 3p: Four nodes, three connected to the central node.

* 2i: Two nodes connected to a central node.

* 3i: Three nodes connected to a central node.

* 2u: Two nodes connected to a central node.

* ip: Two nodes connected to a central node.

* pi: Two nodes connected to a central node.

* up: Two nodes connected to a central node.

* inp: Three nodes connected in a chain.

* pin: Three nodes connected in a chain.

* pni: Three nodes connected in a chain.

2. **Logical Query**:

* The query is "Name the Asian Nobel Prize Winners?".

* The query is represented as a graph with nodes "Nobel Prize", "Nobel Prize Winners", "citizen", and "Asians".

* The nodes are connected by edges labeled "winner".

3. **Logical Chain Decomposition**:

* The query is decomposed into logical chains involving entities e1, e2 and relations r1, r2.

* The chains are combined using a logical AND operation (∧).

4. **Decomposed Question Prompts**:

* "What are the entities connected to e1 by relation r1?"

* "What are the entities connected to e2 by relation r2?"

* "What are the entities in the intersection of P1 and P2?"

5. **Context Prompt**:

* "Given the following (h,r,t) triplets: (e1,r1,t1), (e2,r2,t1), (e2,r2,t2), (e1,r1,t2), (e1,r1,t3), (e2,r2,t4), (e2,r2,t5), (e1,r1,t6), ..."

6. **Logically-ordered Answers**:

* A1 = P1 = {t1, t2, t3, t6, ...}

* A2 = P2 = {t1, t2, t4, t5, ...}

* A3 = P3 = {t1, t2, ...}

7. **Final Answer**:

* "Malala Yousafzai, Rabindranath Tagore, ..."
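The relationship between the context prompt and the logically-ordered answers can be checked by hand. The sketch below uses only the eight triplets that are visible in the context prompt (the elided ones are left out) and computes the three answer sets symbolically. In the diagram the LLM produces these sets from the prompt, so this is a consistency check rather than the method shown. The helper names `project` and `context_prompt` are hypothetical.

```python
# The eight (h, r, t) triplets visible in the context prompt.
triplets = [
    ("e1", "r1", "t1"), ("e2", "r2", "t1"), ("e2", "r2", "t2"), ("e1", "r1", "t2"),
    ("e1", "r1", "t3"), ("e2", "r2", "t4"), ("e2", "r2", "t5"), ("e1", "r1", "t6"),
]

def project(triplets, head, relation):
    """Tails reachable from `head` via `relation` (one projection step)."""
    return {t for h, r, t in triplets if h == head and r == relation}

def context_prompt(triplets):
    """Render the triplets in the wording used by the context prompt."""
    body = ", ".join(f"({h},{r},{t})" for h, r, t in triplets)
    return f"Given the following (h,r,t) triplets: {body}"

p1 = project(triplets, "e1", "r1")  # entities connected to e1 by r1
p2 = project(triplets, "e2", "r2")  # entities connected to e2 by r2
p3 = p1 & p2                        # intersection of P1 and P2
prompt = context_prompt(triplets)
```

On the visible triplets this gives P1 = {t1, t2, t3, t6}, P2 = {t1, t2, t4, t5}, and P3 = {t1, t2}, matching the sets listed under "Logically-ordered Answers".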

### Key Observations

* The diagram illustrates a multi-step process for answering complex questions using a knowledge graph and a large language model.

* The process involves decomposing the query into sub-queries, retrieving relevant information from the knowledge graph, and using a prompt template to generate context prompts for the LLM.

* The LLM generates logically-ordered answers, which are combined to produce the final answer.
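"Logically-ordered" suggests that later sub-questions can refer to earlier answers, as the intersection question refers to P1 and P2. A minimal sketch of such a chained prompting loop follows. It assumes the LLM is any callable from prompt string to answer string, and it uses a stub in place of a real model. The names `answer_in_order`, `QUESTION_TEMPLATE`, and `stub_llm` are hypothetical, and the exact prompt layout is an assumption rather than something the diagram specifies.

```python
from typing import Callable

# Question wording taken from the decomposed question prompts; the template itself is assumed.
QUESTION_TEMPLATE = "What are the entities connected to {entity} by relation {relation}?"

def answer_in_order(questions: list[str], context: str,
                    llm: Callable[[str], str]) -> dict[str, str]:
    """Ask each question in order, showing the LLM all earlier answers."""
    answers: dict[str, str] = {}
    for index, question in enumerate(questions, start=1):
        # Earlier answers are listed as "P1 = ...", "P2 = ..." before the new question.
        earlier = "".join(f"{name} = {value}\n" for name, value in answers.items())
        prompt = f"{context}\n{earlier}{question}"
        answers[f"P{index}"] = llm(prompt)
    return answers

seen_prompts: list[str] = []

def stub_llm(prompt: str) -> str:
    """Stand-in for a real model: records the prompt and returns a placeholder."""
    seen_prompts.append(prompt)
    return f"<answer {len(seen_prompts)}>"

questions = [
    QUESTION_TEMPLATE.format(entity="e1", relation="r1"),
    QUESTION_TEMPLATE.format(entity="e2", relation="r2"),
    "What are the entities in the intersection of P1 and P2?",
]
context = "Given the following (h,r,t) triplets: (e1,r1,t1), (e2,r2,t1), ..."
answers = answer_in_order(questions, context, stub_llm)
final_answer = answers["P3"]  # the last answer in the chain
```

Swapping `stub_llm` for a real model client leaves the loop unchanged, since the only requirement is a function from prompt text to answer text.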

### Interpretation

The diagram shows a question-answering approach that combines a knowledge graph with a large language model. Decomposing a complex query into smaller sub-queries lets each step be answered against a retrieved subgraph rather than the whole graph. The prompt template supplies the LLM with the retrieved (h,r,t) triplets as context, and the sub-query answers are produced in logical order so that later steps, such as the intersection of P1 and P2, can build on earlier ones. The final answer is then constructed by combining the individual answers to the sub-queries. This approach targets questions that require reasoning and inference over structured knowledge.