
## Diagram: Knowledge Graph to Answer Generation Pipeline

### Overview

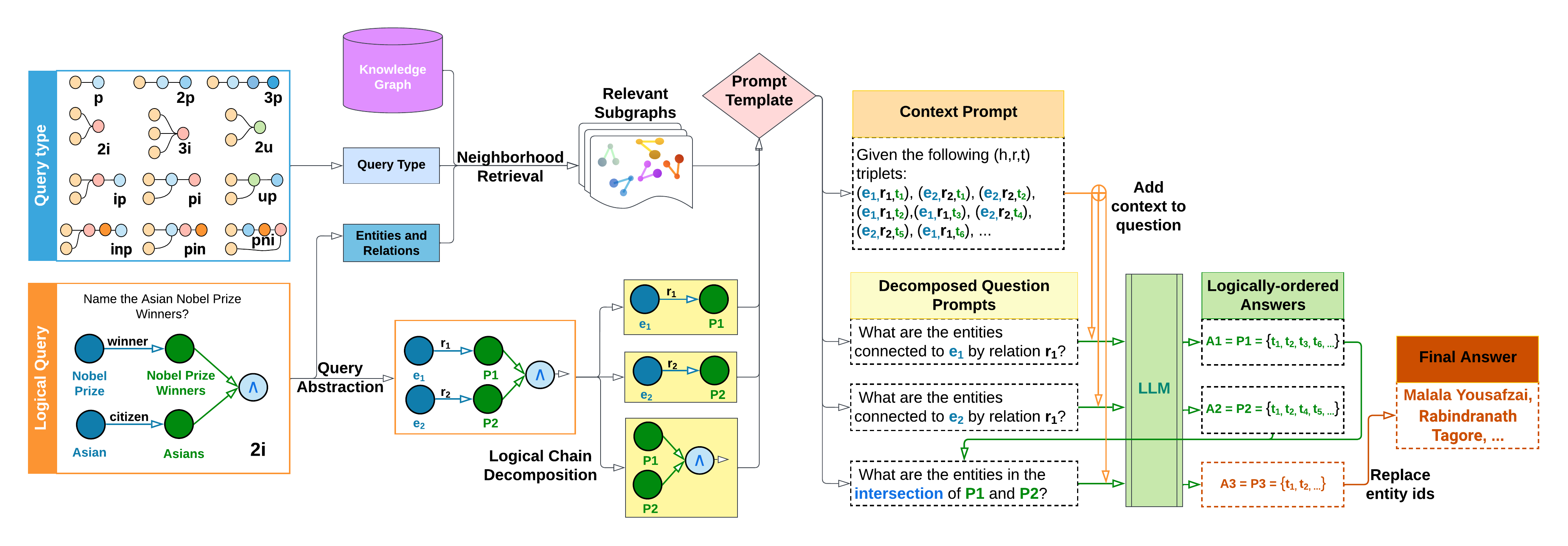

This diagram shows a pipeline for answering a natural-language question over a knowledge graph. A logical query is matched to a query type (the example carries the label "2i"), relevant neighborhoods are retrieved from the knowledge graph, and the query is abstracted into a logical chain that is decomposed into question prompts. A Large Language Model (LLM) then answers those prompts in logical order to produce the final answer. The diagram traces the flow of information and the components involved at each stage.

### Components

The diagram is segmented into several key areas:

* **Logical Query:** Bottom-left, showing the initial query representation. Includes labels: "Nobel Prize", "Winners", "citizen", "Asian", "Asians", "2i". The query is "Name the Asian Nobel Prize Winners?".

* **Query Type:** Left-center, representing the query type as a network of nodes and edges. Labels include: "p", "2p", "3p", "zi", "2i", "3i", "up", "inp", "pin", "pi".

* **Knowledge Graph:** Top-left, a general representation of a knowledge graph.

* **Neighborhood Retrieval:** Center-left, showing the retrieval of relevant subgraphs.

* **Query Abstraction:** Center, showing the abstraction of the query into a logical chain decomposition. Includes nodes labeled "e1", "e2", "P1", "P2", and "A".

* **Decomposed Question Prompts:** Center-right, listing three prompts: "What are the entities connected to e1 by relation r1?", "What are the entities connected to e2 by relation r2?", "What are the entities in the intersection of P1 and P2?".

* **LLM & Answer Generation:** Right, showing the LLM processing the prompts and generating a logically-ordered answer. Includes labels: "LLM", "A1 = P1 = {e1, e2, e3...}", "A2 = P2 = {e1, e2, e3...}", "A3 = P3 = {e1, e2...}", "Final Answer: Malala Yousafzai, Rabindranath Tagore,...".

* **Context Prompt:** Top-right, showing the format of the context prompt given to the LLM: "Given the following (n,r) triplets: ...".
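The two prompt components listed above can be sketched as simple string builders. This is a minimal illustration, not the method behind the diagram: the `Triple` layout, the one-triple-per-line body, and the function names `build_context_prompt` and `decompose_2i` are assumptions. Only the header text and the three question templates are quoted from the diagram.

```python
from typing import List, Tuple

# Assumed layout: (head entity, relation, tail entity).
Triple = Tuple[str, str, str]


def build_context_prompt(triples: List[Triple]) -> str:
    """Header quoted from the diagram; the body format is an assumption."""
    header = "Given the following (n,r) triplets:"
    body = "\n".join(f"({h}, {r}, {t})" for h, r, t in triples)
    return f"{header}\n{body}"


def decompose_2i(e1: str, r1: str, e2: str, r2: str) -> List[str]:
    """Fill the three question templates shown in the diagram."""
    return [
        f"What are the entities connected to {e1} by relation {r1}?",
        f"What are the entities connected to {e2} by relation {r2}?",
        "What are the entities in the intersection of P1 and P2?",
    ]


if __name__ == "__main__":
    for prompt in decompose_2i("Nobel Prize", "winners", "Asian", "citizen"):
        print(prompt)
```

Keeping the templates in one function makes it straightforward to add builders for the other query types in the figure, although their prompt wording is not shown in the diagram.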

### Detailed Analysis or Content Details

The diagram illustrates a multi-step process:

1. **Logical Query:** The initial query, "Name the Asian Nobel Prize Winners?", is represented as a logical query with entities and relations.

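2. **Query Type:** The query is then matched to one of the query types shown as small node-and-edge patterns. The example query carries the label "2i", which is consistent with the intersection asked for in the third decomposed prompt.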

3. **Neighborhood Retrieval:** Relevant subgraphs are retrieved from the knowledge graph based on the query type. The retrieved subgraph is visually represented as a cluster of interconnected nodes.

4. **Query Abstraction:** The query is abstracted into a logical chain decomposition, represented by nodes e1, e2, P1, P2, and A. The connections between these nodes represent the logical steps required to answer the query.

5. **Decomposed Question Prompts:** The logical chain is then decomposed into three specific prompts for the LLM.

6. **LLM & Answer Generation:** The LLM processes these prompts and generates a logically-ordered answer. The answer is constructed in stages (A1, A2, A3) before arriving at the final answer, which includes examples like "Malala Yousafzai, Rabindranath Tagore,...".

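7. **Context Prompt:** The LLM is provided with a context prompt containing (n,r) triplets, which supply the information needed to answer the query. Although listed last here, the context prompt is an input to the LLM in step 6, not something produced after the answer.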
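The neighborhood retrieval in step 3 can be sketched as a k-hop expansion around the query's anchor entities. The diagram does not specify the retrieval algorithm, so the hop-based expansion, the function name `retrieve_neighborhood`, and the toy triples below are all assumptions made for illustration.

```python
from typing import Iterable, List, Tuple

# Assumed layout: (head entity, relation, tail entity).
Triple = Tuple[str, str, str]


def retrieve_neighborhood(
    kg: Iterable[Triple], anchors: Iterable[str], hops: int = 1
) -> List[Triple]:
    """Collect every triple within `hops` steps of any anchor entity."""
    all_triples = list(kg)
    frontier = set(anchors)
    visited = set(frontier)
    kept: List[Triple] = []
    for _ in range(hops):
        next_frontier = set()
        for triple in all_triples:
            head, _rel, tail = triple
            if head in frontier or tail in frontier:
                if triple not in kept:
                    kept.append(triple)
                # Newly reached entities seed the next hop.
                for node in (head, tail):
                    if node not in visited:
                        visited.add(node)
                        next_frontier.add(node)
        frontier = next_frontier
    return kept


if __name__ == "__main__":
    toy_kg = [
        ("Nobel Prize", "winners", "Malala Yousafzai"),
        ("Asian", "citizen", "Malala Yousafzai"),
        ("Malala Yousafzai", "born_in", "Mingora"),
        ("Paris", "capital_of", "France"),
    ]
    for triple in retrieve_neighborhood(toy_kg, ["Nobel Prize", "Asian"], hops=1):
        print(triple)
```

The retrieved triples are what a context prompt would carry to the LLM; triples unrelated to the anchors, such as the Paris fact in the toy graph, are left out.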

### Key Observations

* The pipeline emphasizes a structured approach to question answering, breaking down a complex query into smaller, more manageable steps.

* The use of a knowledge graph allows for leveraging existing relationships between entities.

* The LLM plays a crucial role in synthesizing information from the knowledge graph and generating a coherent answer.

* The decomposition into prompts suggests a method for guiding the LLM towards a more accurate and relevant response.

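* The final answer is built incrementally: A1 and A2 answer the first two prompts, and A3 answers the intersection prompt, so each intermediate result is explicit before the final answer is stated.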
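The staged answers A1, A2, and A3 correspond to two lookups followed by a set intersection. The sketch below replaces the LLM with a deterministic lookup over toy triples, which is an assumption made only to show the logical order; in the diagram, an LLM produces each stage from the prompts. The function names and the toy data are illustrative.

```python
from typing import Dict, List, Set, Tuple

# Assumed layout: (head entity, relation, tail entity).
Triple = Tuple[str, str, str]


def connected(triples: List[Triple], entity: str, relation: str) -> Set[str]:
    """Entities reached from `entity` via `relation` (stand-in for one LLM call)."""
    return {t for h, r, t in triples if h == entity and r == relation}


def answer_2i(
    triples: List[Triple], e1: str, r1: str, e2: str, r2: str
) -> Dict[str, Set[str]]:
    """Answer the three decomposed prompts in order."""
    a1 = connected(triples, e1, r1)   # prompt 1 -> P1
    a2 = connected(triples, e2, r2)   # prompt 2 -> P2
    a3 = a1 & a2                      # prompt 3 -> intersection of P1 and P2
    return {"A1": a1, "A2": a2, "A3": a3}


if __name__ == "__main__":
    toy_triples = [
        ("Nobel Prize", "winners", "Malala Yousafzai"),
        ("Nobel Prize", "winners", "Rabindranath Tagore"),
        ("Nobel Prize", "winners", "Marie Curie"),
        ("Asian", "citizen", "Malala Yousafzai"),
        ("Asian", "citizen", "Rabindranath Tagore"),
        ("Asian", "citizen", "Sachin Tendulkar"),
    ]
    stages = answer_2i(toy_triples, "Nobel Prize", "winners", "Asian", "citizen")
    print(sorted(stages["A3"]))  # ['Malala Yousafzai', 'Rabindranath Tagore']
```

Returning all three stages, not just the final set, mirrors the diagram's layout and makes it easy to inspect where an incorrect final answer came from.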

### Interpretation

The diagram describes a knowledge-graph question-answering approach that pairs the structured representation of a knowledge graph with the language capabilities of an LLM. Rather than passing the original question to the LLM directly, the pipeline decomposes the logical query into a chain of simpler prompts, which gives finer control over the order in which the LLM reasons. This suggests the approach targets queries that require reasoning over several relations, such as the two-relation intersection in the example.

The context prompt supplies the retrieved triplets, so the LLM has the relevant facts in front of it when it answers. The staged answers (A1, A2, A3) make each intermediate result explicit before the final answer is produced, which supports checking the logical correctness and completeness of the response. The diagram shows the flow of information only; it does not report accuracy or scalability results.