## Flowchart: Knowledge Graph Query Processing System

### Overview

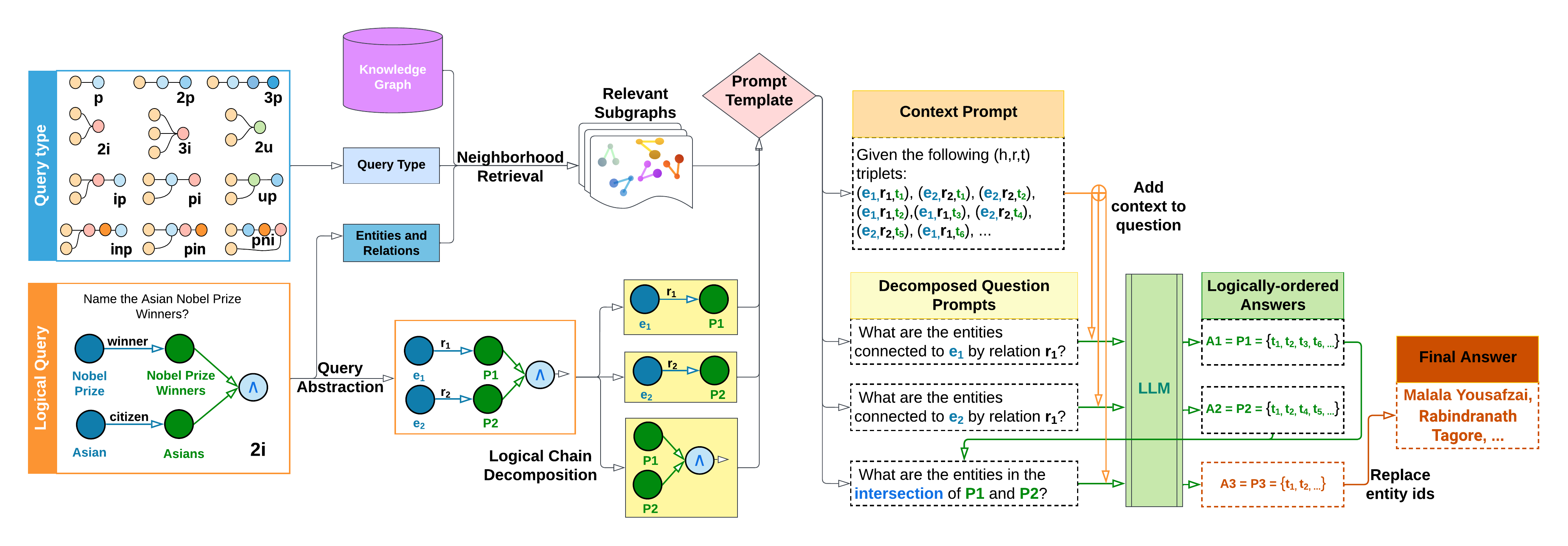

The diagram illustrates a multi-stage pipeline for processing complex queries using knowledge graphs and logical decomposition. It begins with query abstraction, progresses through subgraph retrieval and prompt engineering, and culminates in LLM-based answer synthesis. The final output combines entity-level reasoning with contextual knowledge to produce human-readable answers.

### Components

1. **Left Panel (Query Abstraction)**

- **Query Type Matrix**:

- Contains 9 query patterns (2p, 3p, 2i, ip, pi, up, inp, pin, pini)

- Color-coded nodes (blue, green, orange) representing entity types

- **Logical Query Example**:

- "Name the Asian Nobel Prize Winners?"

- Visualized with entity-relationship graph (Asian → winner → Nobel Prize)

2. **Central Processing Flow**

- **Knowledge Graph** (purple cylinder)

- **Neighborhood Retrieval** (blue box)

- **Relevant Subgraphs** (wavy-edged box with colored nodes)

- **Prompt Template** (pink diamond)

3. **Right Panel (LLM Processing)**

- **Context Prompt** (orange box with triplet examples)

- **Decomposed Question Prompts** (yellow box with 3 sub-questions)

- **LLM Processing** (green vertical box)

- **Logically-ordered Answers** (green dashed boxes with A1-A3)

- **Final Answer** (orange box with Malala Yousafzai, Rabindranath Tagore)
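
The Neighborhood Retrieval step that turns the Knowledge Graph into Relevant Subgraphs can be sketched as a k-hop expansion around the entities named in the query. This is a minimal sketch under stated assumptions: the diagram does not specify the retrieval algorithm, and the triple list, the function name `k_hop_neighborhood`, and the undirected traversal are all hypothetical choices made for illustration.

```python
from collections import defaultdict

# Toy (head, relation, tail) triples; illustrative data, not taken from the figure.
TRIPLES = [
    ("Malala Yousafzai", "winner", "Nobel Prize"),
    ("Rabindranath Tagore", "winner", "Nobel Prize"),
    ("Malala Yousafzai", "from", "Asia"),
    ("Rabindranath Tagore", "from", "Asia"),
    ("Asia", "type", "Continent"),
    ("Paris", "capital_of", "France"),
]


def k_hop_neighborhood(triples, seeds, k=1):
    """Return the set of triples within k hops of the seed entities.

    Edges are followed in both directions (an assumption of this sketch).
    """
    adjacency = defaultdict(list)
    for h, r, t in triples:
        adjacency[h].append((h, r, t))
        adjacency[t].append((h, r, t))

    frontier = set(seeds)
    visited = set(seeds)
    selected = set()
    for _ in range(k):
        next_frontier = set()
        for entity in frontier:
            for h, r, t in adjacency[entity]:
                selected.add((h, r, t))
                for neighbor in (h, t):
                    if neighbor not in visited:
                        visited.add(neighbor)
                        next_frontier.add(neighbor)
        frontier = next_frontier
    return selected


subgraph = k_hop_neighborhood(TRIPLES, {"Nobel Prize"}, k=1)
for triple in sorted(subgraph):
    print(triple)
```

Raising `k` widens the subgraph, which gives the later prompt more context at the cost of a longer prompt.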

### Detailed Analysis

1. **Query Type System**

- 9 distinct query patterns categorized by:

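- Chain length (2p = two-hop projection, 3p = three-hop projection)

- Operator letters (p = projection, i = intersection, u = union, n = negation), following the usual naming for logical query structures over knowledge graphs

- Compound patterns (inp = intersection with negation followed by projection, pin = projection followed by intersection with negation)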

2. **Knowledge Graph Integration**

- Central data source represented as a purple cylinder

- Connected to query abstraction and neighborhood retrieval

3. **Logical Decomposition**

- Original query broken into 3 sub-questions:

1. P1: entities connected to e₁ by r₁

2. P2: entities connected to e₂ by r₂

3. P3: entities that appear in the answers to both P1 and P2 (their intersection)

4. **LLM Processing**

- Takes decomposed prompts and generates:

- A1 = {t₁, t₂, t₃, t₆...} (entities for P1)

- A2 = {t₁, t₂, t₄, t₅...} (entities for P2)

- A3 = {t₁, t₂...} (intersection set)

5. **Answer Synthesis**

- Final answer maps the entity IDs in the intersection set (A3) to real-world names

- Example output: "Malala Yousafzai, Rabindranath Tagore..."
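
The decomposition and intersection steps above can be sketched with plain set operations. In the diagram an LLM produces A1, A2, and A3 from prompts; this sketch swaps in a deterministic triple lookup so the logic is easy to check. The triples, the helper names `project` and `answer_2i`, and the ID-to-name table are hypothetical and chosen only so that the sets match the ones listed above.

```python
# Toy (head, relation, tail) triples. The IDs mirror the figure's notation;
# the edges themselves are made up for illustration.
TRIPLES = [
    ("t1", "r1", "e1"), ("t2", "r1", "e1"), ("t3", "r1", "e1"), ("t6", "r1", "e1"),
    ("t1", "r2", "e2"), ("t2", "r2", "e2"), ("t4", "r2", "e2"), ("t5", "r2", "e2"),
]

# Hypothetical ID-to-name table; the figure does not say which ID maps to which name.
NAMES = {"t1": "Malala Yousafzai", "t2": "Rabindranath Tagore"}


def project(triples, entity, relation):
    """Return entities linked to `entity` by `relation`, in either direction."""
    result = set()
    for h, r, t in triples:
        if r != relation:
            continue
        if h == entity:
            result.add(t)
        elif t == entity:
            result.add(h)
    return result


def answer_2i(triples, e1, r1, e2, r2):
    """Answer a two-branch intersection query in three ordered steps."""
    a1 = project(triples, e1, r1)  # sub-question 1
    a2 = project(triples, e2, r2)  # sub-question 2
    a3 = a1 & a2                   # sub-question 3: intersection of the two sets
    return a1, a2, a3


a1, a2, a3 = answer_2i(TRIPLES, "e1", "r1", "e2", "r2")
final_answer = [NAMES.get(t, t) for t in sorted(a3)]
print(final_answer)  # ['Malala Yousafzai', 'Rabindranath Tagore']
```

Returning all three sets, not only the last one, keeps the intermediate answers available for inspection, which matches the logically ordered A1 to A3 boxes in the diagram.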

### Key Observations

1. **Sequential Processing**:

- Query abstraction → subgraph retrieval → prompt engineering → LLM processing → answer synthesis

2. **Entity-Relationship Focus**:

- All stages maintain explicit connections between entities (e₁, e₂) and relations (r₁, r₂)

3. **Ordered Dependencies**:

- The intersection step (A3) depends on A1 and A2 being available first, so the sub-answers follow a logical order; nothing in the diagram indicates a temporal relationship

4. **Contextual Enrichment**:

- Triplets in context prompt provide background knowledge for answer interpretation
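
How a context prompt and the decomposed question prompts might be assembled can be sketched as simple string templates. This is an assumption-heavy illustration: the diagram does not give the template wording, so the phrasing, the `[A1]`/`[A2]` placeholder convention, and the function names below are hypothetical.

```python
def build_context_prompt(triples):
    """Serialize (head, relation, tail) triplets into a context block."""
    lines = [f"({h}, {r}, {t})" for h, r, t in triples]
    return "Given the following (head, relation, tail) triplets:\n" + "\n".join(lines)


def build_question_prompts(e1, r1, e2, r2):
    """Return three ordered sub-question prompts for an intersection query.

    The third prompt carries placeholders that are filled once the first
    two answers are known.
    """
    return [
        f"P1: Which entities are connected to {e1} by relation {r1}?",
        f"P2: Which entities are connected to {e2} by relation {r2}?",
        "P3: Which entities appear in both [A1] and [A2]?",
    ]


def fill_placeholders(prompt, answers):
    """Replace [A1], [A2], ... with the corresponding earlier answers."""
    for key, values in answers.items():
        rendered = "{" + ", ".join(sorted(values)) + "}"
        prompt = prompt.replace(f"[{key}]", rendered)
    return prompt


context = build_context_prompt([("t1", "r1", "e1"), ("t1", "r2", "e2")])
prompts = build_question_prompts("e1", "r1", "e2", "r2")
third = fill_placeholders(prompts[2], {"A1": {"t1", "t2"}, "A2": {"t2"}})
print(context)
print(third)
```

Filling the placeholders only after the earlier answers arrive is what forces the sub-questions to be asked in order.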

### Interpretation

The diagram describes an approach to knowledge graph querying that:

1. **Handles Complexity**: Breaks down multi-hop queries into manageable components

2. **Leverages LLM Capabilities**: Uses large language models for contextual understanding and answer synthesis

3. **Maintains Logical Consistency**: Keeps answers tied to the original query constraints by answering each sub-question separately and then intersecting the results

4. **Bridges Formal and Natural Language**: Converts formal entity-relationship representations into human-readable names

Taken together, the design divides the work into two parts: retrieval narrows the knowledge graph to a relevant subgraph, and the LLM then reasons over that subgraph one sub-question at a time. The color-coded nodes and the explicit intermediate answers (A1, A2, A3) suggest that the reasoning is meant to be inspectable step by step.