TECHNICAL ASSET FINGERPRINT

f745a54b475f61f580e1b708



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

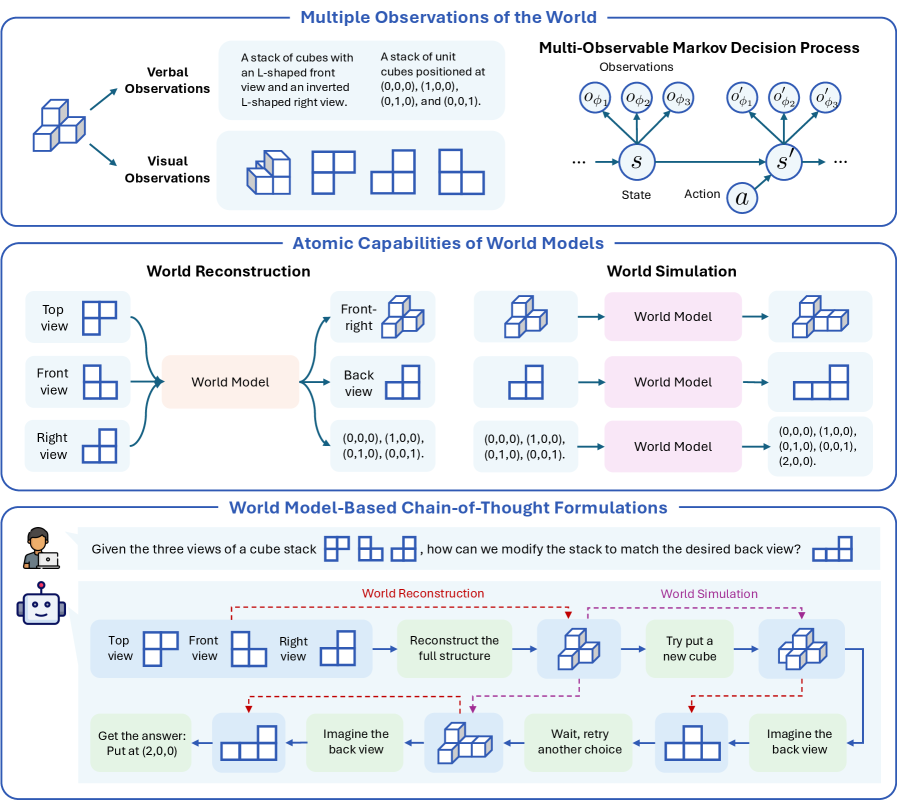

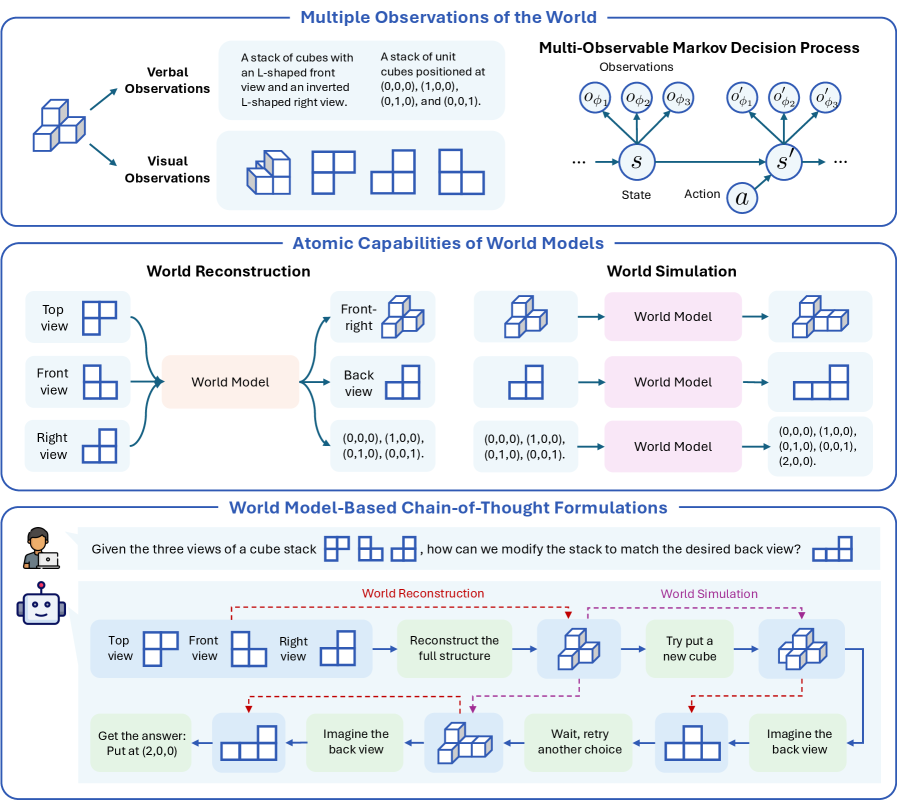

## Diagram: Multiple Observations and World Models

### Overview

The image presents a series of diagrams illustrating different aspects of world observation, reconstruction, and simulation using cube stacks. It covers multiple observation types, Markov decision processes, atomic capabilities of world models, and chain-of-thought formulations.

### Components/Axes

**Section 1: Multiple Observations of the World**

* **Verbal Observations:** An arrow points from the text "Verbal Observations" to a 3D cube stack.

* **Visual Observations:** An arrow points from the text "Visual Observations" to a 2D representation of the same cube stack.

* **Cube Stack Description 1:** "A stack of cubes with an L-shaped front view and an inverted L-shaped right view." This is accompanied by 2D projections of the cube stack.

* **Cube Stack Description 2:** "A stack of unit cubes positioned at (0,0,0), (1,0,0), (0,1,0), and (0,0,1)." This is also accompanied by 2D projections of the cube stack.

* **Multi-Observable Markov Decision Process:**

* Observations: Oφ1, Oφ2, Oφ3, O'φ1, O'φ2, O'φ3

* States: S, S'

* Action: a

**Section 2: Atomic Capabilities of World Models**

* **World Reconstruction:**

* Top view: 2D projection of a cube stack.

* Front view: 2D projection of a cube stack.

* Right view: 2D projection of a cube stack.

* World Model: A pink box labeled "World Model".

* Front-right view: 3D projection of a cube stack.

* Back view: 2D projection of a cube stack.

* Coordinates: (0,0,0), (1,0,0), (0,1,0), (0,0,1).

* **World Simulation:**

* 3D projection of a cube stack.

* World Model: A pink box labeled "World Model".

* 3D projection of a cube stack.

* Coordinates: (0,0,0), (1,0,0), (0,1,0), (0,0,1), (2,0,0).
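The relationship between the coordinate lists and the 2D views above can be sketched in a few lines of Python. This is only an illustration: the axis convention (x to the right, y into the depth, z up), the mirroring used for the back view, and the function names are assumptions, not something the figure specifies.

```python
from typing import Set, Tuple

Cube = Tuple[int, int, int]  # (x, y, z) grid position of one unit cube
Cell = Tuple[int, int]       # one filled square in a 2D view

# Assumed convention: x points right, y points into the depth, z points up.
def top_view(cubes: Set[Cube]) -> Set[Cell]:
    return {(x, y) for x, y, z in cubes}

def front_view(cubes: Set[Cube]) -> Set[Cell]:
    return {(x, z) for x, y, z in cubes}

def right_view(cubes: Set[Cube]) -> Set[Cell]:
    return {(y, z) for x, y, z in cubes}

def back_view(cubes: Set[Cube]) -> Set[Cell]:
    # Seen from behind, left and right swap, so x is mirrored (assumption).
    return {(-x, z) for x, y, z in cubes}

def render(view: Set[Cell]) -> str:
    """Draw a view as ASCII art, highest row first."""
    us = [u for u, _ in view]
    vs = [v for _, v in view]
    rows = []
    for v in range(max(vs), min(vs) - 1, -1):
        rows.append("".join("#" if (u, v) in view else "."
                            for u in range(min(us), max(us) + 1)))
    return "\n".join(rows)

stack = {(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)}
print(render(front_view(stack)))  # an L shape
print(render(back_view(stack)))   # the same L, mirrored
```

Whether a given view appears as an L or a mirrored L depends on the viewing convention, so the orientation here should not be read as matching the figure exactly.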

**Section 3: World Model-Based Chain-of-Thought Formulations**

* **Question:** "Given the three views of a cube stack [Top, Front, Right], how can we modify the stack to match the desired back view? [Back view]"

* **World Reconstruction:**

* Top view: 2D projection of a cube stack.

* Front view: 2D projection of a cube stack.

* Right view: 2D projection of a cube stack.

* Reconstruct the full structure: 3D projection of a cube stack.

* Imagine the back view: 2D projection of a cube stack.

* Get the answer: Put at (2,0,0): 2D projection of a cube stack.

* **World Simulation:**

* Try put a new cube: 3D projection of a cube stack.

* Wait, retry another choice: 2D projection of a cube stack.

* Imagine the back view: 2D projection of a cube stack.

### Detailed Analysis

**Section 1:**

* The "Verbal Observations" and "Visual Observations" both refer to the same cube stack, suggesting two different ways of perceiving the same object.

* The "Multi-Observable Markov Decision Process" illustrates a state transition model with observations, states, and actions.

**Section 2:**

* "World Reconstruction" shows how different views of an object can be used to create a world model and then infer the back view.

* "World Simulation" shows how a world model can be used to simulate different configurations of the object.

**Section 3:**

* The "World Model-Based Chain-of-Thought Formulations" section presents a problem-solving approach using world models. It involves reconstructing the full structure from three views, imagining the back view, and then trying a new cube placement, retrying with another choice when the attempt does not work.

### Key Observations

* The diagrams use 2D and 3D projections to represent cube stacks.

* The "World Model" is a central component in both reconstruction and simulation.

* The chain-of-thought formulation involves iterative steps of reconstruction, simulation, and decision-making.

### Interpretation

The image illustrates the concept of building and using world models for object understanding and manipulation. It demonstrates how different observations can be integrated into a coherent model, and how this model can be used for tasks such as reconstructing hidden views or simulating the effects of actions. The chain-of-thought formulation highlights the iterative and reasoning-based nature of problem-solving using world models. The diagrams suggest a system that can perceive an object from multiple viewpoints, create an internal representation of it, and then use that representation to reason about its properties and how it can be modified.


EXPERT: gemma-3-27b-it-free VERSION 2

RUNTIME: google-free/gemma-3-27b-it


## Diagram: World Model Capabilities & Chain-of-Thought Formulations

### Overview

This diagram illustrates the capabilities of world models, specifically focusing on how they integrate verbal and visual observations, reconstruct and simulate worlds, and formulate chain-of-thought solutions to problems. It presents a framework for understanding how an agent can reason about and interact with its environment. The diagram is divided into three main sections: Multiple Observations of the World, Atomic Capabilities of World Models, and World Model-Based Chain-of-Thought Formulations.

### Components/Axes

The diagram consists of several interconnected components:

* **Multiple Observations of the World:** This section includes "Verbal Observations" (text descriptions) and "Visual Observations" (cube stack images).

* **Multi-Observable Markov Decision Process:** Depicts a state transition system with observations (o_φ), a state (s), an action (a), and a next state (s').

* **Atomic Capabilities of World Models:** Divided into "World Reconstruction" and "World Simulation" sections.

* **World Reconstruction:** Takes top, front, and right views of a cube stack as input and attempts to reconstruct the full structure.

* **World Simulation:** Uses a "World Model" to simulate the effects of actions on the reconstructed world.

* **World Model-Based Chain-of-Thought Formulations:** Demonstrates a step-by-step reasoning process to solve a cube stack problem.

* **Person Icon:** Represents the agent performing the task.

* **Cube Stack Images:** Used as visual inputs and outputs throughout the diagram.

* **Text Boxes:** Contain descriptions of the process and problem statements.

### Detailed Analysis

**1. Multiple Observations of the World:**

* **Verbal Observations:** "A stack of cubes with an L-shaped front view and an inverted L-shaped right view." and "A stack of cubes positioned at (0,0,0), (1,0,0), (0,1,0), and (0,0,1)."

* **Visual Observations:** Two 3D cube stack arrangements are shown. The first is an L-shape, and the second is a set of four cubes at coordinates (0,0,0), (1,0,0), (0,1,0), and (0,0,1).

**2. Multi-Observable Markov Decision Process:**

* This section shows a set of observations (o_φ1, o_φ2, o_φ3…), a state (s), an action (a), and a resulting next state (s'). The ellipsis (…) indicates that this process continues iteratively.
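A minimal Python sketch of this structure, under stated assumptions: the state is a set of cube coordinates, the only action is placing one cube, the transition is deterministic, and each observation function is a different "view" of the same state. None of these names (`PlaceCube`, `transition`, `OBSERVERS`) come from the figure; they are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, Tuple

Cube = Tuple[int, int, int]
State = FrozenSet[Cube]

@dataclass(frozen=True)
class PlaceCube:
    """Hypothetical action: add one unit cube at `position`."""
    position: Cube

def transition(state: State, action: PlaceCube) -> State:
    # Assumed deterministic dynamics: the next state is the old stack plus the new cube.
    return state | {action.position}

# Several observation functions over the same underlying state.
OBSERVERS: Dict[str, Callable[[State], object]] = {
    "coordinates": lambda s: sorted(s),                            # verbal-style listing
    "front_view": lambda s: frozenset((x, z) for x, y, z in s),    # 2D projection
    "top_view": lambda s: frozenset((x, y) for x, y, z in s),      # 2D projection
}

def observe(state: State) -> Dict[str, object]:
    return {name: fn(state) for name, fn in OBSERVERS.items()}

s = frozenset({(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)})
a = PlaceCube((2, 0, 0))
s_next = transition(s, a)

obs = observe(s)            # observations of the current state
obs_next = observe(s_next)  # observations of the next state
print(obs_next["coordinates"])
```

The point of the sketch is only that one state yields several observations at once, and that the action changes the state rather than any single observation directly.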

**3. Atomic Capabilities of World Models:**

* **World Reconstruction:**

* Input Views: Top, Front, Right views of a cube stack.

* Intermediate Step: "World Model" is used to reconstruct the cube stack.

* Output: Reconstructed cube stack with coordinates (0,0,0), (1,0,0), (0,1,0), (0,0,1).

* **World Simulation:**

* Input: Reconstructed cube stack.

* Process: "World Model" simulates the effects of actions.

* Output: Simulated cube stack with coordinates (0,0,0), (1,0,0), (0,1,0), (0,0,1), (2,0,0).

**4. World Model-Based Chain-of-Thought Formulations:**

* **Problem Statement:** "Given the three views of a cube stack… how can we modify the stack to match the desired back view?"

* **Chain-of-Thought Steps:**

* Step 1: Input Views (Top, Front, Right).

* Step 2: "Reconstruct the full structure" – resulting in a cube stack.

* Step 3: "Imagine the back view" – resulting in a cube stack.

* Step 4: "Try put a new cube" – resulting in a cube stack.

* Step 5: "Wait, retry another choice" – resulting in a cube stack.

* Step 6: "Imagine the back view" – resulting in a cube stack.

* Step 7: "Get the answer: Put at (2,0,0)" – resulting in a cube stack.
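The try, imagine, retry pattern in these steps can be sketched as a small search loop. This is a hedged illustration rather than the method behind the figure: the target back view is written out by hand from the stated answer, candidates are limited to empty cells that share a face with the stack and have non-negative coordinates, and the back view mirrors the x axis. All of these are assumptions.

```python
from typing import FrozenSet, List, Optional, Tuple

Cube = Tuple[int, int, int]
View = FrozenSet[Tuple[int, int]]

def back_view(cubes) -> View:
    # Assumed convention: the back view is the (x, z) projection with x mirrored.
    return frozenset((-x, z) for x, y, z in cubes)

def candidate_positions(stack: FrozenSet[Cube]) -> List[Cube]:
    """Empty cells touching the stack face-to-face, restricted to coordinates >= 0."""
    offsets = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
    cells = set()
    for x, y, z in stack:
        for dx, dy, dz in offsets:
            cell = (x + dx, y + dy, z + dz)
            if cell not in stack and min(cell) >= 0:
                cells.add(cell)
    return sorted(cells)

def solve(stack: FrozenSet[Cube], target: View) -> Tuple[Optional[Cube], List[str]]:
    trace = []
    for cube in candidate_positions(stack):
        imagined = back_view(stack | {cube})  # simulate, then imagine the back view
        if imagined == target:
            trace.append(f"Put at {cube}: back view matches")
            return cube, trace
        trace.append(f"Tried {cube}: back view differs, retry another choice")
    return None, trace

stack = frozenset({(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)})
# Hypothetical target, chosen to be consistent with the answer quoted in the figure.
target = frozenset({(0, 0), (-1, 0), (-2, 0), (0, 1)})

answer, trace = solve(stack, target)
print("\n".join(trace))
print("answer:", answer)
```

Under these assumptions the loop rejects several placements before reaching (2,0,0), which mirrors the "Wait, retry another choice" step.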

### Key Observations

* The diagram emphasizes the iterative nature of world modeling, with reconstruction and simulation steps being repeated.

* The chain-of-thought formulation demonstrates a problem-solving approach that involves hypothesis generation, testing, and refinement.

* The use of both verbal and visual observations highlights the importance of multi-modal input for world modeling.

* The Markov Decision Process section suggests that the agent's actions are based on observations and lead to state transitions.

### Interpretation

The diagram presents a conceptual framework for how an intelligent agent can build and utilize world models to understand and interact with its environment. The agent leverages both verbal and visual information to reconstruct a representation of the world, simulate potential actions, and formulate solutions to problems. The chain-of-thought approach demonstrates a deliberate reasoning process, where the agent explores different possibilities and refines its understanding based on feedback.

The Markov Decision Process component suggests that the agent operates within a probabilistic framework, where actions have uncertain outcomes. The world model serves as a crucial component in this process, allowing the agent to predict the consequences of its actions and make informed decisions.

The diagram highlights the importance of abstraction and representation in intelligent systems. The world model provides a simplified representation of the environment, enabling the agent to reason about complex scenarios without being overwhelmed by details. The use of coordinates (e.g., (0,0,0), (1,0,0)) suggests that the world model employs a symbolic representation of objects and their relationships.

The diagram's overall message is that effective world modeling requires the integration of multiple sources of information, a robust simulation capability, and a systematic reasoning process. This framework has implications for the development of artificial intelligence systems that can operate in complex and dynamic environments.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Diagram: World Model Capabilities and Formulations

### Overview

This image is a technical diagram illustrating the concept of "World Models" in artificial intelligence, specifically focusing on their atomic capabilities (reconstruction and simulation) and their application in chain-of-thought reasoning. The diagram is divided into three horizontal panels, each exploring a different facet of the concept using a consistent example of 3D cube structures.

### Components/Axes

The diagram is structured into three main panels, each with a blue header:

1. **Top Panel: "Multiple Observations of the World"**

* **Left Section:** Shows a 3D cube structure (an L-shape with an inverted L on top) and two types of observations:

* **Verbal Observations:** Text descriptions: "A stack of cubes with an L-shaped front view and an inverted L-shaped right view." and "A stack of unit cubes positioned at (0,0,0), (1,0,0), (0,1,0), and (0,0,1)."

* **Visual Observations:** Four 2D line-drawing projections of the cube stack from different angles.

* **Right Section:** A diagram titled **"Multi-Observable Markov Decision Process"**.

* **Components:** A state circle (`s`), an action circle (`a`), and a next state circle (`s'`). Arrows indicate transitions.

* **Observations:** Above the state circles are observation nodes (`o_φ1`, `o_φ2`, `o_φ3` for state `s`; `o'_φ1`, `o'_φ2`, `o'_φ3` for state `s'`), indicating multiple observation types for each state.

* **Legend/Labels:** "Observations" (top), "State" (below `s`), "Action" (below `a`).

2. **Middle Panel: "Atomic Capabilities of World Models"**

* **Left Sub-panel: "World Reconstruction"**

* **Inputs:** Three 2D views labeled "Top view", "Front view", "Right view".

* **Process:** Arrows point from the views into a central pink box labeled "World Model".

* **Outputs:** Arrows point from the "World Model" to:

1. A 3D reconstruction of the cube stack.

2. A "Back view" (2D projection).

3. A coordinate list: "(0,0,0), (1,0,0), (0,1,0), (0,0,1)".

* **Right Sub-panel: "World Simulation"**

* **Inputs:** Three different starting states (a 3D cube stack, a 2D view, and a coordinate list).

* **Process:** Each input has an arrow pointing to a pink "World Model" box.

* **Outputs:** Each "World Model" box has an arrow pointing to a predicted next state:

1. A new 3D cube configuration.

2. A new 2D view.

3. A new coordinate list: "(0,0,0), (1,0,0), (0,1,0), (0,0,1), (2,0,0)".

3. **Bottom Panel: "World Model-Based Chain-of-Thought Formulations"**

* **Problem Statement:** A user icon asks: "Given the three views of a cube stack [icon] [icon] [icon], how can we modify the stack to match the desired back view [icon]?"

* **Process Flow:** A robot icon initiates a flowchart with two main phases, connected by red dashed lines indicating feedback loops.

* **Phase 1: "World Reconstruction"** (Left side, blue background)

* Steps: "Top view", "Front view", "Right view" → "Reconstruct the full structure" (3D icon) → "Imagine the back view" (2D icon).

* **Phase 2: "World Simulation"** (Right side, pink background)

* Steps: "Try put a new cube" (3D icon) → "Imagine the back view" (2D icon) → Decision point: "Wait, retry another choice" (loops back) or proceed.

* **Final Output:** An arrow leads to "Get the answer: Put at (2,0,0)".

### Detailed Analysis

* **Cube Structure:** The primary example is a 4-cube structure. Its verbal description and coordinate list define it as occupying positions (0,0,0), (1,0,0), (0,1,0), and (0,0,1) in a 3D grid.

* **World Model Functions:**

* **Reconstruction:** The model takes multiple 2D perspectives (top, front, right) as input and infers the complete 3D structure, its other 2D projections (back view), and its explicit coordinate representation.

* **Simulation:** The model takes a current state (in any representation: 3D, 2D, or coordinates) and an implied action (e.g., "add a cube") to predict the resulting future state. The example shows adding a cube at (2,0,0).

* **Chain-of-Thought Logic:** The bottom panel demonstrates a problem-solving loop. The agent first reconstructs the current object from given views. It then simulates potential actions (adding a cube), imagines the resulting back view, and compares it to the target. If mismatched, it loops back to try a different action. The solution is to place a cube at coordinate (2,0,0).
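The reconstruction capability described above can be illustrated with a simple geometric stand-in: keep every grid cell whose three projections all fall inside the given views. This is an assumption-laden sketch, not a description of how a learned world model works. The axis convention, the hand-written input views, and the choice of the largest consistent solid are all hypothetical, and in general three views do not determine a stack uniquely.

```python
from itertools import product
from typing import Set, Tuple

Cell = Tuple[int, int]
Cube = Tuple[int, int, int]

def reconstruct(top: Set[Cell], front: Set[Cell], right: Set[Cell]) -> Set[Cube]:
    """Largest set of cubes whose projections stay inside all three views.

    Assumed convention: top = (x, y), front = (x, z), right = (y, z).
    """
    xs = sorted({x for x, _ in top})
    ys = sorted({y for _, y in top})
    zs = sorted({z for _, z in front})
    return {
        (x, y, z)
        for x, y, z in product(xs, ys, zs)
        if (x, y) in top and (x, z) in front and (y, z) in right
    }

# Hand-written views for the four-cube example (assumed, not read off the figure).
top = {(0, 0), (1, 0), (0, 1)}
front = {(0, 0), (1, 0), (0, 1)}
right = {(0, 0), (1, 0), (0, 1)}

cubes = reconstruct(top, front, right)
print(sorted(cubes))

# Once the 3D structure is known, an unseen view can be derived from it.
back = {(-x, z) for x, y, z in cubes}
print(sorted(back))
```

For this particular example the largest consistent solid coincides with the four coordinates listed in the diagram; for other inputs it may contain extra cubes that the views cannot rule out.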

### Key Observations

1. **Multi-Modal Representation:** The diagram emphasizes that a "world" can be represented interchangeably as 3D models, 2D views, or numerical coordinates. The World Model operates across these modalities.

2. **MDP Integration:** The top-right explicitly frames the problem within a Multi-Observable Markov Decision Process, where a single underlying state (`s`) can produce multiple observation types (`o_φ`).

3. **Feedback-Driven Reasoning:** The chain-of-thought process is not linear but iterative, using simulation and imagination ("Imagine the back view") to test hypotheses before committing to an action.

4. **Consistent Example:** The same 4-cube L-shaped structure is used throughout all panels, providing a concrete thread to understand the abstract concepts.

### Interpretation

This diagram argues that robust AI reasoning about the physical world requires two core, interconnected capabilities: **reconstruction** (building an internal model from partial sensory data) and **simulation** (predicting the consequences of actions within that model). The "World Model" is presented as the central engine enabling both.

The **Peircean investigative reading** suggests the diagram is making a case for a specific architecture of intelligence:

* **The Sign (Representation):** The cube in its various forms (3D, 2D, coordinates) is the representational sign.

* **The Object (The Actual World):** The true, complete 3D structure is the object the signs point to.

* **The Interpretant (The Reasoning Process):** The chain-of-thought flowchart is the interpretant—the process of using signs (reconstructions) and predictive models (simulations) to derive meaning and solve problems. The feedback loops are critical, showing that understanding is an active, abductive process of hypothesizing and testing.

The practical implication is that for an AI to answer a question like "how do I change this object to look like that?", it cannot rely on pattern matching alone. It must first *understand* the current state (reconstruction), then *imagine* the effects of its actions (simulation), and use that internal simulation to guide its physical or logical intervention. The coordinate "(2,0,0)" is not just an answer; it's the output of a simulated experiment conducted within the model's internal world.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Diagram: Multi-Observational World Modeling and Decision-Making Framework

### Overview

The image presents a technical framework for multi-observational world modeling, decision-making processes, and chain-of-thought reasoning. It combines visual-spatial reasoning with formal computational models, using cube-stack examples to illustrate concepts.

### Components/Axes

1. **Top Section: Multiple Observations of the World**

- **Visual Elements**:

- Cube stack with labeled positions: (0,0,0), (1,0,0), (0,1,0), (0,0,1)

- L-shaped front view and inverted L-shaped right view

- **Textual Elements**:

- "Verbal Observations" (textual descriptions of cube positions)

- "Visual Observations" (diagrammatic representations)

- "Multi-Observable Markov Decision Process" (formal model with state transitions)

- **Key Symbols**:

- State (s) → Action (a) → Next State (s')

- Observations of the current state (oφ₁, oφ₂, oφ₃) and of the next state (o'φ₁, o'φ₂, o'φ₃)

2. **Middle Section: Atomic Capabilities of World Models**

- **Left Subsection: World Reconstruction**

- Input: Top view, Front view, Right view

- Output: Coordinate representations (e.g., (0,0,0), (1,0,0))

- **Right Subsection: World Simulation**

- Input: Coordinate representations

- Output: Modified cube configurations

- **Central Element**: "World Model" (black box processing inputs to outputs)

3. **Bottom Section: World Model-Based Chain-of-Thought Formulations**

- **Visual Flow**:

- Robot interface with cube stack

- Three-step process: Reconstruction → Simulation → Iterative Refinement

- **Key Text**:

- "Given the three views... how can we modify the stack to match the desired back view?"

- "Reconstruct the full structure" → "Try put a new cube" → "Wait, retry another choice"

### Detailed Analysis

1. **Multi-Observable Markov Decision Process**

- States represent cube configurations with positional coordinates

- Actions modify cube positions (e.g., adding/removing cubes)

- Observations of the current state (oφ) and of the next state (o'φ) show different perspective representations

2. **World Model Architecture**

- Takes three orthogonal views (top/front/right) as input

- Outputs 3D coordinate representations of cube positions

- Processes spatial relationships through formal coordinate systems

3. **Chain-of-Thought Workflow**

- Starts with visual observations (top/front/right views)

- Uses world model to reconstruct 3D structure

- Simulates cube additions/removals

- Iterates through multiple attempts to achieve target configuration

### Key Observations

1. **Spatial Reasoning Integration**

- Combines 2D visual observations with 3D coordinate representations

- Uses formal coordinate systems (x,y,z) for spatial reasoning

2. **Iterative Problem Solving**

- Demonstrates trial-and-error process in cube manipulation

- Shows feedback loop between simulation and observation

3. **Formal Model Components**

- Markov decision process framework for sequential decision making

- World model as central processing unit for spatial transformations

### Interpretation

This framework demonstrates how AI systems can integrate multiple sensory inputs (visual observations) with formal spatial reasoning (coordinate systems) to perform complex tasks. The cube-stack example illustrates:

1. **Perception**: Converting 2D views into 3D spatial understanding

2. **Reasoning**: Using world models to predict outcomes of actions

3. **Action**: Iteratively modifying the environment based on simulated outcomes

The Markov decision process component suggests a probabilistic approach to decision-making under uncertainty, while the chain-of-thought formulation emphasizes the importance of iterative refinement in complex problem-solving tasks. The system appears designed to handle tasks requiring both spatial reasoning and sequential decision-making capabilities.
