## Multi-Line Chart: Model Accuracy Across Mathematical Topics

### Overview

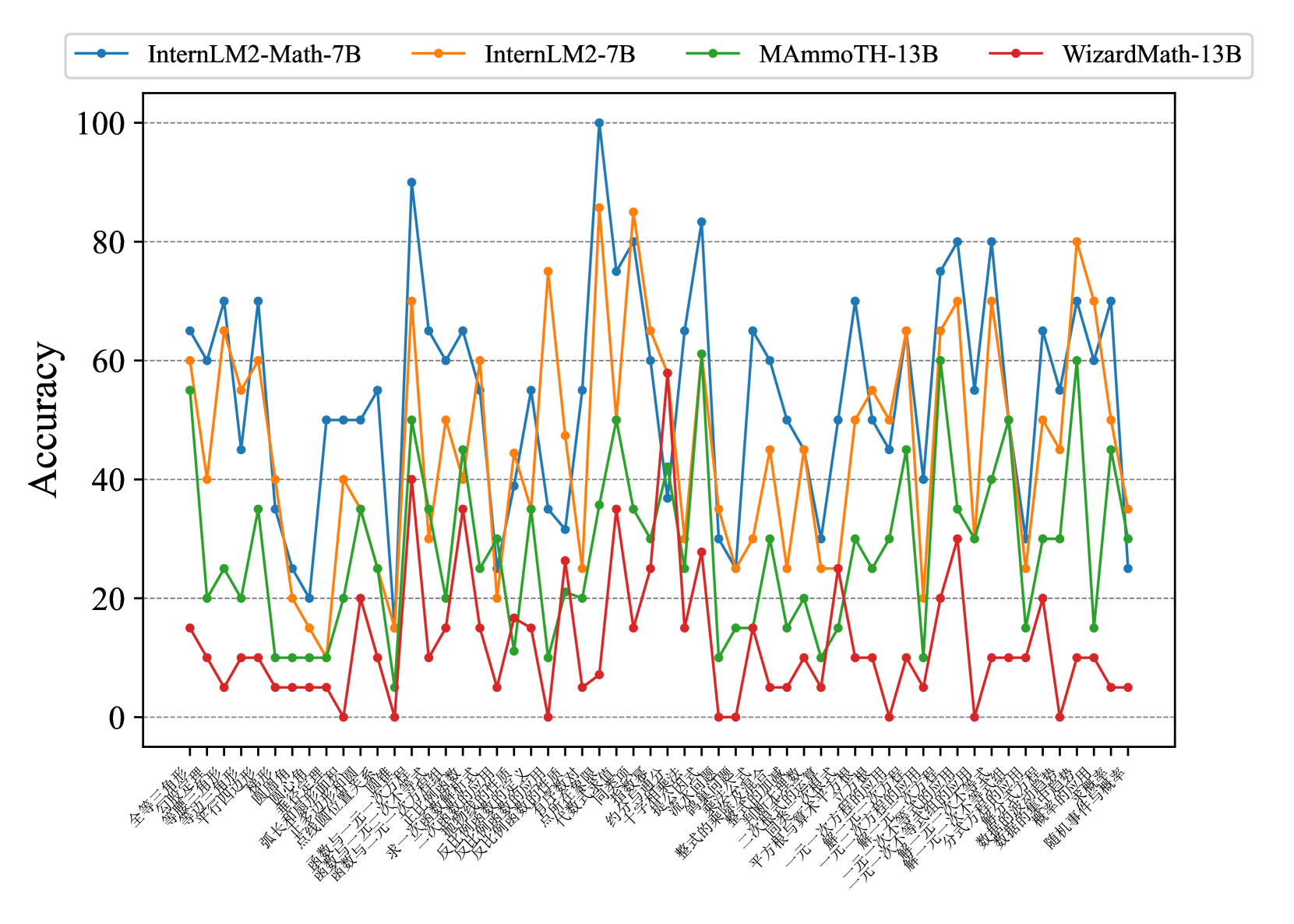

This is a multi-line chart comparing the accuracy (0-100%) of four different large language models across a wide range of mathematical topics. The chart is dense, with approximately 40 distinct topics plotted on the x-axis. The overall visual impression is one of high variability, with models showing significant performance differences depending on the specific mathematical domain.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **Y-Axis:**

    * **Label:** "Accuracy"

    * **Scale:** Linear, from 0 to 100.

    * **Major Ticks:** 0, 20, 40, 60, 80, 100.

    * **Grid Lines:** Horizontal dashed lines at each major tick.

* **X-Axis:**

    * **Label:** Not explicitly labeled, but contains a series of mathematical topic names.

    * **Language:** The labels are in **Chinese**.

    * **Content:** A dense series of approximately 40 mathematical topic labels, rotated at a 45-degree angle for readability. The topics span geometry, algebra, functions, equations, and probability/statistics.

* **Legend:**

    * **Position:** Top center, above the plot area.

    * **Content:** Four entries, each with a colored line and marker:

        1. **Blue line with circle markers:** `InternLM2-Math-7B`

        2. **Orange line with circle markers:** `InternLM2-7B`

        3. **Green line with circle markers:** `MAmmoTH-13B`

        4. **Red line with circle markers:** `WizardMath-13B`
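
The layout described above could be approximated with a short matplotlib sketch. This is only an illustration of the axes, grid, and legend settings; the topic names and accuracy values are placeholders invented for the example (they are not read from the chart), and `build_chart` is a hypothetical helper name.

```python
import matplotlib

matplotlib.use("Agg")  # headless backend so the sketch runs without a display
import matplotlib.pyplot as plt

# Placeholder topics and values: NOT taken from the chart, for layout only.
TOPICS = ["Topic A", "Topic B", "Topic C", "Topic D"]
PLACEHOLDER_ACCURACY = {
    "InternLM2-Math-7B": [60, 70, 95, 10],
    "InternLM2-7B": [55, 65, 85, 15],
    "MAmmoTH-13B": [30, 40, 50, 5],
    "WizardMath-13B": [10, 20, 15, 0],
}
# Line colors matching the legend described in the text.
COLORS = {
    "InternLM2-Math-7B": "tab:blue",
    "InternLM2-7B": "tab:orange",
    "MAmmoTH-13B": "tab:green",
    "WizardMath-13B": "tab:red",
}


def build_chart(topics, accuracy):
    """Draw one line with circle markers per model and return (fig, ax)."""
    fig, ax = plt.subplots(figsize=(12, 5))
    positions = range(len(topics))
    for model, values in accuracy.items():
        ax.plot(positions, values, marker="o", color=COLORS[model], label=model)

    # Y-axis: linear 0-100 with major ticks every 20 and dashed horizontal grid.
    ax.set_ylabel("Accuracy")
    ax.set_ylim(0, 100)
    ax.set_yticks(range(0, 101, 20))
    ax.grid(axis="y", linestyle="--")

    # X-axis: unlabeled, topic names rotated 45 degrees.
    ax.set_xticks(list(positions))
    ax.set_xticklabels(topics, rotation=45, ha="right")

    # Legend centered above the plot area, one column per model.
    ax.legend(loc="lower center", bbox_to_anchor=(0.5, 1.02), ncol=len(accuracy))
    fig.tight_layout()
    return fig, ax


fig, ax = build_chart(TOPICS, PLACEHOLDER_ACCURACY)
```

Rendering the original Chinese tick labels would additionally require a font with CJK glyphs, which is left out of this sketch.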

### Detailed Analysis

**Trend Verification & Data Points (Approximate):**

The chart shows highly variable performance. No single model dominates across all topics. The lines frequently cross, indicating model strengths are topic-specific.

* **InternLM2-Math-7B (Blue):** This model shows the highest peak performance, reaching near 100% accuracy on one topic (likely "有理数的混合运算" - Mixed Operations with Rational Numbers). It generally performs in the upper tier (40-80% range) for many topics but has significant dips, including one near 0% (likely "随机事件与概率" - Random Events and Probability). Its trend is highly volatile.

* **InternLM2-7B (Orange):** This model closely follows the trend of its math-specialized counterpart (Blue) but often at a slightly lower accuracy level. Its peaks are strong (80-85%) but not as high as the Blue model's maximum. It also experiences deep troughs, sometimes below 20%.

* **MAmmoTH-13B (Green):** This model's performance is generally in the middle-to-lower range (10-60%). It has a few notable peaks around 60% but is frequently the third-best performer. Its trend line is less volatile than the top two but still shows significant variation.

* **WizardMath-13B (Red):** This model consistently performs the worst across almost all topics. Its accuracy rarely exceeds 40% and frequently hovers between 0-20%. It has a few small peaks but is the clear bottom performer in this comparison.

**Cluster Analysis (Grouping similar x-axis positions):**

* **High-Performance Cluster (Left side, ~60-70% for top models):** Topics like "全等三角形" (Congruent Triangles), "等腰三角形" (Isosceles Triangles), "平行四边形" (Parallelograms). Blue and Orange models lead here.

* **Peak Performance Cluster (Center):** A sharp peak for Blue (~100%) and Orange (~85%) on a topic related to rational number operations. Green peaks here as well (~50%).

* **Low-Performance Cluster (Right side):** Topics related to probability and statistics ("数据的收集、整理与描述" - Data Collection, Organization, and Description; "随机事件与概率" - Random Events and Probability). All models show a sharp decline, with WizardMath (Red) and often MAmmoTH (Green) near 0-10%.

* **Notable Outlier Point:** There is a data point where the Red line (WizardMath) spikes to ~60%, briefly matching the Green line (MAmmoTH). This occurs on a topic in the middle of the chart (possibly "分式方程" - Fractional Equations).

### Key Observations

1. **Specialization Matters:** The `InternLM2-Math-7B` (Blue) model, presumably fine-tuned for mathematics, achieves the highest overall accuracy and generally outperforms the base `InternLM2-7B` (Orange), though the base model remains competitive.

2. **Consistent Underperformance:** `WizardMath-13B` (Red) is consistently the lowest-performing model across nearly the entire spectrum of topics tested.

3. **Topic-Dependent Difficulty:** All models struggle significantly with topics on the far right of the chart (probability/statistics), suggesting these are more challenging for the evaluated models than core geometry or algebra topics.

4. **High Variability:** Performance is not stable; accuracy can swing by 50-80 percentage points between adjacent topics for the same model, indicating that the mathematical domain is a critical factor in model performance.
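
The adjacent-topic swing mentioned in the last observation is simply the absolute difference between neighboring points on one model's line. A minimal sketch of that arithmetic follows; `max_adjacent_swing` is a hypothetical helper, and the example values are invented for illustration rather than read from the chart.

```python
def max_adjacent_swing(accuracies):
    """Return (swing, index) for the largest absolute change between neighbors.

    `index` is the position of the left-hand point of the pair.
    """
    if len(accuracies) < 2:
        raise ValueError("need at least two data points")
    swings = [abs(b - a) for a, b in zip(accuracies, accuracies[1:])]
    best = max(range(len(swings)), key=swings.__getitem__)
    return swings[best], best


# Hypothetical accuracies for one model across five consecutive topics.
example = [65, 70, 95, 20, 45]
swing, index = max_adjacent_swing(example)
print(f"largest swing: {swing} points between topics {index} and {index + 1}")
```

Applied to each model's real series, the same function would give a quick check of whether the 50-80 point range quoted above holds.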

### Interpretation

This chart provides a comparative benchmark of mathematical reasoning capabilities across four LLMs. The data suggests that:

* **Mathematical fine-tuning appears effective:** The `InternLM2-Math-7B` model's higher peak performance and general lead over its base variant suggest that targeted training improves mathematical problem-solving accuracy, at least on this benchmark.

* **There is no universal "best" math model:** While Blue leads overall, Orange sometimes matches or exceeds it on specific topics. The choice of model could depend on the specific mathematical domain of interest.

* **Probability and statistics represent a significant challenge:** The sharp decline of all four models on the rightmost topics points to a potential weakness of the evaluated models in handling uncertainty, data analysis, and probabilistic reasoning compared to deterministic algebraic or geometric reasoning.

* **WizardMath-13B's architecture or training may be ill-suited** for this broad set of mathematical tasks: apart from a single spike to ~60% in the middle of the chart, it does not reach competitive accuracy on any topic.
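
The claim that no single model is best everywhere can be checked by counting, per topic, which model has the highest accuracy. The sketch below assumes the accuracies are available as one list per model, aligned by topic; `count_topic_wins` is a hypothetical helper and the numbers are placeholders, not values extracted from the chart.

```python
def count_topic_wins(accuracy):
    """Count how many topics each model leads.

    `accuracy` maps model name -> list of accuracies aligned by topic.
    Ties are credited to every tied model.
    """
    models = list(accuracy)
    n_topics = len(accuracy[models[0]])
    if any(len(values) != n_topics for values in accuracy.values()):
        raise ValueError("all models need the same number of topics")

    wins = {model: 0 for model in models}
    for i in range(n_topics):
        top = max(accuracy[model][i] for model in models)
        for model in models:
            if accuracy[model][i] == top:
                wins[model] += 1
    return wins


# Placeholder accuracies for four topics (illustrative only).
placeholder = {
    "InternLM2-Math-7B": [60, 70, 95, 10],
    "InternLM2-7B": [55, 72, 85, 15],
    "MAmmoTH-13B": [30, 40, 50, 5],
    "WizardMath-13B": [10, 20, 15, 0],
}
print(count_topic_wins(placeholder))
```

A win count spread across more than one model, as in this placeholder data, is what "no universal best" would look like numerically.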

**Language Note:** The chart's x-axis labels are in **Chinese**. English translations of the topic names are provided in the analysis where relevant (e.g., "全等三角形" = Congruent Triangles). The model names and the y-axis label ("Accuracy") are in English.