## Violin Plot Chart: US Foreign Policy Model Accuracy

### Overview

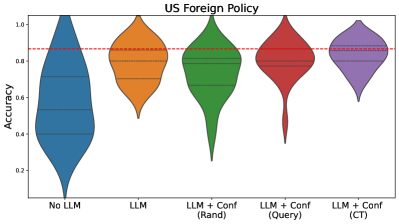

The image displays a violin plot comparing the accuracy distributions of five models or configurations on a "US Foreign Policy" task. Each violin shows the estimated probability density of accuracy scores for one category, together with its median, interquartile range, and overall distribution shape.
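A violin's outline is usually a kernel density estimate (KDE) of the scores, mirrored about the category's position on the x-axis. The following is a minimal Gaussian KDE sketch in plain Python, assuming Scott's rule for the bandwidth (the default in Matplotlib's `Axes.violinplot` and SciPy's `gaussian_kde`). The kernel and bandwidth actually used for this figure are not stated, and `kde_density` and `scott_bandwidth` are illustrative helpers, not library functions.

```python
import math
import statistics


def scott_bandwidth(scores):
    """Scott's rule of thumb for 1-D data: h = stdev * n ** (-1/5)."""
    return statistics.stdev(scores) * len(scores) ** (-1 / 5)


def kde_density(scores, bandwidth):
    """Return a function estimating the probability density at accuracy x.

    Each score contributes a Gaussian bump of width `bandwidth`; a violin's
    half-width at accuracy x is drawn proportional to density(x).
    """
    n = len(scores)
    norm = 1.0 / (n * bandwidth * math.sqrt(2 * math.pi))

    def density(x):
        return norm * sum(
            math.exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in scores
        )

    return density
```

Because the Gaussian kernel has unbounded support, the estimate assigns some density outside [0, 1]; plotting libraries differ in whether the drawn violin is trimmed to the observed range of scores.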

### Components/Axes

* **Chart Title:** "US Foreign Policy" (centered at the top).

* **Y-Axis:** Labeled "Accuracy." The scale runs from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Contains five categorical labels for the models being compared:

1. No LLM

2. LLM

3. LLM + Conf (Randi)

4. LLM + Conf (Query)

5. LLM + Conf (CT)

* **Reference Line:** A horizontal red dashed line is drawn across the chart at an accuracy value of approximately 0.85.

* **Legend:** There is no separate legend box. The categories are identified by their labels on the x-axis and are distinguished by color (blue, orange, green, red, purple).

### Detailed Analysis

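The chart presents five violin plots, one per category. The width of each violin at a given accuracy reflects the estimated density of scores at that value, and the horizontal lines inside each violin mark the quartiles: the median and the first and third quartiles, which bound the interquartile range.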
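The quartile lines can be recomputed from raw per-run scores, which the chart itself does not provide. A minimal sketch using Python's standard `statistics.quantiles`, assuming linear interpolation (`method="inclusive"`, the same rule as NumPy's default percentile computation); the plotting tool behind this figure may interpolate differently, and `quartile_summary` is a hypothetical helper name.

```python
import statistics


def quartile_summary(scores):
    """Return (q1, median, q3, iqr) for one category's accuracy scores.

    method="inclusive" interpolates linearly between order statistics.
    """
    q1, median, q3 = statistics.quantiles(scores, n=4, method="inclusive")
    return q1, median, q3, q3 - q1
```

Applied to the "No LLM" scores, this should give values near the reading below (q1 ≈ 0.35, median ≈ 0.5, q3 ≈ 0.65, so an IQR of about 0.3).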

1. **No LLM (Blue):**

* **Trend/Shape:** This distribution is very wide at the bottom (low accuracy) and tapers sharply towards the top. It has the largest spread and the lowest median.

* **Key Points:** The median accuracy is approximately 0.5. The interquartile range (the middle 50% of scores) spans roughly 0.35 to 0.65. The distribution extends from near 0.0 up to about 0.95.

2. **LLM (Orange):**

* **Trend/Shape:** This distribution is more symmetric and centered higher than "No LLM." It has a classic violin shape, wider in the middle.

* **Key Points:** The median accuracy is approximately 0.7. The interquartile range spans from about 0.6 to 0.8. The distribution ranges from ~0.45 to ~0.95.

3. **LLM + Conf (Randi) (Green):**

* **Trend/Shape:** This distribution is left-skewed: scores are concentrated near the top, with a long, thin tail extending downwards.

* **Key Points:** The median accuracy is high, approximately 0.85, aligning with the red dashed reference line. The interquartile range is relatively narrow, between ~0.75 and ~0.9. However, the long tail indicates that some runs scored as low as ~0.1.

4. **LLM + Conf (Query) (Red):**

* **Trend/Shape:** Similar in shape to the "Randi" configuration but slightly less skewed. It has a high median and a pronounced lower tail.

* **Key Points:** The median accuracy is slightly below the red line, approximately 0.82. The interquartile range is between ~0.7 and ~0.9. The lower tail extends to about 0.2.

5. **LLM + Conf (CT) (Purple):**

* **Trend/Shape:** This is the most compact and symmetric distribution of the five. It is concentrated around a high median with minimal spread.

* **Key Points:** The median accuracy is approximately 0.85, matching the red reference line. The interquartile range is very tight, between ~0.8 and ~0.9. The overall range is the smallest, from ~0.7 to ~0.95.
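The shape descriptions above ("skewed", "symmetric") can be made quantitative with two standard measures. In this sketch, Bowley's quartile skewness uses only Q1, the median, and Q3, so it can be applied directly to the approximate values read off the chart; the moment coefficient g1 needs the raw per-run scores, which the chart does not provide. Negative values indicate a longer lower tail. The function names are illustrative.

```python
def quartile_skewness(q1, median, q3):
    """Bowley skewness: (q3 + q1 - 2*median) / (q3 - q1), in [-1, 1].

    Reflects only the middle 50% of scores, not the tails.
    """
    return (q3 + q1 - 2 * median) / (q3 - q1)


def moment_skewness(scores):
    """Population moment coefficient g1 = m3 / m2**1.5 (needs raw scores)."""
    n = len(scores)
    mean = sum(scores) / n
    m2 = sum((s - mean) ** 2 for s in scores) / n
    m3 = sum((s - mean) ** 3 for s in scores) / n
    return m3 / m2 ** 1.5


# Approximate (q1, median, q3) read off the chart above.
print(round(quartile_skewness(0.75, 0.85, 0.90), 2))  # "Randi": -0.33
print(round(quartile_skewness(0.70, 0.82, 0.90), 2))  # "Query": -0.2
print(round(quartile_skewness(0.80, 0.85, 0.90), 2))  # "CT": about zero
```

On the chart's approximate quartiles, "Randi" comes out more skewed than "Query" and "CT" is roughly symmetric, matching the visual description. Because Bowley's measure ignores the tails, the long lower tails of "Randi" and "Query" would show up only in the moment coefficient computed from raw scores.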

### Key Observations

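* **Performance Hierarchy:** Median accuracy generally rises as components are added, though not strictly in x-axis order (the "Query" variant, fourth from the left, has a slightly lower median than "Randi"): "No LLM" < "LLM" < "LLM + Conf (Query)" < "LLM + Conf (Randi)" ≈ "LLM + Conf (CT)".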

* **Stability vs. Peak Performance:** Although "LLM + Conf (Randi)" and "LLM + Conf (CT)" share a similar high median (~0.85), the "CT" variant is far more stable (compact distribution), whereas "Randi" has much higher variance, with a long lower tail that signals a risk of very poor runs.

* **Benchmark Line:** The red dashed line at ~0.85 appears to represent a target or benchmark accuracy. Only the three "LLM + Conf" variants have medians at or near this line.

* **Impact of LLM:** Adding an LLM ("LLM" vs. "No LLM") substantially raises the median accuracy (from ~0.5 to ~0.7) and removes the extreme low end: the lowest "LLM" scores are around 0.45, versus near 0.0 for "No LLM".
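The ordering of medians can be checked mechanically from the approximate values listed in the Detailed Analysis. In this sketch, medians within a tie tolerance of 0.01 (an assumed reading precision, not a value from the figure) share a tier; `rank_by_median` is an illustrative name.

```python
# Approximate medians read off the chart, listed in x-axis order.
MEDIANS = {
    "No LLM": 0.50,
    "LLM": 0.70,
    "LLM + Conf (Randi)": 0.85,
    "LLM + Conf (Query)": 0.82,
    "LLM + Conf (CT)": 0.85,
}


def rank_by_median(medians, tie_tolerance=0.01):
    """Group categories into ascending tiers of median accuracy.

    A category joins the current tier if its median is within
    `tie_tolerance` of that tier's lowest median.
    """
    tiers = []
    for name, value in sorted(medians.items(), key=lambda item: item[1]):
        if tiers and value - tiers[-1][0][1] <= tie_tolerance:
            tiers[-1].append((name, value))
        else:
            tiers.append([(name, value)])
    return [[name for name, _ in tier] for tier in tiers]


for tier in rank_by_median(MEDIANS):
    print(" = ".join(tier))
```

The resulting tiers place "Query" below "Randi" even though it sits to the right of "Randi" on the x-axis.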

### Interpretation

This chart compares different approaches to a US Foreign Policy-related task, measured by accuracy. The data suggest that:

1. Using a Large Language Model (LLM) alone provides a substantial improvement over not using one, raising the median accuracy from ~0.5 to ~0.7.

2. Augmenting the LLM with a confidence-based method ("Conf") further raises median accuracy to roughly the benchmark level (~0.82 to ~0.85).

3. The choice of confidence method strongly affects reliability. The "CT" method yields the most consistent high performance, making it the most robust choice among those shown. The "Randi" and "Query" methods reach similar medians but are prone to occasional severe failures, as their long lower tails show (scores down to ~0.1 and ~0.2, respectively).

4. The "No LLM" baseline shows that without an LLM, outcomes on this task are highly unreliable, with a wide spread and a low median (~0.5).
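The point about occasional severe failures concerns the lower tail, which the quartile lines do not capture. Given raw per-run scores (not available from the chart), a minimal sketch could summarize the tail as the share of runs below a failure threshold and the share at or above the ~0.85 reference line. The 0.5 failure threshold and the helper name `tail_summary` are assumptions for illustration only.

```python
def tail_summary(scores, benchmark=0.85, failure_threshold=0.5):
    """Summarize the lower tail of one category's accuracy scores.

    benchmark: the red dashed reference line (~0.85 on the chart).
    failure_threshold: hypothetical cut-off below which a run counts as failed.
    """
    n = len(scores)
    return {
        "min": min(scores),
        "share_at_or_above_benchmark": sum(s >= benchmark for s in scores) / n,
        "share_failed": sum(s < failure_threshold for s in scores) / n,
    }
```

Under this threshold, "CT" (range ~0.7 to ~0.95) would have a failure share of zero, whereas "Randi" (tail to ~0.1) and "Query" (tail to ~0.2) would not.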

Taken together, the visualization supports using an LLM with the "CT" confidence method for this application, since it matches the highest median accuracy of any configuration while having the smallest spread.
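This recommendation amounts to a simple decision rule: prefer the highest median, and among near-ties prefer the smallest spread. A sketch of that rule applied to the approximate quartiles from the Detailed Analysis, assuming a tie tolerance of 0.01 and the IQR as the spread measure; `pick_config` is an illustrative name, and the original selection criterion is not stated.

```python
# Approximate (median, q1, q3) read off the chart in the Detailed Analysis.
SUMMARY = {
    "No LLM": (0.50, 0.35, 0.65),
    "LLM": (0.70, 0.60, 0.80),
    "LLM + Conf (Randi)": (0.85, 0.75, 0.90),
    "LLM + Conf (Query)": (0.82, 0.70, 0.90),
    "LLM + Conf (CT)": (0.85, 0.80, 0.90),
}


def pick_config(summary, tie_tolerance=0.01):
    """Pick the highest-median category, breaking near-ties by smallest IQR.

    tie_tolerance is an assumed reading precision, not a value from the figure.
    """
    best_median = max(median for median, _, _ in summary.values())
    contenders = {
        name: q3 - q1
        for name, (median, q1, q3) in summary.items()
        if best_median - median <= tie_tolerance
    }
    return min(contenders, key=contenders.get)


print(pick_config(SUMMARY))  # LLM + Conf (CT)
```

Using the IQR keeps the rule computable from values readable on the chart; with raw scores, a tail measure such as a failure share would penalize "Randi" and "Query" more directly.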