
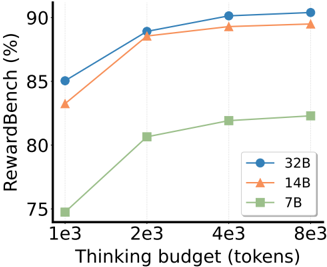

## Line Chart: RewardBench Performance vs. Thinking Budget

### Overview

This line chart illustrates the relationship between "Thinking budget" (in tokens) and "RewardBench" performance (in percentage) for three different model sizes: 32B, 14B, and 7B. The chart shows how performance changes as the thinking budget increases.

### Components/Axes

* **X-axis:** "Thinking budget (tokens)" with markers at 1e3 (1000), 2e3 (2000), 4e3 (4000), and 8e3 (8000).

* **Y-axis:** "RewardBench (%)" with a scale ranging from approximately 75% to 92%.

* **Legend:** Located in the bottom-right corner, identifying the three data series:

    * Blue circle: 32B

    * Orange triangle: 14B

    * Green square: 7B

### Detailed Analysis

* **32B (Blue Line):** The blue line starts at approximately 85% at 1e3 tokens and rises to around 90% at 2e3 tokens. After that it climbs only slightly, reaching approximately 91% at 8e3 tokens.

    * 1e3 tokens: ~85%

    * 2e3 tokens: ~90%

    * 4e3 tokens: ~90.5%

    * 8e3 tokens: ~91%

* **14B (Orange Line):** The orange line also exhibits an upward trend, beginning at approximately 82% at 1e3 tokens. It increases sharply to around 89% at 2e3 tokens, then plateaus, remaining at approximately 89% through 8e3 tokens.

    * 1e3 tokens: ~82%

    * 2e3 tokens: ~89%

    * 4e3 tokens: ~89%

    * 8e3 tokens: ~89%

* **7B (Green Line):** The green line starts at approximately 75% at 1e3 tokens, rises to around 81% at 2e3 tokens and approximately 84% at 4e3 tokens, then plateaus, remaining at approximately 84% at 8e3 tokens.

    * 1e3 tokens: ~75%

    * 2e3 tokens: ~81%

    * 4e3 tokens: ~84%

    * 8e3 tokens: ~84%
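The per-point readings above can be turned into gains per doubling of the thinking budget. The following is a minimal sketch, assuming the approximate values read off the chart are accurate enough for this purpose; the `readings` dictionary and the `gains_per_doubling` helper are illustrative names, not part of the original figure or any published code.

```python
# Approximate values read off the chart (assumption: visual estimates only).
# Keys of the inner dicts are thinking budgets in tokens; values are RewardBench (%).
readings = {
    "32B": {1000: 85.0, 2000: 90.0, 4000: 90.5, 8000: 91.0},
    "14B": {1000: 82.0, 2000: 89.0, 4000: 89.0, 8000: 89.0},
    "7B": {1000: 75.0, 2000: 81.0, 4000: 84.0, 8000: 84.0},
}


def gains_per_doubling(series):
    """Return the score change between each pair of consecutive budgets."""
    budgets = sorted(series)
    return [series[hi] - series[lo] for lo, hi in zip(budgets, budgets[1:])]


for model, series in readings.items():
    print(model, gains_per_doubling(series))
# 32B [5.0, 0.5, 0.5]
# 14B [7.0, 0.0, 0.0]
# 7B [6.0, 3.0, 0.0]
```

Because the inputs are estimates from the plot, the differences are only indicative, particularly the sub-point changes for the 32B model.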

### Key Observations

* The 32B model consistently outperforms the 14B and 7B models across all thinking budget levels.

* The 14B model shows a significant performance increase between 1e3 and 2e3 tokens, but then plateaus.

* The 7B model scores lowest at every budget. It improves through 4e3 tokens (from ~75% to ~84%) and then plateaus.

* All three models show diminishing returns beyond 2e3 tokens: between 2e3 and 8e3 tokens the 32B model gains about 1 point, the 14B model gains none, and the 7B model gains about 3 points, all of it by 4e3 tokens.
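These observations can be checked against the approximate chart readings. The sketch below assumes the values estimated in the Detailed Analysis section; the variable names are illustrative only.

```python
# Approximate values read off the chart (assumption: visual estimates only).
readings = {
    "32B": {1000: 85.0, 2000: 90.0, 4000: 90.5, 8000: 91.0},
    "14B": {1000: 82.0, 2000: 89.0, 4000: 89.0, 8000: 89.0},
    "7B": {1000: 75.0, 2000: 81.0, 4000: 84.0, 8000: 84.0},
}
budgets = [1000, 2000, 4000, 8000]

# Does the size ordering 32B > 14B > 7B hold at every budget?
ordering_holds = all(
    readings["32B"][b] > readings["14B"][b] > readings["7B"][b] for b in budgets
)

# Score change from the smallest to the largest budget, per model.
total_gain = {m: s[budgets[-1]] - s[budgets[0]] for m, s in readings.items()}

# Score change beyond 2e3 tokens, per model.
gain_after_2k = {m: s[8000] - s[2000] for m, s in readings.items()}

print(ordering_holds)   # True
print(total_gain)       # {'32B': 6.0, '14B': 7.0, '7B': 9.0}
print(gain_after_2k)    # {'32B': 1.0, '14B': 0.0, '7B': 3.0}
```

On these estimates, most of each model's total gain comes from the first doubling, from 1e3 to 2e3 tokens.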

### Interpretation

The data suggest that increasing the thinking budget generally improves the performance of these models on the RewardBench benchmark, but the benefit diminishes as the budget grows. The 32B model achieves the highest scores at every budget. The largest absolute gain, however, belongs to the 7B model (about 9 points from 1e3 to 8e3 tokens, versus about 7 for the 14B model and about 6 for the 32B model), which indicates that smaller models can also benefit from a larger token budget for reasoning. The 14B model plateaus at 2e3 tokens and the 7B model at 4e3 tokens, which may mean that beyond those points performance is limited by factors other than the thinking budget, such as model capacity or training data; the chart alone cannot confirm this. The gap between the models at every budget highlights the importance of model size for this benchmark.