## Line Chart: Scaling up Test-Time Compute with Recurrent Depth

### Overview

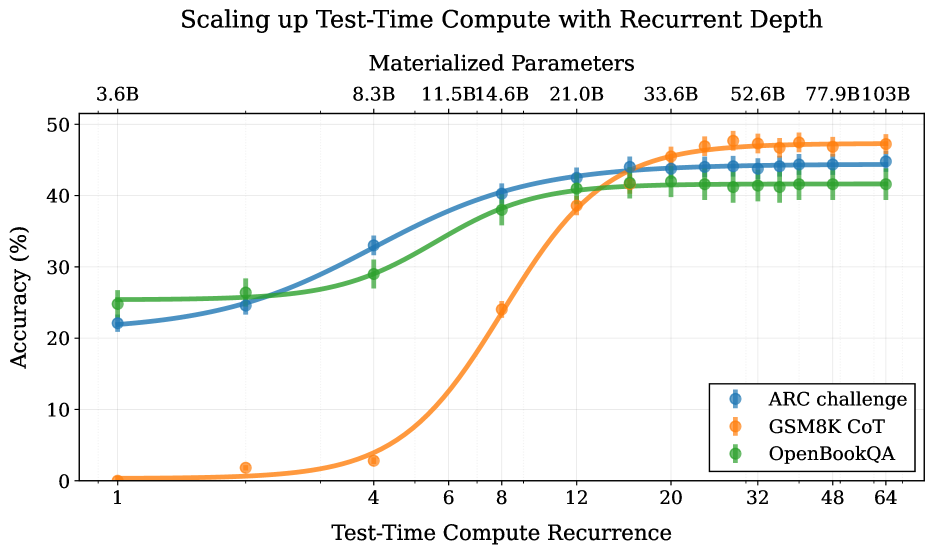

The chart plots accuracy (y-axis) against test-time compute recurrence (x-axis) for three datasets: ARC challenge, GSM8K CoT, and OpenBookQA. Accuracy rises with recurrence on all three, but the shape of the curve differs by dataset.

### Components/Axes

- **X-axis**: "Test-Time Compute Recurrence" (logarithmic scale: 1, 4, 8, 12, 20, 32, 48, 64)

- **Y-axis**: "Accuracy (%)" (linear scale: 0–50%)

- **Legend**: Located in the bottom-right corner, mapping colors to datasets:

  - Blue: ARC challenge

  - Orange: GSM8K CoT

  - Green: OpenBookQA

### Detailed Analysis

1. **ARC Challenge (Blue Line)**:

   - Starts at ~22% accuracy at 1x compute.

   - Gradually increases to ~45% at 64x compute.

   - Steady upward trend with minimal fluctuations.

2. **GSM8K CoT (Orange Line)**:

   - Begins near 0% at 1x compute.

   - Rises sharply after 8x compute, reaching ~47% at 64x.

   - Highest accuracy of the three datasets at the highest compute levels.

3. **OpenBookQA (Green Line)**:

   - Starts at ~25% at 1x compute.

   - Gradual increase to ~42% at 64x compute.

   - Flattens near 42% after 32x compute, showing diminishing returns.
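The endpoint readings above can be turned into absolute gains with a few lines of Python. This is only a rough sketch: the numbers are the approximate values read off the chart (with "near 0%" taken as 0), not exact results, and the names `endpoints` and `absolute_gain` are illustrative.

```python
# Approximate accuracy (%) at recurrence 1 and recurrence 64.
# These are eyeballed chart readings, not exact reported results.
# Assumption: "near 0%" for GSM8K CoT is treated as 0.0.
endpoints = {
    "ARC challenge": (22.0, 45.0),
    "GSM8K CoT": (0.0, 47.0),
    "OpenBookQA": (25.0, 42.0),
}


def absolute_gain(start, end):
    """Return the accuracy gain in percentage points."""
    return end - start


gains = {name: absolute_gain(lo, hi) for name, (lo, hi) in endpoints.items()}

# Print datasets from largest to smallest gain.
for name, gain in sorted(gains.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: +{gain:.0f} points")
```

With these readings, GSM8K CoT gains the most (about 47 points), followed by ARC challenge (about 23) and OpenBookQA (about 17).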

### Key Observations

- **GSM8K CoT** demonstrates the most significant performance gains with increased compute, particularly after 8x recurrence.

- **ARC challenge** shows consistent improvement, reaching ~45% at 64x compute.

- **OpenBookQA** exhibits the lowest accuracy gains, stabilizing at ~42% despite higher compute.

- All three datasets end within roughly 42–47% accuracy at 64x compute, a much narrower range than at 1x compute.
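One way to check how closely the curves approach each other is to compare the spread between the best and worst dataset at the lowest and highest recurrence. The sketch below uses the approximate chart readings from the detailed analysis (with "near 0%" taken as 0); the helper `spread` is a hypothetical name introduced for illustration.

```python
# Approximate accuracies (%) read off the chart; not exact reported results.
# Assumption: "near 0%" for GSM8K CoT is treated as 0.0.
accuracy_at_1 = {"ARC challenge": 22.0, "GSM8K CoT": 0.0, "OpenBookQA": 25.0}
accuracy_at_64 = {"ARC challenge": 45.0, "GSM8K CoT": 47.0, "OpenBookQA": 42.0}


def spread(accuracies):
    """Return the gap in percentage points between the best and worst dataset."""
    values = list(accuracies.values())
    return max(values) - min(values)


start_spread = spread(accuracy_at_1)
final_spread = spread(accuracy_at_64)

print(f"Spread at recurrence 1:  {start_spread:.0f} points")
print(f"Spread at recurrence 64: {final_spread:.0f} points")
```

Under these readings the gap between datasets shrinks from about 25 points to about 5 points, though the curves do not meet at a single value.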

### Interpretation

The data suggests that scaling test-time compute improves accuracy across all datasets, but the rate of improvement varies. GSM8K CoT benefits disproportionately from increased compute, likely because it relies on multi-step reasoning. OpenBookQA's plateau may reflect architectural or data limitations, although the chart alone cannot confirm this. The flattening of some curves at high recurrence implies that further scaling may yield only small additional gains, which highlights the importance of allocating compute per task rather than simply increasing it.