## Line Chart: Surprisal vs. Training Steps for Match and Mismatch Conditions

### Overview

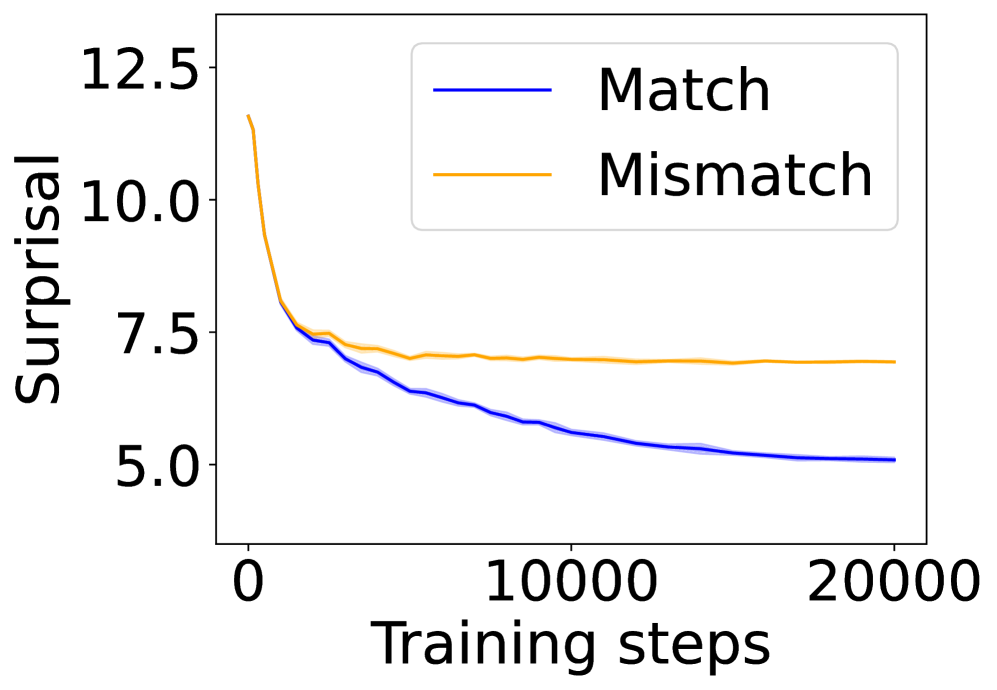

The image is a line chart that plots surprisal against training steps for two conditions, "Match" and "Mismatch". It shows how surprisal changes for each condition as training progresses.

### Components/Axes

* **Chart Type:** Line chart with two data series.

* **Y-Axis (Vertical):**

    * **Label:** "Surprisal"

    * **Scale:** Linear scale.

    * **Range:** Approximately 5.0 to 12.5.

    * **Major Tick Marks:** 5.0, 7.5, 10.0, 12.5.

* **X-Axis (Horizontal):**

    * **Label:** "Training steps"

    * **Scale:** Linear scale.

    * **Range:** 0 to 20,000.

    * **Major Tick Marks:** 0, 10000, 20000.

* **Legend:**

    * **Position:** Top-right corner of the plot area.

    * **Entry 1:** A solid blue line labeled "Match".

    * **Entry 2:** A solid orange line labeled "Mismatch".

### Detailed Analysis

**1. "Match" Series (Blue Line):**

* **Trend:** The line shows a consistent, monotonic downward slope across the entire training period. The rate of decrease is steepest at the beginning and gradually slows, approaching an asymptote.

* **Data Points (Approximate):**

    * Step 0: ~12.0

    * Step 2500: ~7.5

    * Step 5000: ~6.5

    * Step 10000: ~5.8

    * Step 15000: ~5.3

    * Step 20000: ~5.1

**2. "Mismatch" Series (Orange Line):**

* **Trend:** The line exhibits a very sharp initial decrease, followed by a rapid transition to a near-plateau. After approximately 5,000 steps, the line remains relatively flat with minor fluctuations.

* **Data Points (Approximate):**

    * Step 0: ~12.0 (similar starting point to Match)

    * Step 1000: ~8.0

    * Step 2500: ~7.5

    * Step 5000: ~7.2

    * Step 10000: ~7.1

    * Step 15000: ~7.0

    * Step 20000: ~7.0

**3. Relationship Between Series:**

* Both series begin at approximately the same high surprisal value (~12.0) at step 0.

* Judging by the approximate readings above, the two lines stay close through roughly step 2,500 (both ~7.5) and diverge clearly after that.

* The "Match" line continues to improve (lower surprisal) throughout training, while the "Mismatch" line's improvement stalls early.

* The gap between the two lines widens over time, from about 0.7 at step 5,000 to about 1.9 at step 20,000. At step 20,000, the "Mismatch" surprisal (~7.0) is approximately 37% higher than the "Match" surprisal (~5.1).
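
The gap figures can be reproduced with a few lines of Python. This is a minimal sketch that uses only the approximate readings listed above; the variable names are illustrative, and the results inherit the imprecision of values read off a chart.

```python
# Approximate values read off the chart (the data points listed above).
match = {0: 12.0, 2500: 7.5, 5000: 6.5, 10000: 5.8, 15000: 5.3, 20000: 5.1}
mismatch = {0: 12.0, 1000: 8.0, 2500: 7.5, 5000: 7.2,
            10000: 7.1, 15000: 7.0, 20000: 7.0}

# Compare only the steps for which both series have a reading.
shared_steps = sorted(set(match) & set(mismatch))

# Absolute gap (Mismatch minus Match) at each shared step.
gaps = {step: round(mismatch[step] - match[step], 2) for step in shared_steps}

# Relative gap at the final step, as a percentage of the Match value.
final_step = shared_steps[-1]
relative_gap_pct = (
    100 * (mismatch[final_step] - match[final_step]) / match[final_step]
)

for step in shared_steps:
    print(f"step {step:>6}: gap = {gaps[step]:.1f}")
print(f"relative gap at step {final_step}: {relative_gap_pct:.0f}%")
```

With these readings the absolute gap is 0.0 at steps 0 and 2,500, then grows to 0.7, 1.3, 1.7, and 1.9, and the relative gap at step 20,000 comes out at about 37%.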

### Key Observations

1. **Divergent Learning Trajectories:** The primary observation is the stark difference in learning outcomes. Surprisal keeps falling throughout training for the "Match" condition, but stalls near 7.0 for the "Mismatch" condition.

2. **Asymptotic Behavior:** Both curves show signs of approaching an asymptote, but at very different levels. The "Match" curve is still gently descending at step 20,000, suggesting potential for further minor improvement. The "Mismatch" curve has effectively plateaued.

3. **Initial Similarity:** The near-identical starting point (~12.0) indicates that, before any training, the model's surprisal (uncertainty/error) is equally high for both conditions.
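
The late-training behavior in observation 2 can be checked roughly by comparing the average slope of each curve over the final 5,000 steps. The sketch below assumes the approximate readings at steps 15,000 and 20,000 listed earlier; `slope_per_1k` is a hypothetical helper, and because the readings are only accurate to about ±0.1, the result is indicative rather than precise.

```python
# Approximate readings from the chart at the last two listed steps.
match_late = {15000: 5.3, 20000: 5.1}
mismatch_late = {15000: 7.0, 20000: 7.0}


def slope_per_1k(points):
    """Average change in surprisal per 1,000 steps between two readings."""
    (step0, y0), (step1, y1) = sorted(points.items())
    return (y1 - y0) / (step1 - step0) * 1000


match_slope = slope_per_1k(match_late)
mismatch_slope = slope_per_1k(mismatch_late)

print(f"Match:    {match_slope:+.3f} per 1k steps")
print(f"Mismatch: {mismatch_slope:+.3f} per 1k steps")
```

A small negative slope for "Match" alongside a zero slope for "Mismatch" is consistent with one curve still descending gently while the other has flattened.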

### Interpretation

This chart likely illustrates a fundamental concept in machine learning or cognitive science: the difference between learning within a consistent, expected framework ("Match") versus encountering data that violates or mismatches that framework ("Mismatch").

* **What the data suggests:** The model learns to predict or process "Match" data more efficiently over time, as shown by the steadily decreasing surprisal. Surprisal measures unexpectedness (the negative log-probability assigned to an observed outcome), so a falling value is consistent with the model's predictions aligning more closely with the data.

* **The "Mismatch" plateau:** The rapid plateau for "Mismatch" data suggests a limit to the model's adaptability. After an initial adjustment, surprisal for this condition stops falling. This could reflect a boundary of the model's capacity, a persistent distributional shift, or an incompatibility between the model's learned priors and the mismatched data; the chart alone does not distinguish between these explanations.

* **Why it matters:** The chart suggests that training does not benefit both conditions equally. If "Match" and "Mismatch" correspond to in-distribution and out-of-distribution (or conflicting) data, which the chart itself does not state, this points to a potential limitation: the system keeps improving on "Match" data but stops improving on "Mismatch" data despite extensive training. The widening gap, about 1.9 by step 20,000, quantifies that disparity.
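
For readers unfamiliar with the metric, surprisal in the information-theoretic sense is the negative logarithm of the probability assigned to an observed outcome. The sketch below illustrates that definition only; the chart does not state the logarithm base, nor whether the plotted values are averaged over tokens or examples, so the base-2 default and the probabilities used here are assumptions for illustration.

```python
import math


def surprisal(p, base=2.0):
    """Surprisal (self-information) of an outcome with probability p: -log_base(p)."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    return -math.log(p, base)


# Illustrative probabilities only; these are not taken from the chart.
for p in (0.5, 0.03, 0.008):
    print(f"p = {p}: surprisal = {surprisal(p):.2f}")
```

The lower the probability a model assigns to what it actually observes, the higher the surprisal, which is why a falling curve indicates better predictions.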