## Diagram: Three-Step Process for Predictive Posterior Inference

### Overview

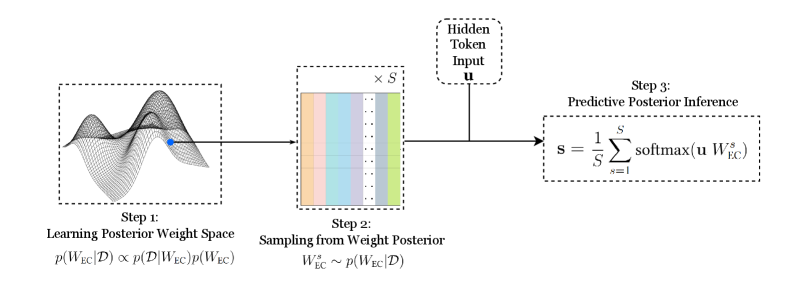

The image is a technical diagram illustrating a three-step computational process, likely for Bayesian inference or uncertainty estimation in a machine learning model. The flow moves from left to right: learning a distribution over weights, sampling from it, and using those samples to make a prediction. The diagram combines graphical representations, mathematical notation, and text labels.

### Components/Axes

The diagram is segmented into three primary step boxes, connected by directional arrows indicating the flow of data or process, plus one auxiliary input box that feeds the final step.

1. **Step 1: Learning Posterior Weight Space** (Leftmost box)

* **Visual:** A 3D wireframe surface plot with multiple peaks and valleys, representing a complex probability distribution. A single blue dot is placed on one of the slopes.

* **Text Label:** "Step 1: Learning Posterior Weight Space"

* **Mathematical Notation:** `p(W_EC|D) ∝ p(D|W_EC)p(W_EC)`

* **Spatial Position:** Located on the far left of the diagram.

2. **Step 2: Sampling from Weight Posterior** (Central box)

* **Visual:** A rectangular block divided into multiple vertical columns of different colors (from left to right: orange, light blue, purple, grey, green). The notation "× S" is placed above the top-right corner of this block.

* **Text Label:** "Step 2: Sampling from Weight Posterior"

* **Mathematical Notation:** `W_EC^s ~ p(W_EC|D)`

* **Spatial Position:** Centered in the diagram, receiving an arrow from Step 1.

3. **Step 3: Predictive Posterior Inference** (Rightmost box)

* **Visual:** A rectangular box containing a mathematical formula.

* **Text Label:** "Step 3: Predictive Posterior Inference"

* **Mathematical Notation:** `s = (1/S) * Σ_{s=1}^{S} softmax(u W_EC^s)`

* **Spatial Position:** Located on the far right of the diagram.

4. **Hidden Token Input** (Top-center box)

* **Visual:** A dashed-line box containing text.

* **Text Label:** "Hidden Token Input u"

* **Spatial Position:** Positioned above the arrow connecting Step 2 to Step 3, indicating it is an input to the final step.

### Detailed Analysis

The process is defined by the following sequence and relationships:

* **Flow Direction:** The process flows unidirectionally from Step 1 → Step 2 → Step 3. An additional input (`u`) is introduced between Step 2 and Step 3.

* **Step 1 Details:** This step represents the training or fitting phase. The formula `p(W_EC|D) ∝ p(D|W_EC)p(W_EC)` is Bayes' theorem written up to a normalizing constant: the posterior over the weights (`W_EC`) given the data (`D`) is proportional to the likelihood `p(D|W_EC)` times the prior `p(W_EC)`. The 3D plot visually represents this complex, multi-modal posterior distribution.

* **Step 2 Details:** This step involves generating `S` samples from the learned posterior distribution. The colored block represents a collection of `S` weight matrices or vectors (`W_EC^s`), where each color likely corresponds to a different sample `s`. The notation `~` means "sampled from."

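* **Step 3 Details:** This is the inference or prediction phase. For a given input (the "Hidden Token Input u"), the model computes a prediction `s`. As transcribed, the formula uses `s` both for this output and for the sample index in the sum; the two are distinct quantities. The prediction is computed by: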

1. Taking each of the `S` weight samples (`W_EC^s`) from Step 2.

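2. Computing the softmax of the product `u W_EC^s` for each sample. The diagram writes the product without an operator, so it is presumably a matrix product rather than an elementwise one.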

3. Averaging these `S` softmax outputs (the `(1/S) * Σ` operation).

* **Input `u`:** The "Hidden Token Input u" is a vector or matrix that is multiplied by each sampled weight matrix `W_EC^s` before the softmax function is applied.
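Steps 2 and 3 can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the method behind the diagram: the posterior is assumed to be an independent Gaussian per weight (the diagram does not name a posterior family), `u` is assumed to be a single vector of length `d`, and the function names, shapes, and values are hypothetical.

```python
import numpy as np

def softmax(logits, axis=-1):
    """Numerically stable softmax along the given axis."""
    shifted = logits - np.max(logits, axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=axis, keepdims=True)

def sample_weights(mean, std, num_samples, rng):
    """Step 2: draw S weight matrices W_EC^s.

    ASSUMPTION: the posterior is approximated by an independent Gaussian
    per weight; the diagram does not specify how p(W_EC|D) is represented.
    """
    noise = rng.standard_normal((num_samples,) + mean.shape)
    return mean + std * noise  # shape (S, d, K)

def predictive_posterior(u, weight_samples):
    """Step 3: average softmax(u W_EC^s) over the S samples."""
    # Matrix product of u (d,) with each sampled matrix (d, K) -> (S, K).
    logits = np.einsum("d,sdk->sk", u, weight_samples)
    return softmax(logits, axis=-1).mean(axis=0)  # shape (K,)

rng = np.random.default_rng(0)
d, K, S = 4, 3, 100                   # hypothetical sizes
mean = rng.standard_normal((d, K))    # hypothetical posterior mean
std = 0.1 * np.ones((d, K))           # hypothetical posterior std
u = rng.standard_normal(d)            # hypothetical hidden token input

W_samples = sample_weights(mean, std, S, rng)
probs = predictive_posterior(u, W_samples)
print(probs, probs.sum())
```

Because each softmax output sums to 1, their average `probs` is also a valid probability vector over the `K` outputs.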

### Key Observations

* The diagram explicitly models **uncertainty** by using a distribution over weights (`p(W_EC|D)`) rather than a single point estimate.

* The final prediction `s` is an **ensemble average** over `S` different models, each parameterized by a weight sample from the posterior. The same Monte Carlo averaging is commonly used with Bayesian neural networks and Monte Carlo dropout.

* The use of the **softmax** function in Step 3 suggests the final output `s` is a probability distribution over classes (e.g., for a classification task).

* The visual metaphor in Step 1 (a complex landscape) effectively communicates the idea of a high-dimensional, non-convex posterior distribution that is difficult to characterize with a simple formula.
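The ensemble average in Step 3 can be read as a Monte Carlo estimate of the posterior predictive distribution. The following is a sketch of that reading, where the class index `y` is an assumed symbol that does not appear in the diagram:

```latex
p(y \mid u, D)
  = \int \mathrm{softmax}(u W_{EC})_y \; p(W_{EC} \mid D) \, dW_{EC}
  \approx \frac{1}{S} \sum_{s=1}^{S} \mathrm{softmax}(u W_{EC}^{s})_y,
  \qquad W_{EC}^{s} \sim p(W_{EC} \mid D)
```

The approximation improves as `S` grows, provided the samples are drawn from the posterior itself.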

### Interpretation

This diagram outlines a **Bayesian neural network inference pipeline**. The core idea is to move beyond a single "best guess" model and instead maintain a probability distribution over all plausible models (weights) that fit the training data.

1. **Learning (Step 1):** The model doesn't just find one set of optimal weights; it learns the entire landscape of probable weights. The blue dot on the surface may represent a maximum a posteriori (MAP) estimate, but the process considers the whole distribution.

2. **Sampling (Step 2):** To make this intractable distribution usable, the model draws a finite number (`S`) of representative weight configurations. Each colored column is a different "version" of the model.

3. **Prediction (Step 3):** When presented with new data (`u`), each version of the model makes its own prediction (via `softmax(u W_EC^s)`). The final output is the average of all these predictions. This averaging smooths out the idiosyncrasies of any single weight sample, which typically yields a more robust and better-calibrated prediction. The averaged output alone does not expose how much the samples disagree; the spread of the individual `softmax` outputs does. A high variance among them would indicate high model uncertainty for that input.
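One way to turn the disagreement between samples into numbers is sketched below. The diagram does not prescribe any uncertainty measure, so the two used here (per-class variance across samples and entropy of the averaged prediction) are assumptions chosen for illustration, as are the function name and the toy inputs.

```python
import numpy as np

def uncertainty_summary(per_sample_probs):
    """Summarize S softmax outputs, given as an array of shape (S, K).

    Returns the averaged prediction, its entropy, and the per-class
    variance across samples. These measures are illustrative choices,
    not something specified by the diagram.
    """
    mean_probs = per_sample_probs.mean(axis=0)
    eps = 1e-12  # avoids log(0) for classes with zero probability
    predictive_entropy = -np.sum(mean_probs * np.log(mean_probs + eps))
    per_class_variance = per_sample_probs.var(axis=0)
    return mean_probs, predictive_entropy, per_class_variance

# Toy case 1: all four weight samples agree on the first class.
agree = np.array([[0.90, 0.05, 0.05]] * 4)

# Toy case 2: the weight samples favor different classes.
disagree = np.array([
    [0.90, 0.05, 0.05],
    [0.05, 0.90, 0.05],
    [0.05, 0.05, 0.90],
    [0.90, 0.05, 0.05],
])

_, entropy_agree, var_agree = uncertainty_summary(agree)
_, entropy_disagree, var_disagree = uncertainty_summary(disagree)
print(entropy_agree, var_agree.max())
print(entropy_disagree, var_disagree.max())
```

In the second case both the entropy and the variance are larger, reflecting that the sampled models disagree about the input.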

**In essence, the diagram shows how to transform a complex, learned probability distribution over model parameters into a practical, averaged prediction for new data, providing a principled way to handle uncertainty in machine learning.**