## Diagram: Two Drafting Methods for Large Language Models

### Overview

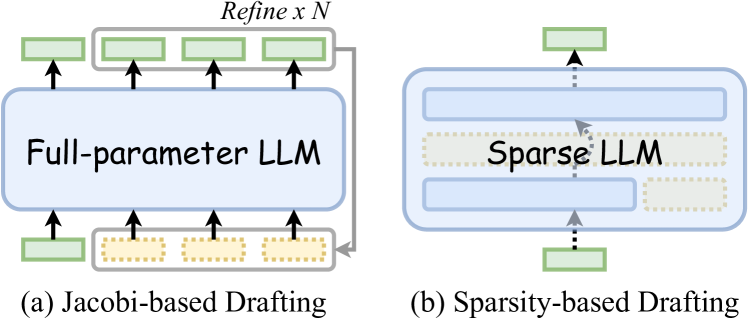

The image displays two side-by-side technical diagrams illustrating different architectural approaches for "drafting" in the context of Large Language Models (LLMs). The diagrams are labeled (a) and (b) and contrast a "Jacobi-based" method using a full-parameter model with a "Sparsity-based" method using a sparse model.

### Components/Axes

The image contains two distinct diagrams with the following labeled components:

**Diagram (a): Jacobi-based Drafting**

* **Central Component:** A large, light-blue rounded rectangle labeled **"Full-parameter LLM"**.

* **Input/Output Blocks:** Four smaller, green-outlined rectangular blocks are positioned below the central LLM, with arrows pointing upward into it. Four similar green-outlined blocks are positioned above the central LLM, with arrows pointing upward out of it.

* **Refinement Loop:** A gray, rounded rectangular container encloses the top four output blocks. This container is labeled **"Refine x N"** at its top center. A gray arrow originates from the right side of this container and loops back down to point at the rightmost input block at the bottom.

* **Flow Indicators:** Black arrows show the direction of data flow: from the bottom input blocks into the LLM, and from the LLM out to the top output blocks.

**Diagram (b): Sparsity-based Drafting**

* **Central Component:** A large, light-blue rounded rectangle containing the label **"Sparse LLM"**. Inside this rectangle, there are two solid light-blue horizontal bars (one top, one bottom) and a central, dashed-outline yellow bar.

* **Input/Output Blocks:** A single green-outlined rectangular block is positioned below the central component. A single green-outlined rectangular block is positioned above it.

* **Flow Indicators:** Dashed gray arrows connect the bottom input block to the central component and the central component to the top output block. A solid gray arrow points from the central dashed yellow bar upward to the top solid blue bar.

### Detailed Analysis

The diagrams visually encode the following technical processes:

**For (a) Jacobi-based Drafting:**

1. **Process:** The system takes multiple input drafts (represented by the four bottom blocks) and processes them simultaneously through a single, full-parameter LLM.

2. **Iteration:** The outputs (top blocks) are collected and subjected to a refinement process that is repeated N times ("Refine x N").

3. **Feedback:** The refined outputs are fed back into the system as new inputs for the next iteration, creating a closed-loop, iterative refinement cycle.
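
One way to read the loop above is as a Jacobi-style fixed-point iteration over a block of draft tokens. The sketch below is a minimal illustration under that assumption, not the method shown in the figure: `toy_next_token` is a hypothetical stand-in for a greedy LLM step, and `jacobi_refine` and `greedy_decode` are illustrative names.

```python
from typing import Callable, List, Tuple

NextToken = Callable[[List[int]], int]


def toy_next_token(prefix: List[int]) -> int:
    """Hypothetical stand-in for a greedy LLM step (deterministic in the prefix)."""
    return (sum(prefix) * 31 + len(prefix)) % 50


def jacobi_refine(
    prompt: List[int],
    n_draft: int,
    max_iters: int,
    next_token: NextToken = toy_next_token,
) -> Tuple[List[int], int]:
    """Refine a block of n_draft tokens until it stops changing or max_iters is hit."""
    draft = [0] * n_draft  # arbitrary initial guess for every position
    for iteration in range(1, max_iters + 1):
        # Every position is recomputed from the PREVIOUS draft, so a real model
        # could evaluate all positions in a single parallel forward pass.
        new_draft = [next_token(prompt + draft[:i]) for i in range(n_draft)]
        if new_draft == draft:  # fixed point reached: nothing changed
            return draft, iteration
        draft = new_draft  # feed the outputs back in as the next inputs
    return draft, max_iters


def greedy_decode(
    prompt: List[int], n: int, next_token: NextToken = toy_next_token
) -> List[int]:
    """Sequential baseline: one token per step."""
    out: List[int] = []
    for _ in range(n):
        out.append(next_token(prompt + out))
    return out


if __name__ == "__main__":
    block, passes = jacobi_refine([3, 1, 4], n_draft=4, max_iters=5)
    print(block, passes)
    print(block == greedy_decode([3, 1, 4], 4))
```

With a deterministic next-token function, the refined block matches sequential greedy decoding once it stops changing, which takes at most `n_draft + 1` passes. In this toy, each pass stands in for one parallel forward pass through the full model.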

**For (b) Sparsity-based Drafting:**

1. **Process:** The system uses a "Sparse LLM," which is visually represented as having both active (solid blue bars) and inactive or pruned (dashed yellow bar) components.

2. **Flow:** A single input draft is processed. The dashed arrows suggest a potentially conditional or selective data path through the sparse model.

3. **Internal Routing:** The solid gray arrow inside the Sparse LLM runs from the dashed yellow (inactive or pruned) component to the top solid blue (active) component, highlighting the model's sparse activation pattern.
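
The figure does not say which kind of sparsity is meant. As one possible reading, the sketch below assumes layer skipping: the draft pass runs the same model but bypasses a chosen subset of layers. The toy layers and the names `make_layers` and `forward` are hypothetical.

```python
from typing import AbstractSet, Callable, List, Tuple

Layer = Callable[[float], float]


def make_layers() -> List[Layer]:
    """Hypothetical three-layer toy model acting on a scalar hidden state."""
    return [
        lambda h: h + 1.0,  # bottom layer (always active in this sketch)
        lambda h: h * 2.0,  # middle layer (candidate for skipping)
        lambda h: h - 3.0,  # top layer (always active in this sketch)
    ]


def forward(
    h: float, layers: List[Layer], skip: AbstractSet[int] = frozenset()
) -> Tuple[float, int]:
    """Run the layers in order, bypassing any index in `skip`.

    Returns the final hidden state and the number of layers actually executed.
    """
    executed = 0
    for idx, layer in enumerate(layers):
        if idx in skip:
            continue  # skipped layer: the hidden state passes through unchanged
        h = layer(h)
        executed += 1
    return h, executed


if __name__ == "__main__":
    layers = make_layers()
    print(forward(1.0, layers))            # full model: all three layers run
    print(forward(1.0, layers, skip={1}))  # sparse draft: middle layer bypassed
```

The draft pass executes fewer layers and therefore costs less, but its output can differ from the full model's, which is why a draft produced this way would normally still need to be checked.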

### Key Observations

* **Structural Contrast:** Diagram (a) emphasizes parallel processing and iterative refinement with a single full-parameter model. Diagram (b) emphasizes internal model sparsity and a more streamlined, single-pass data flow.

* **Visual Metaphors:** The use of solid vs. dashed lines is a key visual metaphor. In (a), solid lines represent active data flow. In (b), dashed lines represent the sparse or conditional nature of the model's internal pathways and connections.

* **Complexity:** The Jacobi-based method appears more complex, involving multiple data streams and a feedback loop. The Sparsity-based method appears more streamlined at the system level but implies complexity within the model's architecture.

### Interpretation

These diagrams illustrate two distinct paradigms for producing draft outputs during LLM inference, likely in the context of speculative decoding, where cheaply generated drafts are subsequently checked by the full model.

* **Jacobi-based Drafting** suggests a method where multiple candidate drafts are generated and refined in parallel through the full model, leveraging iterative correction (akin to the Jacobi iterative method in numerical analysis, which updates all unknowns simultaneously from the previous iterate). The "Refine x N" loop is central to its operation, indicating that quality is improved through repeated passes.

* **Sparsity-based Drafting** suggests a method that relies on the inherent sparse architecture of a model (e.g., a Mixture-of-Experts model or a pruned model) to process drafts more efficiently. The single input/output path and internal sparse routing imply a focus on reducing computational cost per draft by activating only relevant parts of the network.

The core contrast is between **improving output through iterative, full-model refinement** (a) and **improving efficiency through architectural sparsity** (b). The choice between them would involve a trade-off between the quality gains from multiple refinement steps and the computational savings from sparse activation.