# Technical Document Extraction: GPT-4 vs Prometheus Analysis

## 1. Chart Identification

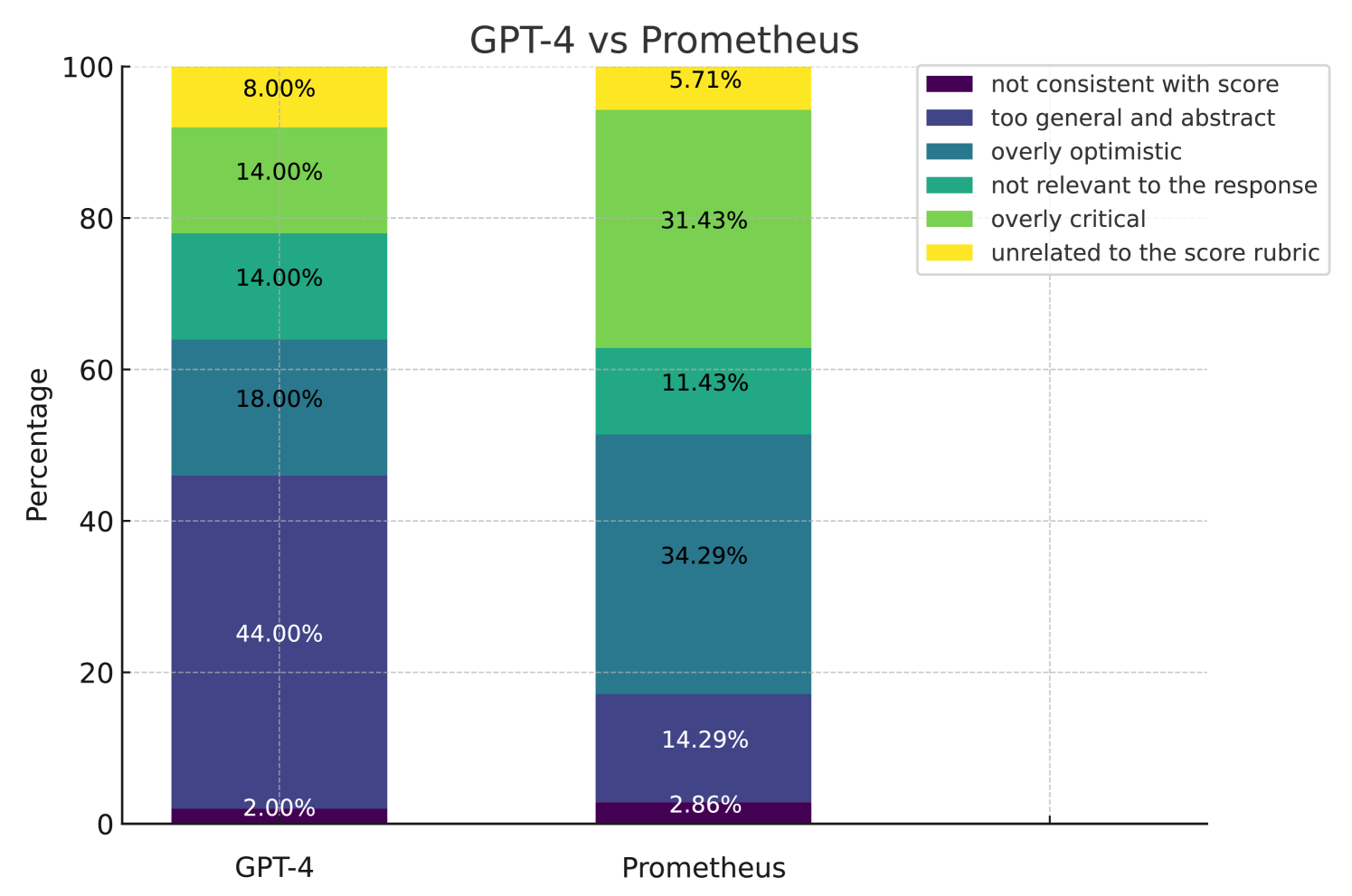

- **Type**: Stacked bar chart

- **Title**: "GPT-4 vs Prometheus"

- **Purpose**: Comparative analysis of response quality metrics between two AI models

## 2. Axis Labels and Markers

- **X-axis**:

  - Labels: ["GPT-4", "Prometheus"]

  - Position: Horizontal axis at bottom

- **Y-axis**:

  - Label: "Percentage"

  - Range: 0–100% (increments of 20%)

  - Position: Vertical axis on left

## 3. Legend Analysis

- **Location**: Right side of chart

- **Color-Category Mapping**:

  - Purple: "not consistent with score"

  - Dark Blue: "too general and abstract"

  - Teal: "overly optimistic"

  - Green: "not relevant to the response"

  - Light Green: "overly critical"

  - Yellow: "unrelated to the score rubric"

## 4. Data Extraction

### GPT-4 Bar (Left)

- **Total Height**: 100%

- **Segment Breakdown**:

  - Purple: 2.00% ("not consistent with score")

  - Dark Blue: 44.00% ("too general and abstract")

  - Teal: 18.00% ("overly optimistic")

  - Green: 14.00% ("not relevant to the response")

  - Light Green: 14.00% ("overly critical")

  - Yellow: 8.00% ("unrelated to the score rubric")

### Prometheus Bar (Right)

- **Total Height**: 100%

- **Segment Breakdown**:

  - Purple: 2.86% ("not consistent with score")

  - Dark Blue: 14.29% ("too general and abstract")

  - Teal: 34.29% ("overly optimistic")

  - Green: 11.43% ("not relevant to the response")

  - Light Green: 31.43% ("overly critical")

  - Yellow: 5.71% ("unrelated to the score rubric")
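A quick sanity check on the extracted values is to confirm that each bar's segments add up to roughly 100%. The sketch below assumes the percentages were transcribed correctly and uses a hypothetical tolerance of 0.05 points to absorb rounding in the two-decimal labels; neither the function name nor the tolerance comes from the chart itself.

```python
# Segment percentages as transcribed from the chart (order follows the legend).
segments = {
    "GPT-4": [2.00, 44.00, 18.00, 14.00, 14.00, 8.00],
    "Prometheus": [2.86, 14.29, 34.29, 11.43, 31.43, 5.71],
}


def check_total(values, tolerance=0.05):
    """Return (total, ok), where ok means the total is within `tolerance` of 100.

    The tolerance is an assumed allowance for rounding in the segment labels.
    """
    total = round(sum(values), 2)
    return total, abs(total - 100.0) <= tolerance


for model, values in segments.items():
    total, ok = check_total(values)
    print(f"{model}: total={total:.2f}% ok={ok}")
# GPT-4: total=100.00% ok=True
# Prometheus: total=100.01% ok=True
```

The Prometheus bar sums to 100.01% rather than exactly 100%, which is consistent with each label having been rounded to two decimals.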

## 5. Trend Verification

- **GPT-4 Dominant Category**:

  - "too general and abstract" (44.00%), the tallest segment

- **Prometheus Dominant Category**:

  - "overly optimistic" (34.29%), the tallest segment; "overly critical" (31.43%) is a close second

- **Notable Differences** (in percentage points):

  - Prometheus is 16.29 points higher on "overly optimistic" than GPT-4 (34.29% vs 18.00%)

  - GPT-4 is 29.71 points higher on "too general and abstract" than Prometheus (44.00% vs 14.29%)

  - Prometheus is 17.43 points higher on "overly critical" than GPT-4 (31.43% vs 14.00%)
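The dominant category and the gaps between the two bars can be recomputed directly from the extracted segment values. The following is a minimal sketch using only those values; the helper names `dominant` and `point_gap` are illustrative and not part of any source tooling.

```python
CATEGORIES = [
    "not consistent with score",
    "too general and abstract",
    "overly optimistic",
    "not relevant to the response",
    "overly critical",
    "unrelated to the score rubric",
]

# Percentages as transcribed from the two bars.
gpt4 = dict(zip(CATEGORIES, [2.00, 44.00, 18.00, 14.00, 14.00, 8.00]))
prometheus = dict(zip(CATEGORIES, [2.86, 14.29, 34.29, 11.43, 31.43, 5.71]))


def dominant(shares):
    """Return the category with the largest share in one bar."""
    return max(shares, key=shares.get)


def point_gap(a, b, category):
    """Difference a - b for one category, in percentage points."""
    return round(a[category] - b[category], 2)


print(dominant(gpt4))        # too general and abstract
print(dominant(prometheus))  # overly optimistic
print(point_gap(prometheus, gpt4, "overly optimistic"))         # 16.29
print(point_gap(gpt4, prometheus, "too general and abstract"))  # 29.71
print(point_gap(prometheus, gpt4, "overly critical"))           # 17.43
```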

## 6. Spatial Grounding

- **Legend Position**: [x=100%, y=0–100%] (right edge)

- **Bar Orientation**: Vertical stacking from bottom (lowest category) to top (highest category)

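- **Color Consistency Check**:

  - All purple segments match "not consistent with score" category

  - All dark blue segments match "too general and abstract" category

  - All teal segments match "overly optimistic" category

  - All green segments match "not relevant to the response" category

  - All light green segments match "overly critical" category

  - All yellow segments match "unrelated to the score rubric" category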

## 7. Component Isolation

### Header

- Title: "GPT-4 vs Prometheus"

- Subtitle: None

### Main Chart

- Two vertical bars side-by-side

- Each bar divided into six color-coded segments

- Percentage labels inside each segment

### Footer

- No visible footer elements in image

## 8. Data Table Reconstruction

| Model | not consistent with score | too general and abstract | overly optimistic | not relevant to the response | overly critical | unrelated to the score rubric |
|-------------|---------------------------|--------------------------|-------------------|------------------------------|-----------------|-------------------------------|
| GPT-4 | 2.00% | 44.00% | 18.00% | 14.00% | 14.00% | 8.00% |
| Prometheus | 2.86% | 14.29% | 34.29% | 11.43% | 31.43% | 5.71% |
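One way to keep the reconstructed table in sync with the segment values is to generate it from a single data structure. The sketch below is an assumption about how that might be done with plain Python; `to_markdown` is a hypothetical helper, not an existing library function.

```python
HEADERS = [
    "Model",
    "not consistent with score",
    "too general and abstract",
    "overly optimistic",
    "not relevant to the response",
    "overly critical",
    "unrelated to the score rubric",
]

# Percentages as transcribed from the chart, in legend order.
DATA = {
    "GPT-4": [2.00, 44.00, 18.00, 14.00, 14.00, 8.00],
    "Prometheus": [2.86, 14.29, 34.29, 11.43, 31.43, 5.71],
}


def to_markdown(headers, data):
    """Render a pipe-delimited Markdown table with two-decimal percentages."""
    header_row = "| " + " | ".join(headers) + " |"
    divider = "| " + " | ".join("---" for _ in headers) + " |"
    body = [
        "| " + " | ".join([model] + [f"{v:.2f}%" for v in values]) + " |"
        for model, values in data.items()
    ]
    return "\n".join([header_row, divider] + body)


table = to_markdown(HEADERS, DATA)
print(table)
```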

## 9. Language Analysis

- **Primary Language**: English

- **Secondary Language**: None detected

## 10. Critical Observations

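1. Prometheus has more than twice GPT-4's share of "overly critical" responses (31.43% vs 14.00%, a gap of 17.43 percentage points)

2. GPT-4 leans far more toward "too general and abstract" responses (44.00% vs 14.29%, roughly three times the Prometheus share)

3. Both models show low, similar "not consistent with score" rates (2.00% and 2.86%, both under 3%)

4. "Overly optimistic" responses are about 91% more frequent in Prometheus in relative terms (34.29% vs 18.00%, a gap of 16.29 percentage points)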
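Because these observations mix two kinds of comparison, it helps to separate the absolute gap (percentage points) from the relative increase (percent of the baseline). A minimal sketch for the "overly optimistic" category, using the extracted values and a hypothetical helper `relative_increase`:

```python
def relative_increase(new, base):
    """Percent by which `new` exceeds `base`, relative to `base`."""
    return (new - base) / base * 100.0


# "overly optimistic" shares as transcribed from the chart.
gpt4_optimistic = 18.00
prometheus_optimistic = 34.29

points = prometheus_optimistic - gpt4_optimistic
relative = relative_increase(prometheus_optimistic, gpt4_optimistic)
print(f"gap: {points:.2f} points, relative increase: {relative:.1f}%")
# gap: 16.29 points, relative increase: 90.5%
```

The relative figure of 90.5% is what rounds to the "91% higher" statement, while the absolute gap between the two bars is 16.29 percentage points.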