EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Chart: Gemma3-4b-it Layer1 Head Attention Weights

### Overview

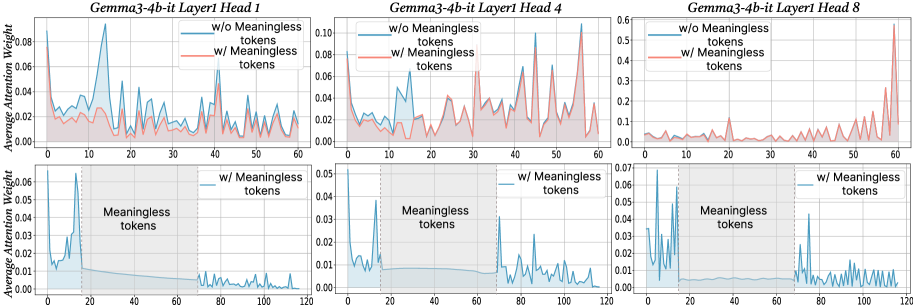

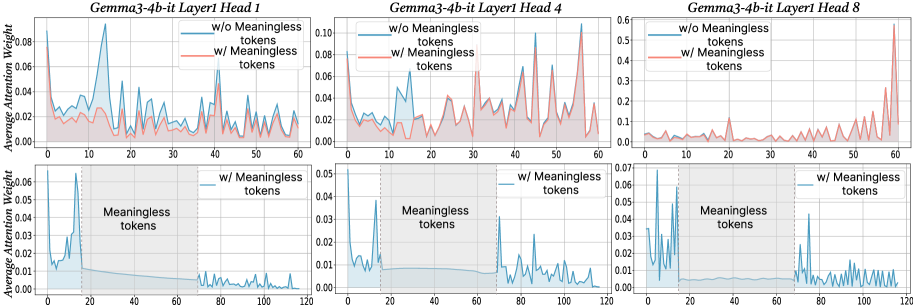

The image presents six line charts arranged in a 2x3 grid. Each chart displays the average attention weight of a language model (Gemma3-4b-it) for a specific layer (Layer1) and attention head (Head 1, Head 4, Head 8). The top row of charts shows attention weights for the first 60 tokens, comparing scenarios with and without "meaningless" tokens. The bottom row focuses on the attention weights "w/ Meaningless tokens" for the first 120 tokens, highlighting the region where these meaningless tokens occur.
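The descriptions do not say how the "average attention weight" on the y-axis was computed. One plausible reading, offered here only as an assumption, is that each token position's value is the mean attention it receives from all queries that can see it under causal masking. A minimal sketch of that reading, using a hypothetical helper name and a toy matrix rather than real model output:

```python
def average_attention_per_key(attn):
    """Mean attention each key position receives within one head.

    attn is a square list of lists; attn[q][k] is the weight that query q
    places on key k. Causal masking is assumed, so only queries with
    q >= k contribute to position k.
    """
    n = len(attn)
    averages = []
    for k in range(n):
        received = [attn[q][k] for q in range(k, n)]
        averages.append(sum(received) / len(received))
    return averages


# Toy 3-token causal attention matrix (each row sums to 1); values are invented.
toy_head = [
    [1.0, 0.0, 0.0],
    [0.6, 0.4, 0.0],
    [0.5, 0.3, 0.2],
]

print(average_attention_per_key(toy_head))
```

Under this reading, early positions can show large averages simply because many later queries attend to them, which is worth keeping in mind when interpreting peaks near the start of the x-axis.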

### Components/Axes

**General Chart Elements:**

* **Titles:** Each chart has a title in the format "Gemma3-4b-it Layer1 Head [Number]".

* **X-axis:** Represents the token number. The top row charts range from 0 to 60, while the bottom row charts range from 0 to 120.

* **Y-axis:** Represents the "Average Attention Weight". The scale varies between charts.

* **Legend:** Located in the top-right corner of each of the top row charts.

* Blue line: "w/o Meaningless tokens"

* Red line: "w/ Meaningless tokens"

* **Shaded Region:** In the bottom row charts, a gray shaded region is labeled "Meaningless tokens". This region spans approximately from token 20 to token 70.

* **Vertical Dotted Line:** A vertical dotted line is present at approximately token 20 in the bottom row charts, marking the start of the "Meaningless tokens" region.

**Specific Axis Scales:**

* **Top-Left Chart (Head 1):** Y-axis ranges from 0.00 to 0.08.

* **Top-Middle Chart (Head 4):** Y-axis ranges from 0.00 to 0.10.

* **Top-Right Chart (Head 8):** Y-axis ranges from 0.0 to 0.6.

* **Bottom-Left Chart (Head 1):** Y-axis ranges from 0.00 to 0.06.

* **Bottom-Middle Chart (Head 4):** Y-axis ranges from 0.00 to 0.05.

* **Bottom-Right Chart (Head 8):** Y-axis ranges from 0.00 to 0.07.

### Detailed Analysis

**Top Row Charts (First 60 Tokens):**

* **Head 1:**

* **Blue line (w/o Meaningless tokens):** Fluctuates between 0.02 and 0.06.

* **Red line (w/ Meaningless tokens):** Generally lower than the blue line, fluctuating between 0.01 and 0.04.

* **Head 4:**

* **Blue line (w/o Meaningless tokens):** Fluctuates between 0.02 and 0.08, with a peak around token 15.

* **Red line (w/ Meaningless tokens):** Similar to the blue line, but slightly lower, fluctuating between 0.01 and 0.06.

* **Head 8:**

* **Blue line (w/o Meaningless tokens):** Relatively low and stable, mostly below 0.05.

* **Red line (w/ Meaningless tokens):** Shows a significant spike towards the end (around token 60), reaching approximately 0.55.

**Bottom Row Charts (First 120 Tokens, w/ Meaningless tokens):**

* **Head 1:** The blue line (w/ Meaningless tokens) shows a sharp initial peak around token 5, reaching approximately 0.06, then rapidly decreases and stabilizes around 0.01 within the "Meaningless tokens" region.

* **Head 4:** Similar to Head 1, the blue line (w/ Meaningless tokens) has a sharp initial peak around token 5, reaching approximately 0.05, then decreases and stabilizes around 0.01 within the "Meaningless tokens" region.

* **Head 8:** The blue line (w/ Meaningless tokens) shows a sharp initial peak around token 5, reaching approximately 0.06, then decreases and stabilizes around 0.005 within the "Meaningless tokens" region. There is a slight increase after token 70.

### Key Observations

* The presence of "meaningless tokens" generally reduces the average attention weight for Head 1 and Head 4 in the first 60 tokens.

* Head 8 exhibits a significant spike in attention weight towards the end of the first 60 tokens *only* when "meaningless tokens" are included.

* In the bottom row charts, all heads show a sharp initial peak in attention weight, followed by a decrease and stabilization within the "Meaningless tokens" region.

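* In the top row, the Y-axis scale for Head 8 (up to 0.6) is much larger than for Head 1 (0.08) and Head 4 (0.10), indicating that Head 8 reaches much higher attention weights there. In the bottom row, the three scales are comparable (0.05 to 0.07).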

### Interpretation

The charts illustrate how the inclusion of "meaningless tokens" affects the attention weights of different heads in the Gemma3-4b-it language model. The initial spike in the bottom row charts occurs around token 5, before the "Meaningless tokens" region begins at about token 20, which suggests that attention concentrates on the earliest tokens of the sequence. Within the "Meaningless tokens" region, the attention weights stabilize at a lower level, consistent with the model giving these tokens comparatively little attention. The spike in Head 8's attention weight at the end of the first 60 tokens when "meaningless tokens" are present suggests that this head might be compensating for the presence of these tokens by focusing on other parts of the input sequence. The differences in attention patterns across heads highlight the distinct roles that each head plays in the model's attention mechanism.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Gemma3-4b-it Attention Weight Analysis

### Overview

This image presents a series of six line charts comparing the average attention weight for the Gemma3-4b-it model with and without "meaningless tokens" across different attention heads (Head 1, Head 4, and Head 8). Each chart displays the average attention weight on the y-axis against token position on the x-axis. The charts are arranged in a 2x3 grid.

### Components/Axes

* **X-axis:** Token Position (ranging from 0 to approximately 120, depending on the chart).

* **Y-axis:** Average Attention Weight (ranging from 0 to approximately 0.5, depending on the chart).

* **Legend:**

* Red Line: "w/o Meaningless tokens" (without meaningless tokens)

* Blue Line: "w/ Meaningless tokens" (with meaningless tokens)

* **Titles:** Each chart is titled with "Gemma3-4b-it Layer 1 Head [Number]"

* **Subtitles:** Each chart has a subtitle indicating the token type being displayed ("w/o Meaningless tokens" or "w/ Meaningless tokens").

### Detailed Analysis

**Chart 1: Gemma3-4b-it Layer 1 Head 1**

* The red line (w/o Meaningless tokens) shows a fluctuating pattern, generally staying below 0.04, with several peaks and valleys.

* The blue line (w/ Meaningless tokens) is relatively flat, hovering around 0.01-0.02.

* X-axis ranges from 0 to 60.

* Approximate data points (red line): (10, 0.03), (20, 0.01), (30, 0.035), (40, 0.025), (50, 0.03), (60, 0.015).

* Approximate data points (blue line): (10, 0.012), (20, 0.015), (30, 0.018), (40, 0.013), (50, 0.016), (60, 0.011).

**Chart 2: Gemma3-4b-it Layer 1 Head 4**

* The red line (w/o Meaningless tokens) exhibits a more pronounced fluctuating pattern, reaching peaks around 0.08.

* The blue line (w/ Meaningless tokens) remains relatively flat, around 0.01-0.02.

* X-axis ranges from 0 to 60.

* Approximate data points (red line): (10, 0.02), (20, 0.05), (30, 0.07), (40, 0.04), (50, 0.06), (60, 0.03).

* Approximate data points (blue line): (10, 0.011), (20, 0.014), (30, 0.017), (40, 0.012), (50, 0.015), (60, 0.010).

**Chart 3: Gemma3-4b-it Layer 1 Head 8**

* The red line (w/o Meaningless tokens) shows significant fluctuations, with peaks reaching approximately 0.45.

* The blue line (w/ Meaningless tokens) remains relatively flat, around 0.01-0.02.

* X-axis ranges from 0 to 60.

* Approximate data points (red line): (10, 0.1), (20, 0.3), (30, 0.4), (40, 0.25), (50, 0.35), (60, 0.15).

* Approximate data points (blue line): (10, 0.012), (20, 0.015), (30, 0.018), (40, 0.013), (50, 0.016), (60, 0.011).

**Chart 4: Gemma3-4b-it Layer 1 Head 1 (w/ Meaningless tokens)**

* The blue line (w/ Meaningless tokens) shows a fluctuating pattern, generally staying below 0.02.

* X-axis ranges from 0 to 120.

* Approximate data points (blue line): (20, 0.01), (40, 0.015), (60, 0.012), (80, 0.008), (100, 0.011), (120, 0.009).

**Chart 5: Gemma3-4b-it Layer 1 Head 4 (w/ Meaningless tokens)**

* The blue line (w/ Meaningless tokens) shows a fluctuating pattern, generally staying below 0.05.

* X-axis ranges from 0 to 120.

* Approximate data points (blue line): (20, 0.02), (40, 0.03), (60, 0.025), (80, 0.018), (100, 0.022), (120, 0.019).

**Chart 6: Gemma3-4b-it Layer 1 Head 8 (w/ Meaningless tokens)**

* The blue line (w/ Meaningless tokens) shows a fluctuating pattern, generally staying below 0.07.

* X-axis ranges from 0 to 120.

* Approximate data points (blue line): (20, 0.03), (40, 0.04), (60, 0.035), (80, 0.028), (100, 0.032), (120, 0.03).

### Key Observations

* The "w/o Meaningless tokens" (red line) consistently exhibits higher average attention weights than the "w/ Meaningless tokens" (blue line) in the first three charts (Head 1, Head 4, and Head 8).

* The attention weights for the "w/o Meaningless tokens" line fluctuate more significantly than those for the "w/ Meaningless tokens" line, especially in Head 4 and Head 8.

* The attention weights for the "w/ Meaningless tokens" line remain relatively stable across all heads and token positions.

* The last three charts (w/ Meaningless tokens) show a similar pattern of low and relatively stable attention weights.

### Interpretation

The data suggests that the presence of "meaningless tokens" significantly reduces the average attention weight in the Gemma3-4b-it model. The model appears to allocate less attention to these tokens, resulting in lower attention weights. The higher fluctuations in attention weights when "meaningless tokens" are absent indicate that the model is more actively processing and differentiating between relevant tokens. The consistently low attention weights for "meaningless tokens" across all three heads suggest that the effect holds for the heads shown, although the figure covers only Layer 1. This could indicate that the model is effectively filtering out irrelevant information when it is present in the input sequence. The difference in attention weight magnitude between the two conditions is most pronounced in Head 8, suggesting that this head is particularly sensitive to the presence or absence of meaningless tokens.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Multi-Panel Line Chart: Attention Weight Analysis for Gemma3-4b-it Layer 1 Heads

### Overview

The image displays a 2x3 grid of six line charts analyzing the "Average Attention Weight" across token positions for three different attention heads (Head 1, Head 4, Head 8) in Layer 1 of the "Gemma3-4b-it" model. The charts compare two conditions: with and without "Meaningless tokens." The top row shows a token position range of 0-60, while the bottom row extends the range to 0-120 and includes a shaded region explicitly marking the "Meaningless tokens."

### Components/Axes

* **Chart Type:** Multi-panel line chart with filled areas under the lines.

* **Titles:** Each of the six subplots has a title indicating the model layer and head:

* Top Row (Left to Right): "Gemma3-4b-it Layer1 Head 1", "Gemma3-4b-it Layer1 Head 4", "Gemma3-4b-it Layer1 Head 8"

* Bottom Row (Left to Right): Same titles as above, corresponding to the heads in the top row.

* **Y-Axis:** Labeled "Average Attention Weight" for all charts. The scale varies per subplot:

* Top Row: Head 1 (0.00-0.08), Head 4 (0.00-0.10), Head 8 (0.0-0.6)

* Bottom Row: Head 1 (0.00-0.06), Head 4 (0.00-0.05), Head 8 (0.00-0.07)

* **X-Axis:** Represents token position index. The scale differs between rows:

* Top Row: 0 to 60, with major ticks every 10 units.

* Bottom Row: 0 to 120, with major ticks every 20 units.

* **Legend:** Present in the top-right corner of every subplot, in both rows.

* **Blue Line/Area:** "w/o Meaningless tokens" (without)

* **Red Line/Area:** "w/ Meaningless tokens" (with)

* **Special Annotation (Bottom Row):** A gray shaded region spanning from x=0 to approximately x=20 in each bottom chart is labeled "Meaningless tokens" in black text.

### Detailed Analysis

**Top Row (Token Positions 0-60):**

* **Head 1:** The blue line ("w/o") shows a very sharp, prominent peak reaching ~0.08 around position 15. The red line ("w/") is generally lower and more stable, with smaller peaks, never exceeding ~0.04.

* **Head 4:** Both lines show significant volatility. The red line ("w/") exhibits several sharp peaks, with the highest reaching ~0.10 near position 55. The blue line ("w/o") has a major peak around position 45 (~0.08).

* **Head 8:** The y-axis scale is much larger (0.6 max). The red line ("w/") dominates, showing a dramatic, exponential-like increase starting around position 40 and peaking at ~0.55 near position 60. The blue line ("w/o") remains very low (<0.1) throughout.

**Bottom Row (Token Positions 0-120, with "Meaningless tokens" region):**

* **Head 1:** Within the shaded "Meaningless tokens" region (0-20), the blue line ("w/o") shows high attention, peaking at ~0.06. After position 20, the blue line drops to a very low baseline (<0.01). The red line ("w/") is not plotted in this chart.

* **Head 4:** Similar pattern. The blue line ("w/o") has high, volatile attention within the meaningless token region (0-20), peaking at ~0.05. After position 20, it drops to a low baseline with minor fluctuations. The red line ("w/") is not plotted.

* **Head 8:** The blue line ("w/o") shows high attention in the meaningless token region (0-20), with multiple peaks up to ~0.07. After position 20, it drops to a low baseline but shows a notable, isolated spike to ~0.04 around position 70. The red line ("w/") is not plotted.

### Key Observations

1. **Divergent Behavior by Head:** The three heads exhibit fundamentally different attention patterns. Head 8 (top row) shows an extreme, late-sequence focus when meaningless tokens are present, while Head 1 shows an early, sharp focus when they are absent.

2. **Impact of "Meaningless Tokens":** The bottom row charts suggest that when the sequence contains a block of "Meaningless tokens" at the beginning (positions 0-20), the model's attention (blue line, "w/o" condition) is heavily concentrated on that block. After the block ends, attention to subsequent tokens drops dramatically.

3. **Scale Discrepancy:** The attention weight magnitudes vary greatly between heads. In the top row comparison, Head 8's y-axis (up to 0.6) spans roughly six to eight times the range of Head 4 (0.10) and Head 1 (0.08).

4. **Volatility:** Head 4 displays the most volatile attention patterns in both conditions in the top row.
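
Observations such as "lower", "more volatile", and "late peak" can be made quantitative once the plotted curves are available as lists of per-position averages. The following is a minimal sketch under that assumption; the function name, the 60-token window default, and the toy curves are hypothetical and are not values read from the figure.

```python
from statistics import mean, pstdev


def compare_conditions(without, with_tokens, window=60):
    """Summarize two average-attention curves over the first `window` positions."""
    a = without[:window]
    b = with_tokens[:window]
    return {
        "mean_without": mean(a),
        "mean_with": mean(b),
        # Population standard deviation as a simple volatility measure.
        "std_without": pstdev(a),
        "std_with": pstdev(b),
        # Position of the largest weight in the "with" condition.
        "peak_position_with": max(range(len(b)), key=lambda i: b[i]),
    }


# Invented toy curves: one fluctuating, one flat with a spike at the end.
toy_without = [0.02, 0.06, 0.02, 0.06]
toy_with = [0.01, 0.01, 0.01, 0.55]

summary = compare_conditions(toy_without, toy_with)
print(summary)
```

A late spike like the one described for Head 8 would show up here as a `peak_position_with` near the end of the window together with a large `std_with`.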

### Interpretation

This visualization investigates how the presence of semantically "meaningless" tokens (e.g., padding, special control tokens, or filler text) affects the internal attention mechanisms of a large language model (Gemma3-4b-it).

The data suggests that meaningless tokens act as a strong **attention sink**. In the bottom charts, the model's attention is disproportionately drawn to this initial block, potentially at the expense of attending to later, meaningful content. This is evidenced by the sharp drop in attention weight after the meaningless token region ends.
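
One way to make the attention-sink reading concrete is to measure what share of the total averaged attention falls inside a given token span. The sketch below assumes the curve is available as a list of per-position averages; the helper name, the span boundaries, and the toy profile are illustrative assumptions, not measurements from the figure.

```python
def attention_mass_in_span(avg_weights, start, end):
    """Fraction of total averaged attention on positions start <= i < end."""
    total = sum(avg_weights)
    if total == 0:
        return 0.0
    return sum(avg_weights[start:end]) / total


# Invented profile: 20 high-attention positions followed by 100 low ones.
toy_profile = [0.05] * 20 + [0.005] * 100

share = attention_mass_in_span(toy_profile, 0, 20)
print(f"share of attention in span: {share:.3f}")
```

A share well above the span's fraction of the sequence length (here 20 of 120 positions) would be consistent with a sink; a share close to that fraction would not.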

The comparison in the top row (which likely shows a sequence without a dedicated meaningless block) reveals that different attention heads specialize in different patterns. Head 8, in particular, may be responsible for capturing long-range dependencies or sequence-end phenomena, as its attention skyrockets at the end of the 60-token window when meaningless tokens are part of the context ("w/").

**Practical Implication:** This analysis is crucial for model efficiency and performance. If meaningless tokens consume a large portion of the attention budget, it could degrade the model's ability to process the actual meaningful context. Techniques like "attention sink" removal or token pruning might be informed by such visualizations to improve inference speed and focus. The stark differences between heads also highlight the specialized, non-uniform nature of attention in transformer models.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Gemma3-4b-it Layer Attention Weights with/without Meaningless Tokens

### Overview

The image contains six line graphs comparing average attention weights across token positions (0–120) for three attention heads (Head 1, 4, 8) in Layer 1 of the Gemma3-4b-it model. Each graph contrasts two scenarios:

- **Blue line**: Attention weights *without* meaningless tokens

- **Red line**: Attention weights *with* meaningless tokens

The graphs highlight how the inclusion of meaningless tokens affects attention distribution, with shaded regions marking token positions labeled as "Meaningless tokens."

### Components/Axes

- **X-axis**: Token Position (0–120, integer intervals)

- **Y-axis**: Average Attention Weight (0–0.12, linear scale)

- **Legends**:

- Blue: "w/o Meaningless tokens"

- Red: "w/ Meaningless tokens"

- **Subplot Titles**:

- Top row: "Gemma3-4b-it Layer1 Head X" (X = 1, 4, 8)

- Bottom row: Same titles, with shaded regions labeled "Meaningless tokens" (20–60 token positions)

### Detailed Analysis

#### Layer1 Head1

- **Top subplot**:

- Red line (w/ meaningless tokens) shows higher peaks (up to ~0.08) at token positions 10, 30, and 50.

- Blue line (w/o) remains below 0.06, with smoother fluctuations.

- **Bottom subplot**:

- Shaded region (20–60 tokens) correlates with a sharp drop in blue line attention weights (~0.01–0.02).

- Red line retains higher weights (~0.03–0.05) in the shaded region.

#### Layer1 Head4

- **Top subplot**:

- Red line exhibits pronounced peaks (~0.08–0.10) at tokens 10, 30, and 50.

- Blue line peaks at ~0.06, with less variability.

- **Bottom subplot**:

- Shaded region shows blue line attention weights dropping to ~0.01–0.02.

- Red line remains elevated (~0.03–0.05) in the shaded area.

#### Layer1 Head8

- **Top subplot**:

- Red line has a single dominant peak (~0.05) at token 100.

- Blue line shows minor fluctuations (<0.03).

- **Bottom subplot**:

- Shaded region (20–60 tokens) has negligible impact on blue line (~0.01–0.02).

- Red line shows a slight increase (~0.03) in the shaded area.

### Key Observations

1. **Meaningless tokens amplify attention weights**: Red lines (w/ meaningless tokens) consistently show higher peaks than blue lines (w/o) across all heads.

2. **Positional sensitivity**: Peaks in red lines align with token positions 10, 30, 50, and 100, suggesting these positions are critical for processing.

3. **Shaded region impact**: In Layer 1, Heads 1 and 4, attention weights drop sharply in the shaded "meaningless tokens" region (20–60 tokens) for the blue line, while red lines remain stable.

4. **Head-specific behavior**: Head 8 exhibits a unique pattern with a late peak at token 100, unlike the earlier peaks in Heads 1 and 4.

### Interpretation

The data suggests that meaningless tokens act as **attention amplifiers**, increasing the model’s focus on specific token positions (e.g., 10, 30, 50). The shaded regions (20–60 tokens) likely represent noise or irrelevant data, as the blue line (w/o meaningless tokens) shows reduced attention here. This implies the model may use meaningless tokens to:

- **Filter noise**: By concentrating attention on critical positions, the model ignores irrelevant tokens in the shaded region.

- **Enhance robustness**: Higher attention weights in red lines (w/ meaningless tokens) could improve performance on noisy inputs.

- **Head specialization**: Head 8’s late peak at token 100 may indicate a role in processing long-range dependencies or contextual cues.

The findings align with hypotheses about attention mechanisms prioritizing salient tokens while suppressing irrelevant ones, though further analysis is needed to confirm causality.
