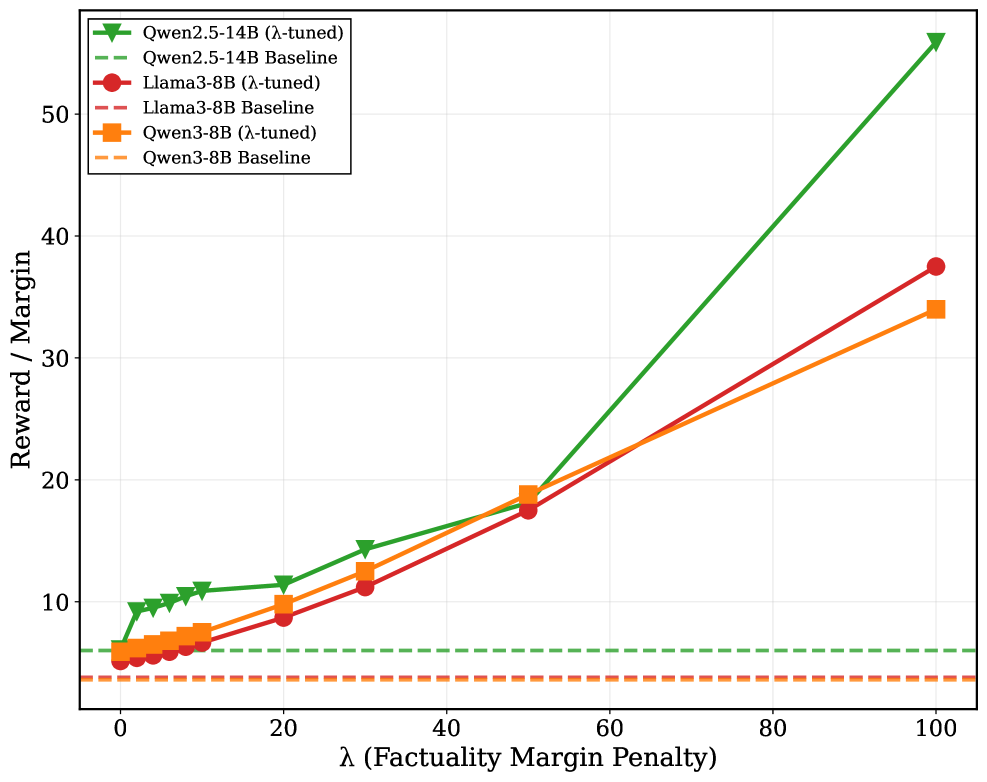

## Line Chart: Reward/Margin vs. Factuality Margin Penalty (λ)

### Overview

This line chart compares three large language models (LLMs) under two conditions: a "λ-tuned" version and a "Baseline" version. It plots the "Reward / Margin" metric against the "Factuality Margin Penalty" parameter, denoted by λ (lambda). The Reward/Margin of the λ-tuned models rises as λ increases, while the baseline models remain constant.

### Components/Axes

* **Chart Type:** Multi-series line chart with markers.

* **X-Axis:**

    * **Label:** `λ (Factuality Margin Penalty)`

    * **Scale:** Linear, ranging from 0 to 100.

    * **Major Ticks:** 0, 20, 40, 60, 80, 100.

* **Y-Axis:**

    * **Label:** `Reward / Margin`

    * **Scale:** Linear, ranging from 0 to approximately 55.

    * **Major Ticks:** 0, 10, 20, 30, 40, 50.

* **Legend:** Positioned in the top-left corner of the plot area. It contains six entries, differentiating models and their tuning state.

    1. **Green line with downward-pointing triangle markers:** `Qwen2.5-14B (λ-tuned)`

    2. **Green dashed line (no markers):** `Qwen2.5-14B Baseline`

    3. **Red line with circle markers:** `Llama3-8B (λ-tuned)`

    4. **Red dashed line (no markers):** `Llama3-8B Baseline`

    5. **Orange line with square markers:** `Qwen3-8B (λ-tuned)`

    6. **Orange dashed line (no markers):** `Qwen3-8B Baseline`

* **Grid:** A light gray grid is present, aiding in value estimation.

### Detailed Analysis

**Data Series Trends & Approximate Values:**

1. **Qwen2.5-14B (λ-tuned) - Green solid line with triangles:**

    * **Trend:** Rises throughout, with the steepest climb of any series between λ=50 and λ=100. It starts tied with Qwen3-8B (λ-tuned) for the highest value at λ=0, sits level with the 8B models around λ=50, and pulls far ahead at λ=100.

    * **Key Points (Approximate):**

        * λ=0: Reward/Margin ≈ 6

        * λ=10: Reward/Margin ≈ 11

        * λ=20: Reward/Margin ≈ 12

        * λ=30: Reward/Margin ≈ 15

        * λ=50: Reward/Margin ≈ 19

        * λ=100: Reward/Margin ≈ 55 (Highest point on the chart)

2. **Llama3-8B (λ-tuned) - Red solid line with circles:**

    * **Trend:** Shows a steady upward slope that steepens gradually. It starts as the lowest λ-tuned model and ends above Qwen3-8B (λ-tuned) at λ=100.

    * **Key Points (Approximate):**

        * λ=0: Reward/Margin ≈ 5

        * λ=10: Reward/Margin ≈ 6

        * λ=20: Reward/Margin ≈ 9

        * λ=30: Reward/Margin ≈ 11

        * λ=50: Reward/Margin ≈ 18

        * λ=100: Reward/Margin ≈ 38

3. **Qwen3-8B (λ-tuned) - Orange solid line with squares:**

    * **Trend:** Shows a steady, approximately linear upward slope, very similar in trajectory to Llama3-8B (λ-tuned). It stays slightly above Llama3-8B through λ=50 but finishes below it at λ=100 (≈34 vs. ≈38).

    * **Key Points (Approximate):**

        * λ=0: Reward/Margin ≈ 6

        * λ=10: Reward/Margin ≈ 7

        * λ=20: Reward/Margin ≈ 10

        * λ=30: Reward/Margin ≈ 13

        * λ=50: Reward/Margin ≈ 19

        * λ=100: Reward/Margin ≈ 34

4. **Baseline Models (All Dashed Lines):**

    * **Trend:** All three baseline series (Qwen2.5-14B, Llama3-8B, Qwen3-8B) are horizontal lines, indicating their Reward/Margin is constant and unaffected by the λ parameter.

    * **Key Points (Approximate):**

        * **Qwen2.5-14B Baseline (Green dashed):** Constant at ≈ 6.

        * **Llama3-8B Baseline (Red dashed):** Constant at ≈ 4.

        * **Qwen3-8B Baseline (Orange dashed):** Constant at ≈ 4. (This line appears to overlap or be very close to the Llama3-8B Baseline).
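
The trend descriptions above can be checked against the approximate key points with a small calculation. The following is a minimal sketch, assuming the values listed in this section are accurate readings of the chart; the names `LAMBDAS`, `TUNED`, and `segment_slopes` are illustrative and do not come from the figure.

```python
# Approximate values read off the chart; the lambda grid is unevenly spaced.
LAMBDAS = [0, 10, 20, 30, 50, 100]
TUNED = {
    "Qwen2.5-14B": [6, 11, 12, 15, 19, 55],
    "Llama3-8B": [5, 6, 9, 11, 18, 38],
    "Qwen3-8B": [6, 7, 10, 13, 19, 34],
}


def segment_slopes(xs, ys):
    """Return the slope of each straight segment between adjacent points."""
    points = list(zip(xs, ys))
    return [(y1 - y0) / (x1 - x0) for (x0, y0), (x1, y1) in zip(points, points[1:])]


for model, values in TUNED.items():
    slopes = segment_slopes(LAMBDAS, values)
    print(f"{model:12s}", [round(s, 2) for s in slopes])
```

Under these readings, Qwen3-8B gains about 0.3 Reward/Margin per unit of λ on every segment from λ=10 onward, whereas the final segment of Qwen2.5-14B (λ=50 to λ=100) has a slope of about 0.72, the largest of any segment in the chart.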

### Key Observations

1. **Effect of λ-Tuning:** The primary observation is that applying λ-tuning enables all three models to achieve a higher Reward/Margin that scales positively with the factuality margin penalty (λ). The baselines do not scale.

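2. **Model Performance Hierarchy:** At λ=0, the order from highest to lowest Reward/Margin is: Qwen2.5-14B (λ-tuned) ≈ Qwen3-8B (λ-tuned) > Llama3-8B (λ-tuned). Each baseline sits at or slightly below its λ-tuned counterpart (Qwen2.5-14B: ≈6 vs. ≈6; Llama3-8B: ≈4 vs. ≈5; Qwen3-8B: ≈4 vs. ≈6).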

3. **Divergence with Increasing λ:** As λ increases, the performance gap between the models widens significantly. The Qwen2.5-14B (λ-tuned) model diverges sharply from the other two, suggesting it benefits most from higher penalty values.

4. **Similar Trajectories:** The Llama3-8B (λ-tuned) and Qwen3-8B (λ-tuned) lines follow very similar, nearly parallel upward paths. Qwen3-8B holds a slight edge through λ=50, and Llama3-8B is ahead at λ=100 (≈38 vs. ≈34).

5. **Baseline Values:** The baseline Reward/Margin for Qwen2.5-14B is higher (≈6) than that of Llama3-8B and Qwen3-8B (both ≈4).
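
The observations above can be reproduced from the approximate readings. This is a rough sketch, assuming the listed values are accurate; `gain_over_baseline` and `ranking_at` are hypothetical helper names introduced only for illustration.

```python
# Approximate values read off the chart (not exact data).
LAMBDAS = [0, 10, 20, 30, 50, 100]
TUNED = {
    "Qwen2.5-14B": [6, 11, 12, 15, 19, 55],
    "Llama3-8B": [5, 6, 9, 11, 18, 38],
    "Qwen3-8B": [6, 7, 10, 13, 19, 34],
}
BASELINE = {"Qwen2.5-14B": 6, "Llama3-8B": 4, "Qwen3-8B": 4}


def gain_over_baseline(model):
    """Difference between the tuned value and the flat baseline at each lambda."""
    return [value - BASELINE[model] for value in TUNED[model]]


def ranking_at(lam):
    """Models ordered from highest to lowest tuned Reward/Margin at one lambda.

    Ties keep the dictionary order because sorted() is stable.
    """
    i = LAMBDAS.index(lam)
    return sorted(TUNED, key=lambda model: TUNED[model][i], reverse=True)


for model in TUNED:
    print(f"{model:12s} gain over baseline:", gain_over_baseline(model))

for lam in LAMBDAS:
    print(f"lambda={lam:3d} ranking:", ranking_at(lam))
```

With these readings, the gain of Qwen2.5-14B over its baseline goes from 0 at λ=0 to 49 at λ=100, and the ordering of the two 8B models flips between λ=50 and λ=100.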

### Interpretation

This chart visualizes the results of an experiment likely aimed at improving the factuality or reliability of LLMs through a technique involving a "factuality margin penalty" (λ). The "Reward / Margin" is presumably the quantity being optimized or evaluated.

* **What the data suggests:** The λ-tuning method appears to be effective: increasing the penalty for factual errors (higher λ) coincides with a higher Reward/Margin for every tuned model. This may mean the models are learning to generate more factually consistent or confident responses to avoid the penalty.

* **Relationship between elements:** The λ parameter is the independent variable controlling the strength of the regularization or penalty during tuning. The Reward/Margin is the dependent variable measuring the outcome. The stark contrast between the rising λ-tuned lines and the flat baselines isolates the effect of the tuning procedure itself.

* **Notable trends/anomalies:**

* The **sharp, non-linear rise** of the Qwen2.5-14B (λ-tuned) curve between λ=50 and λ=100 (≈19 to ≈55) is the most striking feature. Up to λ=50 it stays close to the 8B models, so this model's architecture or size may make it especially responsive to the tuning only at high λ values.

* The **near-identical starting points and slopes** for the two 8B parameter models (Llama3 and Qwen3) suggest similar learning dynamics or capacity when subjected to this tuning method, despite their different origins.

* The fact that the **Qwen2.5-14B Baseline** sits higher than the 8B model baselines is expected, as larger models generally have higher base capabilities. The tuning appears to amplify this advantage at high λ.

**In summary, the chart suggests that λ-tuning is a viable method for raising model performance on a factuality-related metric, with the benefit being model-dependent: the gains are largest for the larger Qwen2.5-14B model at λ=100.**