## Bar Charts: LLM Performance Comparison Across Datasets

### Overview

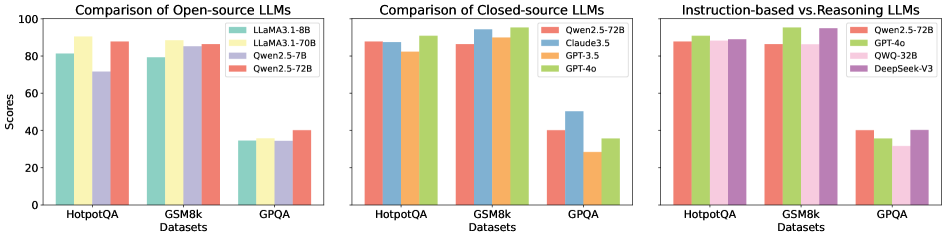

The image displays three side-by-side bar charts comparing the performance scores of various Large Language Models (LLMs) on three distinct evaluation datasets: HotpotQA, GSM8k, and GPQA. The charts are categorized by model type: open-source, closed-source, and a comparison of instruction-tuned versus reasoning-focused models.

### Components/Axes

* **Chart Titles (Top):**

    * Left: "Comparison of Open-source LLMs"

    * Center: "Comparison of Closed-source LLMs"

    * Right: "Instruction-based vs Reasoning LLMs"

* **Y-Axis (All Charts):** Labeled "Scores". The scale runs from 0 to 100 with major tick marks at 0, 20, 40, 60, 80, and 100.

* **X-Axis (All Charts):** Labeled "Datasets". The three categorical datasets are, from left to right: "HotpotQA", "GSM8k", and "GPQA".

* **Legends (Top-Right of each chart):**

    * **Left Chart (Open-source):**

        * Teal bar: `LLaMA3.1-8B`

        * Yellow bar: `LLaMA3.1-70B`

        * Light purple bar: `Qwen2.5-7B`

        * Salmon/Red bar: `Qwen2.5-72B`

    * **Center Chart (Closed-source):**

        * Salmon/Red bar: `Qwen2.5-72B`

        * Blue bar: `Claude3.5`

        * Yellow bar: `GPT-3.5`

        * Green bar: `GPT-4o`

    * **Right Chart (Instruction vs Reasoning):**

        * Salmon/Red bar: `Qwen2.5-72B`

        * Green bar: `GPT-4o`

        * Pink bar: `QWQ-32B`

        * Purple bar: `DeepSeek-V3`
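The legends show that some models recur across panels. As a minimal sketch, the legend entries can be transcribed into a dictionary and counted to see which models act as shared reference points. The names `PANELS` and `shared_models` are illustrative and not part of the figure.

```python
# Legend entries per panel, transcribed from the legend description above.
PANELS = {
    "Comparison of Open-source LLMs": [
        "LLaMA3.1-8B", "LLaMA3.1-70B", "Qwen2.5-7B", "Qwen2.5-72B",
    ],
    "Comparison of Closed-source LLMs": [
        "Qwen2.5-72B", "Claude3.5", "GPT-3.5", "GPT-4o",
    ],
    "Instruction-based vs Reasoning LLMs": [
        "Qwen2.5-72B", "GPT-4o", "QWQ-32B", "DeepSeek-V3",
    ],
}


def shared_models(panels):
    """Map each model that appears in more than one panel to its panel count."""
    counts = {}
    for models in panels.values():
        for model in models:
            counts[model] = counts.get(model, 0) + 1
    return {model: n for model, n in counts.items() if n > 1}


print(shared_models(PANELS))
```

Under this transcription, `Qwen2.5-72B` appears in all three panels and `GPT-4o` in two, which suggests they serve as common baselines across the comparisons.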

### Detailed Analysis

**1. Left Chart: Comparison of Open-source LLMs**

* **HotpotQA:** `LLaMA3.1-70B` (yellow) scores highest (~90), followed by `Qwen2.5-72B` (salmon, ~88), `LLaMA3.1-8B` (teal, ~82), and `Qwen2.5-7B` (light purple, ~72).

* **GSM8k:** `LLaMA3.1-70B` (yellow) again leads (~90), with `Qwen2.5-72B` (salmon, ~86) and `LLaMA3.1-8B` (teal, ~80) close behind. `Qwen2.5-7B` (light purple) scores ~78.

* **GPQA:** All models show a dramatic performance drop. `Qwen2.5-72B` (salmon) scores highest (~40), followed by `LLaMA3.1-70B` (yellow, ~38), `Qwen2.5-7B` (light purple, ~36), and `LLaMA3.1-8B` (teal, ~35).

* **Trend:** Performance is relatively high and stable for HotpotQA and GSM8k but collapses for GPQA across all open-source models. Larger models (70B/72B) consistently outperform their smaller counterparts (8B/7B).
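The scaling claim can be checked directly against the approximate bar heights. The sketch below assumes the estimated values quoted for the left chart and pairs each small model with its larger sibling. The names `OPEN_SOURCE`, `PAIRS`, and `scaling_gains` are hypothetical helpers for illustration.

```python
# Approximate bar heights read from the left chart (estimates, not exact data).
OPEN_SOURCE = {
    "LLaMA3.1-8B":  {"HotpotQA": 82, "GSM8k": 80, "GPQA": 35},
    "LLaMA3.1-70B": {"HotpotQA": 90, "GSM8k": 90, "GPQA": 38},
    "Qwen2.5-7B":   {"HotpotQA": 72, "GSM8k": 78, "GPQA": 36},
    "Qwen2.5-72B":  {"HotpotQA": 88, "GSM8k": 86, "GPQA": 40},
}

# (smaller model, larger model) within the same family.
PAIRS = [("LLaMA3.1-8B", "LLaMA3.1-70B"), ("Qwen2.5-7B", "Qwen2.5-72B")]


def scaling_gains(scores, pairs):
    """Return the large-minus-small score gap per dataset for each family pair."""
    return {
        (small, large): {
            dataset: scores[large][dataset] - scores[small][dataset]
            for dataset in scores[small]
        }
        for small, large in pairs
    }


gains = scaling_gains(OPEN_SOURCE, PAIRS)
for pair, by_dataset in gains.items():
    print(pair, by_dataset)
```

With these readings every gap is positive, but the advantage of the larger model shrinks to about 3 to 4 points on GPQA, compared with 8 to 16 points on the other two datasets.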

**2. Center Chart: Comparison of Closed-source LLMs**

* **HotpotQA:** `GPT-4o` (green) scores highest (~92), followed by `Claude3.5` (blue, ~88), `Qwen2.5-72B` (salmon, ~88), and `GPT-3.5` (yellow, ~86).

* **GSM8k:** `GPT-4o` (green) leads (~94), with `Claude3.5` (blue, ~92) and `Qwen2.5-72B` (salmon, ~90) close. `GPT-3.5` (yellow) scores ~88.

* **GPQA:** A significant drop occurs again. `Claude3.5` (blue) scores highest (~50), followed by `Qwen2.5-72B` (salmon, ~40), `GPT-4o` (green, ~36), and `GPT-3.5` (yellow, ~28).

* **Trend:** Similar to open-source models, performance is strong on HotpotQA/GSM8k but weak on GPQA. `GPT-4o` and `Claude3.5` are the top performers overall. `GPT-3.5` shows the most significant relative decline on GPQA.
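One way to quantify "relative decline" is the fraction of a model's average HotpotQA/GSM8k score that is lost on GPQA. That definition is an assumption made for this sketch, since the figure does not define the term. The values are the approximate readings from the center chart.

```python
# Approximate bar heights read from the center chart (estimates, not exact data).
CLOSED_SOURCE = {
    "Qwen2.5-72B": {"HotpotQA": 88, "GSM8k": 90, "GPQA": 40},
    "Claude3.5":   {"HotpotQA": 88, "GSM8k": 92, "GPQA": 50},
    "GPT-3.5":     {"HotpotQA": 86, "GSM8k": 88, "GPQA": 28},
    "GPT-4o":      {"HotpotQA": 92, "GSM8k": 94, "GPQA": 36},
}


def relative_decline(scores):
    """Fraction of the HotpotQA/GSM8k average that is lost on GPQA."""
    baseline = (scores["HotpotQA"] + scores["GSM8k"]) / 2
    return (baseline - scores["GPQA"]) / baseline


declines = {model: relative_decline(s) for model, s in CLOSED_SOURCE.items()}

# Print models from largest to smallest relative decline.
for model, decline in sorted(declines.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{model}: {decline:.0%}")
```

Under this definition `GPT-3.5` loses the largest share of its baseline score and `Claude3.5` the smallest.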

**3. Right Chart: Instruction-based vs Reasoning LLMs**

* **HotpotQA:** `GPT-4o` (green) and `DeepSeek-V3` (purple) are nearly tied for highest (~92), with `Qwen2.5-72B` (salmon, ~88) and `QWQ-32B` (pink, ~86) slightly behind.

* **GSM8k:** `GPT-4o` (green) leads (~94), followed by `DeepSeek-V3` (purple, ~92), `Qwen2.5-72B` (salmon, ~90), and `QWQ-32B` (pink, ~88).

* **GPQA:** `DeepSeek-V3` (purple) and `Qwen2.5-72B` (salmon) are effectively tied for highest (~40), followed by `GPT-4o` (green, ~36) and `QWQ-32B` (pink, ~32).

* **Trend:** The pattern of high performance on the first two datasets and low performance on GPQA persists. `GPT-4o` and `DeepSeek-V3` are the strongest models on HotpotQA and GSM8k, while `DeepSeek-V3` and `Qwen2.5-72B` lead on GPQA. The reasoning-focused model `QWQ-32B` (pink) scores lowest on all three datasets, with the widest gap on GPQA.
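Because several bars in the right chart are read at the same approximate height, a ranking that groups ties is more faithful than a strict ordering. The sketch below uses the estimated values for that chart. `RIGHT_CHART` and `ranking` are illustrative names.

```python
# Approximate bar heights read from the right chart (estimates, not exact data).
RIGHT_CHART = {
    "Qwen2.5-72B": {"HotpotQA": 88, "GSM8k": 90, "GPQA": 40},
    "GPT-4o":      {"HotpotQA": 92, "GSM8k": 94, "GPQA": 36},
    "QWQ-32B":     {"HotpotQA": 86, "GSM8k": 88, "GPQA": 32},
    "DeepSeek-V3": {"HotpotQA": 92, "GSM8k": 92, "GPQA": 40},
}


def ranking(scores, dataset):
    """Group models by score, highest first, so tied models share one rank."""
    by_score = {}
    for model, per_dataset in scores.items():
        by_score.setdefault(per_dataset[dataset], []).append(model)
    return [
        (score, sorted(models))
        for score, models in sorted(by_score.items(), reverse=True)
    ]


for dataset in ("HotpotQA", "GSM8k", "GPQA"):
    print(dataset, ranking(RIGHT_CHART, dataset))
```

Given the precision of values read off a bar chart, differences of a point or two should be treated as ties rather than as a meaningful ordering.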

### Key Observations

1. **Dataset Difficulty:** GPQA is the most challenging dataset for every model: scores fall by roughly 35 to 60 points relative to HotpotQA and GSM8k.

2. **Model Scaling:** Within model families (e.g., LLaMA, Qwen), larger parameter models (70B/72B) consistently outperform smaller ones (8B/7B).

3. **Top Performers:** `GPT-4o` and `Claude3.5` are the top-performing closed-source models. Among open-source models, `Qwen2.5-72B` and `LLaMA3.1-70B` are the strongest.

4. **Instruction vs. Reasoning:** The dedicated reasoning model `QWQ-32B` does not outperform general instruction-tuned models like `GPT-4o` or `DeepSeek-V3` on these benchmarks, and it scores the lowest of the four models in its chart on the difficult GPQA task (~32), though `GPT-3.5` in the closed-source chart is lower still (~28).
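The size of the GPQA drop in the first observation can be recomputed from the approximate readings. The following is a minimal sketch under the assumption that the estimated bar heights are accurate to within a point or two. `READINGS` and `gpqa_drops` are hypothetical names.

```python
# (model, HotpotQA, GSM8k, GPQA): approximate readings from the three charts.
# Qwen2.5-72B is listed twice because its GSM8k bar is read as ~86 in the
# left chart and ~90 in the other two.
READINGS = [
    ("LLaMA3.1-8B", 82, 80, 35),
    ("LLaMA3.1-70B", 90, 90, 38),
    ("Qwen2.5-7B", 72, 78, 36),
    ("Qwen2.5-72B", 88, 86, 40),
    ("Qwen2.5-72B", 88, 90, 40),
    ("Claude3.5", 88, 92, 50),
    ("GPT-3.5", 86, 88, 28),
    ("GPT-4o", 92, 94, 36),
    ("QWQ-32B", 86, 88, 32),
    ("DeepSeek-V3", 92, 92, 40),
]


def gpqa_drops(readings):
    """Yield (model, easier dataset, points lost on GPQA) for each reading."""
    for model, hotpot, gsm8k, gpqa in readings:
        yield model, "HotpotQA", hotpot - gpqa
        yield model, "GSM8k", gsm8k - gpqa


drops = list(gpqa_drops(READINGS))
smallest = min(drops, key=lambda row: row[2])
largest = max(drops, key=lambda row: row[2])
print("smallest drop:", smallest)
print("largest drop:", largest)
```

With these readings the smallest drop is 36 points (`Qwen2.5-7B`, from HotpotQA) and the largest is 60 points (`GPT-3.5`, from GSM8k).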

### Interpretation

The data suggests a clear hierarchy of task difficulty for current LLMs. HotpotQA (a multi-hop question-answering dataset) and GSM8k (grade-school math word problems) appear to be tasks where state-of-the-art models have achieved high proficiency. In contrast, GPQA (graduate-level, "Google-proof" science questions) represents a significant frontier where all models, regardless of size or training paradigm (open/closed, instruction/reasoning), struggle.

The consistent performance gap between model sizes underscores the continued importance of scale. The strong showing of `DeepSeek-V3` and `Qwen2.5-72B` indicates that the gap between leading open-source and closed-source models is narrow on HotpotQA and GSM8k (within a few points of `GPT-4o`), although `Claude3.5` leads the best open-source result on GPQA by roughly 10 points. The steep drop on GPQA for all models implies that strong results on established benchmarks may not fully capture robustness or generalization to highly complex problems. Taken together, the charts show that while LLMs handle certain benchmark tasks well, reliable performance on more demanding, real-world-like challenges remains an open problem.
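The open-versus-closed comparison can be made concrete by taking the best approximate score in each group per dataset. This sketch assumes `DeepSeek-V3` belongs in the open-source group, following the grouping used in the paragraph above; the figure itself does not label it. It also uses the ~90 GSM8k reading for `Qwen2.5-72B`. All names are illustrative.

```python
DATASETS = ["HotpotQA", "GSM8k", "GPQA"]

# Strongest open-source entries (grouping of DeepSeek-V3 is an assumption).
OPEN = {
    "LLaMA3.1-70B": {"HotpotQA": 90, "GSM8k": 90, "GPQA": 38},
    "Qwen2.5-72B":  {"HotpotQA": 88, "GSM8k": 90, "GPQA": 40},
    "DeepSeek-V3":  {"HotpotQA": 92, "GSM8k": 92, "GPQA": 40},
}

# Strongest closed-source entries from the center chart.
CLOSED = {
    "Claude3.5": {"HotpotQA": 88, "GSM8k": 92, "GPQA": 50},
    "GPT-4o":    {"HotpotQA": 92, "GSM8k": 94, "GPQA": 36},
}


def best(scores, dataset):
    """Highest approximate score any model in the group reaches on a dataset."""
    return max(per_dataset[dataset] for per_dataset in scores.values())


def closed_minus_open(open_scores, closed_scores):
    """Per-dataset lead of the best closed-source model over the best open one."""
    return {
        dataset: best(closed_scores, dataset) - best(open_scores, dataset)
        for dataset in DATASETS
    }


gap = closed_minus_open(OPEN, CLOSED)
print(gap)
```

Under these assumptions the closed-source lead is 0 points on HotpotQA, 2 on GSM8k, and 10 on GPQA, so the gap is narrow on the two easier datasets and wider on the hardest one.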