
## Document: Prompt for Scoring Error Reasons

### Overview

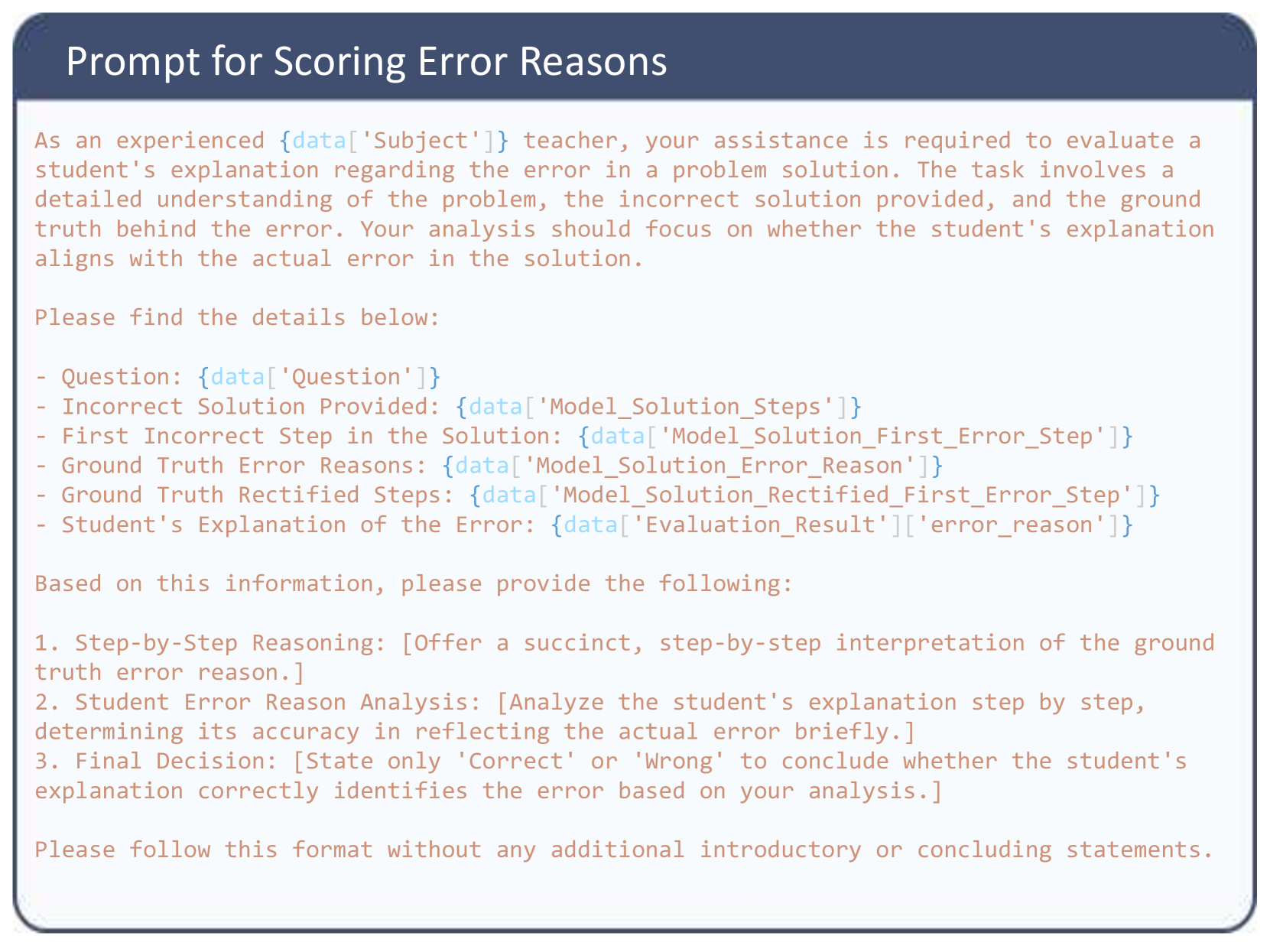

The image presents a text-based prompt intended for a scoring system, specifically designed to evaluate a student's explanation of an error in a problem solution. The prompt outlines the information required to assess the student's reasoning and provides a structured format for the evaluation.

### Components/Axes

The document is structured as a set of instructions with bullet points defining the input data and the expected output. There are no axes or charts present. The key components are:

* **Title:** "Prompt for Scoring Error Reasons"

* **Introduction:** A paragraph describing the task for an "experienced {data['Subject']} teacher".

* **Input Data:** A list of data points to be provided:

    * Question: `{data['Question']}`

    * Incorrect Solution Provided: `{data['Model_Solution_Steps']}`

    * First Incorrect Step in the Solution: `{data['Model_Solution_First_Error_Step']}`

    * Ground Truth Error Reasons: `{data['Model_Solution_Error_Reason']}`

    * Ground Truth Rectified Steps: `{data['Model_Solution_Rectified_First_Error_Step']}`

    * Student's Explanation of the Error: `{data['Evaluation_Result']['error_reason']}`

* **Output Requirements:** A numbered list of expected outputs:

    1. Step-by-Step Reasoning

    2. Student Error Reason Analysis

    3. Final Decision (Correct or Wrong)

* **Formatting Instructions:** A final instruction to adhere to a specific format without introductory or concluding statements.
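Because the template reads every value from a single `data` record, it can help to check a record before filling the prompt. The sketch below is illustrative only: the key names are copied from the placeholders listed above, while `REQUIRED_KEYS` and `missing_fields` are hypothetical names, and the code assumes the student's explanation is nested as `data['Evaluation_Result']['error_reason']`.

```python
from typing import List

# Keys referenced by the template's placeholders (taken from the prompt text).
REQUIRED_KEYS = [
    "Subject",
    "Question",
    "Model_Solution_Steps",
    "Model_Solution_First_Error_Step",
    "Model_Solution_Error_Reason",
    "Model_Solution_Rectified_First_Error_Step",
    "Evaluation_Result",
]


def missing_fields(data: dict) -> List[str]:
    """Return the placeholder keys that are absent from a record (hypothetical helper)."""
    missing = [key for key in REQUIRED_KEYS if key not in data]
    # Assumption: the student's explanation sits one level deeper,
    # under data['Evaluation_Result']['error_reason'].
    result = data.get("Evaluation_Result")
    if isinstance(result, dict) and "error_reason" not in result:
        missing.append("Evaluation_Result.error_reason")
    return missing
```

An empty return value means every placeholder in the template can be resolved for that record.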

### Detailed Analysis or Content Details

The document is entirely textual. Here's a transcription of the text:

"Prompt for Scoring Error Reasons

As an experienced {data['Subject']} teacher, your assistance is required to evaluate a student’s explanation regarding the error in a problem solution. The task involves a detailed understanding of the problem, the incorrect solution provided, and the ground truth behind the error. Your analysis should focus on whether the student’s explanation aligns with the actual error in the solution.

Please find the details below:

- Question: {data['Question']}

- Incorrect Solution Provided: {data['Model_Solution_Steps']}

- First Incorrect Step in the Solution: {data['Model_Solution_First_Error_Step']}

- Ground Truth Error Reasons: {data['Model_Solution_Error_Reason']}

- Ground Truth Rectified Steps: {data['Model_Solution_Rectified_First_Error_Step']}

- Student’s Explanation of the Error: {data['Evaluation_Result']['error_reason']}

Based on this information, please provide the following:

1. Step-by-Step Reasoning: [Offer a succinct, step-by-step interpretation of the ground truth error reason.]

2. Student Error Reason Analysis: [Analyze the student’s explanation step by step, determining its accuracy in reflecting the actual error briefly.]

3. Final Decision: [State only ‘Correct’ or ‘Wrong’ to conclude whether the student’s explanation correctly identifies the error based on your analysis.]

Please follow this format without any additional introductory or concluding statements."
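The placeholder syntax in the transcription resembles Python f-string substitution, so one plausible way to render the prompt is sketched below. This is an assumption about how the template is used, not a confirmed implementation: `build_scoring_prompt` is a hypothetical name, and the nested lookup `data['Evaluation_Result']['error_reason']` is assumed to be the intended path to the student's explanation.

```python
def build_scoring_prompt(data: dict) -> str:
    """Fill the scoring template from one record (hypothetical helper)."""
    # Assumption: the student's explanation is nested under 'Evaluation_Result'.
    student_reason = data['Evaluation_Result']['error_reason']
    return (
        f"As an experienced {data['Subject']} teacher, your assistance is required "
        "to evaluate a student’s explanation regarding the error in a problem "
        "solution. The task involves a detailed understanding of the problem, the "
        "incorrect solution provided, and the ground truth behind the error. Your "
        "analysis should focus on whether the student’s explanation aligns with "
        "the actual error in the solution.\n\n"
        "Please find the details below:\n\n"
        f"- Question: {data['Question']}\n"
        f"- Incorrect Solution Provided: {data['Model_Solution_Steps']}\n"
        "- First Incorrect Step in the Solution: "
        f"{data['Model_Solution_First_Error_Step']}\n"
        f"- Ground Truth Error Reasons: {data['Model_Solution_Error_Reason']}\n"
        "- Ground Truth Rectified Steps: "
        f"{data['Model_Solution_Rectified_First_Error_Step']}\n"
        f"- Student’s Explanation of the Error: {student_reason}\n\n"
        "Based on this information, please provide the following:\n\n"
        "1. Step-by-Step Reasoning: [Offer a succinct, step-by-step "
        "interpretation of the ground truth error reason.]\n"
        "2. Student Error Reason Analysis: [Analyze the student’s explanation "
        "step by step, determining its accuracy in reflecting the actual error "
        "briefly.]\n"
        "3. Final Decision: [State only ‘Correct’ or ‘Wrong’ to conclude whether "
        "the student’s explanation correctly identifies the error based on your "
        "analysis.]\n\n"
        "Please follow this format without any additional introductory or "
        "concluding statements."
    )
```

Calling the function with a record that lacks any of the referenced keys raises `KeyError`, which surfaces incomplete records early rather than producing a prompt with gaps.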

### Key Observations

The document is a template or instruction set. The `{data[...]}` expressions in curly braces are placeholders for dynamic input; their syntax resembles Python f-string substitution. The prompt is highly structured and aims for objective evaluation. Restricting the final decision to "Correct" or "Wrong" suggests a binary scoring scheme.
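Since the final decision is limited to two words, a response that follows the requested format can be mapped to a binary score mechanically. The following is a minimal sketch under that assumption; `parse_final_decision` is a hypothetical helper, and the regular expression is one possible way to tolerate brackets, quotes, or emphasis markers around the verdict.

```python
import re
from typing import Optional


def parse_final_decision(response: str) -> Optional[bool]:
    """Map an evaluator response to True (Correct), False (Wrong), or None.

    Hypothetical helper: it looks for the 'Final Decision' label followed by
    the verdict, skipping punctuation such as ':', '[', quotes, or '*'.
    """
    match = re.search(
        r"Final Decision\W*(Correct|Wrong)\b", response, flags=re.IGNORECASE
    )
    if match is None:
        return None  # The response did not follow the requested format.
    return match.group(1).lower() == "correct"
```

Returning `None` for unparseable responses keeps format violations separate from genuine "Wrong" verdicts, so they can be counted or retried on their own.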

### Interpretation

This document outlines a process for automated or semi-automated scoring of student error explanations, designed to keep evaluation consistent and objective. The placeholders indicate that the prompt is part of a larger system that supplies the question, the incorrect solution, and the reference annotations for each item. The structure focuses on whether the student understands *why* the provided solution is incorrect, rather than on whether the student can simply correct it. The prompt could be given to a machine learning model or to a human evaluator following a strict protocol. The "Ground Truth" fields (the error reasons and the rectified steps) supply the reference against which the student's explanation is compared.